There’s a small grocery store near my home that refuses to sell certain high-demand items in bulk to new customers. If you walk in for the first time and try to buy ten bags of sugar, the shopkeeper will quietly limit you to two. Regular customers, however, face no such restriction. It’s not written anywhere, and there’s no formal system behind it—but over time, it has become a kind of embedded rule. The logic is simple: prevent hoarding, reduce arbitrage, and make sure supply reaches genuine buyers. It’s a practical response to behavior, not a theoretical design.

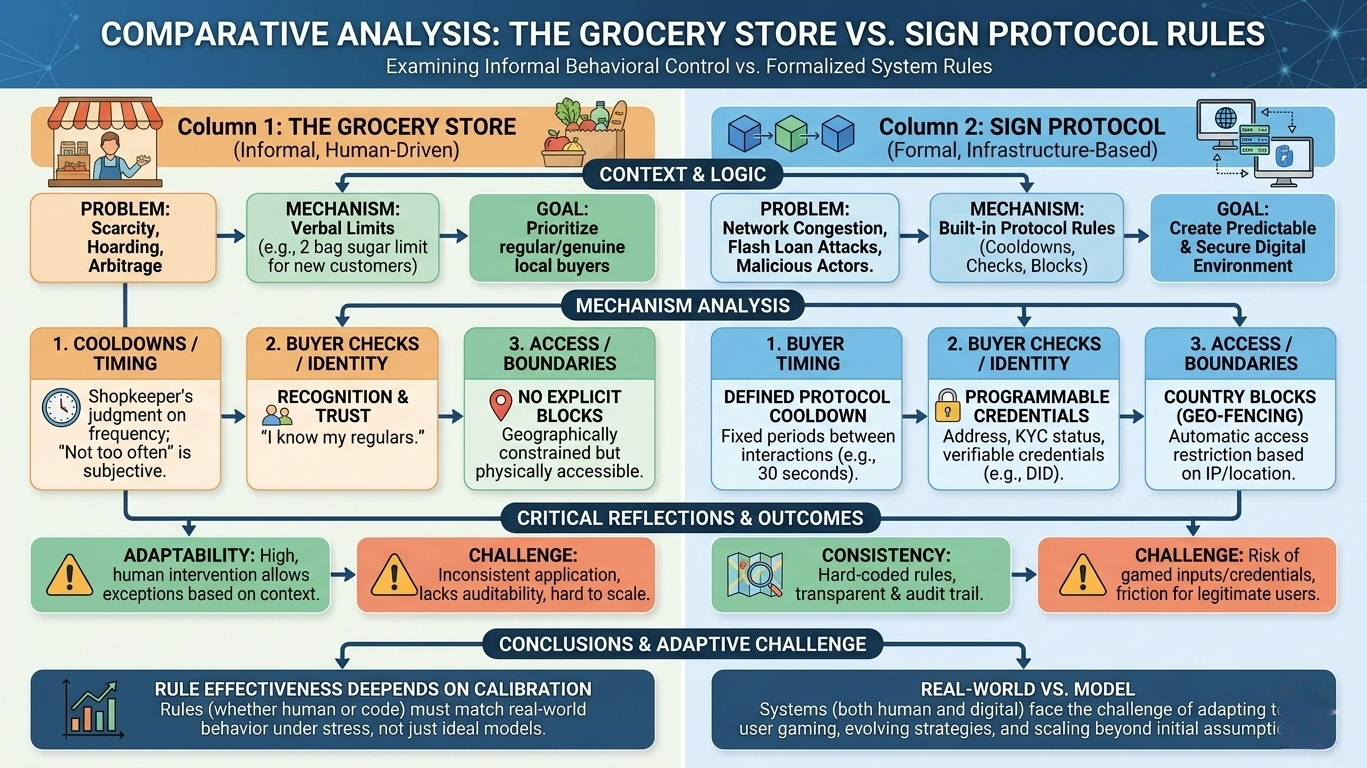

When I think about Sign Protocol’s built-in rules—cooldowns, buyer checks, and country blocks—I see something similar, but formalized into infrastructure. These mechanisms are essentially attempts to encode behavioral assumptions into the system itself. Instead of relying on human judgment like the shopkeeper does, the protocol tries to predefine how participants are allowed to interact. In theory, this reduces abuse, aligns incentives, and creates a more predictable environment. But in practice, it raises deeper questions about how systems behave once real users, with real incentives, begin to engage.

Cooldowns, for instance, are meant to slow things down. They introduce friction where speed might otherwise be exploited—rapid flipping, coordinated manipulation, or automated extraction strategies. Conceptually, this makes sense. Many real-world systems rely on timing constraints to maintain stability. Financial markets use settlement periods; supply chains operate on lead times; even institutions impose waiting periods to prevent impulsive decisions. But these mechanisms only work when they are calibrated correctly. Too short, and they fail to deter bad actors. Too long, and they begin to frustrate legitimate users, pushing activity elsewhere. The challenge is not in adding a cooldown—it’s in setting it at a level that reflects actual behavior under pressure, not just expected behavior in a controlled model.
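To make the calibration problem concrete, here is a minimal Python sketch of a per-address cooldown. It is an illustration under my own assumptions, not Sign Protocol's actual implementation: the `CooldownGate` class, the `try_act` method, and the 60-second window are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class CooldownGate:
    """Hypothetical per-address cooldown; not Sign Protocol's actual API."""

    cooldown_seconds: float
    last_action: dict = field(default_factory=dict)

    def try_act(self, address: str, now: float) -> bool:
        """Allow the action only if the cooldown has elapsed for this address."""
        previous = self.last_action.get(address)
        if previous is not None and now - previous < self.cooldown_seconds:
            # Still cooling down; a rejected attempt does not reset the timer.
            return False
        self.last_action[address] = now
        return True


gate = CooldownGate(cooldown_seconds=60)
first = gate.try_act("alice", now=0)      # allowed: no prior action
second = gate.try_act("alice", now=30)    # blocked: inside the 60 s window
third = gate.try_act("alice", now=61)     # allowed: window has elapsed
sybil = gate.try_act("alice-2", now=30)   # allowed: a fresh address has no history
```

The last call is the interesting one. A cooldown keyed to an address slows a single account, but a participant who can cheaply create new addresses does not wait at all, which is why the length of the window is only half of the calibration question.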

Buyer checks introduce another layer. They attempt to answer a fundamental question: who should be allowed to participate? In traditional systems, this is handled through identity verification, credit scoring, or institutional trust. Sign Protocol seems to be trying to replicate this logic in a more programmable way, tying access to certain conditions or credentials. Again, the idea is structurally sound. Systems that allocate value need some way to distinguish between participants, especially when resources are limited or risks are unevenly distributed.

But this is where things start to hinge on the quality of inputs. A buyer check is only as reliable as the data it depends on. If the underlying credentials can be gamed, borrowed, or manufactured, then the check becomes more of a gatekeeping illusion than a real safeguard. In traditional finance, entire industries exist just to validate and audit these inputs. Translating that into a decentralized or semi-automated environment doesn’t eliminate the problem—it shifts it. The burden moves from institutions to the integrity of issuers and the resilience of the verification layer.
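A small Python sketch shows how the check inherits the quality of its inputs. Everything here is hypothetical and chosen for illustration: the `Credential` record, the `passes_buyer_check` function, and the issuer names are my assumptions, not anything taken from Sign Protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    """Hypothetical credential record: who holds it, who issued it, what it claims."""

    holder: str
    issuer: str
    claim: str


def passes_buyer_check(buyer, credentials, trusted_issuers, required_claim):
    """Pass if the buyer holds the required claim from any trusted issuer."""
    return any(
        c.holder == buyer and c.issuer in trusted_issuers and c.claim == required_claim
        for c in credentials
    )


# Assumed setup: two issuers are on the trusted list, one of them careless.
trusted = {"strict-issuer", "lax-issuer"}
creds = [
    Credential("alice", "strict-issuer", "verified-buyer"),
    Credential("mallory", "lax-issuer", "verified-buyer"),
    Credential("bob", "unknown-issuer", "verified-buyer"),
]

alice_ok = passes_buyer_check("alice", creds, trusted, "verified-buyer")
mallory_ok = passes_buyer_check("mallory", creds, trusted, "verified-buyer")
bob_ok = passes_buyer_check("bob", creds, trusted, "verified-buyer")
```

The check rejects `bob` because his issuer is not on the list, but it cannot tell `alice` from `mallory`. Once an issuer is trusted, the code treats everything that issuer signs as equally valid, so the real safeguard sits in how issuers are vetted, not in the check itself.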

Country blocks are perhaps the most explicit acknowledgment that systems don’t operate in a vacuum. They reflect regulatory boundaries, risk management decisions, and sometimes political realities. In one sense, they make the system more compatible with the world as it exists. In another, they highlight a tension: a protocol that aims to be global and neutral is still shaped by jurisdictional constraints. This isn’t necessarily a flaw, but it does challenge the idea of universality. If access can be restricted based on geography, then the system inherits the same fragmentation that traditional systems already struggle with.
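In code, a country block is usually little more than a set lookup. The sketch below is a hypothetical Python illustration, not Sign Protocol's mechanism: `BLOCKED_COUNTRIES`, `is_country_allowed`, and the placeholder codes `XX` and `ZZ` are assumptions, and the function deliberately fails closed when the country is unknown.

```python
from typing import Optional

# Placeholder two-letter codes, not real jurisdictions.
BLOCKED_COUNTRIES = frozenset({"XX", "ZZ"})


def is_country_allowed(
    country_code: Optional[str],
    blocked: frozenset = BLOCKED_COUNTRIES,
) -> bool:
    """Return True only for a known country that is not on the block list."""
    if not country_code:
        # Fail closed: an unknown location is treated as blocked.
        return False
    return country_code.strip().upper() not in blocked


listed = is_country_allowed("xx")      # blocked: on the list (case-insensitive)
unlisted = is_country_allowed("de")    # allowed: not on the list
unknown = is_country_allowed(None)     # blocked: no country available
```

The lookup is the easy part. How the country code is established in the first place (a credential, a self-declaration, a network signal) is outside this sketch, and that is where the fragmentation and the workarounds tend to appear.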

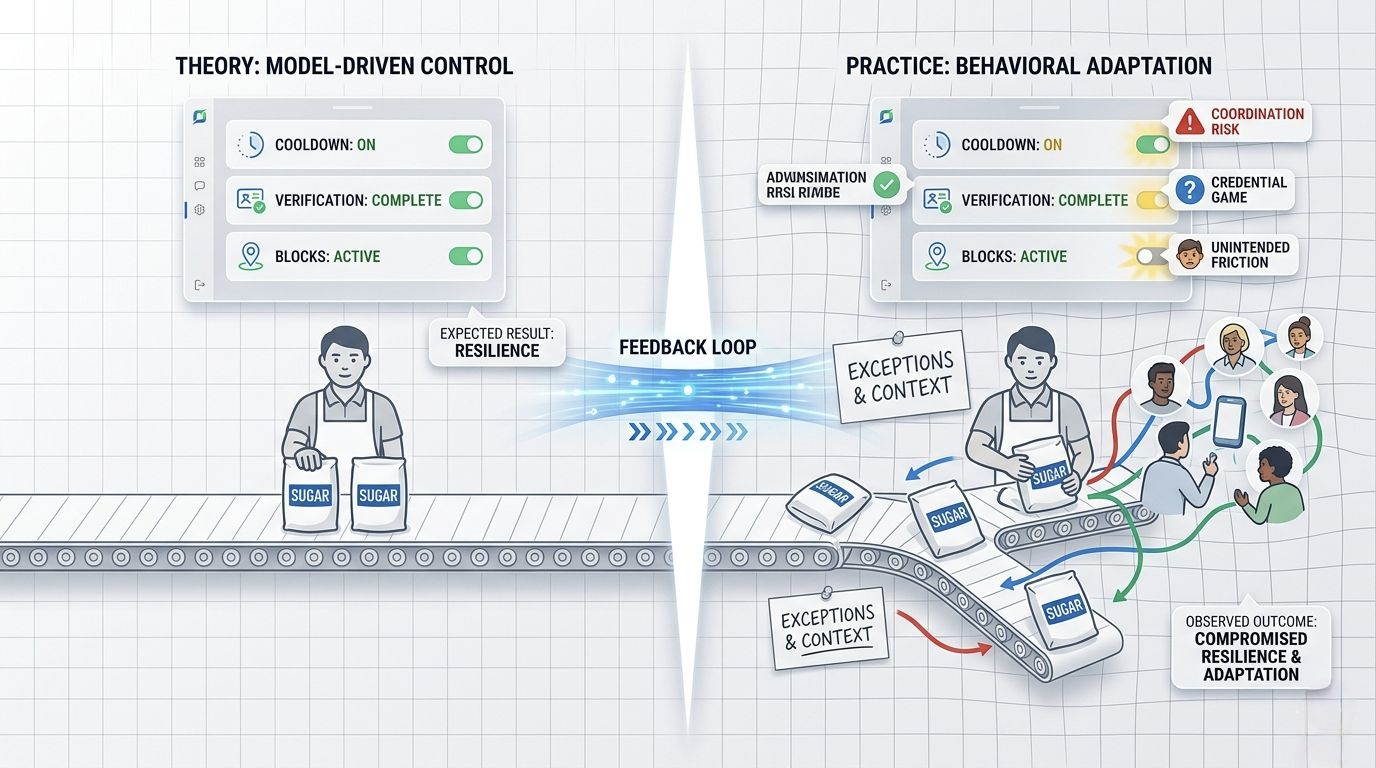

What ties all of these mechanisms together is an attempt to preemptively manage behavior. Instead of reacting to misuse after it happens, the protocol tries to constrain what is possible from the outset. This is a common pattern in engineered systems. In logistics, routes are optimized to reduce congestion before it occurs. In manufacturing, processes are standardized to minimize defects. But these systems also rely heavily on feedback loops. They are constantly adjusted based on observed outcomes, not just initial assumptions.

That’s where I find myself slightly skeptical. Built-in rules can create a sense of control, but real-world environments are adaptive. Participants learn, strategies evolve, and incentives shift. A cooldown that works today might be bypassed tomorrow through coordination. A buyer check that filters effectively at launch might become irrelevant as new forms of credentials emerge. A country block might reduce regulatory risk but also limit network effects in ways that are hard to reverse.

There’s also an operational dimension that’s easy to overlook. Every additional rule introduces complexity—not just in code, but in user experience and system maintenance. Users need to understand why they are being restricted, and systems need to handle edge cases, disputes, and unintended consequences. In traditional settings, these issues are often resolved through human intervention. In a protocol-driven environment, that flexibility is harder to achieve without undermining the very rules that were put in place.

I keep coming back to the grocery store analogy. The shopkeeper’s system works not because it is perfect, but because it is adaptable. He can make exceptions, recognize patterns, and adjust based on context. A protocol, by contrast, has to define its behavior in advance. That makes it more consistent, but also less forgiving when reality doesn’t match the model.

My overall view is cautiously neutral. I think the inclusion of cooldowns, buyer checks, and country blocks shows an awareness of real-world risks, which is a positive sign. These are not abstract ideas; they are practical tools drawn from how systems already manage scarcity, trust, and compliance. But their effectiveness will depend less on their presence and more on how they perform under stress. The real test isn’t whether these rules exist, but whether they continue to hold up when participants actively try to work around them, and when the system scales beyond its initial assumptions. If Sign Protocol can adapt these mechanisms over time based on evidence and outcomes, it may find a workable balance. If not, the rules themselves could become just another layer of friction without delivering the resilience they are meant to provide.

That’s when we’ll know if this is real infrastructure—or just a well-structured assumption.

@SignOfficial #SignDigitalSovereignInfra $SIGN