one thing i keep coming back to with S.I.G.N. is how naturally it handles something most systems struggle with from the start. it doesn’t act like privacy and sovereign control are enemies forced into the same space. instead, it treats them like two realities that have to coexist if anything serious is going to be built. most platforms rush into picking a side. they either go all in on privacy and lose institutional trust, or they lean into control so heavily that verification starts to feel like quiet surveillance. and that tension doesn’t stay hidden for long. it shows up exactly where it matters most, in identity systems, payment flows, and public infrastructure where real data carries real consequences.

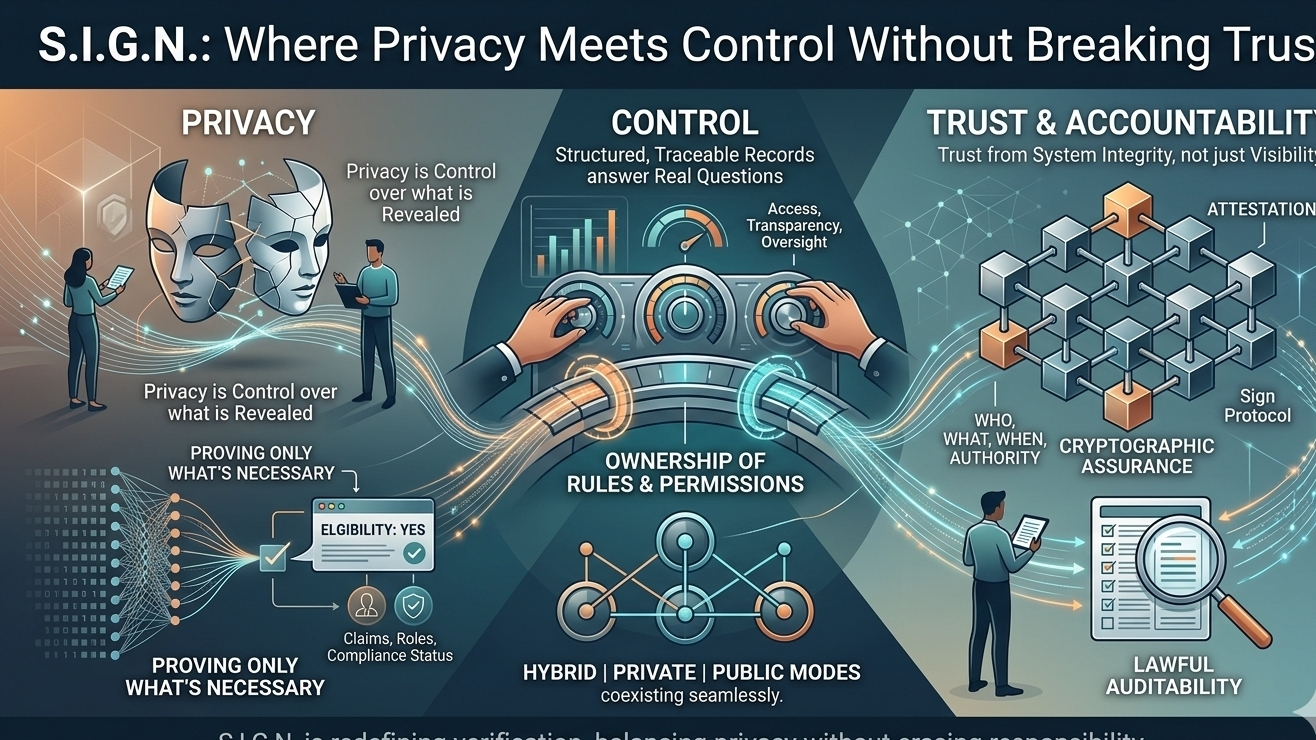

what makes this approach feel different is that it doesn’t try to simplify the problem. it accepts it. the core idea feels less about hiding everything or exposing everything, and more about being precise with what actually needs to be proven. instead of pulling full identity records every time something needs to be checked, the system leans into proving only what is necessary. a single claim, a specific eligibility condition, a verified role, or a compliance status can stand on its own without dragging the rest of a person’s data into the open. that shift sounds small, but it changes the entire experience of privacy. it turns privacy from secrecy into control over what gets revealed and when.
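
to make "proving only what is necessary" concrete, here is a minimal sketch of one well-known way to do it: salted hash commitments, similar in spirit to selective-disclosure credentials. this is not Sign Protocol's actual API. every name here (`issue`, `disclose`, `verify`, `ISSUER_KEY`) is hypothetical, and the HMAC is only a stand-in for a real issuer signature.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical shared key; a real issuer would sign with an asymmetric key pair.
ISSUER_KEY = b"demo-issuer-key"


def commit(name, value, salt):
    """Salted hash of one claim, so the digest alone reveals nothing about it."""
    payload = json.dumps([salt, name, value], separators=(",", ":"))
    return hashlib.sha256(payload.encode()).hexdigest()


def sign(digests):
    """Stand-in for an issuer signature over the list of claim digests."""
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer side: commit to each claim separately, then sign the digest list."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(commit(n, v, salts[n]) for n, v in claims.items())
    return {"digests": digests, "signature": sign(digests)}, salts


def disclose(claims, salts, name):
    """Holder side: reveal exactly one claim together with its salt."""
    return {"name": name, "value": claims[name], "salt": salts[name]}


def verify(credential, disclosure):
    """Verifier side: check the signature, then check the revealed claim was committed to."""
    if not hmac.compare_digest(sign(credential["digests"]), credential["signature"]):
        return False
    digest = commit(disclosure["name"], disclosure["value"], disclosure["salt"])
    return digest in credential["digests"]


claims = {"name": "A. Example", "birth_year": 1990, "eligible_for_subsidy": True}
credential, salts = issue(claims)
proof = disclose(claims, salts, "eligible_for_subsidy")
print(verify(credential, proof))  # the verifier never sees name or birth_year
```

the verifier only ever receives the signed digest list and the one disclosed claim. the name and birth year stay with the holder, which is the "control over what gets revealed" idea in its smallest form.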

at the same time, it doesn’t ignore accountability, which is where a lot of “privacy-first” ideas fall apart. S.I.G.N. keeps bringing the conversation back to evidence. not vague proof, but structured, traceable records that answer real questions later: who approved something, under what authority, when it happened, and what rules were in place at the time. that layer matters because systems don’t just need to function in the moment. they need to stand up to audits, disputes, and oversight. Sign Protocol feels central here, acting as the backbone where these attestations live, whether they are public, private, or somewhere in between. the result is that privacy doesn’t erase responsibility, and responsibility doesn’t require exposing everything.
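
a rough sketch of what such an evidence record could look like, assuming a simple hash-chained log. the field names (`approved_by`, `authority`, `issued_at`, `ruleset_version`) are my own guesses at the who / under what authority / when / which rules questions, not Sign Protocol's real schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace

GENESIS = "0" * 64  # placeholder hash for the first record in the log


@dataclass(frozen=True)
class Attestation:
    subject: str          # who or what the claim is about
    claim: str            # what is being attested
    approved_by: str      # who approved it
    authority: str        # under what authority
    issued_at: str        # when it happened (ISO 8601 timestamp)
    ruleset_version: str  # which rules were in force at the time
    prev_hash: str        # hash of the previous record, linking the log together


def record_hash(att):
    """Deterministic hash over every field of a record."""
    return hashlib.sha256(json.dumps(asdict(att), sort_keys=True).encode()).hexdigest()


def append(log, **fields):
    """Add a record that points at the hash of the record before it."""
    prev = record_hash(log[-1]) if log else GENESIS
    att = Attestation(prev_hash=prev, **fields)
    log.append(att)
    return att


def chain_is_intact(log):
    """True if every record still points at the current hash of its predecessor."""
    prev = GENESIS
    for att in log:
        if att.prev_hash != prev:
            return False
        prev = record_hash(att)
    return True


log = []
append(log, subject="did:example:123", claim="subsidy_eligible", approved_by="officer-17",
       authority="benefits-agency", issued_at="2024-05-01T10:00:00Z", ruleset_version="v3")
append(log, subject="did:example:123", claim="payment_released", approved_by="officer-22",
       authority="treasury", issued_at="2024-05-03T09:30:00Z", ruleset_version="v3")

# Rewriting who approved the first record breaks the link from the second one.
tampered = [replace(log[0], approved_by="someone-else"), log[1]]
print(chain_is_intact(log), chain_is_intact(tampered))
```

a chain like this only catches edits to records that have a successor, so a real system would also need to anchor or sign the latest hash somewhere outside the log.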

what stands out even more is how control is defined. it’s not about having constant visibility into every piece of data. it’s about owning the rules, the access, the permissions, and the ability to step in when needed. private, hybrid, and public modes aren’t treated like competing ideologies, but as tools for different situations. some systems need confidentiality at their core, others need transparency, and many need both depending on the context. the idea that one infrastructure can handle all of that without forcing everything into a single model is what makes this feel closer to real-world systems than to theoretical ones.
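
one way to picture "owning the rules" is as a small visibility policy. this is a toy sketch under my own assumptions: the `Mode` enum, the `auditor` role, and the `PUBLIC_FIELDS` set are all hypothetical, and a real deployment would have far richer permissions.

```python
from enum import Enum


class Mode(Enum):
    PUBLIC = "public"
    HYBRID = "hybrid"
    PRIVATE = "private"


# Hypothetical: the fields a hybrid record exposes to everyone.
PUBLIC_FIELDS = {"claim", "issued_at"}


def visible_fields(record, mode, viewer_role):
    """Return the part of a record that a given viewer may see under a given mode."""
    if mode is Mode.PUBLIC or viewer_role == "auditor":
        return dict(record)  # full record: public data, or authorised oversight
    if mode is Mode.HYBRID:
        return {k: v for k, v in record.items() if k in PUBLIC_FIELDS}
    return {}  # private mode hides everything from ordinary viewers


record = {"claim": "license_valid", "issued_at": "2024-05-01", "holder": "did:example:123"}
print(visible_fields(record, Mode.HYBRID, "citizen"))
print(visible_fields(record, Mode.PRIVATE, "auditor"))
```

the point is that the data never changes between modes. only the rule that decides who sees which part of it does, and that rule is what the operator controls.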

the more i think about it, the more it feels like S.I.G.N. is redefining what verification should actually mean. it’s not about exposing everything just to prove one point. it’s about structuring claims so they can stand independently, backed by cryptographic assurance and tied to real authority. that way, trust doesn’t come from visibility alone, but from the integrity of the system itself. and that’s a much stronger foundation than most approaches offer.

where things still feel uncertain is not the design itself, but how it plays out in reality. “lawful auditability” always sounds clean on paper, but in practice it depends on governance, incentives, and how responsibly power is used. no system can fully solve that. it can only create the conditions for balance. and that’s what this feels like at its core. not a perfect answer, but a serious attempt to build something where privacy doesn’t disappear and control doesn’t become overwhelming.

and maybe that’s why it keeps pulling my attention back. because it doesn’t try to escape the hard parts. it builds around them.