SIGN didn’t feel important to me at the beginning. It came across as one of many similar projects: a clear message, a simple structure, and a promise that sounded complete if you didn’t look too closely. I’ve seen enough of those to know that first impressions don’t mean much here. Things often look solid early on, then slowly lose shape once they’re actually used.

So I didn’t pay much attention at first. I let it sit in the background.

But over time, certain ideas keep coming back, not because they’re loud, but because they show up when something else isn’t working. Credential verification is one of those. It doesn’t matter much when everything is smooth. It becomes important when someone starts asking questions—when something needs to be proven, not just assumed.

That’s where SIGN started to feel a bit more real to me.

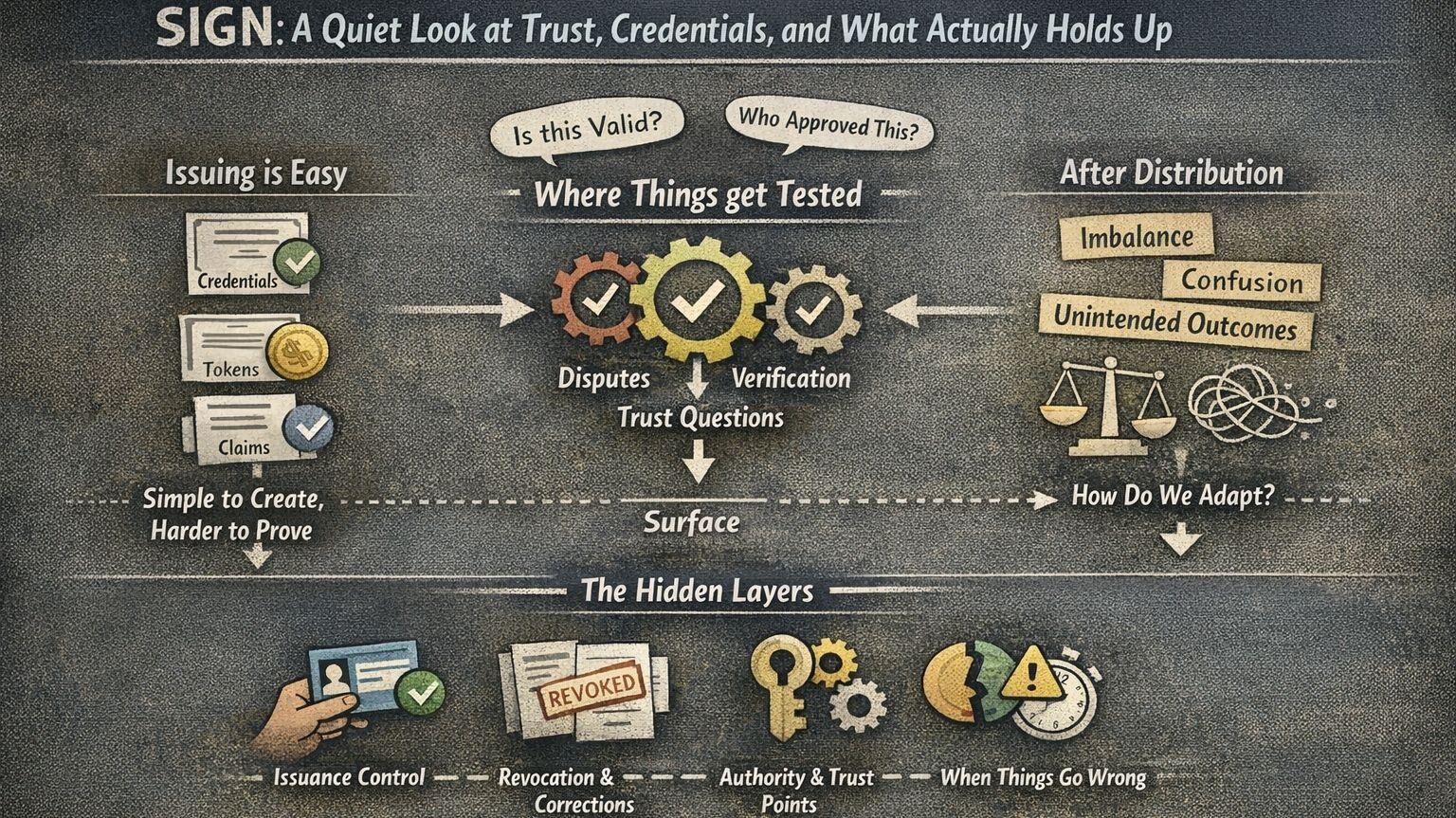

Not because of what it says it does, but because of the space it’s trying to sit in. There’s a quiet difference between creating something and being able to stand behind it later. Most systems focus on the first part—issuing tokens, assigning credentials, making things visible. The second part, the part where someone asks “is this actually valid?”, is usually weaker.

SIGN seems to lean into that second part.

And that’s not an easy place to build from.

Because verification only matters when things aren’t simple. When someone disagrees. When something feels off. That’s when systems get tested. Not in perfect conditions, but in moments where trust is questioned. And most systems aren’t really designed for that—they’re designed to work as long as no one looks too closely.

So naturally, I find myself thinking less about what SIGN promises, and more about how it would behave in those uncomfortable situations.

If a credential is challenged, what actually happens? Who decides what’s true? Can something be corrected if it turns out to be wrong? And how much of that process still depends on trust outside the system?

These questions don’t have clean answers. They never really have.

There’s also the part that people don’t usually talk about: the small details. How credentials are issued, who controls that flow, what happens when something goes wrong. Because mistakes always happen. Not in obvious ways, but in quiet ones that only become visible later.

And then there’s distribution.

Giving out tokens has never been the hard part. What matters is what happens after. Distribution shapes behavior. It can create imbalance, confusion, or unintended outcomes. A system might work perfectly at launch and still struggle over time because of how those tokens move and settle.

SIGN seems to understand that distribution without verification doesn’t really solve anything. It just delays the problem.

Still, recognizing that doesn’t automatically mean it’s solved.

I’ve been around this space long enough to see a pattern. The more a system tries to define trust, the more complicated it becomes. Because trust isn’t just technical. You can build structures, rules, layers—but at some point, people are still involved. Decisions still come from somewhere.

And that’s where things usually get messy.

So I don’t think about SIGN in terms of whether it’s good or bad. I think about how it might hold up when things stop being predictable. That’s usually where the truth shows itself.

For now, it’s something I’m watching quietly. Not with excitement, but not with enough doubt to dismiss it either. Just with a bit of distance.

It hasn’t fully revealed itself yet. And I’m okay with not rushing that. Some things only make sense with time, and I’d rather see where it goes than decide too early what it is.