There was a moment when I tried to reconnect a wallet across multiple Web3 applications after switching devices, and what surprised me wasn’t the connection itself, but how differently each platform treated the same identity step. One app verified instantly, another kept me waiting, and a third simply failed without giving any meaningful reason. That inconsistency stayed in my mind longer than the actual task I was trying to complete.

What I noticed over time is that identity-related processes in crypto don’t fail in an obvious way. They fail quietly, through delays, retries, and unclear states. From a user perspective, it just feels like “lag,” but from a system perspective, it usually points to something more structural: coordination gaps between verification, data propagation, and execution layers that don’t always align under load.

If I try to simplify it, it reminds me of a large library where every section has its own catalog system, but none of them share a unified index. You might find the same book in one section instantly, while in another section you are told it exists but cannot be located right away. Nothing is broken individually, but the overall experience becomes unpredictable because there is no shared coordination layer connecting everything together.

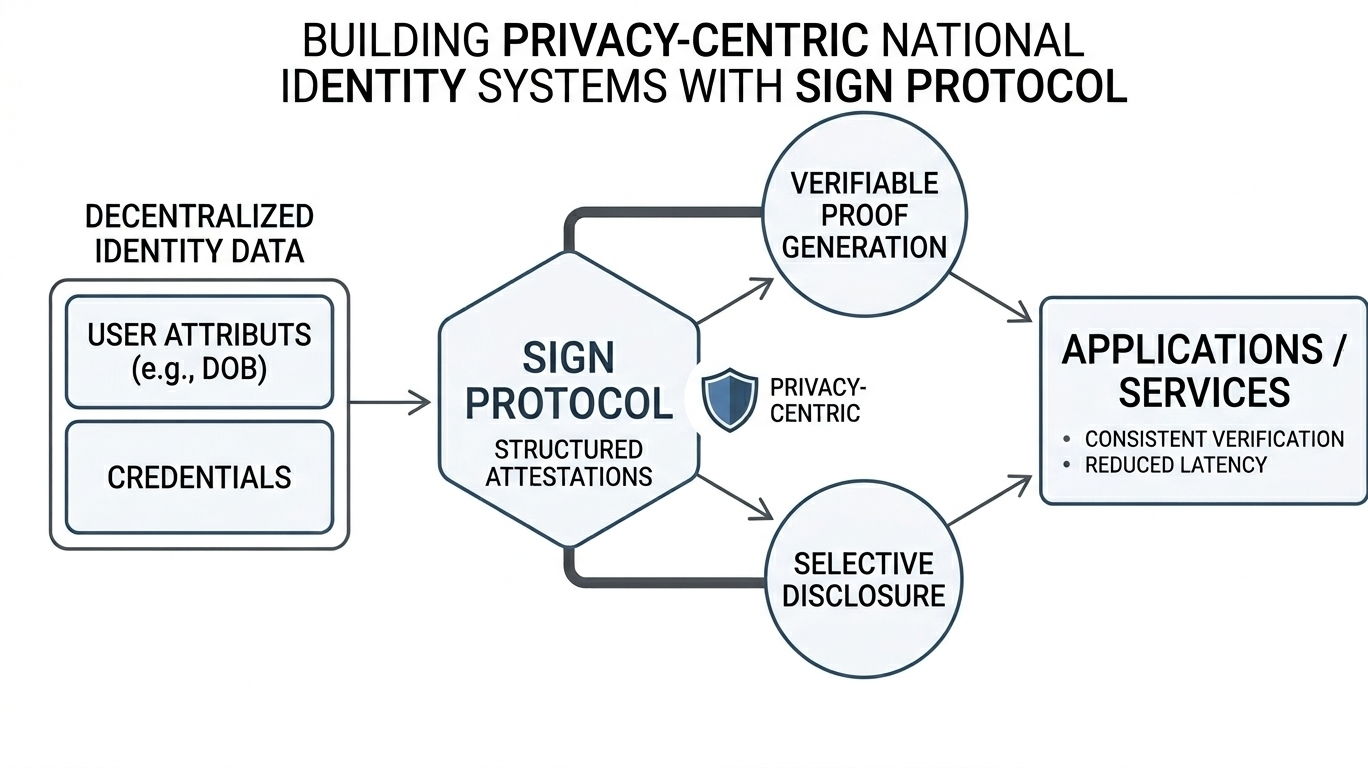

When I look at how Sign approaches this, what caught my attention is the attempt to make attestations behave less like scattered events and more like structured, portable units of verification. Instead of identity proofs being recreated or reinterpreted at every step, the idea seems to lean toward a more consistent flow where verification can move through systems without losing its structure or meaning.

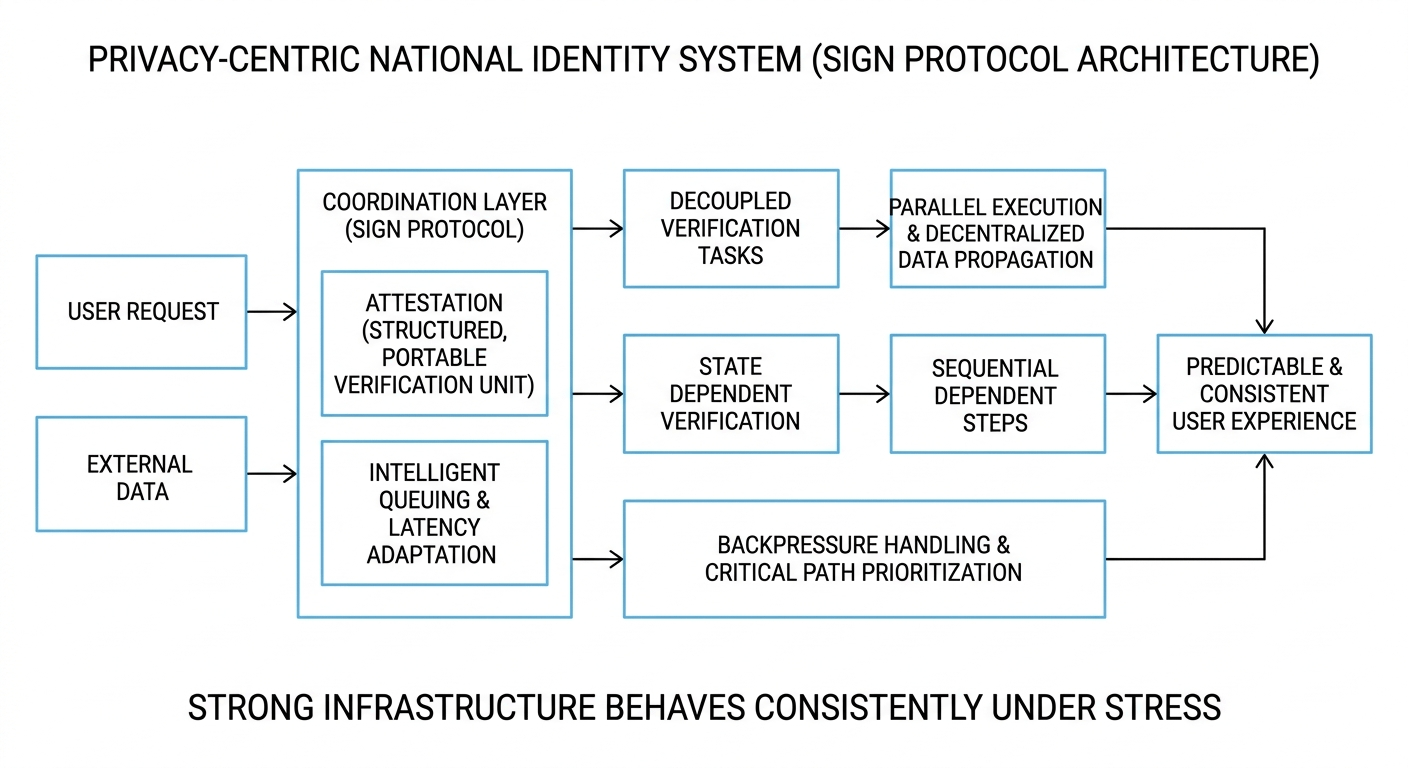

From a system perspective, what interests me most is how such a design handles real-world pressure. I usually think in terms of workflow architecture: how tasks are scheduled when demand increases, how verification is separated from other heavy operations, and whether the system allows independent components to scale without blocking each other. In many traditional setups, everything is processed in a single sequence, and that becomes the first point where delays start to accumulate.

What matters in practice is how congestion is absorbed. In real networks, traffic is never stable. It comes in bursts, slows down, then spikes again unexpectedly. A resilient system doesn’t try to eliminate this reality; it adapts to it. That might involve intelligent queuing, distributing workloads across multiple nodes, or simply ensuring that non-essential tasks don’t block critical verification paths.
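To make the last point concrete for myself, here is a minimal sketch of a priority queue in which critical verification work is always served before non-essential tasks. Everything in it is an assumption for illustration: the class name, the two priority levels, and the task names are hypothetical and are not taken from Sign or any real protocol.

```python
import heapq
import itertools

# Hypothetical priority levels: a lower number is served first.
CRITICAL, BACKGROUND = 0, 1


class PriorityTaskQueue:
    """Serves critical tasks first and keeps FIFO order within each priority."""

    def __init__(self):
        self._heap = []
        # A monotonically increasing counter breaks ties between tasks that
        # share a priority, so arrival order is preserved.
        self._counter = itertools.count()

    def submit(self, priority, name):
        heapq.heappush(self._heap, (priority, next(self._counter), name))

    def drain(self):
        """Pop every queued task and return the names in service order."""
        order = []
        while self._heap:
            _, _, name = heapq.heappop(self._heap)
            order.append(name)
        return order


queue = PriorityTaskQueue()
queue.submit(BACKGROUND, "refresh-profile-cache")
queue.submit(CRITICAL, "verify-attestation-A")
queue.submit(BACKGROUND, "emit-analytics")
queue.submit(CRITICAL, "verify-attestation-B")

served = queue.drain()
print(served)
```

The two verification tasks come out first even though background work arrived earlier. A real system would also need to guard against starving the background tasks, which this sketch does not attempt.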

Another layer that I find important is the balance between ordering and parallel execution. Identity systems cannot fully parallelize everything because some steps depend on previous validation. But forcing strict ordering across all operations creates unnecessary bottlenecks. The real challenge is designing a structure where only the truly dependent steps remain sequential, while everything else flows in parallel without breaking consistency.
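One way I picture this is as a dependency graph that gets executed in waves: every step whose prerequisites are complete runs in parallel, and only the truly dependent steps wait. The sketch below uses Python's standard `graphlib.TopologicalSorter` with a thread pool. The step names and the `run_step` placeholder are hypothetical and only illustrate the shape of the idea, not how any particular identity system is built.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical verification steps. Each key maps to the set of steps that
# must finish before it can start.
DEPENDENCIES = {
    "check_signature": set(),
    "fetch_schema": set(),
    "check_revocation": set(),
    "validate_payload": {"check_signature", "fetch_schema"},
    "issue_result": {"validate_payload", "check_revocation"},
}


def run_step(name):
    # Placeholder for real work such as a network call or a signature check.
    return name


def run_in_waves(dependencies):
    """Run all currently ready steps in parallel, one wave at a time."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    waves = []
    with ThreadPoolExecutor(max_workers=4) as pool:
        while sorter.is_active():
            # Steps whose prerequisites are all done; sorted for stable output.
            ready = sorted(sorter.get_ready())
            # Consume the iterator so the whole wave finishes before moving on.
            list(pool.map(run_step, ready))
            sorter.done(*ready)
            waves.append(ready)
    return waves


waves = run_in_waves(DEPENDENCIES)
for index, wave in enumerate(waves, start=1):
    print(index, wave)
```

Running in waves is the simplest version of this idea. A more aggressive scheduler would start each step the moment its own prerequisites finish, instead of waiting for the whole wave.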

Backpressure is where the system’s behavior becomes most visible. When demand exceeds capacity, does it fail loudly, or does it slow down in a controlled and predictable way? Does it preserve essential operations while deferring less critical ones? These are subtle design choices, but they define whether a system feels stable under stress or fragile when conditions change.
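As a rough illustration of what controlled slowdown could look like, the sketch below models a bounded intake buffer that reserves part of its capacity for critical operations. When the buffer fills, non-critical work is deferred and critical work gets an explicit rejection the caller can act on, rather than a silent stall. The class, the capacity numbers, and the task names are all assumptions made up for this example.

```python
from collections import deque


class BoundedIntake:
    """A bounded buffer that keeps some slots reserved for critical work."""

    def __init__(self, capacity, reserved_for_critical):
        self.capacity = capacity
        self.reserved = reserved_for_critical
        self.accepted = deque()
        self.deferred = []

    def offer(self, name, critical):
        # Non-critical tasks may only fill the unreserved part of the buffer.
        limit = self.capacity if critical else self.capacity - self.reserved
        if len(self.accepted) < limit:
            self.accepted.append(name)
            return "accepted"
        if critical:
            # Fail loudly: the caller gets a clear signal and can retry
            # with backoff instead of waiting on an unclear state.
            return "rejected"
        # Less critical work is parked for later rather than dropped.
        self.deferred.append(name)
        return "deferred"


intake = BoundedIntake(capacity=3, reserved_for_critical=1)
outcomes = [
    intake.offer("sync-1", critical=False),
    intake.offer("sync-2", critical=False),
    intake.offer("sync-3", critical=False),
    intake.offer("verify-1", critical=True),
    intake.offer("verify-2", critical=True),
]
print(outcomes)
```

The third background task is deferred while a verification request that arrives afterward still gets in, because one slot was held back for it. Only once the buffer is completely full does a critical request get an explicit rejection.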

When I step back from all of this, the idea that stays with me is simple. Strong infrastructure is not defined by how fast it performs in ideal conditions, but by how quietly and consistently it behaves when conditions are not ideal. The best systems don’t call attention to themselves; they just continue working even when everything around them becomes unpredictable.