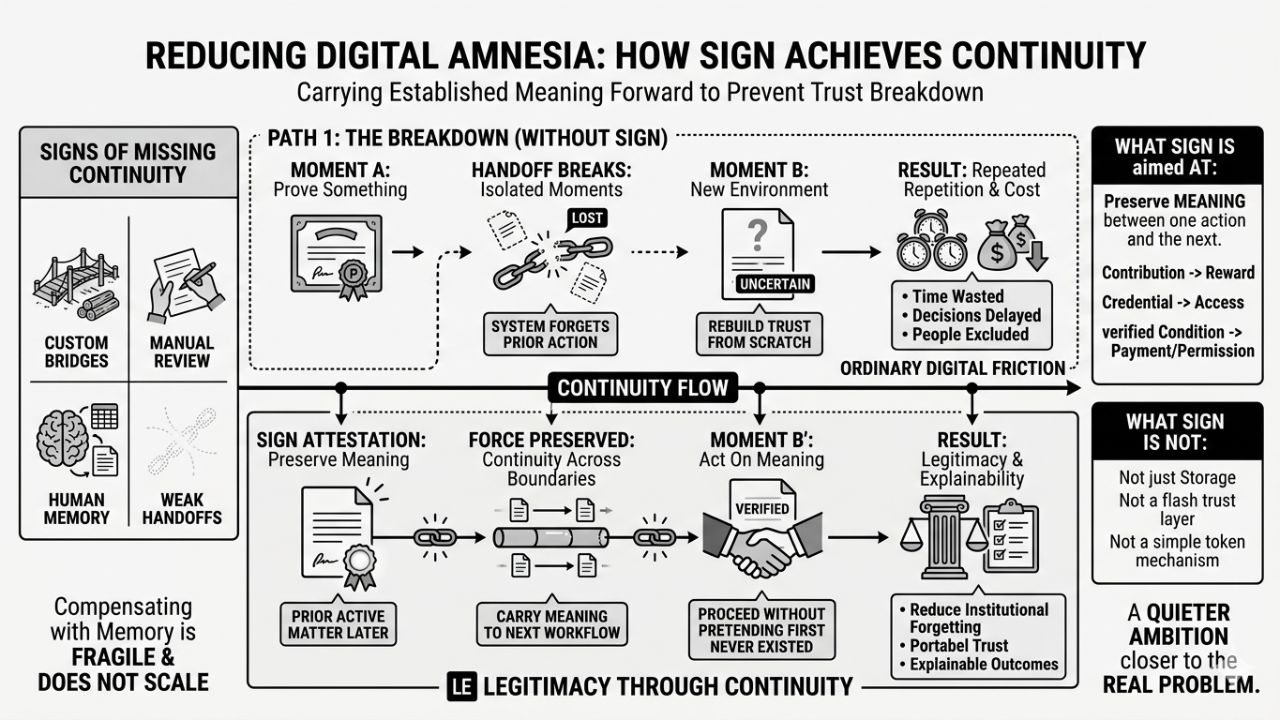

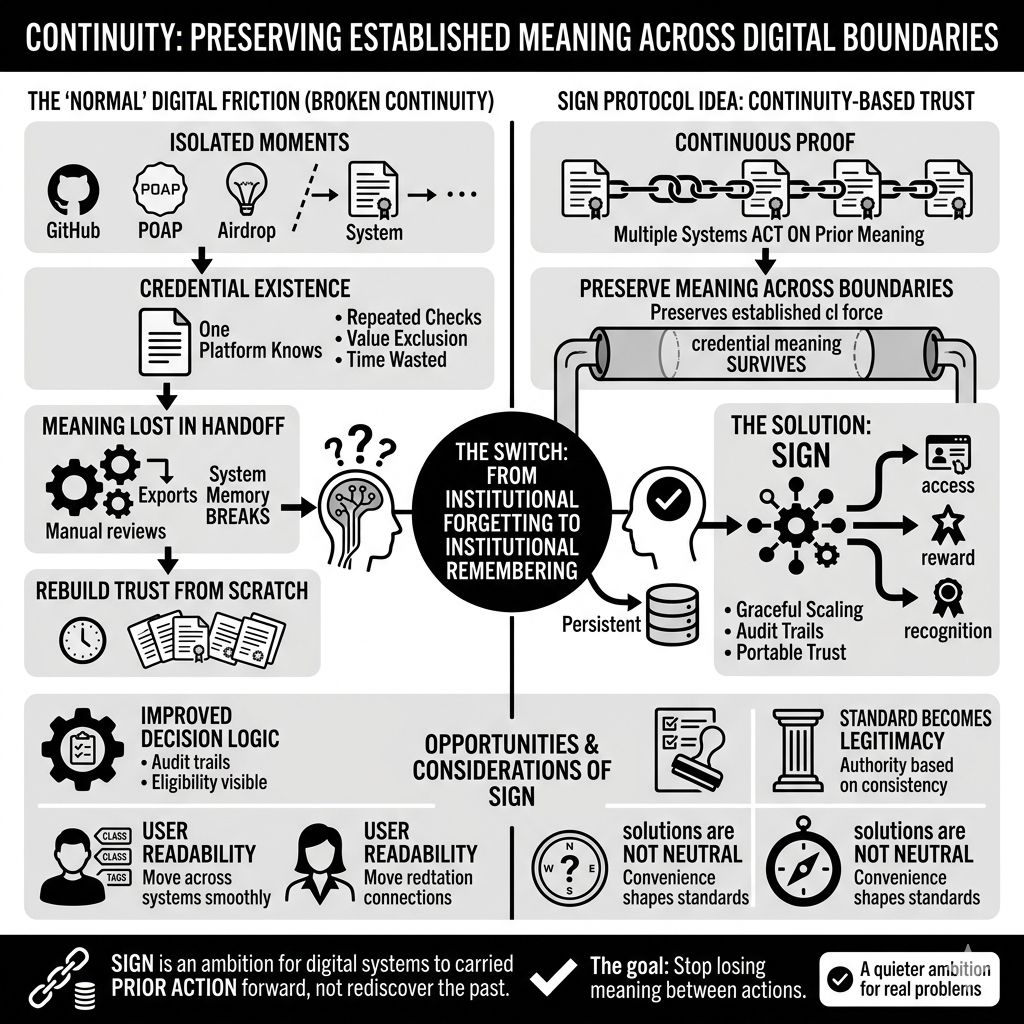

To be honest, the internet is full of isolated moments that do not connect well enough. A person proves something here. Earns something there. Participates somewhere else. Gets recognized in one system, then disappears into ambiguity in the next. The record may exist, sometimes very clearly, but the continuity breaks. And once continuity breaks, trust gets rebuilt from scratch.

That happens so often online that people start treating it as normal.

I used to think of that as just ordinary digital friction. Annoying, yes, but expected. Different systems have different rules. Different communities trust different signals. Different institutions ask for different proof. Fine. That seemed like a coordination problem, not a deeper structural one. But after a while you notice how much cost sits inside that repetition. Time gets wasted. Decisions get delayed. People get excluded by weak handoffs rather than clear rules. Value gets distributed through processes that are harder to defend than they first appear.

That is where things start to shift.

Because the issue is not simply whether a credential exists. The issue is whether the meaning of that credential survives contact with another environment. A lot of digital systems can create proof. Far fewer can preserve the force of that proof across boundaries. One platform may know you contributed. One protocol may know you hold something. One organization may know you qualify. But the moment another system needs to act on that, everything becomes uncertain again.

You can usually tell when continuity is missing because people compensate with memory.

Not system memory. Human memory.

Someone on the team remembers how eligibility was decided last time. Someone has the old spreadsheet. Someone knows why one wallet was included and another was not. Someone can explain which credential mattered and which one did not. In small systems, that works for a while. In larger systems, it becomes fragile. The more a process depends on internal memory, the less stable it actually is.

That is the angle from which SIGN starts to feel important to me.

Not as a flashy trust layer. Not as a simple token mechanism. More as an attempt to reduce the amount of institutional forgetting built into the internet. A credential is one way of saying something has already been established. A distribution process is one way of acting on that. Put those together and the deeper question becomes whether digital systems can carry prior meaning forward instead of repeatedly acting like every context is new.

That sounds modest, maybe even obvious.

But it is not obvious in practice.

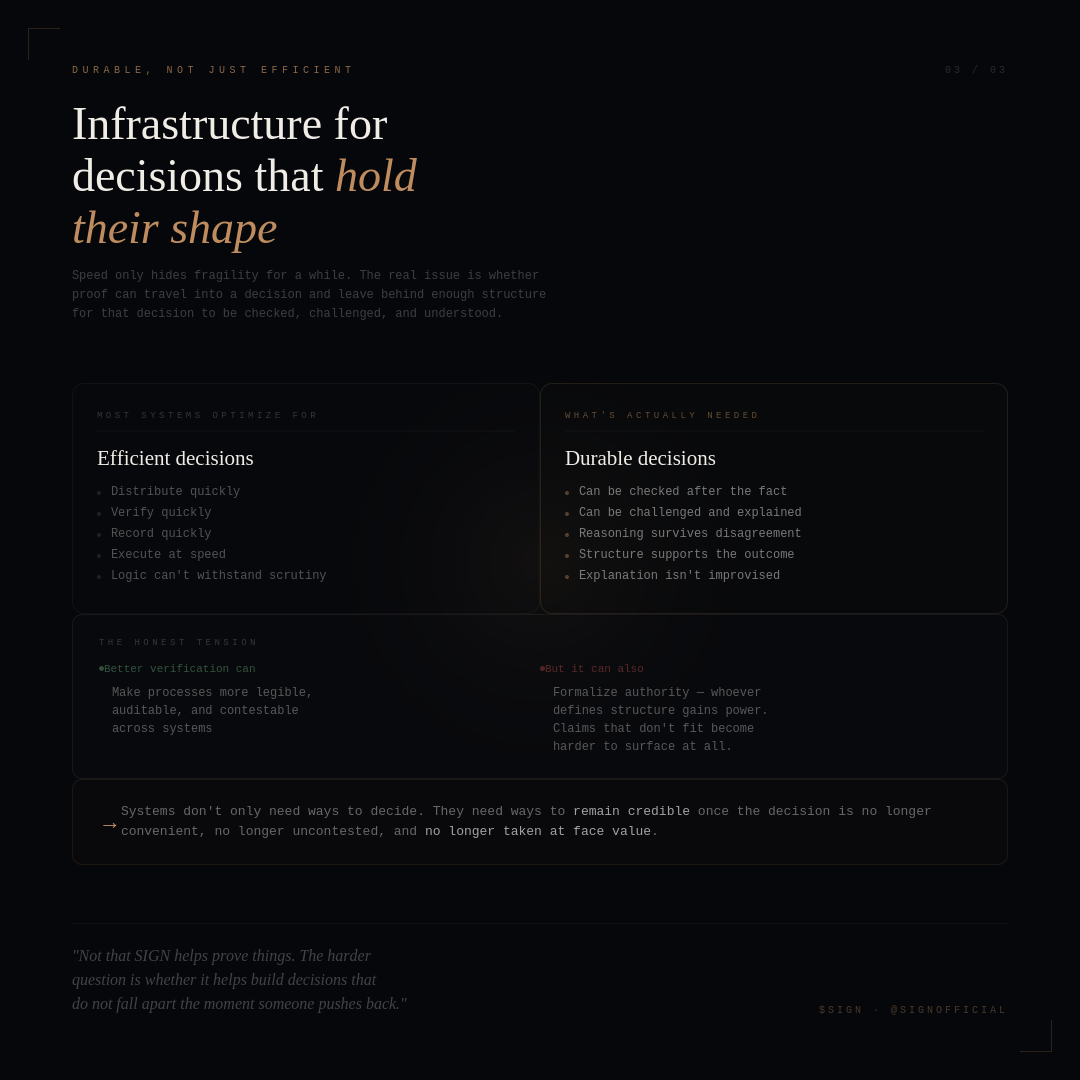

Most systems still behave as if proof is local. True here, unclear there. Valid in one workflow, awkward in another. Accepted by one community, not easily legible to the next. So builders keep creating bridges by hand: custom rules, exports, manual review, patched logic, repeated checks. It works, but it does not scale gracefully. And more importantly, it does not create much confidence when outcomes need to be explained.

That part matters because continuity is not just about convenience. It is also about legitimacy.

If someone receives access, value, status, or recognition, the system should be able to show why in a way that does not collapse under scrutiny. If someone is excluded, that also needs to be explainable. The more systems rely on fragmented records and temporary coordination, the harder that becomes. At that point the problem is no longer technical in a narrow sense. It becomes operational, social, and sometimes legal.

That is where things get interesting.

Because continuity is also a kind of power. The system that helps preserve meaning across contexts starts influencing which meanings survive. Which credentials travel well. Which issuers get trusted. Which forms of proof become standard. Which users remain legible when they move across systems, and which users become harder to classify. So even though the problem is real, the solution is never neutral.

That is why I do not look at SIGN and assume more structure automatically means a better internet.

It might mean fewer repeated checks. Better distribution logic. Stronger audit trails. More portable trust. All of that could matter. But it could also mean that certain categories of legitimacy become easier to circulate than others. Some credentials fit neatly into shared systems. Others stay messy, local, or hard to transfer. Convenience has a way of becoming standard, and standard has a way of becoming authority.

Still, the underlying need feels hard to dismiss.

The internet keeps creating situations where prior action needs to matter later in another setting. Not just be visible, but actually count. A contribution needs to support a reward. A credential needs to support access. A verified condition needs to support a payment or a permission. That requires more than storage. It requires continuity strong enough that a second system can proceed without pretending the first one never existed.

That is why SIGN stays interesting to me.

Not because it promises some final answer to trust. That feels too clean. More because it seems to be aimed at one of the internet’s more ordinary and persistent failures: the inability to carry established meaning forward without losing it in the handoff. A lot of digital systems still work as though every new context has to rediscover the past for itself.

That gets expensive after a while.

It also gets tiring.

So when I think about SIGN, I do not really think first about credentials as objects or tokens as outputs. I think about whether online systems can become a little less forgetful. Whether proof can retain enough shape, enough context, enough legitimacy that the next decision does not have to begin from scratch. That feels like a quieter ambition than most technology language allows for.

But maybe that is why it feels closer to the real problem.

Not how to create more digital activity.

How to stop losing meaning between one action and the next.

@SignOfficial