Last night at 2:17 AM, right after the CreatorPad snapshot window quietly closed, I found myself still staring at the chain instead of logging off. It didn’t feel like the end of a campaign. It felt more like I had just witnessed a system settle into place. A few attestation calls were still moving through the network in small, disciplined bursts, and what caught my attention wasn’t size or hype but rhythm. Gas crept slightly above its usual range, hovering just high enough to suggest coordinated activity rather than random interaction. I kept noticing repeating traces like 0x7f3.. pushing schema registrations and 0x2ab4.. finalizing validator confirmations within tightly grouped blocks. The average cost per attestation sat somewhere in the 45k–70k gas range, but the real signal was not efficiency alone; it was consistency. It felt engineered, almost deliberate, like something designed to be reused rather than consumed once and forgotten.

I’ll admit that at one point during a simulated credential flow, I hit a pause that stayed with me longer than I expected. The credential had been issued, but the validator confirmation lagged by a few seconds. Nothing broke, nothing failed, but I was sitting in a strange in-between state where the proof technically existed yet wasn’t usable. That small delay forced me to confront something deeper. The system assumes a clean progression (issuance, validation, usage), but reality doesn’t always respect sequence. In that moment, I wasn’t interacting with a finished piece of infrastructure. I was experiencing the fragility of timing inside a trust system. It made me realize that even the smallest latency can introduce doubt, not in the code, but in perception.
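
To make that in-between state concrete, here is a minimal sketch in Python. It is my own illustration and not Sign Protocol’s actual API: the `CredentialState` enum, the `Credential` class, and its methods are hypothetical names, and the three-step flow is simply the issuance, validation, usage progression described above.

```python
from enum import Enum


class CredentialState(Enum):
    ISSUED = "issued"        # proof exists, validator confirmation still pending
    VALIDATED = "validated"  # validator confirmation has landed
    USED = "used"            # a relying party has consumed the proof


class Credential:
    """Hypothetical credential that only moves forward through its lifecycle."""

    def __init__(self) -> None:
        self.state = CredentialState.ISSUED

    def confirm(self) -> None:
        # Simulates the validator confirmation arriving after issuance.
        if self.state is not CredentialState.ISSUED:
            raise ValueError(f"cannot confirm from state {self.state.value}")
        self.state = CredentialState.VALIDATED

    def is_usable(self) -> bool:
        # Issued-but-unconfirmed is the "exists yet isn't usable" gap.
        return self.state is CredentialState.VALIDATED

    def use(self) -> None:
        if not self.is_usable():
            raise ValueError(f"credential not usable in state {self.state.value}")
        self.state = CredentialState.USED


cred = Credential()
usable_before = cred.is_usable()  # still waiting on the validator
cred.confirm()
usable_after = cred.is_usable()
print(usable_before, usable_after)
```

The point of the sketch is that the gap is an explicit state rather than an error. A client that treats `ISSUED` as a normal, visible stage can show "pending confirmation" instead of leaving the user to wonder whether something went wrong.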

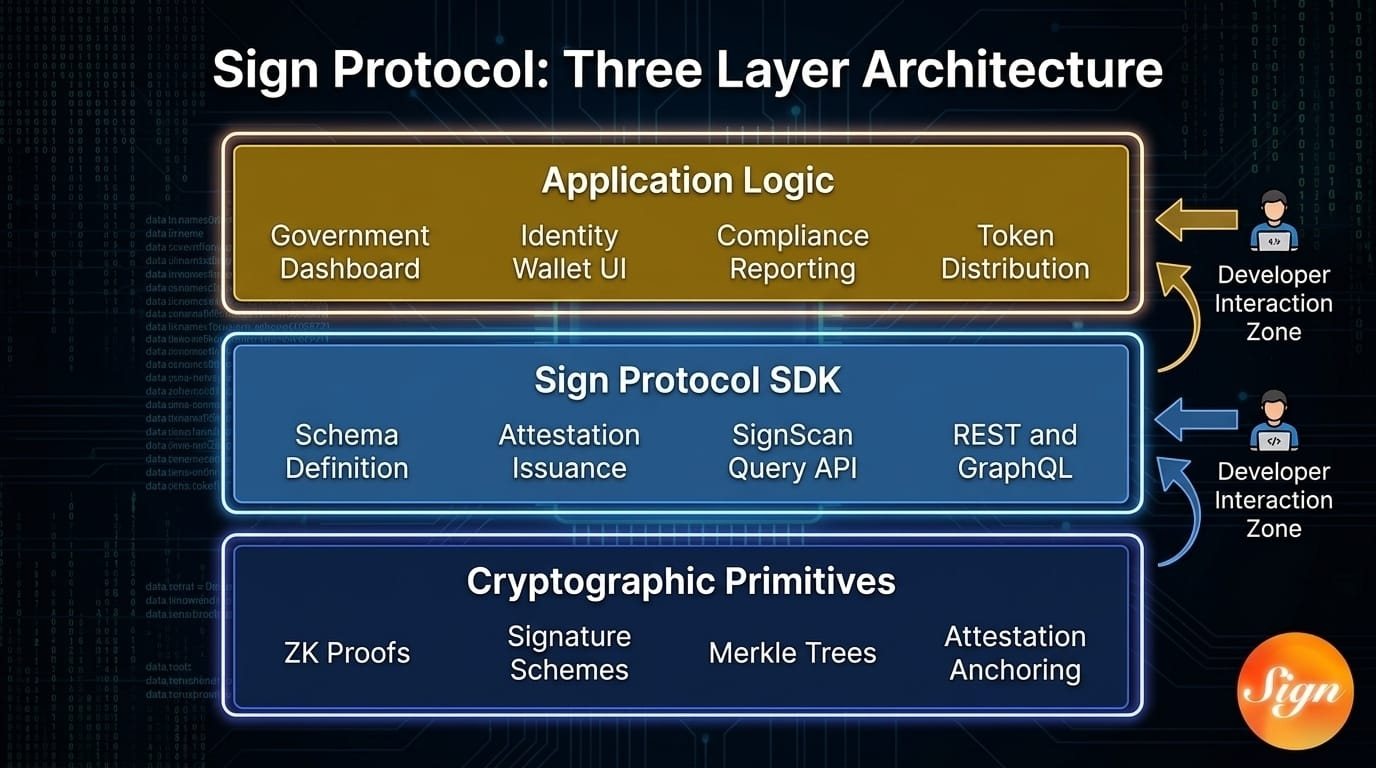

As I kept tracing the mechanics, I stopped seeing @SignOfficial as a stack of layers and started seeing it as a loop. The economic incentives shaping validators are not neutral; they are directional, influenced by token dynamics that still carry visible pressure, especially with the gap between circulating valuation and fully diluted expectations. That pressure inevitably feeds into how validation is performed, which then defines what becomes accepted as truth at the technical level. And once that truth is encoded, governance decisions begin to form around it: schemas, revocation rights, acceptable proofs. But governance doesn’t sit above the system; it bends back into incentives again. Everything feeds everything else. It’s not layered architecture. It’s recursive design.

While observing all of this, I couldn’t help but mentally contrast it with systems like Chainlink and Bittensor. Chainlink focuses on pulling external truth into the chain, acting as a bridge between worlds, while Bittensor optimizes for the production and ranking of intelligence itself. Sign, however, feels like it operates on a different axis entirely. It’s not asking what is true or who is most intelligent. It’s asking something quieter but more structural: once something is verified, how far can that verification travel without breaking?

The honest part I keep returning to is this: every credential is a snapshot, but nothing about reality is static. A verified identity, a credential, a proof: each represents a moment that has already passed. And yet the system is designed to make that moment reusable across contexts, across platforms, across time. That’s where a subtle tension begins to emerge. Validity doesn’t guarantee relevance. A proof can remain technically correct while slowly drifting away from the context in which it actually makes sense. There’s no dramatic failure when that happens, no visible exploit. Just a quiet misalignment that grows over time.
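
One way to picture the difference between validity and relevance is to treat them as two separate checks. The sketch below is only an illustration under my own assumptions: the `Attestation` type, the `is_valid` and `is_relevant` helpers, and the idea of a per-context `max_age` are hypothetical and are not taken from Sign Protocol.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Attestation:
    attested_at: datetime  # the moment the snapshot was taken
    revoked: bool = False


def is_valid(att: Attestation) -> bool:
    # "Technically correct": it was issued and nobody has revoked it.
    return not att.revoked


def is_relevant(att: Attestation, now: datetime, max_age: timedelta) -> bool:
    # Relevance is decided by the consuming context, which sets how
    # old a snapshot may be before it stops making sense there.
    return is_valid(att) and (now - att.attested_at) <= max_age


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
old = Attestation(attested_at=now - timedelta(days=400))

still_valid = is_valid(old)
still_relevant = is_relevant(old, now, max_age=timedelta(days=365))
print(still_valid, still_relevant)
```

Here the 400-day-old attestation passes the validity check but fails a context that only accepts snapshots up to a year old. Nothing is revoked and nothing errors out, which is exactly the quiet drift described above.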

Even when I look at the market behavior around SIGN, the pattern feels familiar on the surface but slightly different underneath. The post-TGE movement, the sharp rise to its peak, the rapid correction, and then the partial recovery all fit within expected crypto cycles. But the structural gap between market cap and FDV still lingers as a reminder that future supply will test the system’s resilience. Narrative alone won’t carry it. The infrastructure has to hold under real usage, under real pressure, when incentives begin to stretch.

What stays with me more than anything is how unflashy the entire experience feels. There’s no immediate wow moment. No obvious spectacle. Instead, it creates a kind of quiet friction in thought. It keeps pulling me back to the same question again and again: are we actually removing friction, or are we just relocating it somewhere less visible? Because it increasingly feels like Sign Protocol isn’t eliminating complexity. It’s compressing it, structuring it, and making it portable.

And somewhere at the edge of all this design, there’s still a human being interacting with it. Not a validator or a schema designer, but an individual trying to prove something about themselves in a system that prefers clean, reusable truths. I keep wondering whether making trust portable actually empowers that person, or whether it gradually standardizes them into fixed representations that don’t evolve as quickly as they do. That’s the ripple I’m still sitting with, and I don’t think it resolves easily.