I’ve noticed something about myself lately: I’ve started tuning out whenever a new infrastructure project claims it can “fix trust.” Maybe it’s fatigue. Maybe it’s pattern recognition. After a while, everything begins to sound the same: new primitives, better rails, seamless verification. The promises stack up faster than the systems ever seem to deliver. And somewhere along the way, I stopped expecting any of it to actually change how things work in the real world.

It wasn’t a single moment that caused that shift. More like accumulation. Watching people go through the same loops: submitting documents, verifying identities, re-verifying them somewhere else, slightly altered, slightly mistrusted. I remember helping someone navigate a cross-border payment issue that had nothing to do with money itself. The funds were there. The sender was known. The receiver was legitimate. But the system on the other side needed its own proof, its own format, its own interpretation of what “verified” meant. So everything had to be redone. Not because anything was wrong, but because nothing carried over cleanly.

That’s the part that started bothering me. Not fraud. Not even inefficiency, exactly. Something more subtle. It felt like meaning itself wasn’t portable.

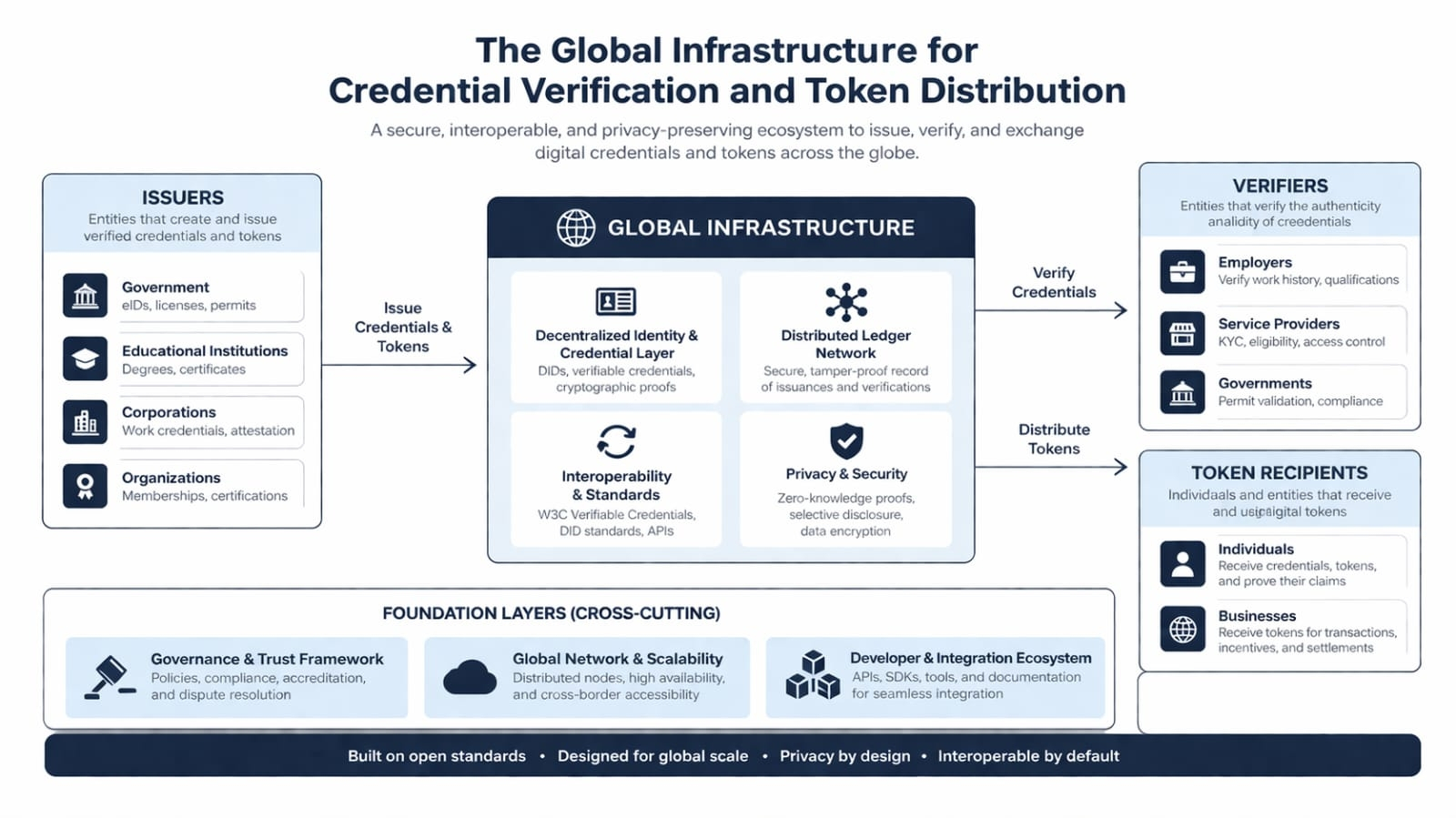

I didn’t think much of it until I came across SIGN, not in the usual promotional way but buried in a discussion about attestations. At first glance, it looked like another attempt to formalize verification. Credentials, signatures, distribution layers. I almost dismissed it. But something about the framing caught me off guard. It wasn’t trying to prove truth in isolation; it was trying to preserve how that truth was understood across systems.

That distinction felt small, but it stayed with me.

Because maybe verification was never the core problem. Systems are already quite good at proving things. The issue is what happens after. A credential can be valid, cryptographically sound, even widely recognized, and still fail the moment it crosses into a new environment that doesn’t share the same assumptions. So it gets checked again. And again. Each time slightly differently, each time losing a bit of its original context.

What SIGN seems to be circling around is not just whether something is true, but whether that truth can survive translation.

I keep coming back to that. The idea that infrastructure isn’t really about data movement; it’s about meaning preservation. And once you start looking at it that way, a lot of things shift. Identity systems aren’t just identity systems. Payment rails aren’t just about settlement. Even compliance frameworks start to look less like rules and more like competing interpretations of the same underlying signals.

It makes sense, then, why verified data keeps getting re-verified. Not because it’s untrustworthy, but because its meaning isn’t universally legible. A credential issued in one jurisdiction carries assumptions (legal, cultural, procedural) that don’t automatically map onto another. So the receiving system doesn’t just check the data; it reconstructs the context. Or tries to.

That reconstruction is where friction lives.

And maybe that’s where something like SIGN fits in: not as a solution in the traditional sense, but as a kind of connective layer. Attestations instead of isolated signatures. Systems that don’t just assert facts, but attach context to them in a way that other systems can interpret without starting from zero. At least, that seems to be the direction.

I’m not entirely convinced it works. But I can see the shape of the problem more clearly now.

If you extend this out, the implications get uncomfortable pretty quickly. Think about identity across jurisdictions: how many times a person has to prove who they are, in slightly different ways, depending on where they are and what they’re trying to access. Or compliance systems, where the same transaction might be acceptable in one framework and suspicious in another, not because the underlying reality changed, but because the interpretation did.

Even distribution systems (aid, subsidies, emerging CBDCs) run into this. It’s not enough to know that someone is eligible. That eligibility has to be understood and accepted across multiple layers of infrastructure, each with its own logic. When that breaks down, you don’t just get delays. You get exclusion.

And all of it traces back to this quiet instability: truth that doesn’t travel well.

What’s interesting is how invisible this usually is. Infrastructure only really shows itself when something fails. When a payment stalls, or an identity gets rejected, or a process has to restart for reasons no one can quite explain. Those moments feel like edge cases, but they’re not. They’re signals that the system underneath isn’t aligned on meaning.

From that angle, repetition starts to look less like redundancy and more like a symptom. Every time something has to be re-validated, it’s a sign that the original validation didn’t carry enough context to be trusted elsewhere. And if that’s happening at scale, it’s not just inefficient; it’s structural.

So the value of infrastructure might not be in how fast it moves data, but in how rarely it forces truth to be re-proven.

That’s a strange way to think about it, especially in a space that tends to prioritize speed and throughput. But it feels closer to the actual friction people experience.

Of course, there’s a tension here that I can’t quite resolve. The more you try to preserve context across systems, the more you risk exposing it. Privacy starts to blur into traceability. Zero-knowledge approaches try to minimize what’s revealed, but then you run into the opposite problem: systems that can verify without understanding. And if understanding is the goal, not just verification, then something has to give.

There’s also the question of control. Who defines the context that gets attached to an attestation? Who decides which interpretations are valid? Standardization helps, but it also compresses nuance. And in some cases, that compression might be the point.

When I look at where these ideas are being tested (parts of the Middle East, emerging markets, places experimenting with digital identity and programmable distribution), it doesn’t feel like a clean rollout. More like parallel experiments happening without a shared playbook. Pilots that work in isolation but don’t always translate. Systems that function technically, but still rely on off-chain judgment to resolve edge cases.

That gap between pilot and production feels significant.

And then there’s the quieter risk, the kind that doesn’t show up in whitepapers. Infrastructure has a way of shaping behavior without announcing itself. If meaning starts to travel more efficiently across systems, that could reduce friction in ways that are genuinely useful. But it could also make certain forms of control more seamless, more ambient. Less visible.

I don’t think SIGN, or anything like it, resolves that tension. If anything, it brings it closer to the surface.

Which leaves me in a slightly uncomfortable place. I can see why this matters more than I initially thought. The problem it’s pointing at feels real, and deeper than most of the narratives built around it. But I’m not sure what it becomes once it actually works: whether it makes systems more interoperable in a way that empowers people, or just more coherent in a way that benefits whoever defines the rules.

And maybe that’s the part I can’t shake.

Because if trust and meaning really can move across systems without being reinterpreted every time, then something fundamental changes.

I’m just not sure yet who that change is really for.