There was a moment when I looked at a verified on-chain record and felt something I didn’t expect. Everything was correct: the signature checked out, the data matched, nothing looked off. But the more I looked at it, the more I realized I wasn’t completely sure what it meant anymore. Not in a technical sense, but in a practical one. Depending on how I thought about the surrounding context, the same attestation seemed to tell slightly different stories. That feeling stayed with me.

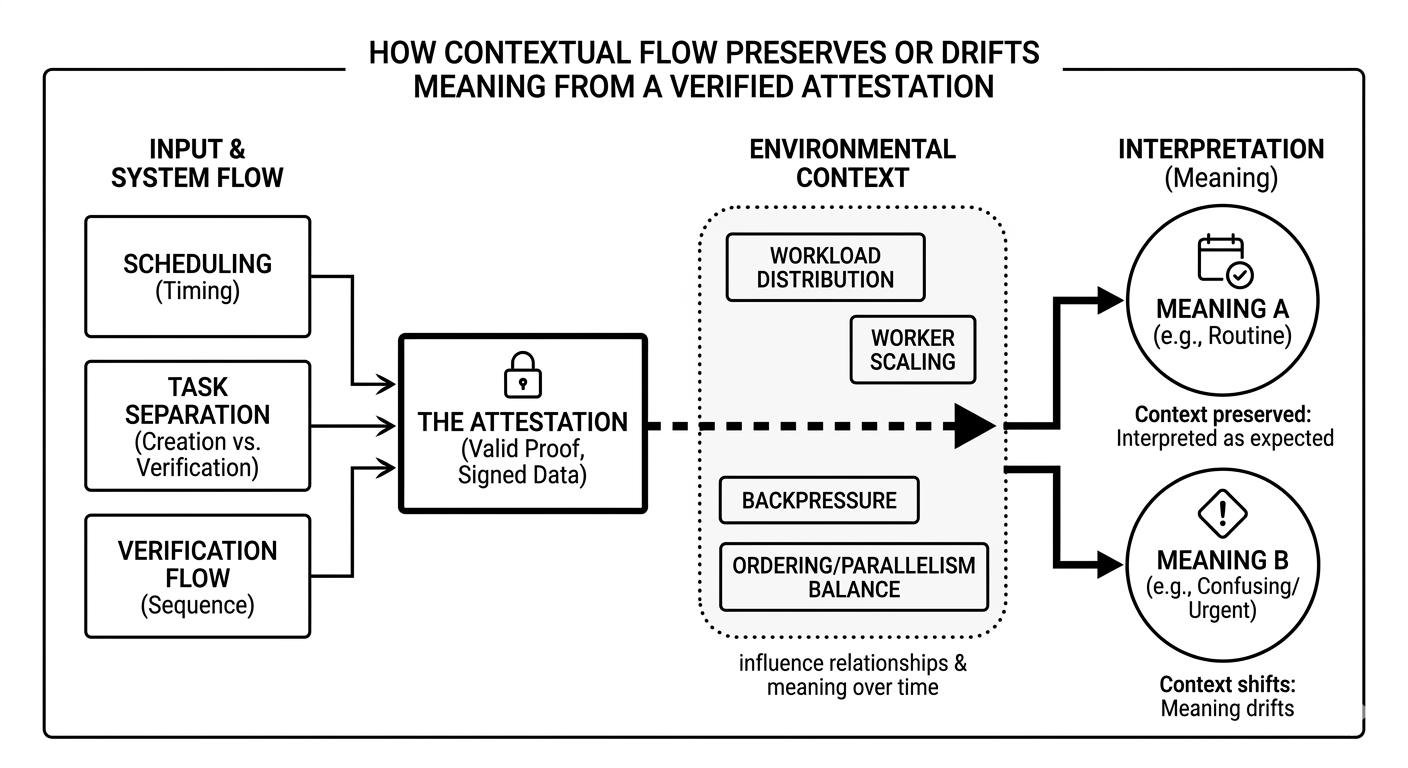

After noticing this a few times, I started to pay more attention to something we don’t usually talk about enough. We often assume that once something is verified, its meaning is fixed. But what I noticed is that meaning is not always locked in the same way as validity. The system can confirm that something happened, but how that “something” is understood can still shift depending on timing, sequence, or what else is happening around it. And that gap is easy to miss until you actually feel it.

I tend to think of it like a package moving through a busy delivery network. Every checkpoint stamps it as verified, and each stamp is correct. But the meaning of that package can still change. It might be urgent if it arrives early, routine if it arrives late, or even confusing if it shows up out of the expected order. The label doesn’t change, but the context around it does. And that context quietly shapes how the package is understood.

When I look at how Sign approaches this, what caught my attention is that it doesn’t seem to treat attestations as isolated pieces of truth. Instead, it feels like the system is trying to account for the environment those attestations exist in. From a system perspective, that shift is subtle but important. It suggests that producing a valid proof is not the end of the story; preserving its meaning over time matters just as much.

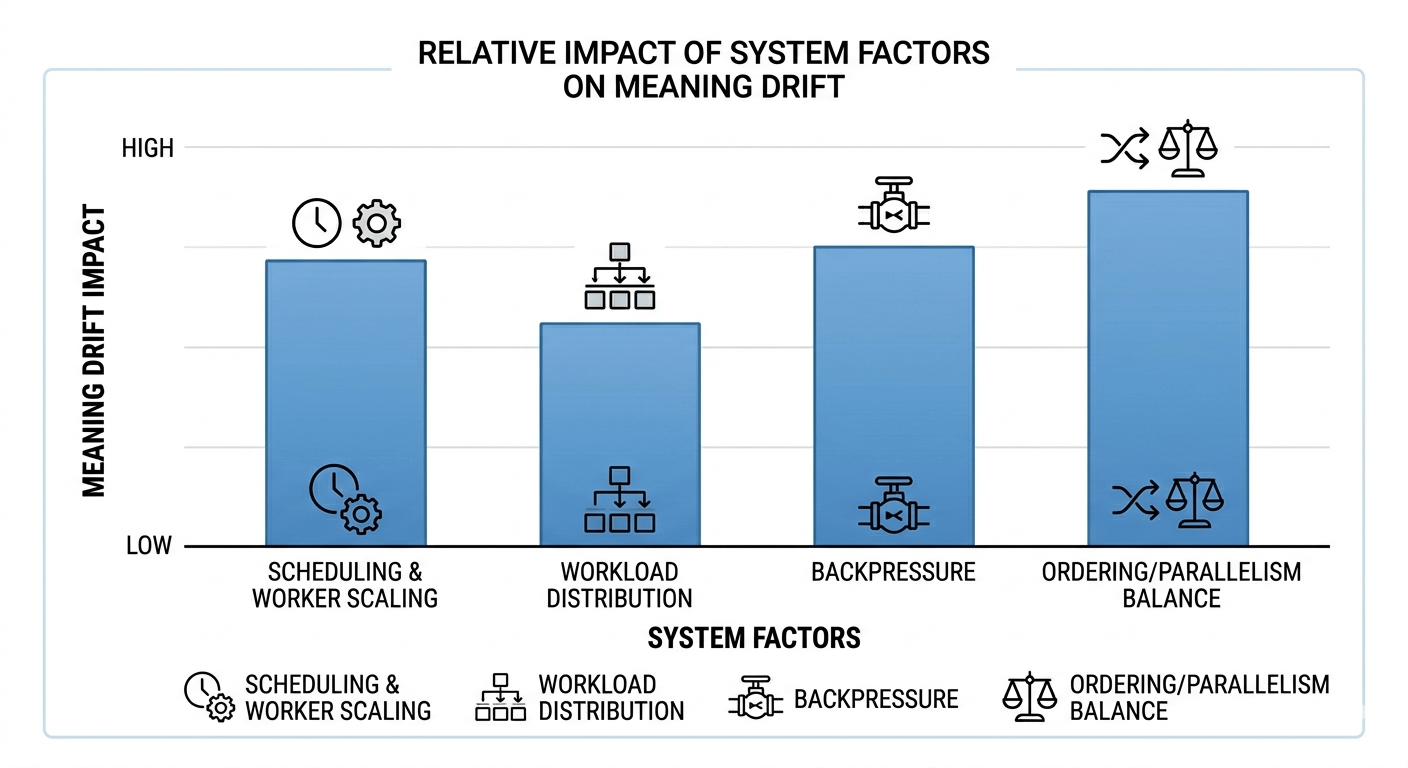

What interests me more is how this idea shows up in the structure itself. Scheduling affects when an attestation enters the system, which can influence how it relates to others. Task separation helps keep the creation of data from interfering with its verification, which reduces the chances of distortion. The verification flow feels less like a single checkpoint and more like a path that maintains consistency across different conditions.

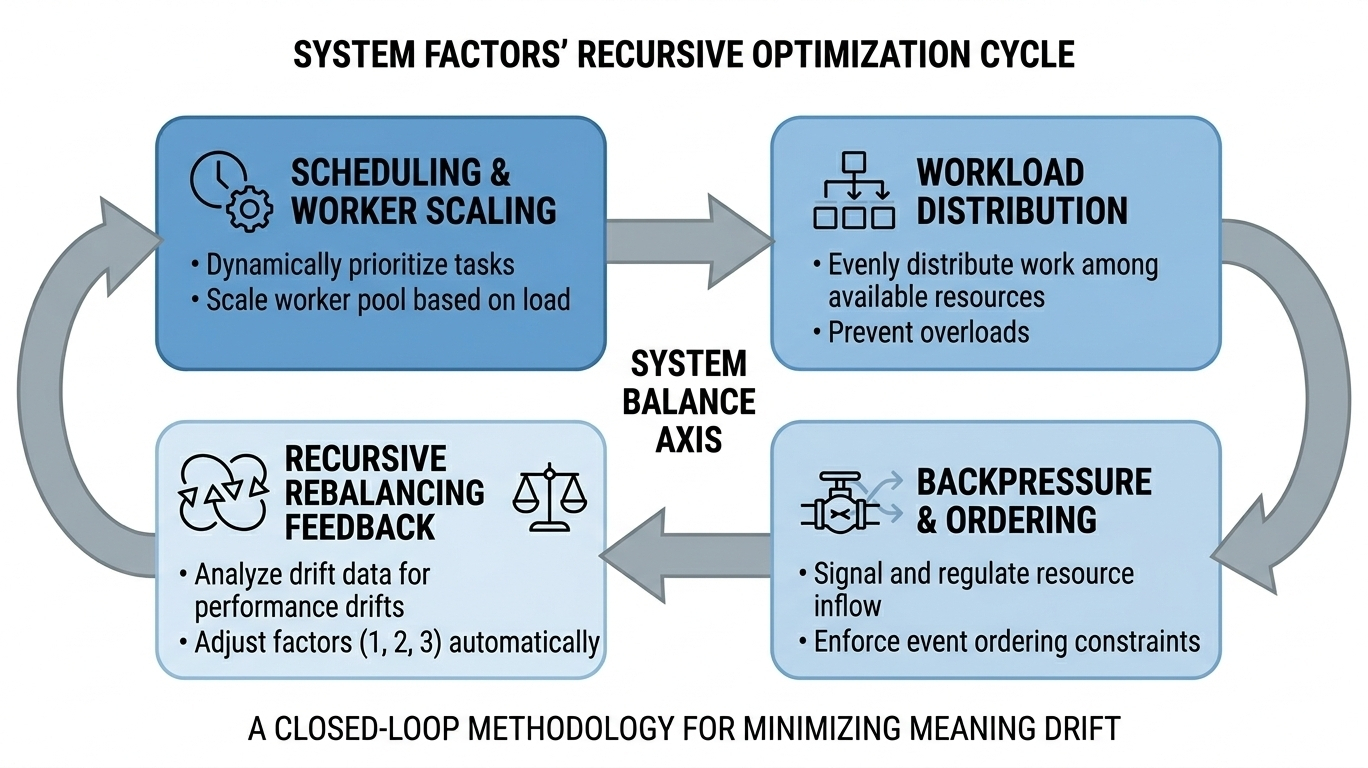

Then there are the quieter parts: workload distribution, worker scaling, and backpressure. These are the things you don’t notice when everything is smooth, but you definitely feel when they’re missing. If one part of the system slows down, even slightly, it can change how events line up. And once that alignment shifts, interpretation starts to drift, even if the data itself is still correct.
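To make backpressure a little more concrete, here is a minimal Python sketch. It is not how Sign implements anything; `run_pipeline` and its `capacity` parameter are names I made up for illustration. The only idea it shows is that a bounded queue forces a fast producer to wait for a slow consumer, so events reach the consumer in the order they were produced instead of piling up unchecked.

```python
import queue
import threading


def run_pipeline(events, capacity=2):
    """Push events through a bounded queue and return them as the consumer saw them.

    Hypothetical helper for illustration; events must not contain None,
    which is used here as the end-of-stream sentinel.
    """
    buf = queue.Queue(maxsize=capacity)  # bounded: put() blocks when the queue is full
    seen = []

    def consumer():
        while True:
            item = buf.get()
            if item is None:  # sentinel: the producer has finished
                break
            seen.append(item)

    worker = threading.Thread(target=consumer)
    worker.start()
    for event in events:
        buf.put(event)  # blocks while the consumer is behind: this is the backpressure
    buf.put(None)
    worker.join()
    return seen
```

With a single producer and a single consumer, the queue is first-in, first-out, so the consumer's view of the sequence matches the producer's even when the consumer is the slower side.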

The balance between ordering and parallelism also plays into this. Real-world events don’t happen in perfect order, but systems still need to present them in a way that makes sense. Too much ordering can slow things down. Too much parallelism can blur relationships between events. What matters in practice is how naturally the system handles that tension without making it visible to the user.
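One common way to get both, sketched here under my own assumptions rather than taken from Sign, is to let work finish in any order and then release results strictly by sequence number. The `Resequencer` class below is hypothetical, and it assumes every event carries a unique, gap-free sequence number starting at `first_seq`.

```python
import heapq


class Resequencer:
    """Accept results in any order; release them in sequence order.

    Hypothetical illustration: assumes unique, gap-free sequence numbers.
    """

    def __init__(self, first_seq=0):
        self._next = first_seq  # the sequence number we are waiting to release
        self._pending = []      # min-heap of (seq, payload) that arrived early

    def accept(self, seq, payload):
        """Store one finished result and return everything that is now releasable."""
        heapq.heappush(self._pending, (seq, payload))
        released = []
        # Release the contiguous run that starts at the expected sequence number.
        while self._pending and self._pending[0][0] == self._next:
            released.append(heapq.heappop(self._pending)[1])
            self._next += 1
        return released
```

If result 2 finishes first, it is held back; once 0 and 1 arrive, everything comes out in the original order. Parallel workers stay busy, and a reader downstream never sees events out of sequence.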

The more I think about it, the more I realize that an attestation is never just a static piece of data. It carries timing, relationships, and context with it, even if those things aren’t immediately visible. And if the system doesn’t preserve that context carefully, meaning can slowly drift, even when everything is technically correct.
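As a rough illustration of what carrying context could look like, the Python sketch below binds timing and relationships into the same digest as the payload. The `Attestation` class and its field names (`issued_at`, `sequence`, `references`) are my own assumptions for the example, not Sign's actual schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Attestation:
    """Hypothetical record: the payload plus the context it was issued in."""

    payload: str
    issued_at: int          # Unix timestamp in seconds
    sequence: int           # position relative to other attestations
    references: tuple = ()  # ids of related attestations

    def digest(self) -> str:
        # Serialize every field in a stable key order so equal records hash equally.
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Because the timestamp, sequence number, and references are part of what gets hashed, two records with the same payload but different context produce different digests. The context cannot quietly change later without the digest changing too.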

A reliable system, at least from what I’ve seen, is not the one that simply produces valid proofs. It’s the one that quietly keeps those proofs meaningful, no matter when or how you look at them. The kind of system where you don’t have to second-guess what you’re seeing, because it feels consistent every time.