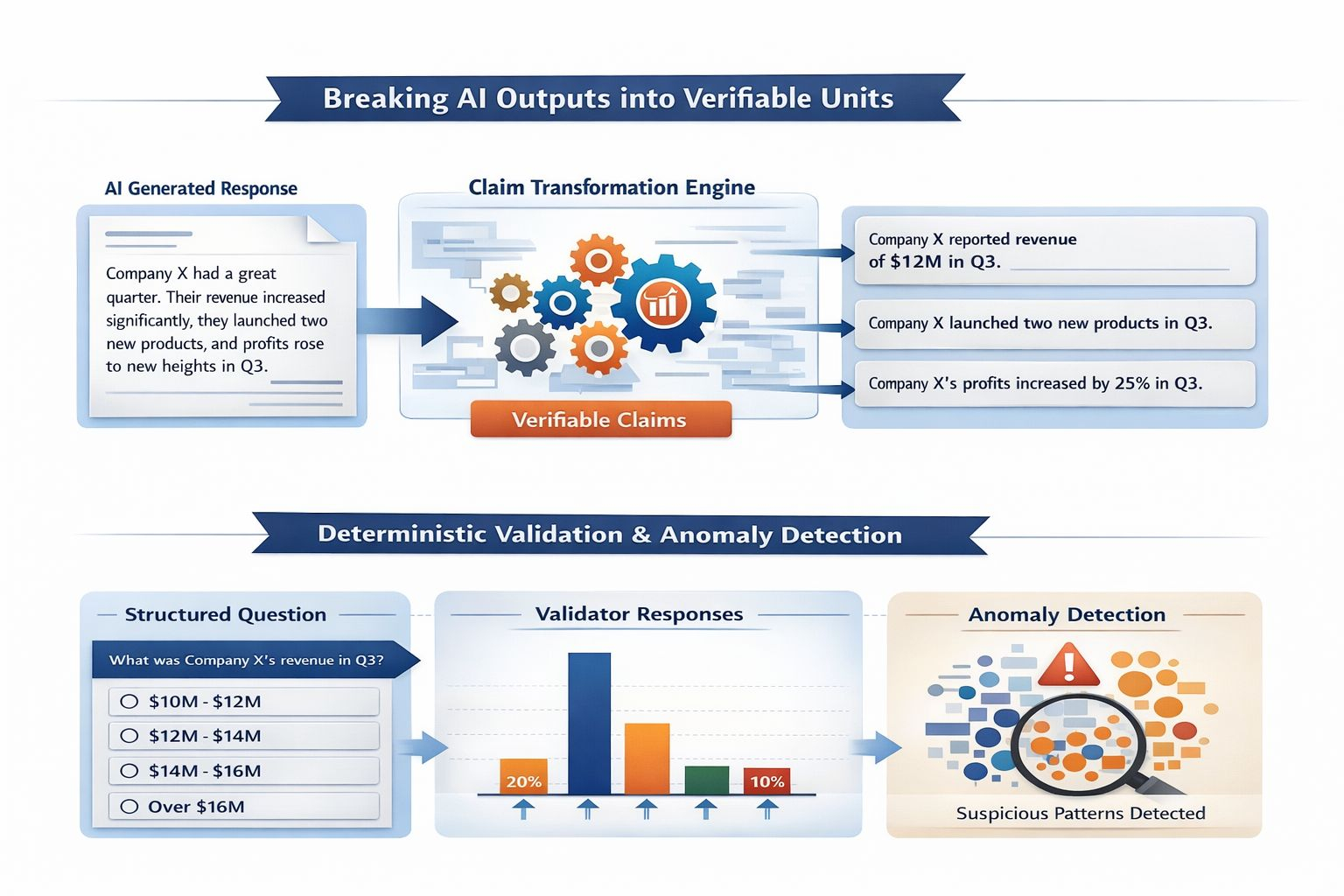

As artificial intelligence systems become more autonomous, the need for structured verification becomes more urgent. Within the architecture of @Mira - Trust Layer of AI, the Claim Transformation Engine serves as a foundational layer that converts unstructured AI outputs into verifiable, consensus-ready units. In effect, it turns probabilistic text generation into structured, testable information.

At its core, the Claim Transformation Engine performs output decomposition. When an AI model generates a long-form response, it often contains multiple embedded assertions: facts, relationships, numerical values, causal statements, and conditional logic. Instead of treating the response as a single block of text, the engine isolates each atomic statement into what can be called an entity-claim pair.

An entity-claim pair follows a structured logic format: a clearly identifiable subject (entity) combined with a precise, testable assertion (claim). For example, rather than validating a paragraph about a company’s performance, the engine extracts structured units such as: “Company X reported revenue of $Y in QZ.” Each extracted unit becomes independently verifiable. This granular breakdown reduces ambiguity and allows distributed validators to focus on measurable truth conditions.
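The entity-claim structure can be illustrated with a small sketch. Everything here is an assumption made for illustration: the `EntityClaim` type, the `decompose` helper, and the one-claim-per-sentence rule are hypothetical and are not taken from Mira's implementation, which would presumably rely on a model rather than punctuation to find atomic statements.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class EntityClaim:
    """Hypothetical container for one independently verifiable unit."""
    entity: str  # the clearly identifiable subject
    claim: str   # one precise, testable assertion about that subject


def decompose(entity: str, text: str) -> list[EntityClaim]:
    """Toy decomposition: treat each sentence as one atomic claim.

    Assumption: sentences end with '.', '!' or '?' followed by whitespace.
    A real engine would also need to split compound sentences and
    resolve pronouns back to the entity.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [EntityClaim(entity, s) for s in sentences if s]


if __name__ == "__main__":
    response = (
        "Company X reported revenue of $Y in QZ. "
        "Company X opened two new offices."
    )
    for unit in decompose("Company X", response):
        print(unit)
```

Each returned unit can then be routed to validators on its own, which is the property the paragraph above describes.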

To enhance deterministic validation, the system converts open-ended claims into multiple-choice formats wherever possible. Instead of asking validators whether a statement is true or false in abstract terms, the protocol reframes claims into bounded outcome sets. For instance, a numerical claim may be transformed into selectable ranges, while categorical assertions may be converted into discrete answer options. This structured framing significantly reduces interpretive variance between validators and increases consensus efficiency.
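As a rough sketch of how a numerical claim might be reframed as selectable ranges, the snippet below builds equal-width bins around a claimed value. The function names, the bin width, and the choice of half-open intervals are illustrative assumptions, not details of the protocol.

```python
import math


def range_options(claimed: float, width: float, n: int = 4,
                  position: int = 1) -> list[tuple[float, float]]:
    """Build n adjacent half-open ranges [lo, hi) of equal width.

    The range containing `claimed` is placed at index `position`.
    Assumption: a real system would vary `position` so that the slot
    itself does not reveal which option matches the claim.
    """
    base = math.floor(claimed / width) * width  # lower edge of the claim's bin
    start = base - position * width
    return [(start + i * width, start + (i + 1) * width) for i in range(n)]


def option_index(options: list[tuple[float, float]], value: float):
    """Return the index of the range containing value, or None."""
    for i, (lo, hi) in enumerate(options):
        if lo <= value < hi:
            return i
    return None


if __name__ == "__main__":
    options = range_options(claimed=4.2, width=1.0)
    print(options)
    print(option_index(options, 4.2))
```

A validator then answers by picking one index, so two validators' answers are directly comparable without interpreting free text.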

The multiple-choice transformation serves another critical function: statistical detectability of dishonest behavior. In an open-ended validation environment, malicious participants could submit arbitrary justifications that are difficult to compare. However, when responses are constrained to defined options, probabilistic modeling becomes possible. The system can measure deviation patterns, detect anomaly clusters, and identify statistically improbable agreement rates that suggest collusion or random guessing.
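Because answers are constrained to option indices, agreement between validators becomes a simple count. The sketch below computes pairwise agreement rates. The data layout (one list of chosen option indices per validator, aligned by claim) and the function name are assumptions for illustration. High agreement alone is not evidence of collusion, since honest validators who are both correct will also agree, so a real monitor would likely look at agreement on incorrect options or compare against a modeled baseline.

```python
from itertools import combinations


def pairwise_agreement(
    answers: dict[str, list[int]],
) -> dict[tuple[str, str], float]:
    """Fraction of shared claims on which each validator pair chose
    the same option. answers[v][i] is validator v's option for claim i."""
    rates: dict[tuple[str, str], float] = {}
    for a, b in combinations(sorted(answers), 2):
        pairs = list(zip(answers[a], answers[b]))
        if not pairs:  # no overlapping claims, nothing to compare
            continue
        rates[(a, b)] = sum(x == y for x, y in pairs) / len(pairs)
    return rates


if __name__ == "__main__":
    sample = {
        "v1": [0, 1, 2, 3],
        "v2": [0, 1, 2, 3],
        "v3": [0, 0, 0, 0],
    }
    for pair, rate in pairwise_agreement(sample).items():
        print(pair, rate)
```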

Statistical detectability plays a central role in maintaining economic integrity. A validator guessing uniformly at random among four options would be correct about 25 percent of the time over many claims. The network can monitor validator performance distributions and compare them against this expected random baseline. Persistent deviation below the baseline, or results that sit suspiciously far above statistical norms, can trigger review mechanisms or economic penalties. This ensures that participation is not only decentralized but also measurable and accountable.
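The comparison against a random baseline can be made concrete with an exact binomial tail probability. This is a minimal sketch under stated assumptions: guessing is uniform over the options, claims are independent, and the significance level `alpha` and the function names are hypothetical choices rather than protocol parameters.

```python
from math import comb


def binom_tail(k: int, n: int, p: float, upper: bool) -> float:
    """Exact binomial tail: P(X >= k) if upper, else P(X <= k),
    for X ~ Binomial(n, p)."""
    ks = range(k, n + 1) if upper else range(0, k + 1)
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in ks)


def classify(correct: int, total: int, n_options: int = 4,
             alpha: float = 0.01) -> str:
    """Compare a validator's record with the uniform-guessing baseline.

    The labels only describe the record relative to guessing; they do
    not by themselves say whether a validator is honest.
    """
    p = 1.0 / n_options  # 0.25 for a four-option structure
    if binom_tail(correct, total, p, upper=False) < alpha:
        return "below_random"
    if binom_tail(correct, total, p, upper=True) < alpha:
        return "above_random"
    return "consistent_with_random"


if __name__ == "__main__":
    for correct in (5, 25, 90):
        print(correct, classify(correct, 100))
```

A record labeled `consistent_with_random` is what sustained guessing would look like, while `below_random` suggests systematic wrong answers. Deciding when an above-baseline record is "suspiciously" high would need a second reference distribution, such as the performance of known honest validators, which this sketch does not model.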

By combining entity-claim structuring, multiple-choice normalization, and probabilistic monitoring, the Claim Transformation Engine creates a bridge between generative AI and decentralized verification. It shifts validation from subjective interpretation to structured evaluation. Each claim becomes a discrete unit that can be audited, scored, and economically incentivized.

In this model, reliability is no longer an afterthought bolted on once AI output has been generated. Instead, verification is embedded directly into the transformation pipeline. The Claim Transformation Engine does not merely check AI responses. It restructures them into a format where truth can be measured, consensus can be achieved, and trust can be mathematically enforced.