Why I Believe Trust Is the Missing Piece in AI

When I look at how fast artificial intelligence is growing, I feel both excitement and concern. AI can now write full articles, help doctors study medical data, support businesses in making decisions, and even assist in scientific research. It feels like we are living in the future. But if I am honest, there is something that still makes me uncomfortable. AI can sound confident even when it is completely wrong. And when something sounds confident, people naturally trust it.

This is the real problem Mira Network is trying to solve.

Mira Network is not just another AI project. It is a decentralized verification protocol built to make AI outputs reliable. Instead of asking us to blindly trust what an AI says, Mira creates a system where AI answers can be checked, verified, and confirmed using blockchain consensus and economic incentives. It is not about replacing AI. It is about protecting us from its mistakes.

The Problem We All Feel But Rarely Talk About

If you have ever used an AI tool, you may have noticed something strange. Sometimes it gives perfect answers. Other times it produces information that sounds real but has no basis in fact. These errors are called hallucinations. The system is not lying on purpose. It is predicting the most likely continuation of a pattern, not checking facts. So when a prediction is wrong, the result is misinformation delivered in the same confident tone as a correct answer.

Now imagine this happening in healthcare. Or in legal advice. Or in financial systems. A small mistake can lead to serious consequences.

We are also seeing bias appear in AI systems. If the training data contains unfair patterns, the AI can repeat them. And because the answer looks polished and professional, people may not question it.

This is where I think Mira Network has a powerful role. Instead of trying to make one AI perfect, they are building a system that checks AI outputs before we trust them.

How Mira Network Changes the Game

The idea behind Mira is surprisingly simple, but very powerful.

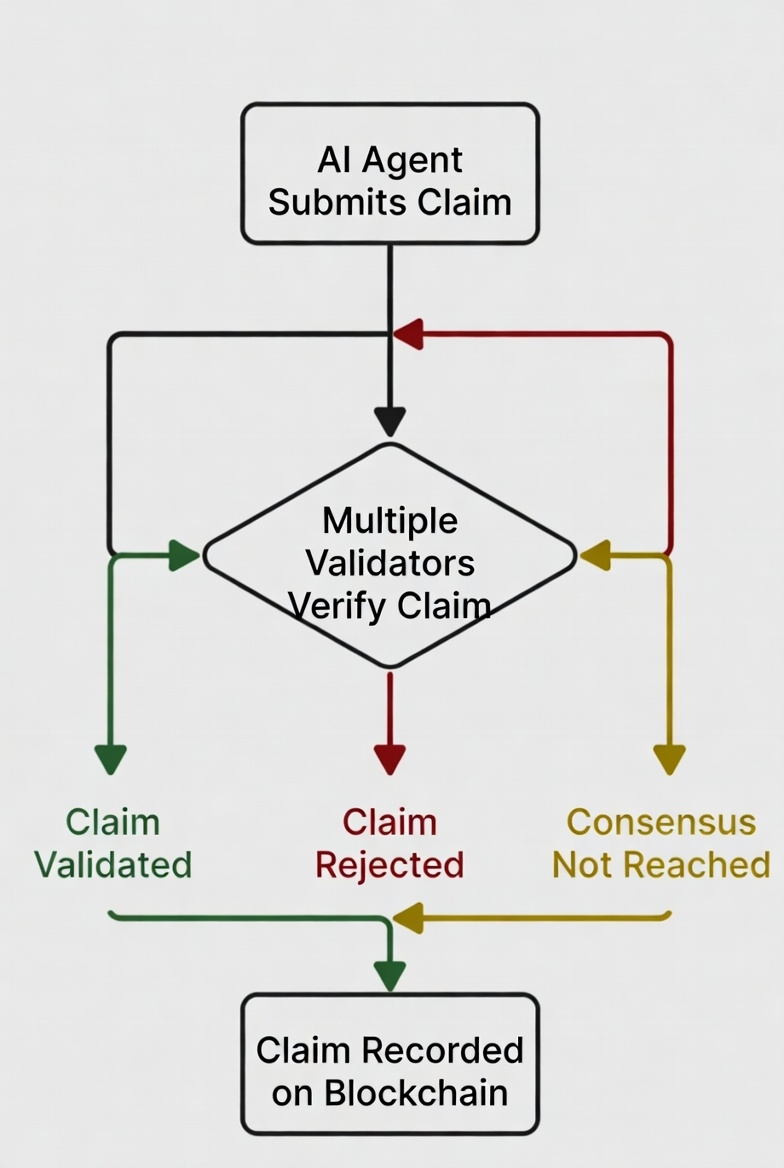

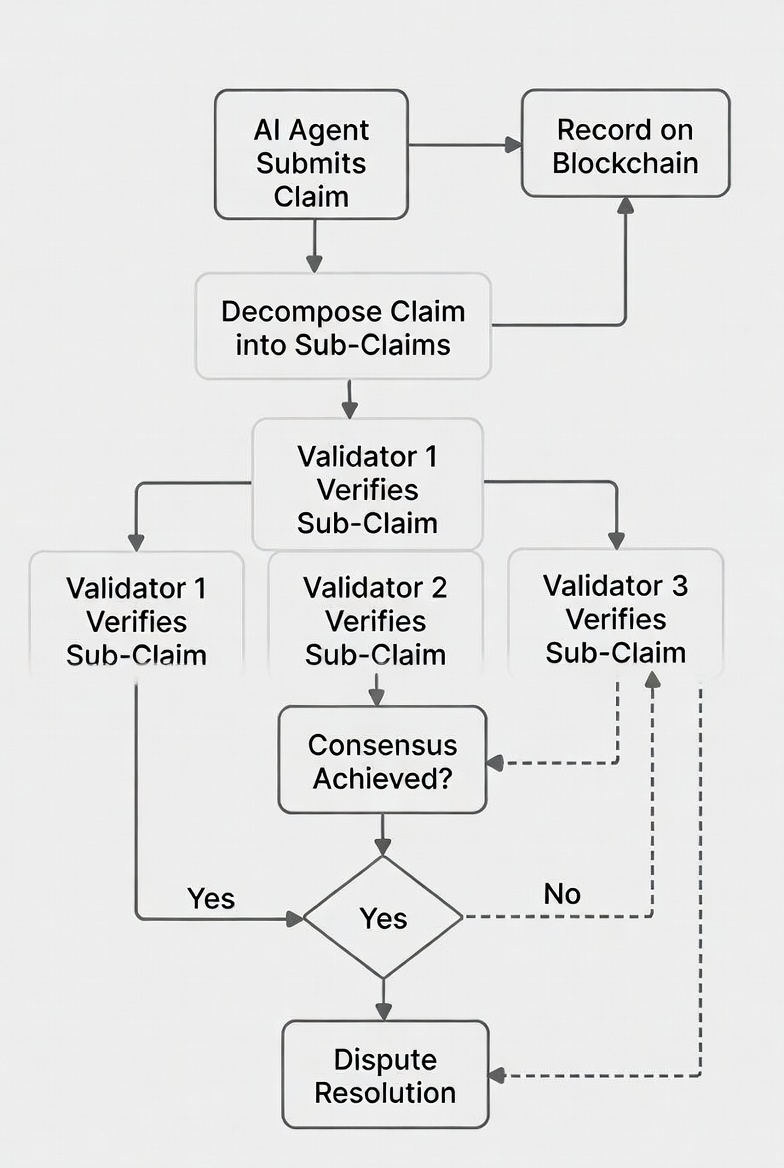

When an AI generates a complex answer, Mira breaks it down into smaller statements called claims. Each claim is something that can be tested. Instead of accepting the full response as one block of information, the system treats it like a list of individual facts.

These claims are then distributed across a decentralized network of independent AI models and validators. They review the claims separately. If enough independent validators agree that a claim is correct, it becomes verified through blockchain consensus.
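To make the two steps above concrete for myself, I wrote a minimal sketch in Python. It is not Mira's actual implementation. The sentence-based claim splitter, the `Validator` type, the toy validators, and the two-thirds threshold are all assumptions I made up for illustration. A real network would use AI models as validators and a far more careful way of extracting claims.

```python
from typing import Callable, Dict, List

# Hypothetical validator: takes a claim and returns True if it judges the claim correct.
Validator = Callable[[str], bool]


def split_into_claims(answer: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def verify_claim(claim: str, validators: List[Validator], threshold: float = 2 / 3) -> bool:
    """Return True when at least `threshold` of the validators accept the claim."""
    if not validators:
        raise ValueError("at least one validator is required")
    votes = [validator(claim) for validator in validators]  # each validator votes separately
    return sum(votes) / len(votes) >= threshold


def verify_answer(answer: str, validators: List[Validator], threshold: float = 2 / 3) -> Dict[str, bool]:
    """Check every claim in the answer on its own instead of trusting the whole block."""
    return {
        claim: verify_claim(claim, validators, threshold)
        for claim in split_into_claims(answer)
    }


# Toy validators: each one accepts only the claims in its own set of known facts.
def make_validator(known_facts: set) -> Validator:
    return lambda claim: claim in known_facts


FACTS = {"Paris is the capital of France"}
validators = [
    make_validator(FACTS),
    make_validator(FACTS),
    make_validator(FACTS | {"The Moon is made of cheese"}),  # one faulty validator
]

answer = "Paris is the capital of France. The Moon is made of cheese."
for claim, verified in verify_answer(answer, validators).items():
    print(f"{claim!r}: {'verified' if verified else 'not verified'}")
```

In this toy run, the first claim is accepted by all three validators and the second by only one of them, so only the first clears the threshold. The point is that a single faulty validator cannot push a false claim through on its own.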

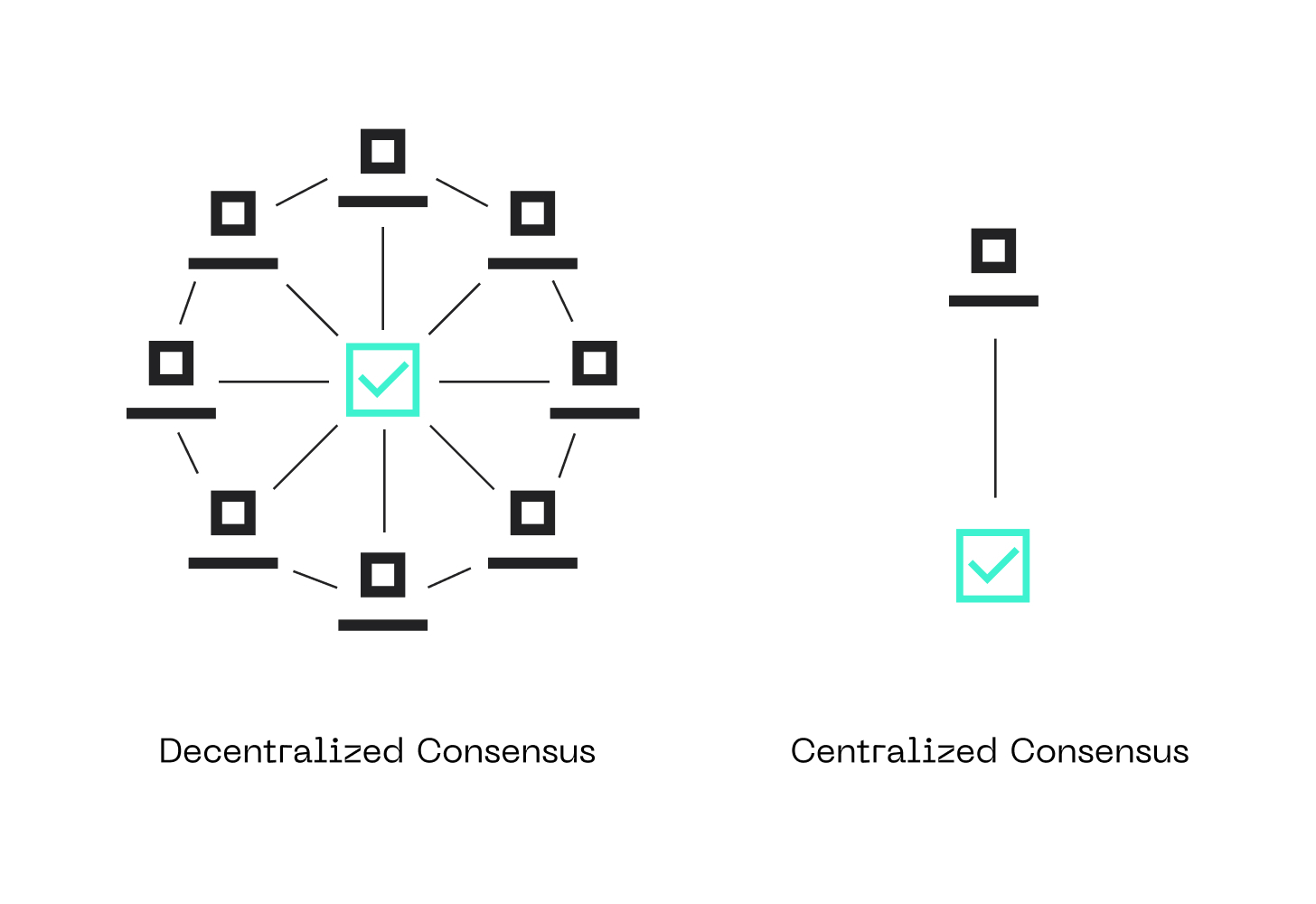

What I find interesting is that this process does not depend on one central authority. There is no single company deciding what is true. The network reaches agreement collectively. It becomes a system of shared responsibility rather than centralized control.

Why Blockchain Matters Here

Some people hear blockchain and immediately think about tokens or trading. But in this case, blockchain is used as a trust machine.

When validators confirm claims, the results are recorded in a transparent and tamper-resistant way. This means no one can change the verification outcome later without the change being visible. It creates accountability.
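The tamper-resistant part is easier to see with a small example. Below is a rough sketch of a hash-linked record list, which is the basic idea behind a blockchain ledger. The function names and the record fields are my own assumptions, and a real blockchain adds much more on top, such as many nodes that each hold a copy and must agree on every new entry.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash the record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(ledger: list, record: dict) -> None:
    """Add a verification result, linking it to the entry before it."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    ledger.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": record_hash(record, prev_hash),
    })


def ledger_is_intact(ledger: list) -> bool:
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append_record(ledger, {"claim": "Paris is the capital of France", "verified": True})
append_record(ledger, {"claim": "The Moon is made of cheese", "verified": False})
print(ledger_is_intact(ledger))  # chain is consistent

ledger[1]["record"]["verified"] = True  # someone quietly flips an outcome
print(ledger_is_intact(ledger))  # the stored hash no longer matches
```

Because each entry's hash covers both its own content and the hash of the entry before it, a quiet edit to an old result shows up as soon as anyone rechecks the chain.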

Economic incentives are also part of the design. Validators are rewarded for honest behavior. If they try to act dishonestly, they risk losing value. This structure encourages truth instead of manipulation.
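Here is how I picture the incentive side, as a simplified sketch. I am assuming that validators lock up a stake, that voting with the final consensus earns a fixed reward, and that voting against it costs a percentage of the stake. The class name, the reward size, and the 10 percent penalty are invented for illustration and are not taken from Mira's actual rules.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ValidatorAccount:
    name: str
    stake: int  # hypothetical token units locked up by the validator


def settle_round(
    accounts: List[ValidatorAccount],
    votes: Dict[str, bool],
    consensus: bool,
    reward: int = 1,
    slash_percent: int = 10,
) -> None:
    """Reward validators that matched the consensus; slash those that did not."""
    for account in accounts:
        if votes[account.name] == consensus:
            account.stake += reward
        else:
            # Integer arithmetic keeps the penalty exact for this toy example.
            account.stake -= account.stake * slash_percent // 100


accounts = [
    ValidatorAccount("alice", 100),
    ValidatorAccount("bob", 100),
    ValidatorAccount("mallory", 100),
]
votes = {"alice": True, "bob": True, "mallory": False}

settle_round(accounts, votes, consensus=True)
for account in accounts:
    print(account.name, account.stake)
```

One caveat with this sketch: disagreeing with the consensus is only a rough proxy for dishonesty, since an honest validator can also be in the minority. A real design would have to decide how to treat that case.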

The result is a system where honest behavior is financially rewarded, so a validator's own interest is aligned with the growth of the network. That is a strong foundation.

A More Human Way to Think About It

I like to think of Mira Network as a digital jury system for AI. Instead of trusting one voice, we ask many independent voices to review the claim. If most agree, the claim can be treated as more trustworthy. If there is disagreement, the system can flag it.

We are seeing more conversations globally about AI safety and regulation. Governments and researchers are worried about autonomous systems acting without proper oversight. Mira fits naturally into this conversation because it addresses the verification layer directly.

If AI is going to make decisions in hospitals, financial markets, or infrastructure systems, we need something more than hope. We need proof.

Where Verified AI Can Make a Real Difference

In healthcare, AI tools can help detect diseases or recommend treatments. But before a doctor relies on that output, it should be verified.

In finance, AI can analyze risk and suggest investment strategies. Verified outputs reduce the chance of misinformation affecting markets.

In legal environments, AI may summarize cases or interpret documents. Verified claims can prevent misinterpretation.

As AI moves closer to autonomy, verification becomes even more important. If machines are allowed to act independently, their decisions must be grounded in truth.

The Bigger Vision

What stands out to me is that Mira Network is not competing to build the smartest AI model. Instead, they are building the trust layer for all AI systems. That is a different mindset.

We are entering a time where artificial intelligence will influence billions of decisions every day. If even a small percentage of those decisions are wrong, the impact can be massive. Verification reduces that risk.

If AI continues to grow without reliable oversight, it becomes unpredictable. But if systems like Mira succeed, AI can become more dependable and transparent.

Why This Feels Important

I believe the future of AI is not only about intelligence. It is about responsibility. We are giving machines more power every year. With that power comes the need for accountability.

Mira Network represents a shift from blind trust to earned trust. It tells us that technology should not just be advanced. It should be verifiable.

It becomes clear that the real evolution of artificial intelligence is not just smarter models, but systems that prove their correctness before we rely on them. And in a world where digital information spreads instantly, verified truth may be the most valuable infrastructure of all.