I keep coming back to one ugly thought: a game should not need an ad engine to prove it has users. That is the part people keep trying to dress up with nice words like optimization, retention, machine learning, smart rewards. I do not buy the costume.

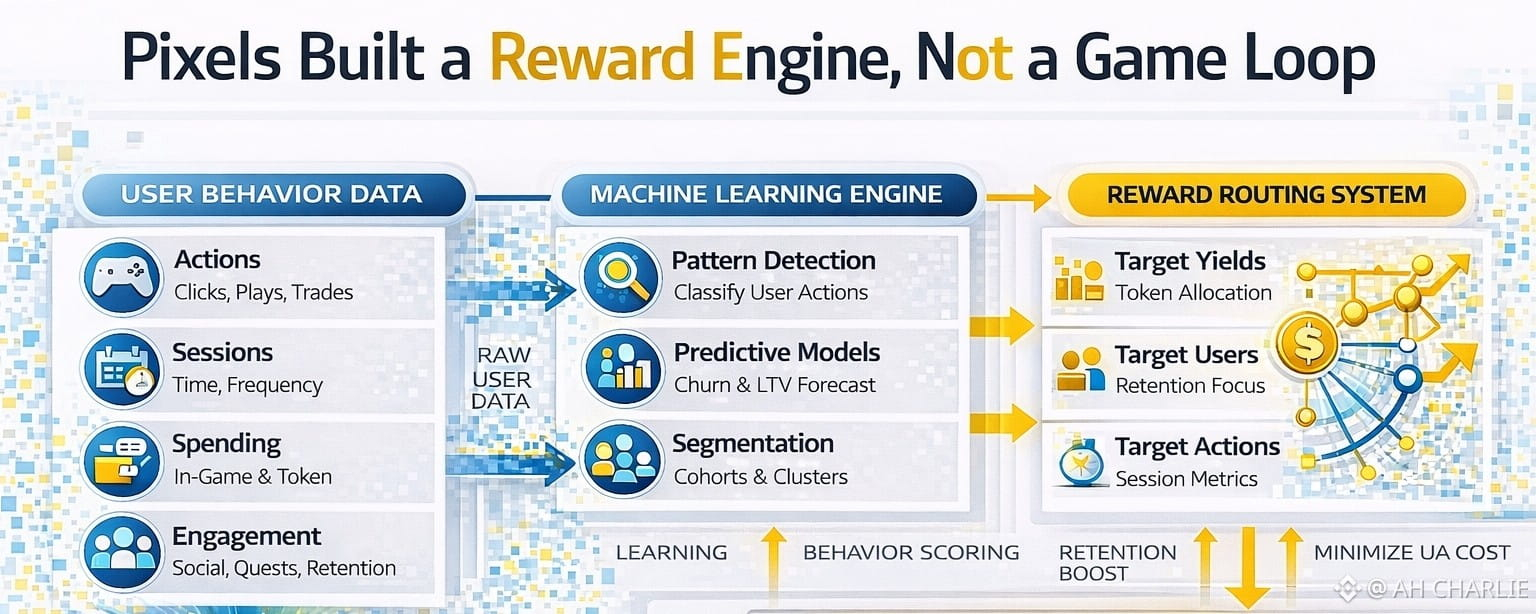

When a protocol says it tracks player actions at scale, scores them, and then routes token yield to the actions most likely to cut user acquisition (UA) cost and keep people from leaving, I stop seeing a game loop.

I start seeing a paid behavior loop. That matters, because incentive design tells you what the product really is when the pitch deck shuts up. If the system needs a constant stream of targeted rewards to keep activity alive, then the hard question is not whether the data stack is advanced.

The hard question is whether the core product creates enough real pull on its own. If the answer is no, then the token is not boosting a healthy economy. It is covering a weak one.

Look, the first fracture is simple: this model does not reward fun first; it rewards what the model can measure and buy. That sounds small. It is not.

Once the machine learns that some player actions improve retention stats, lower churn, or stretch session time, the token flow starts to chase those actions. Not the most creative actions. Not the most social actions. Not even the most useful actions for a healthy in-game economy. Just the actions that score well inside the model.

That is where incentive honesty starts to break. A real product gets demand because users want the thing. This setup can get activity because users want the payout path. Those are not the same.

One is demand. The other is trained compliance. And trained compliance is fragile, because it vanishes the second the bribe falls below the user’s pain threshold. I have seen this in crypto too many times. Teams call it engagement. The wallet calls it mercenary flow.

Actually, the second fracture is even worse: once the system is built to minimize UA cost, the user stops being the customer and becomes inventory. That is the ad-tech smell in plain sight.

In a normal game, user joy is the product. In this kind of loop, user behavior becomes the raw material the system mines, sorts, and steers. The machine is not asking, “What makes the world more alive?” It is asking, “What is the cheapest reward mix that keeps this cohort active?” That is a very different moral and economic frame.

The token stops being a medium of shared value and turns into a precision coupon. A tool for steering behavior at the lowest cost possible. Fine, that may work for a while. Plenty of ugly systems work for a while.

But it creates a hidden ceiling. When people learn the rules are not built to reward honest value creation, but to manage their behavior like a funnel, trust starts to rot. And once trust rots, every payout has to work harder.

The machine becomes more expensive to run right when the product should be standing on its own legs.

Wait, this is where the market risk gets nasty: a reward engine built around retention math tends to create false signals of demand.

On paper, activity can look strong. Users return. Actions rise. Maybe wallet counts stay healthy. Maybe session stats look sharp enough to impress lazy investors. But if that activity is mostly a response to targeted yield routing, then what you are looking at is subsidized motion, not clean demand.

The market usually figures this out late, because dashboards do not show motive well. They show behavior. So the protocol may look alive while its real economic center is hollow. That hollow core shows up later in bad ways.

Token emissions have to stay smart, then stay high, then stay precise, because the system taught users to follow reward gradients instead of genuine love for the product. The moment rewards get cut, mispriced, or delayed, the behavior can snap back hard.

Then everyone acts shocked that “community conviction” was weak. No. It was rented. That is the word people hate because it ruins the fairy tale. Rented demand breaks fast.

Okay, my main problem is not that the team uses data. My problem is what the data is there to solve. If the stated target is lower UA cost and stronger retention, then the protocol is openly saying the token exists in part as a user control layer. That should make any serious market observer pause.

Tokens work best when they are tied to real access, real ownership, real utility, real scarcity, or real network coordination. They work badly when they are used as a constant patch over weak native demand. In that setup, the token is less an asset and more a subsidy rail. The danger is not only price pressure. The deeper danger is design decay.

Teams start tuning the economy to satisfy the machine instead of the user. Users learn to optimize the payout map instead of the product. The entire system starts looking active while becoming less honest. And in markets, dishonest incentive maps always send the bill later.

I am not calling this smart growth. I am calling it a polished way to pay for loyalty without admitting that loyalty was not earned. Maybe that sounds harsh.

Fine. Harsh is useful when the model itself is hiding behind neat language. I do not care how clean the dashboard looks or how sharp the machine-learning stack sounds. If the engine needs constant behavioral rewards to hold attention, then I treat the whole thing like a fragile paid funnel with a token taped to the side.

Real value holds when rewards cool off. Real demand survives without being nudged every second by a scoring model. If this system cannot do that, then the market is not seeing product-market fit. It is seeing a well-run bribery layer. And that is the kind of design that looks efficient right up until the cash burn, trust loss, and user drift all arrive on the same day.

@Pixels #pixel $PIXEL #Web3Gaming