There was a moment when I was playing a Web3 game during a busy network period, and something very small started to bother me. I would tap an action, see it register, and then wait… not because it failed, but because the system took slightly longer than usual to respond. Nothing was technically wrong, yet the experience didn’t feel consistent. That small gap between action and confirmation stayed in my mind.

After seeing this happen a few times, what I noticed is that Web3 gaming issues are rarely about gameplay itself. They are about timing, coordination, and how systems behave when too many people interact at once. When activity increases, the system doesn’t stop; it adjusts. But that adjustment often shows up as uneven speed across users.

From a system perspective, it feels like a shared space where every action needs to be confirmed, recorded, and synced across a wider structure. Each player is doing something locally, but the system has to keep everything consistent globally. That’s where delays start appearing: not in what you do, but in how the system aligns what everyone is doing.

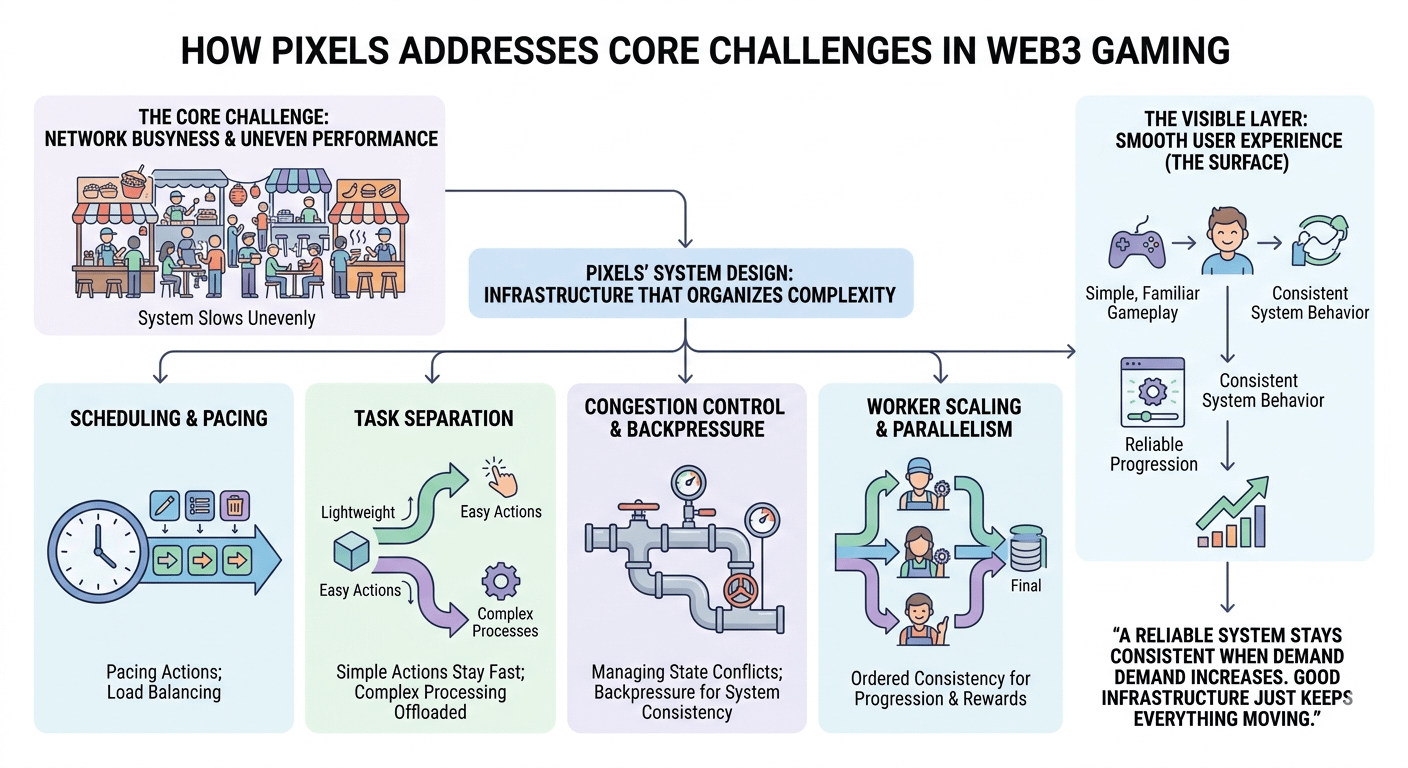

I often think about it like a busy food street where every vendor is serving customers at the same time. When it’s calm, everything feels smooth. Orders go out quickly and nothing feels delayed. But when it gets crowded, the system doesn’t break; it just slows unevenly. Some orders move fast, others take longer, even if they are similar.

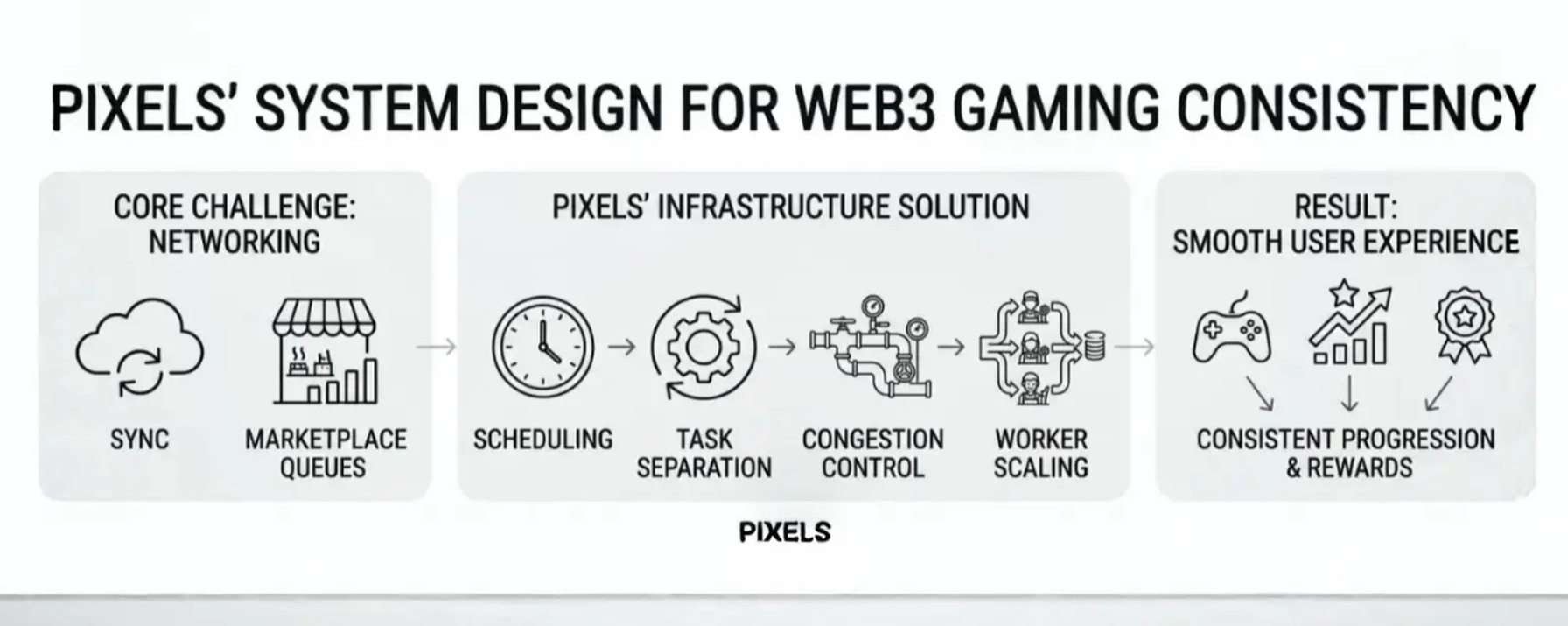

When I look at how @Pixels approaches this, what caught my attention is that it doesn’t try to hide this complexity. Instead, it feels like it organizes it. The experience on the surface is still simple and familiar, but underneath, there is structure shaping how actions move through the system.

What interests me more is how that structure quietly supports stability.

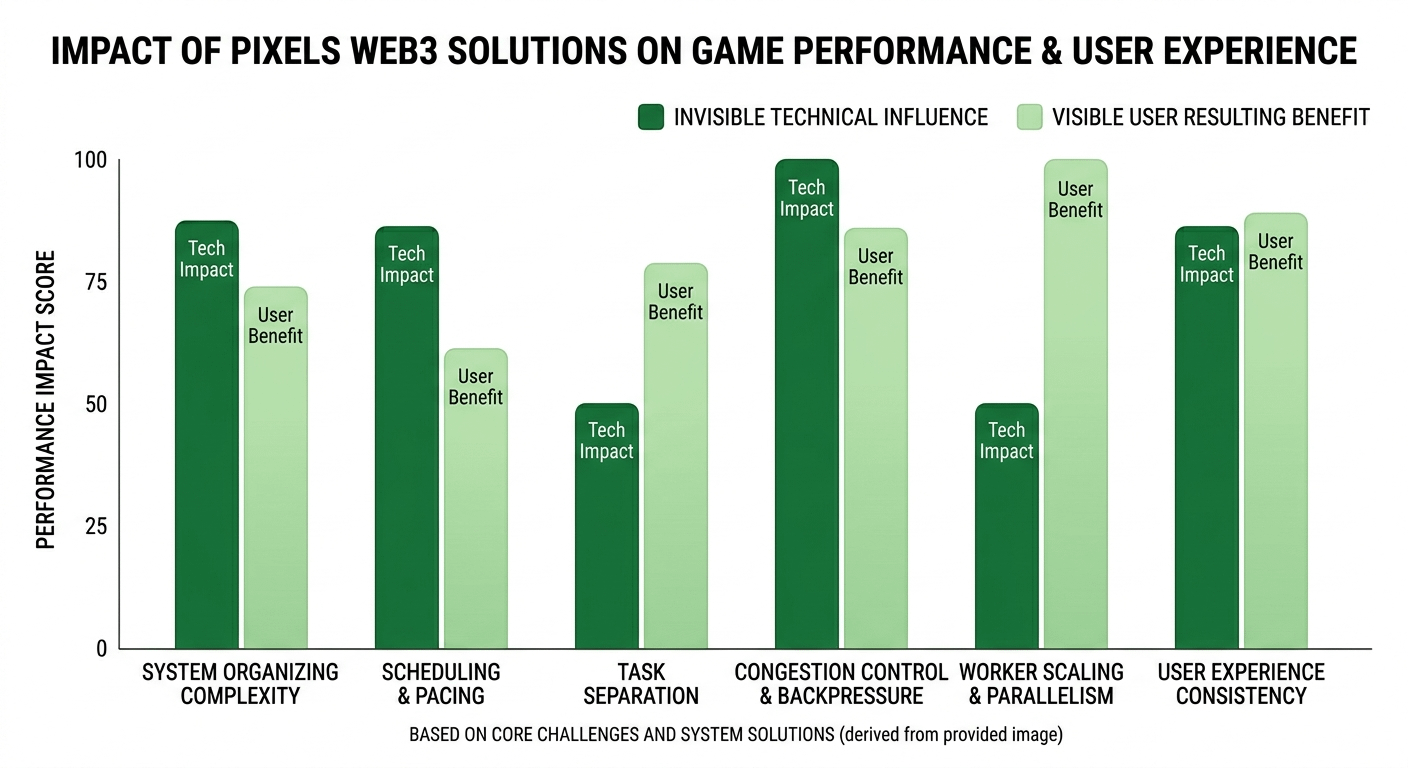

Scheduling seems to play a role in how actions are paced. Not everything is processed in the exact same way or at the same speed. Some interactions move quickly, while others are slightly delayed, not randomly, but in a way that suggests the system is balancing load.
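
To make the idea concrete for myself, here is a minimal sketch of priority-based scheduling. It is not how Pixels works internally (I don't know that); the class name, the action names, and the priority numbers are all my own assumptions for illustration.

```python
import heapq
import itertools


class ActionScheduler:
    """Toy scheduler: lower priority number is served first.

    Ties are broken by arrival order, so similar actions stay first-in, first-out.
    """

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()  # monotonically increasing tie-breaker

    def submit(self, priority, action):
        heapq.heappush(self._heap, (priority, next(self._arrival), action))

    def drain(self):
        """Return all queued actions in the order they would be processed."""
        order = []
        while self._heap:
            _, _, action = heapq.heappop(self._heap)
            order.append(action)
        return order


# Hypothetical actions: movement is cheap and urgent, reward minting can wait.
scheduler = ActionScheduler()
scheduler.submit(2, "mint_reward")
scheduler.submit(0, "move_player")
scheduler.submit(1, "harvest_crop")
scheduler.submit(0, "plant_seed")
processed = scheduler.drain()
```

The heavier action is submitted first but processed last, which is the kind of "delayed, but not randomly" behavior I mean.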

Task separation also feels important. Basic gameplay actions stay lightweight and responsive, while more complex processes seem to sit in separate flows. From a system perspective, this helps prevent everything from competing for the same resources at once.

Verification flow adds another layer. Some actions are confirmed immediately, while others pass through additional checks. In my experience watching systems, this is often how platforms prevent overload during high activity periods.
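
A rough sketch of how I picture this split between a fast path and a slower, checked path. Which actions need extra checks is purely my assumption here, as are all the names; it only illustrates the routing idea.

```python
from collections import deque

# Hypothetical rule: these action kinds go through additional checks.
NEEDS_EXTRA_CHECKS = {"trade", "withdraw"}

confirmed = []            # lightweight actions, confirmed right away
pending_review = deque()  # heavier actions, waiting in a separate flow


def route(action_kind, player):
    """Confirm simple actions immediately; park sensitive ones for later checks."""
    if action_kind in NEEDS_EXTRA_CHECKS:
        pending_review.append((action_kind, player))
        return "pending"
    confirmed.append((action_kind, player))
    return "confirmed"


statuses = [route(kind, "alice") for kind in ["plant", "trade", "harvest", "withdraw"]]
```

Planting and harvesting never wait behind the trade or the withdrawal, because those sit in their own queue.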

Then there is congestion control. Systems that scale well don’t try to push everything through instantly. They absorb pressure and slow certain parts when needed. That backpressure is not always visible, but it is what keeps the system from becoming unstable.
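
One simple way to picture backpressure is a bounded queue that refuses new work when it is full, instead of growing without limit. This is a sketch under my own assumptions (the queue size and function name are made up), not a description of any specific game's backend.

```python
import queue


def submit_with_backpressure(q, action):
    """Try to enqueue an action; return False instead of blocking when full."""
    try:
        q.put_nowait(action)
        return True
    except queue.Full:
        return False  # caller should slow down and retry later


# Assumed capacity of 3 pending actions, chosen only to keep the example small.
pending = queue.Queue(maxsize=3)
results = [submit_with_backpressure(pending, f"action-{i}") for i in range(5)]

# A worker finishes one action, which frees a slot for a retry.
finished = pending.get_nowait()
retry_ok = submit_with_backpressure(pending, "action-3")
```

The first three submissions are accepted and the next two are pushed back. Once a slot frees up, the retry goes through, so the pressure is absorbed rather than dropped silently.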

Worker scaling and workload distribution also matter, but only when activity is spread properly across different paths. If everything funnels into one point, scaling doesn’t help much. What matters is how evenly the system distributes work under pressure.
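
Here is a small sketch of that difference, assuming work is routed by hashing a player ID. The routing rule, the worker count, and the player IDs are my own illustrative choices.

```python
import hashlib
from collections import Counter


def pick_worker(player_id, num_workers):
    """Map a player to a worker using a stable hash (same input, same worker)."""
    digest = hashlib.sha256(player_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_workers


players = [f"player-{i}" for i in range(1000)]

# Spread across 4 workers: each one ends up with a share of the load.
load = Counter(pick_worker(p, 4) for p in players)

# Everything funneled into one point: adding workers would not help here.
funneled = Counter(0 for _ in players)
```

In the first case the four workers split the thousand players between them. In the second, one worker carries all of them no matter how many others exist.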

And then there’s ordering versus parallelism. Parallel flow keeps the experience responsive, but ordering is often needed to maintain consistency in progression and rewards. Balancing both is where system design becomes real.
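
A common compromise, sketched below under my own assumptions, is to keep each player's actions strictly ordered while letting different players run in parallel. The event format and the earn/spend rules are hypothetical.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def apply_in_order(actions):
    """Apply one player's actions sequentially and record the balance after each."""
    balance = 0
    history = []
    for kind, amount in actions:
        if kind == "earn":
            balance += amount
        elif kind == "spend" and balance >= amount:
            balance -= amount  # spends larger than the balance are ignored
        history.append(balance)
    return history


events = [
    ("alice", "earn", 5),
    ("bob", "earn", 2),
    ("alice", "spend", 3),
    ("bob", "spend", 5),  # rejected: bob only has 2 at this point
    ("alice", "earn", 1),
]

# Group by player, preserving each player's own arrival order.
per_player = defaultdict(list)
for player, kind, amount in events:
    per_player[player].append((kind, amount))

# Different players are processed in parallel; each player stays sequential.
with ThreadPoolExecutor(max_workers=4) as pool:
    futures = {p: pool.submit(apply_in_order, acts) for p, acts in per_player.items()}
    histories = {p: f.result() for p, f in futures.items()}
```

Alice's spend is only valid because her earn was applied first. That is the ordering part, and it does not force Bob to wait behind her.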

What stands out to me is that Pixels doesn’t feel like it removes these challenges. It feels like it manages them quietly in the background while keeping the surface experience simple. You still feel like you’re just playing, just farming, just progressing. But underneath, there is structure deciding how that progression moves.

And over time, that makes me think differently about “smooth experience” in Web3. It’s not about everything happening instantly. It’s about the system still making sense even when things get busy.

A reliable system is not the one that removes delay completely, but the one that stays consistent when demand increases. Good infrastructure doesn’t draw attention to itself. It just keeps everything moving in a way that still feels understandable.