I logged in expecting nothing unusual. It was the same loop I had run dozens of times: plant, harvest, craft, move. It was smooth, almost automatic. My hands knew what to do before I thought about it. For a while, that familiarity felt like progress. But somewhere in the middle of that routine, something started to feel slightly off. Not broken, just… too aligned. Too efficient in a way that didn’t quite feel like my own choice.

I might be wrong, but that was the moment I stopped seeing it as just a game.

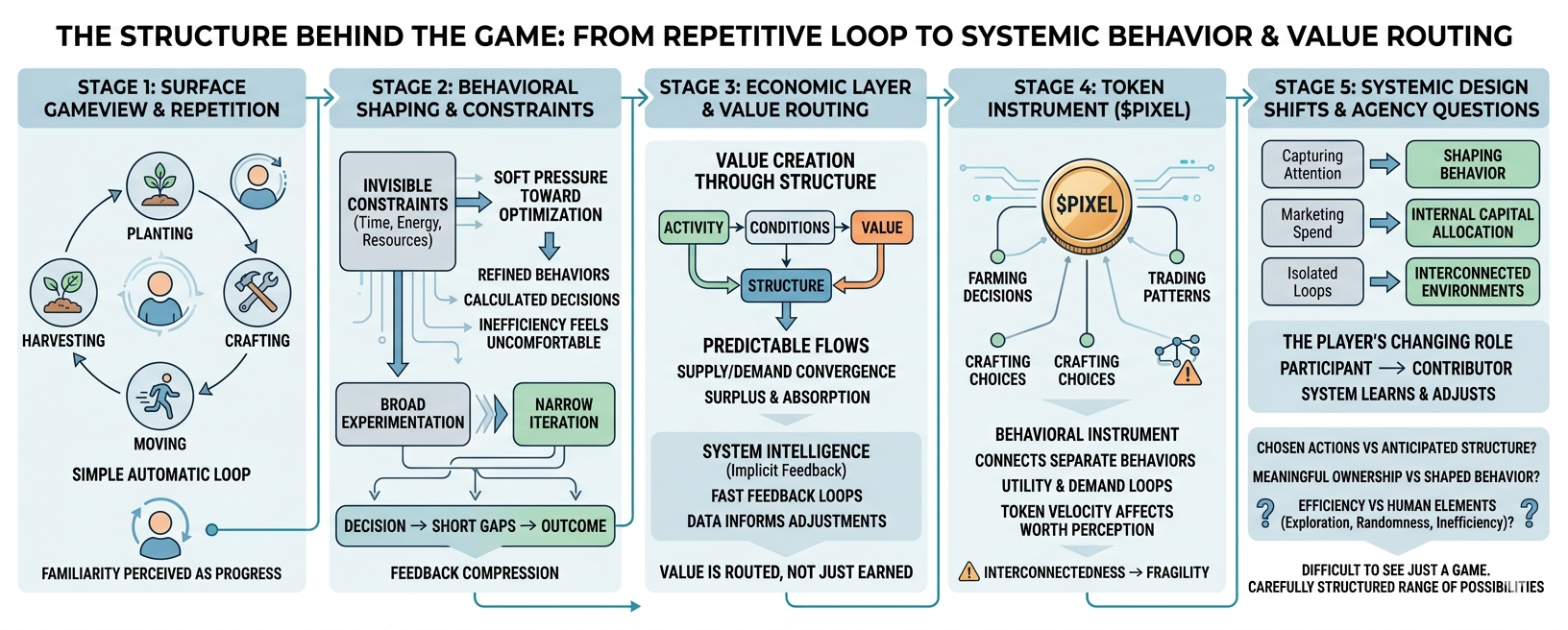

At the surface, the system is simple. You perform actions, you earn rewards, and you repeat. It’s clean, accessible, and easy to internalize. That simplicity is what draws people in. But the longer I stayed inside that loop, the more it started to feel like the simplicity wasn’t the point—it was the interface.

Underneath it, something else was happening.

What I began noticing wasn’t tied to any single feature. It was the way my behavior started adjusting without any explicit instruction. I wasn’t told to optimize my routes, but I did. I wasn’t forced to prioritize certain tasks, but over time, I naturally leaned toward them. The system never demanded efficiency—it just made inefficiency feel slightly uncomfortable.

That’s when the core idea started to take shape for me: systems don’t change loudly—they reshape behavior quietly.

And this one does it well.

The loop I thought I was controlling had subtle constraints built into it. Time, energy, resource availability—none of these were restrictive on their own. But together, they created a soft pressure toward optimization. Over time, repetition wasn’t just about familiarity. It became refinement. Each cycle slightly more efficient than the last, each decision a bit more calculated.

It didn’t feel like I was being pushed. It felt like I was learning.

But learning what, exactly?

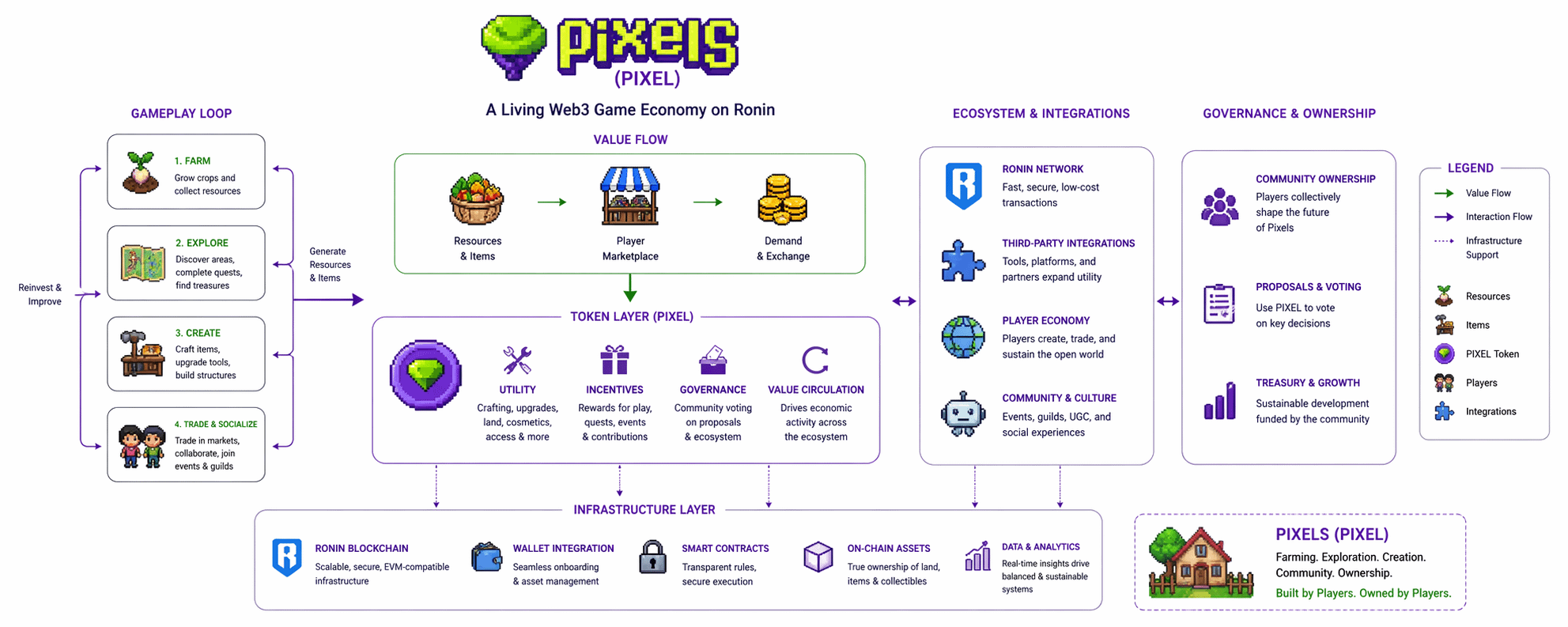

That question led me deeper into how value actually moves through the system. On the surface, it looks like value is created through activity. You play more, you earn more. But that framing started to feel incomplete. Activity alone doesn’t create value—it only generates the conditions for it.

The real shift happens in how that activity is structured.

Certain actions produce outputs that other players need. Some loops generate surplus, others absorb it. Over time, patterns emerge—not because the system tells players what to do, but because it quietly rewards certain behaviors more efficiently than others. What looks like free choice begins to converge into predictable flows.

It started to feel less like a game and more like a living system—one where value isn’t just earned, but routed.

And once I began seeing it that way, another layer became visible.

There’s a kind of system intelligence embedded here. Not in the sense of something conscious, but in how quickly feedback loops close. Actions produce data. That data informs adjustments—sometimes by the player, sometimes by the system itself. The gap between decision and outcome feels compressed.

You try something, and within a short cycle, you understand whether it works. Not through explicit feedback, but through subtle shifts in output, timing, or reward.

Over time, that compression changes how you behave.

You stop experimenting broadly and start iterating narrowly. You refine instead of explore. Efficiency becomes the default mindset, not because it’s required, but because the system makes it feel natural. It rewards clarity over curiosity, consistency over randomness.

I started noticing that my decisions were becoming less about what I wanted to do and more about what made sense to do.

That distinction is easy to miss, but it matters.

Because when behavior aligns too closely with system incentives, engagement can remain high even as agency quietly narrows. You’re still active, still progressing—but the range of meaningful choices starts to compress.

This is where the difference between engagement and value creation becomes important.

Not all activity contributes equally. Some actions sustain the system, others extract from it, and a few actually expand it. But from a player’s perspective, these distinctions aren’t always visible. Everything feels like progress.

In reality, value tends to concentrate around specific loops—places where supply meets demand in a way that generates consistent exchange. And the system, intentionally or not, nudges players toward those loops.

That’s where behavioral design turns into economic structure.

The token layer adds another dimension to this. At first glance, a token like $PIXEL looks like a reward mechanism—a way to compensate players for their time. But the longer I observed it, the more it started to feel like something else.

A behavioral instrument.

Its role isn’t just to distribute value, but to influence how value moves. Its utility across different parts of the system creates overlapping demand loops. Its velocity—how quickly it circulates—affects how players perceive its worth, not just in price terms, but in usefulness.
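To make "velocity" a little less abstract, here is a minimal sketch of how I think about it, borrowing the textbook velocity-of-money ratio: the volume that changed hands in a period divided by the average circulating supply. The `Period` type, the `token_velocity` function, and every number below are my own illustrative assumptions, not real $PIXEL data or anything the game exposes.

```python
from dataclasses import dataclass


@dataclass
class Period:
    """Hypothetical snapshot of token activity over one time window."""
    transfer_volume: float          # total tokens that changed hands in the window
    avg_circulating_supply: float   # average tokens in circulation in the window


def token_velocity(period: Period) -> float:
    """Return how many times the average token changed hands in the window."""
    if period.avg_circulating_supply <= 0:
        raise ValueError("avg_circulating_supply must be positive")
    return period.transfer_volume / period.avg_circulating_supply


# Made-up figures, purely for illustration.
slow = Period(transfer_volume=50_000, avg_circulating_supply=100_000)
fast = Period(transfer_volume=400_000, avg_circulating_supply=100_000)

print(token_velocity(slow))  # 0.5
print(token_velocity(fast))  # 4.0
```

The same supply can feel very different depending on that ratio. A token that mostly sits still reads as a score, while one that keeps moving between farming, crafting, and trading reads as something you actually use.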

When a token is integrated across multiple activities, it begins to connect otherwise separate behaviors. Farming decisions, crafting choices, trading patterns—they all start to link through a shared economic layer.

That interconnectedness can be powerful. But it also introduces fragility.

If one part of the system weakens, it doesn’t stay isolated. It propagates.

And that brings me to a more cautious perspective.

I might be overinterpreting, but systems like this tend to face pressure as they scale. What works in a contained environment doesn’t always translate cleanly when the player base grows or diversifies. Behavioral patterns that feel organic at a small scale can become rigid or imbalanced over time.

There’s also the question of integration quality. Expanding utility sounds good in theory, but if new layers don’t carry real demand, they risk diluting the system rather than strengthening it. Not all growth is additive. Some of it introduces noise.

And then there’s the variability of players themselves.

Not everyone engages with the same intent. Some optimize aggressively, others play casually. Some seek economic return, others just want a structured experience. Balancing these different behaviors within a single system is difficult. What feels rewarding for one group can feel restrictive for another.

That tension doesn’t always surface immediately. But it’s there.

Still, despite these uncertainties, I keep coming back to the same broader realization.

What I’m observing here isn’t just about a single game or token. It reflects a larger shift in how digital systems are being designed.

We’re moving from capturing attention to shaping behavior.

From spending on marketing to allocating that capital inside the system itself.

From isolated gameplay loops to interconnected economic environments.

And in that shift, the role of the player changes.

You’re no longer just participating. You’re contributing to a system that learns from you, adjusts around you, and, over time, subtly guides you.

That doesn’t necessarily mean control is lost. But it does raise questions about where influence actually sits.

Am I choosing my actions, or am I responding to a structure that has already anticipated them?

Is ownership meaningful if behavior is continuously shaped?

Does efficiency enhance the experience, or does it slowly replace something more human—like exploration, randomness, or even inefficiency itself?

I don’t have clear answers to these questions.

But I’ve started noticing that the more aligned I feel with the system, the less I question it. And the less I question it, the harder it becomes to see where my own intent ends and the system’s design begins.

Maybe that’s the point. Or maybe it’s just a byproduct of well-designed loops.

Either way, it leaves me with a quiet sense that what looks like freedom on the surface might actually be a carefully structured range of possibilities underneath.

And once you start seeing that, it’s difficult to go back to seeing just a game.