Understanding the Problem With Modern AI:

Over the past few years, AI has moved quickly from an experimental tool to something people use every day. Writers use it to draft ideas. Traders use it to scan markets. Businesses rely on it to automate tasks. It feels smart and fast. But many users notice a problem after using it long enough: sometimes AI sounds completely sure of something that is not true.

This happens because AI does not think the way humans do. It does not check facts against the world; it predicts words and outcomes based on patterns learned from its training data. When those patterns are weak or ambiguous, the system can produce answers that sound convincing but are inaccurate. These errors are often called hallucinations. Bias is a related issue: imbalances in the training data can skew responses in ways that are not always balanced.

Even the best models cannot eliminate these mistakes entirely. Tuning a model for precision can make it less flexible, while broadening it can introduce more inconsistency. This tradeoff puts a ceiling on how reliable any single AI system can be, especially in areas where accuracy matters most.

Why One AI Model Is Not Enough:

Mira starts from a different assumption. Instead of trying to build one perfect model, it accepts that no single system can solve the reliability problem alone. Every AI model is trained differently, and each carries its own strengths and blind spots.

In real life, we already make important decisions this way. Doctors consult other doctors. Researchers rely on peer review. Critical conclusions rarely rest on a single voice. Mira applies the same logic to artificial intelligence, letting multiple systems evaluate the same information instead of trusting a single output.

How Mira Turns AI Outputs Into Verifiable Information:

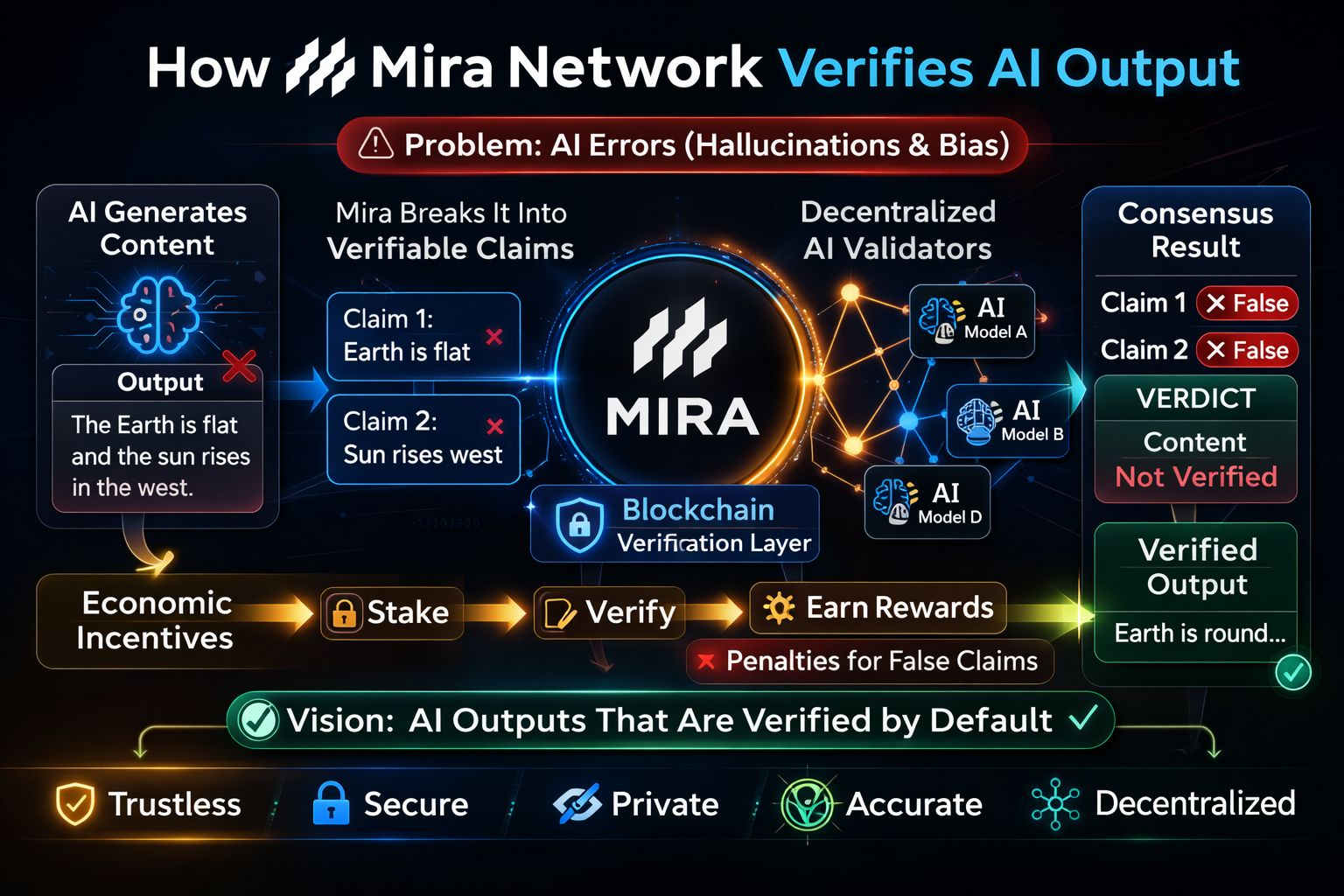

The network adds a verification step between generation and use. When an AI produces a piece of content, Mira does not treat it as a single answer. It breaks the content into smaller claims that can be checked individually, and each claim is distributed to independent validators running different models.
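A minimal sketch of the decomposition-and-distribution step, assuming that each sentence counts as one claim and that validators are picked round-robin from a fixed pool. The names `Claim`, `split_into_claims`, `assign_validators`, and the validator IDs are hypothetical illustrations, not part of any published Mira API; a production system would use far more careful claim extraction than sentence splitting.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class Claim:
    claim_id: int
    text: str
    validators: List[str] = field(default_factory=list)


def split_into_claims(content: str) -> List[str]:
    """Naive decomposition (assumption): treat each sentence as one checkable claim."""
    sentences = re.split(r"(?<=[.!?])\s+", content.strip())
    return [s for s in sentences if s]


def assign_validators(claims: List[str], pool: List[str], per_claim: int) -> List[Claim]:
    """Send each claim to `per_claim` distinct validators, rotating through the pool."""
    if per_claim > len(pool):
        raise ValueError("not enough validators for the requested redundancy")
    assigned = []
    for i, text in enumerate(claims):
        # Start at a different offset for each claim so load spreads across the pool.
        chosen = [pool[(i + k) % len(pool)] for k in range(per_claim)]
        assigned.append(Claim(i, text, chosen))
    return assigned


content = "Paris is the capital of France. Water boils at 100 C at sea level."
pool = ["model-a", "model-b", "model-c", "model-d"]  # hypothetical validator IDs
for claim in assign_validators(split_into_claims(content), pool, per_claim=3):
    print(claim.claim_id, claim.validators, claim.text)
```

Sending every claim to several validators is what makes the later agreement check meaningful: a single model's blind spot affects one vote, not the whole result.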

These validators review the same claim and submit their conclusions. The system then looks for agreement across the network: if enough participants reach the same result, the claim is considered verified. This turns a probabilistic output into one that has been independently tested.
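The agreement check can be sketched as a supermajority tally. The two-thirds threshold and the function name `tally` are assumptions for illustration; the post does not say what counts as "enough participants".

```python
from collections import Counter
from typing import Dict, Optional


def tally(verdicts: Dict[str, str], threshold: float = 2 / 3) -> Optional[str]:
    """Return the verdict backed by at least `threshold` of validators, or None."""
    if not verdicts:
        return None
    # most_common(1) gives the single most frequent verdict and its count.
    verdict, count = Counter(verdicts.values()).most_common(1)[0]
    return verdict if count / len(verdicts) >= threshold else None


print(tally({"model-a": "true", "model-b": "true", "model-c": "false"}))       # true
print(tally({"model-a": "true", "model-b": "false", "model-c": "uncertain"}))  # None
```

Returning `None` rather than the plurality verdict keeps an unresolved claim distinct from a verified one, which matters when the output feeds a decision that should not proceed on a split vote.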

The outcome is recorded on a blockchain so it cannot be changed later. That record acts like a receipt, showing how verification happened and which participants agreed. Trust comes from transparency rather than authority.
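To show why a chained record resists later edits, here is a simplified hash-chained log: each entry's SHA-256 hash covers both the record and the previous entry's hash, so altering any past record breaks every link after it. `VerificationLog` and `record_hash` are hypothetical names, and this is a single-machine illustration, not Mira's on-chain format.

```python
import hashlib
import json
from typing import Dict, List, Tuple

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(prev_hash: str, record: Dict) -> str:
    """Hash the record (with stable key order) together with the previous hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode()).hexdigest()


class VerificationLog:
    def __init__(self) -> None:
        self.entries: List[Tuple[Dict, str]] = []  # (record, hash) pairs

    def append(self, record: Dict) -> str:
        prev = self.entries[-1][1] if self.entries else GENESIS
        h = record_hash(prev, record)
        self.entries.append((record, h))
        return h

    def is_intact(self) -> bool:
        """Recompute every hash; any edited record makes its stored hash mismatch."""
        prev = GENESIS
        for record, h in self.entries:
            if record_hash(prev, record) != h:
                return False
            prev = h
        return True


log = VerificationLog()
log.append({"claim_id": 0, "verdict": "true", "agreed": ["model-a", "model-b"]})
log.append({"claim_id": 1, "verdict": "false", "agreed": ["model-b", "model-c", "model-d"]})
print(log.is_intact())  # True
log.entries[0][0]["verdict"] = "false"  # tamper with an old record
print(log.is_intact())  # False
```

On its own, a hash chain only detects tampering; someone holding the only copy could recompute every hash. A blockchain adds replication and consensus across many nodes, which is what makes rewriting the history impractical.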

Market Context and Current Price Activity:

On 27 February 2026, Mira is trading around 0.095, within an observed daily range of 0.0857 to 0.1246. The price movement may reflect growing attention toward projects that focus on AI reliability rather than just faster computation. As AI adoption expands, investors are beginning to watch infrastructure layers that aim to make AI dependable in real-world settings.

Incentives That Encourage Honest Validation:

Technology alone does not secure a system, so Mira also uses economic rules to guide behavior. Participants must stake value to take part in verification, and they earn rewards when their work aligns with consensus. If they attempt to manipulate results, they risk losing that stake.
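A toy settlement round makes the reward-and-slash rule concrete. The fixed reward, the 10% slash, the integer stake units, and the names `Validator` and `settle` are all assumptions for illustration; the post does not give Mira's actual parameters or penalty conditions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Validator:
    name: str
    stake: int  # staked units (hypothetical integer denomination)


def settle(validators: List[Validator], votes: Dict[str, str], consensus_verdict: str,
           reward: int, slash_percent: int) -> None:
    """Reward validators who matched consensus; slash a percentage of the rest's stake."""
    for v in validators:
        if votes[v.name] == consensus_verdict:
            v.stake += reward
        else:
            # Simplification: any disagreement with consensus is penalized.
            v.stake -= v.stake * slash_percent // 100


validators = [Validator("model-a", 100), Validator("model-b", 100), Validator("model-c", 100)]
votes = {"model-a": "true", "model-b": "true", "model-c": "false"}
settle(validators, votes, consensus_verdict="true", reward=5, slash_percent=10)
print([(v.name, v.stake) for v in validators])
# [('model-a', 105), ('model-b', 105), ('model-c', 90)]
```

Penalizing every single disagreement is harsher than a real network would likely be, since honest models can legitimately differ on hard claims; a more forgiving design might slash only repeated or statistically suspicious deviation.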

This structure blends elements of Proof of Work and Proof of Stake, but the purpose is practical rather than theoretical: honest participation becomes the rational choice because dishonesty carries a clear cost.
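The "clear cost" argument can be written as a back-of-the-envelope comparison. Assume, for illustration only, a reward $R$ for matching consensus, a stake $S$ lost when a manipulation is caught, a probability $q$ of being caught, and an outside gain $B$ from manipulating a result. If an honest vote always matches consensus:

$$
\begin{aligned}
\text{honest payoff} &= R \\
\text{dishonest payoff} &= B + (1-q)\,R - q\,S \\
\text{honest} > \text{dishonest} &\iff q\,(R + S) > B
\end{aligned}
$$

For example, with $R = 5$, $S = 100$, and $q = 0.5$, manipulation only pays if it yields more than $0.5 \times 105 = 52.5$ units. Raising the stake or the detection rate raises that bar, which is the lever staking provides. The assumption that honest votes match consensus only holds while most validators are honest.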

Privacy is also built into the design. Because content is divided into fragments before it is sent to validators, no single node sees the full content. That makes it possible to verify sensitive material without exposing it in its entirety.
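A minimal sketch of the fragmentation idea, assuming fragments are dealt round-robin across nodes. `distribute` and `any_node_sees_all` are hypothetical helpers, and the fragment and node names are placeholders.

```python
from collections import defaultdict
from typing import Dict, List


def distribute(fragments: List[str], nodes: List[str]) -> Dict[str, List[int]]:
    """Assign fragment indices to nodes round-robin."""
    if len(nodes) < 2:
        raise ValueError("need at least two nodes so no single node receives everything")
    plan: Dict[str, List[int]] = defaultdict(list)
    for i in range(len(fragments)):
        plan[nodes[i % len(nodes)]].append(i)
    return dict(plan)


def any_node_sees_all(plan: Dict[str, List[int]], n_fragments: int) -> bool:
    """True if some node was handed every fragment (the case the design tries to avoid)."""
    return any(len(indices) == n_fragments for indices in plan.values())


fragments = ["claim A", "claim B", "claim C", "claim D"]
plan = distribute(fragments, ["node-1", "node-2", "node-3"])
print(plan)                                     # {'node-1': [0, 3], 'node-2': [1], 'node-3': [2]}
print(any_node_sees_all(plan, len(fragments)))  # False
```

Two limits are worth keeping in mind. Content that yields only one fragment still lands whole on one node, and splitting alone does not stop several colluding nodes from pooling what they received. Fragmentation reduces exposure; it does not guarantee confidentiality by itself.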

Where This Model Can Be Used:

The need for dependable AI is not limited to one sector. Financial systems require accurate analysis. Healthcare tools must avoid errors. Legal workflows depend on precise information. Autonomous technologies cannot function safely without strong validation.

Mira positions itself as a supporting layer for these environments. It does not replace AI models. It checks them. The goal is to make AI outputs usable in places where mistakes are not acceptable.

Conclusion:

AI has reached an important stage. It can generate ideas faster than ever, but reliability still determines whether those ideas can be trusted. Mira aims to close that gap by making verification a built-in process rather than an afterthought.

By combining decentralized review, cryptographic records, and incentive-driven participation, the network tries to shift AI from impressive to dependable. As conversations around artificial intelligence mature, the question is no longer only what AI can create. The real question is what can be proven correct before people rely on it. Mira is built around answering that question.

@Mira - Trust Layer of AI #Mira $MIRA