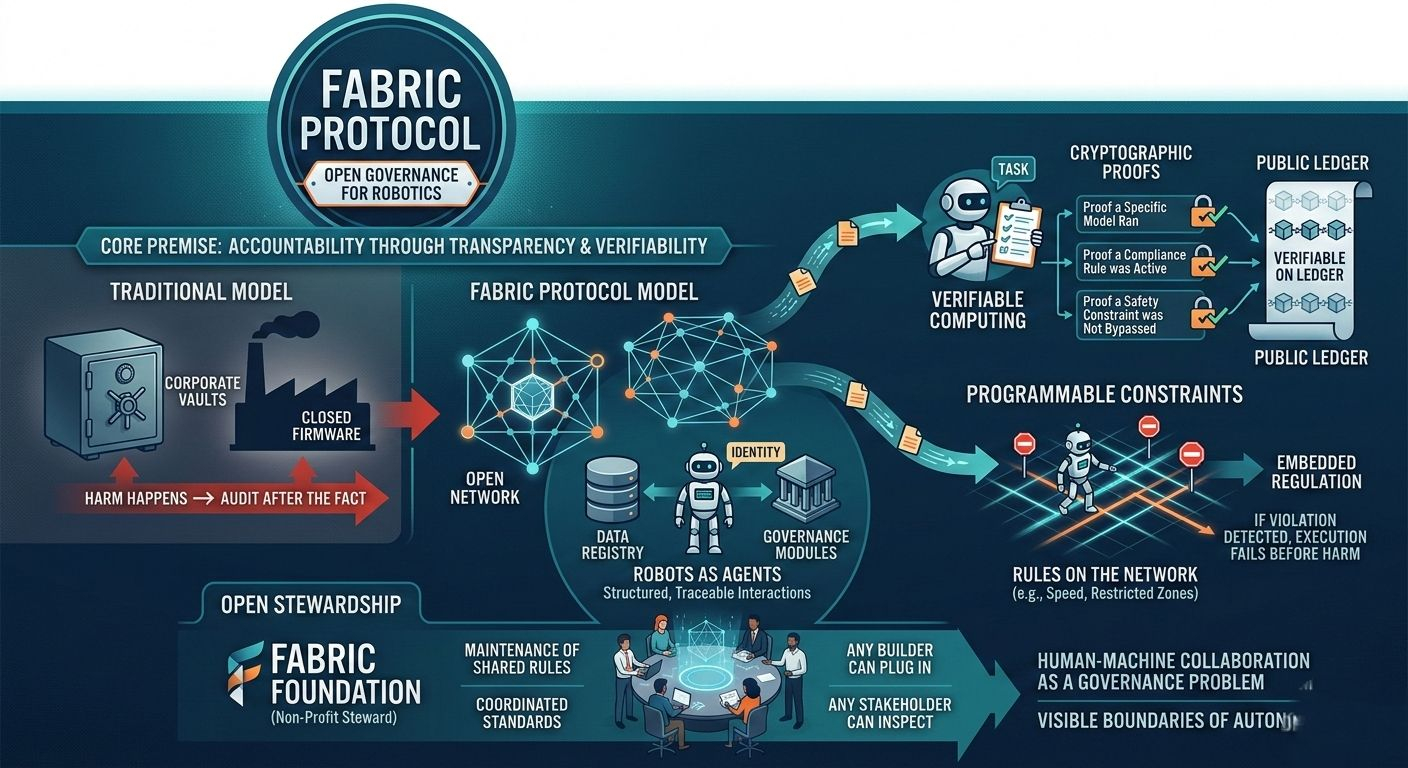

Fabric Protocol starts from a different premise: if robots are going to operate in shared spaces, their decision logic can’t be sealed in corporate vaults. It has to live in an open system where computation can be verified, policies can be inspected, and updates can be governed rather than imposed.

This isn’t about making robots smarter. It’s about making them accountable.

The protocol is backed by the non-profit Fabric Foundation, which acts as steward rather than ruler. The distinction matters. Stewardship implies maintenance of shared rules, not command over machines. The foundation helps coordinate standards and long-term evolution, but the network itself is designed to be global and open: any builder can plug into it, and any stakeholder can examine its logic.

At the core is verifiable computing. Not a buzzword—an architectural choice. When a robot performs a task under Fabric, the computation behind that task can produce proofs. Proof that a specific model ran. Proof that a compliance rule was active. Proof that a safety constraint wasn’t bypassed. Instead of trusting a manufacturer’s claim, you can check cryptographic evidence anchored to a public ledger.

That small shift changes the power dynamic.
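To make "checking evidence instead of trusting a claim" concrete, here is a minimal sketch. It is not Fabric's API: the `ledger` dictionary, the record fields, and the `anchor`/`verify` helpers are all hypothetical. It also shows only a hash commitment, which proves a record was not altered after anchoring; proving that a computation actually ran as claimed would need something stronger, such as a zero-knowledge proof or hardware attestation.

```python
import hashlib
import json

def digest(record: dict) -> str:
    """Deterministic SHA-256 digest of a JSON-serializable record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

# Hypothetical stand-in for a public ledger: task id -> anchored digest.
ledger: dict = {}

def anchor(task_id: str, record: dict) -> None:
    """Publish the digest of an execution record under a task id."""
    ledger[task_id] = digest(record)

def verify(task_id: str, claimed: dict) -> bool:
    """Check a claimed execution record against the anchored digest."""
    return ledger.get(task_id) == digest(claimed)

# Illustrative record: which model ran and which rules were active.
record = {
    "model_sha256": hashlib.sha256(b"model-weights-v1").hexdigest(),
    "active_rules": ["max_speed", "human_proximity"],
    "safety_bypassed": False,
}
anchor("task-001", record)

honest_ok = verify("task-001", record)

# A record edited after the fact no longer matches the anchored digest.
tampered = dict(record, safety_bypassed=True)
tampered_ok = verify("task-001", tampered)
```

Anyone holding the claimed record can recompute the digest and compare it with the anchored one, without relying on the manufacturer's word.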

Consider a logistics robot in a warehouse. Traditionally, its behavior is defined by internal firmware and cloud-based updates. If something goes wrong, you audit logs after the fact. Under Fabric’s design, rules about speed limits, restricted zones, or human proximity thresholds can exist as programmable constraints on the network itself. If an instruction violates those constraints, execution fails before harm happens.

Regulation becomes embedded rather than reactive.
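The warehouse example can be sketched as a pre-execution check. Everything here is an assumption made for illustration: the `Instruction` fields, the threshold values, and the zone name are hypothetical, and in Fabric's design the constraints would live on the network rather than in local constants.

```python
from dataclasses import dataclass

class ConstraintViolation(Exception):
    """Raised when an instruction breaks an active constraint."""

@dataclass(frozen=True)
class Instruction:
    speed_mps: float        # requested speed, metres per second
    target_zone: str        # zone the robot is asked to enter
    nearest_human_m: float  # distance to the closest detected human

# Hypothetical constraint values for one warehouse.
MAX_SPEED_MPS = 1.5
RESTRICTED_ZONES = frozenset({"loading-dock-b"})
MIN_HUMAN_DISTANCE_M = 2.0

def check(instr: Instruction) -> list:
    """Return a description of every constraint the instruction violates."""
    violations = []
    if instr.speed_mps > MAX_SPEED_MPS:
        violations.append("speed limit exceeded")
    if instr.target_zone in RESTRICTED_ZONES:
        violations.append("restricted zone")
    if instr.nearest_human_m < MIN_HUMAN_DISTANCE_M:
        violations.append("human proximity threshold breached")
    return violations

def execute(instr: Instruction) -> str:
    """Run the instruction only if it passes every constraint."""
    violations = check(instr)
    if violations:
        # Fail before any motion command is issued.
        raise ConstraintViolation("; ".join(violations))
    return f"moving to {instr.target_zone} at {instr.speed_mps} m/s"
```

A compliant instruction such as `execute(Instruction(1.0, "aisle-4", 5.0))` goes through, while `execute(Instruction(2.5, "loading-dock-b", 0.5))` raises `ConstraintViolation` listing all three violations before anything moves.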

Fabric also treats robots as agents, not appliances. An agent has identity. It can access data feeds, request compute resources, and operate within economic frameworks. Through modular infrastructure—identity layers, data registries, governance modules—robots plug into a coordinated environment where every interaction is structured and traceable.

Data provenance stops being a guessing game. Compute providers can attach execution proofs. Policy updates can pass through defined governance processes instead of silent over-the-air pushes. A city deploying service robots could inspect the exact compliance modules active within its jurisdiction. An insurance provider could require specific safety proofs before underwriting a robotic fleet.

Transparency becomes operational, not rhetorical.
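A governed policy update, as opposed to a silent over-the-air push, might look something like the sketch below. The `PolicyRegistry` class, the approval threshold, and the module names are hypothetical and are not taken from Fabric; the point is only that a new module version becomes active after enough named parties approve it, and that an outside party such as an insurer can inspect what is active.

```python
from dataclasses import dataclass, field

@dataclass
class PolicyRegistry:
    voters: frozenset   # parties allowed to approve updates
    threshold: int      # approvals needed to activate an update
    active: dict = field(default_factory=dict)   # module -> active version
    pending: dict = field(default_factory=dict)  # (module, version) -> approvers

    def propose(self, module: str, version: str) -> None:
        """Register a proposed update; it is not active yet."""
        self.pending.setdefault((module, version), set())

    def approve(self, voter: str, module: str, version: str) -> bool:
        """Record an approval; return True once the update becomes active."""
        if voter not in self.voters:
            raise PermissionError(f"{voter} may not vote")
        key = (module, version)
        if key not in self.pending:
            raise KeyError(f"no pending proposal for {module} {version}")
        self.pending[key].add(voter)  # a set, so repeat votes count once
        if len(self.pending[key]) >= self.threshold:
            self.active[module] = version
            del self.pending[key]
            return True
        return False

    def satisfies(self, required: dict) -> bool:
        """Check that every required module is active at the required version."""
        return all(self.active.get(m) == v for m, v in required.items())

registry = PolicyRegistry(voters=frozenset({"city", "operator", "auditor"}), threshold=2)
registry.propose("human_proximity", "v2")
first = registry.approve("city", "human_proximity", "v2")
second = registry.approve("auditor", "human_proximity", "v2")

# An insurer's precondition, expressed as required active modules.
insurable = registry.satisfies({"human_proximity": "v2"})
```

Here the first approval leaves the update pending and the second activates it, after which the insurer's check passes.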

There’s also a subtle cultural shift embedded in the design. Fabric assumes that human-machine collaboration isn’t a marketing phrase; it’s a governance problem. Humans don’t simply supervise robots. They participate in shaping the rule layers those robots obey. Through programmable governance rails, communities and institutions can influence how robotic systems evolve.

That creates friction. It slows unilateral control. It introduces debate.

But friction is often what keeps systems honest.

As general-purpose robots move beyond factories into hospitals, streets, and homes, the question won’t be whether they can navigate stairs or fold laundry. It will be who defines the boundaries of their autonomy—and whether those boundaries are visible.

Fabric’s answer is structural. Build robots on shared, verifiable rails. Coordinate data, computation, and regulation through a public ledger. Make governance modular. Let standards evolve in the open.
