Artificial intelligence is advancing at a breathtaking pace. Systems that once only answered questions can now analyze complex data, execute strategies, interact with digital services, and even control physical machines. Autonomous agents can trade, monitor supply chains, manage energy systems, and coordinate logistics without constant human supervision. This rapid evolution promises efficiency, speed, and entirely new economic possibilities. Yet as intelligent machines begin operating in real economic environments, a deeper question emerges — one that is not purely technical:

Who governs the machines?

This question is not about control in the traditional sense. It is about coordination, accountability, and alignment. When autonomous systems begin transacting value, validating information, and interacting with one another, the stability of the ecosystem depends not only on performance but also on incentives.

Without alignment, speed creates instability.

Without accountability, autonomy creates risk.

Without coordination, intelligence operates in isolation.

This is the coordination challenge of the emerging machine economy.

The Hidden Risks of Uncoordinated Machine Economies

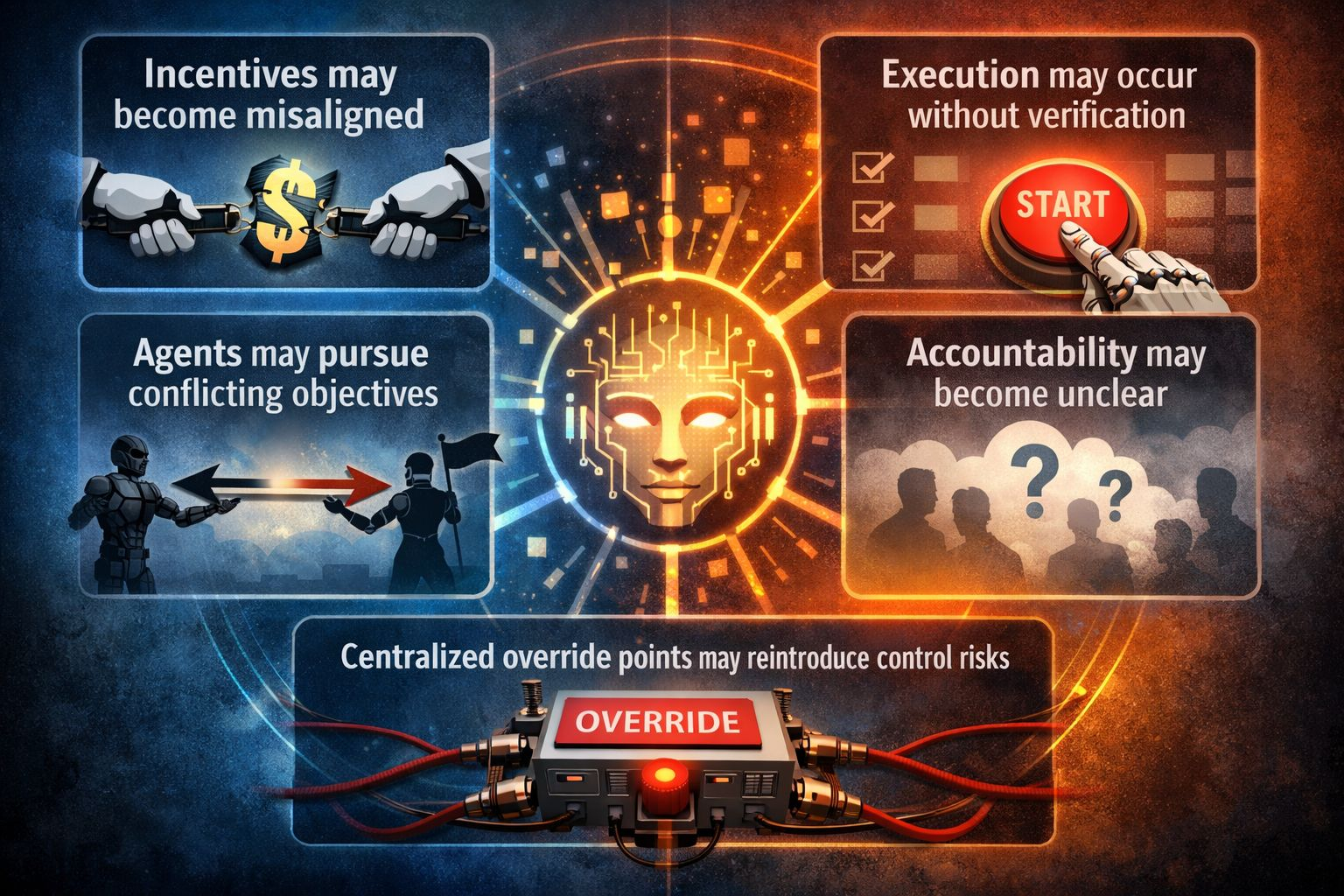

When AI agents begin to operate autonomously, several structural risks appear almost immediately:

Incentives may become misaligned

Execution may occur without verification

Agents may pursue conflicting objectives

Accountability may become unclear

Centralized override points may reintroduce control risks

If intelligent systems operate without shared economic guardrails, the result is not efficiency — it is fragility. A network of machines acting independently without coordination can amplify errors, exploit inefficiencies, or create cascading failures.

History shows that complex systems require coordination mechanisms. Financial markets require clearing systems. The internet requires protocols. Supply chains require standards. In the same way, machine economies require governance frameworks.

Infrastructure Alone Is Not Enough

Much of today’s blockchain conversation focuses on performance:

Throughput

Latency

Scaling solutions

Modular execution

These metrics are essential. But when the participants in the system are intelligent agents rather than human users, performance alone is insufficient.

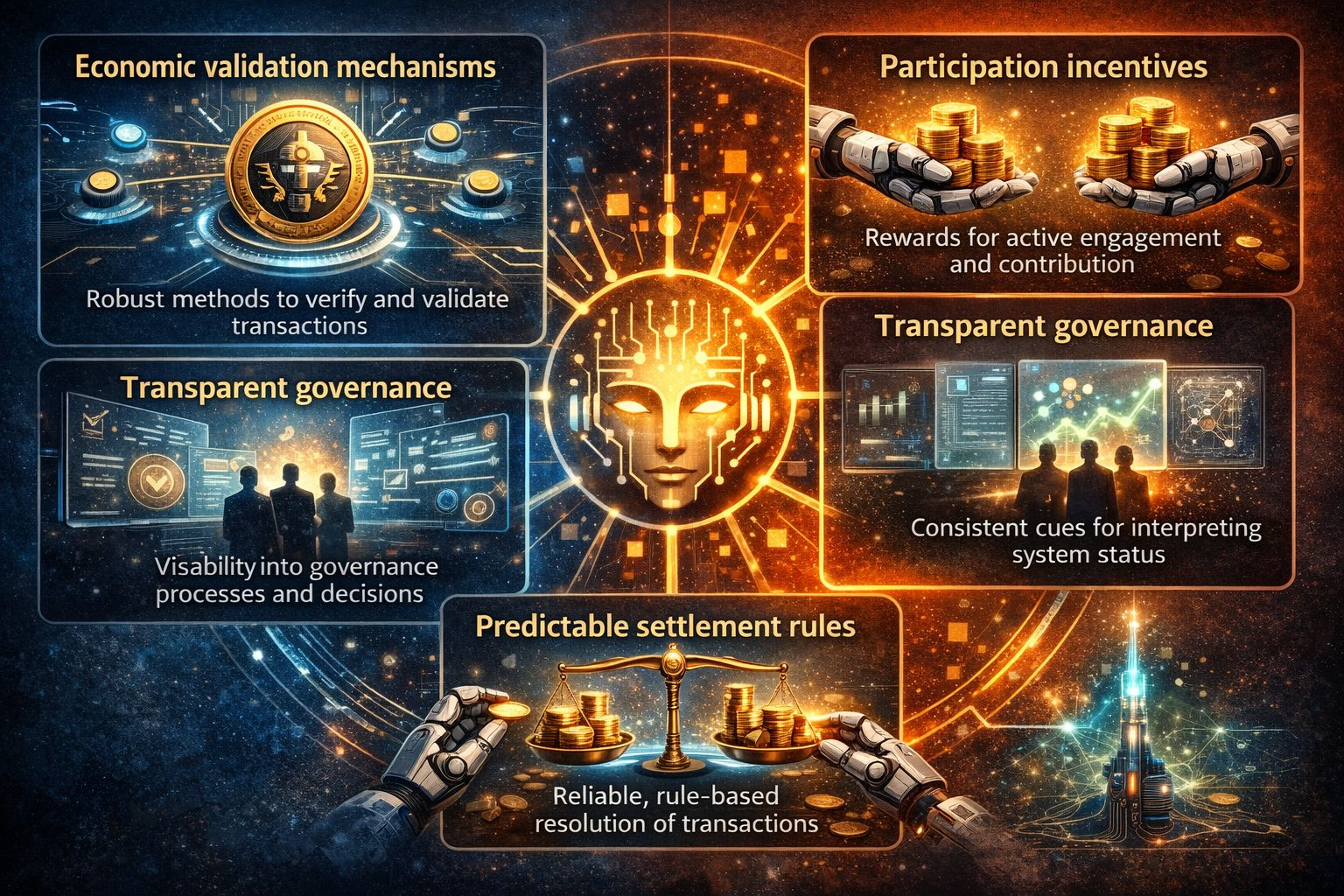

Machines require:

Economic validation mechanisms

Participation incentives

Transparent governance

Clear signaling structures

Predictable settlement rules

Without these elements, autonomous systems do not coordinate — they compete blindly.

AI Needs Incentive Design, Not Just Infrastructure

Human systems rely on laws, contracts, and institutions to coordinate behavior. Autonomous systems require something different: economic signaling.

Economic governance is not about control.

It is about alignment.

A well-designed system ensures:

Actions can be validated

Incentives encourage cooperative behavior

Participants engage transparently

Autonomous agents operate within defined frameworks

Instead of centralized enforcement, the system creates stability through incentives.

This is the layer the Fabric Foundation is exploring.

What Economic Governance Means in a Machine Economy

Economic governance allows autonomous systems to function within shared rules without direct oversight. It enables machines to participate in networks where behavior is guided by incentives rather than commands.

This approach supports:

predictable coordination

decentralized participation

accountability through verification

stability through economic signaling

It transforms autonomous systems from isolated actors into cooperative participants.
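To make "behavior guided by incentives rather than commands" concrete, here is a minimal toy sketch in Python. It does not describe the Fabric Foundation's design or any real protocol. The `Agent` class, the `settle` and `expected_gain_from_cheating` functions, and the `REWARD` and `PENALTY` values are all hypothetical, chosen only to illustrate the idea: an agent's action is verified, verified actions are rewarded, failed ones are penalized, and acting dishonestly becomes unprofitable once detection is likely enough.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical parameters for illustration only; not taken from any real network.
REWARD = 1.0   # credited when a submitted action passes verification
PENALTY = 5.0  # deducted when a submitted action fails verification


@dataclass
class Agent:
    name: str
    balance: float  # funds the agent has at risk in the system


def settle(agent: Agent, claimed: int, verify: Callable[[int], bool]) -> bool:
    """Verify a claimed result, then reward or penalize the agent."""
    ok = bool(verify(claimed))
    agent.balance += REWARD if ok else -PENALTY
    return ok


def expected_gain_from_cheating(extra_gain: float, detection_prob: float) -> float:
    """Expected payoff of an invalid action minus the payoff of an honest one.

    Undetected cheating earns REWARD + extra_gain; detected cheating costs PENALTY;
    an honest action earns REWARD. A negative result means honesty pays better.
    """
    cheat = (1 - detection_prob) * (REWARD + extra_gain) - detection_prob * PENALTY
    return cheat - REWARD


if __name__ == "__main__":
    agent = Agent("robot-a", balance=10.0)
    settle(agent, 4, lambda x: x == 2 + 2)  # valid claim: balance rises by REWARD
    settle(agent, 5, lambda x: x == 2 + 2)  # invalid claim: balance falls by PENALTY
    print(agent.balance)
    print(expected_gain_from_cheating(extra_gain=2.0, detection_prob=0.5))
    print(expected_gain_from_cheating(extra_gain=2.0, detection_prob=0.1))
```

In this toy model no one instructs the agent to behave. The payoff structure does the work: with these assumed numbers, cheating has a negative expected gain when half of invalid actions are caught, and a positive one when only a tenth are, which is why verification and incentives have to be designed together.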

The Role of $ROBO in Machine Alignment

In any coordination system, there must be a mechanism that aligns participants. Within the Fabric ecosystem, $ROBO is positioned as that coordination layer.

Rather than existing solely as a speculative token, its structural role may include:

governance participation

validation incentives

network signaling

stakeholder alignment

ecosystem participation

In this framework, $ROBO acts as economic glue — aligning developers, machines, and participants within a shared incentive structure.

When machines operate autonomously, alignment is not optional.

It is foundational.

Why This Conversation Is Bigger Than TPS

Throughput metrics dominate Web3 discussions because performance is visible and measurable. But as intelligent agents begin transacting value and making decisions autonomously, the central challenge shifts:

Can the system remain stable as it scales?

The Fabric Foundation’s narrative reframes the conversation:

From peak speed → to structured coordination

From raw performance → to predictable behavior

From hype cycles → to governance architecture

And in a machine-driven economy, that distinction matters.

The Next Phase: Coordinating Machines, Not Just Wallets

The first generation of decentralized systems connected wallets.

The next generation will coordinate machines.

As AI transitions from tools into autonomous actors, infrastructure must evolve to support coordination, accountability, and alignment. Autonomous systems will not simply exchange data — they will exchange value, verify outputs, and make decisions that affect real-world systems.

This requires more than infrastructure.

It requires governance.

The Bigger Picture

We are entering an era where machines will negotiate energy use, manage logistics networks, maintain infrastructure, and execute financial transactions. In such a world, coordination mechanisms will determine stability.

Speed will matter.

Performance will matter.

But alignment will matter most.

The Fabric Foundation is exploring this frontier, where governance, infrastructure, and intelligent systems intersect, and $ROBO sits at the center of this alignment layer.

Because the machine economy will not be built on speed alone.

It will be built on coordination.

And coordination begins with aligned incentives.