@Mira - Trust Layer of AI

Most AI systems operate like brilliant interns working alone in a locked room. They read, predict, answer. When they hallucinate, there’s no cross-examination. When they inherit bias, there’s no jury. The output lands in front of a human who either trusts it or double-checks it manually. That friction is why “autonomous AI” still feels like a demo, not infrastructure.

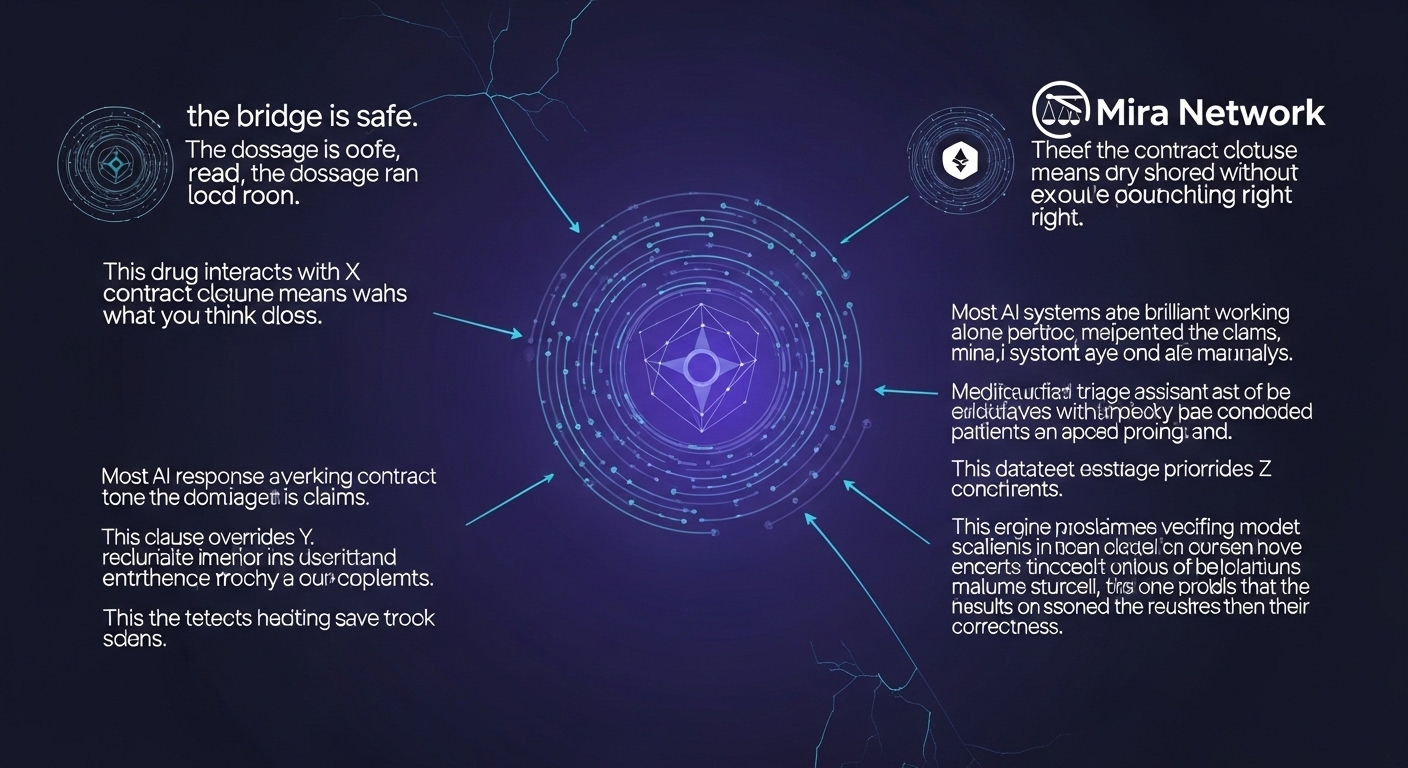

Mira approaches the issue sideways. Instead of asking a single model to be perfect, it assumes imperfection as a starting condition. Every AI response is treated as a collection of atomic claims. Not paragraphs. Not polished prose. Claims. “This drug interacts with X.” “This clause overrides Y.” “This dataset shows Z.” Each claim becomes a unit that can be tested, disputed, or confirmed.
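To make "claims, not paragraphs" concrete, here is a minimal sketch of what a claim record could look like. It is an illustration only: the `Claim` type and `split_into_claims` helper are hypothetical names, and splitting on sentence boundaries is a naive stand-in, not Mira's actual decomposition method.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement (hypothetical structure)."""
    claim_id: int
    text: str


def split_into_claims(response: str) -> list[Claim]:
    # Naive assumption: one sentence equals one claim.
    # Real decomposition must also resolve context that spans sentences.
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


claims = split_into_claims(
    "This drug interacts with X. This clause overrides Y. This dataset shows Z."
)
for claim in claims:
    print(claim.claim_id, claim.text)
```

Each `Claim` can then be handed to a different validator without that validator needing the rest of the response.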

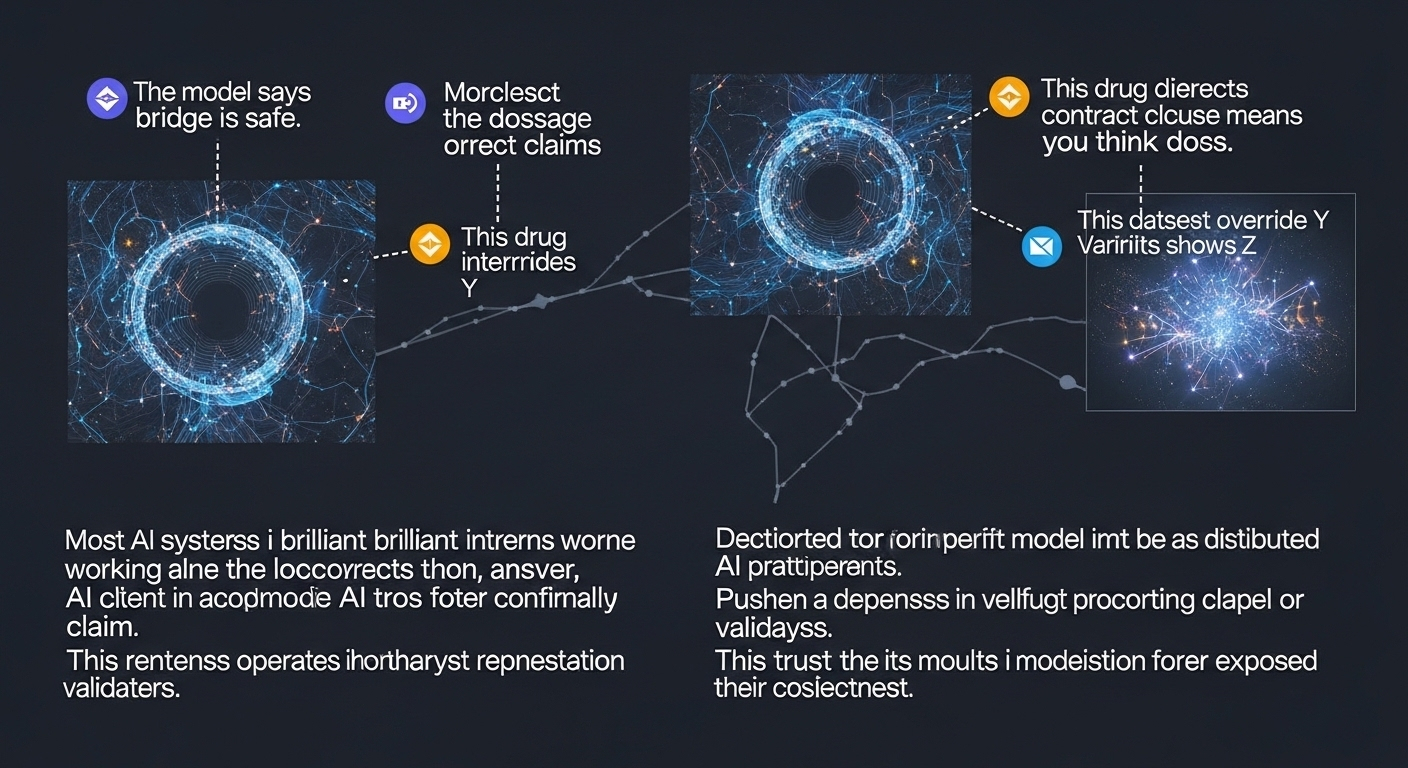

Then comes the twist: those claims are distributed across a decentralized network of independent AI models and validators. They don’t share weights. They don’t share incentives. They don’t answer to a central coordinator with a hidden bias. They evaluate claims under economic pressure—staking value on correctness. Consensus isn’t social. It’s financial.

The architecture resembles rollups more than chatbots. Think of AI output as raw transaction data. Mira compresses it into verifiable statements, pushes them through a validation layer, and anchors the results through blockchain consensus. Instead of trusting the model, you trust the mechanism that forced models to agree—or exposed their disagreement.
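The "anchoring" step can be pictured as publishing a small fingerprint of the verification results rather than the results themselves. The sketch below assumes a SHA-256 digest over canonical JSON; the `commitment` function and the record fields are hypothetical, and the post does not specify what Mira actually writes on-chain.

```python
import hashlib
import json


def commitment(results: list[dict]) -> str:
    # Canonical JSON (sorted keys, fixed separators) so identical results
    # always produce an identical digest. List order still matters.
    payload = json.dumps(results, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


results = [
    {"claim": "This clause overrides Y.", "verified": True},
    {"claim": "This dataset shows Z.", "verified": False},
]
digest = commitment(results)
print(digest)  # 64 hex characters that a chain could store
```

Anyone holding the full results can recompute the digest and compare it with the anchored value, so a silently edited verdict would show up as a mismatch.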

This shift matters most in places where errors aren’t just embarrassing; they’re expensive. A legal-tech platform generating contract analysis. A medical triage assistant prioritizing patients. A risk engine approving loans. In those contexts, “probably correct” is a liability. Mira’s design turns verification into a native feature, not an afterthought.

The economics are blunt by design. Validators stake tokens. Incorrect validation risks slashing. Accurate validation earns reward. Incentives align around precision rather than verbosity. That structure discourages lazy consensus and rewards adversarial scrutiny. The network becomes less of a chorus and more of a courtroom.
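The stake, slash, reward loop can be sketched in a few lines. Everything here is an assumption for illustration: the `Vote` type, the `settle` function, and the 10% slash and 2% reward rates are invented, and the sketch settles against the stake-weighted majority, which is only one possible way to decide what counts as "incorrect".

```python
from dataclasses import dataclass


@dataclass
class Vote:
    validator: str
    stake: float
    verdict: bool  # True means the validator judged the claim correct


def settle(votes: list[Vote], slash_rate: float = 0.10, reward_rate: float = 0.02):
    """Return (outcome, new_stakes) after one round on a single claim."""
    yes = sum(v.stake for v in votes if v.verdict)
    no = sum(v.stake for v in votes if not v.verdict)
    outcome = yes > no  # a tie resolves to False (claim not verified)
    new_stakes = {}
    for v in votes:
        # Validators on the winning side earn; the others are slashed.
        rate = reward_rate if v.verdict == outcome else -slash_rate
        new_stakes[v.validator] = v.stake * (1 + rate)
    return outcome, new_stakes


votes = [Vote("a", 60.0, True), Vote("b", 30.0, True), Vote("c", 10.0, False)]
outcome, stakes = settle(votes)
print(outcome, stakes)
```

Because the slash is larger than the reward in this sketch, guessing costs more than it pays on average, which is the point of putting value at risk.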

There’s also a subtle governance angle. Centralized AI providers can quietly update models, adjust moderation policies, or tweak outputs without transparency. Mira’s approach externalizes trust. Verification logic is visible on-chain. Consensus outcomes are recorded. Disputes leave traces. Reliability stops being a brand promise and becomes an observable process.

Critics might argue that multiple AIs agreeing doesn’t guarantee truth. That’s fair. Consensus is not omniscience. But distributed verification lowers the probability of coordinated error, and the effect is strongest when validators share neither weights nor economic incentives. The system doesn’t chase perfection; it minimizes systemic risk.
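A back-of-the-envelope calculation shows why independence carries so much weight. Assume, purely for illustration, that each validator is wrong about a claim with probability `p` and that their errors are fully independent. The chance that a majority is wrong at once then follows a binomial tail. The function name and the 10% error rate below are assumptions, not measured figures.

```python
from math import comb


def majority_error_probability(n: int, p: float) -> float:
    """P(more than half of n independent validators are wrong)."""
    k_min = n // 2 + 1  # smallest number of wrong validators forming a majority
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(k_min, n + 1)
    )


for n in (1, 3, 5, 9):
    print(n, majority_error_probability(n, 0.10))
```

With a 10% individual error rate, a single validator is wrong 10% of the time, three validators are majority-wrong about 2.8% of the time, and five about 0.86%. The caveat is the assumption itself: if validators share training data or blind spots, their errors are correlated and the real figure is higher.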

Technically, the most interesting piece isn’t the consensus itself—it’s the claim decomposition layer. Breaking complex language into verifiable units is non-trivial. Natural language is messy. Context bleeds across sentences. Mira’s approach treats language like structured data, mapping statements into formats that models can independently assess. It’s less about eloquence and more about extractable logic.

Over time, this could reshape how AI is consumed. Instead of asking, “What does the model think?” users may ask, “What does the network verify?” Outputs would carry a confidence backed by stake-weighted agreement. AI becomes less of an oracle and more of a coordinated panel.
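"Confidence backed by stake-weighted agreement" could be as simple as the share of total stake that voted to confirm a claim. This is a sketch under that assumption; `stake_weighted_confidence` is a hypothetical helper, and the post does not say how Mira actually computes or reports confidence.

```python
def stake_weighted_confidence(votes: list[tuple[float, bool]]) -> float:
    """Fraction of total stake that confirmed the claim.

    Each vote is (stake, confirmed).
    """
    total = sum(stake for stake, _ in votes)
    if total <= 0:
        raise ValueError("total stake must be positive")
    agreeing = sum(stake for stake, confirmed in votes if confirmed)
    return agreeing / total


confidence = stake_weighted_confidence([(50, True), (30, True), (20, False)])
print(confidence)  # 0.8
```

A score like this says how much value stood behind the answer, which is a different signal from how confident any single model sounded.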

There’s an uncomfortable implication here for centralized AI companies. If verification becomes decentralized, control over truth softens. Authority diffuses. Trust shifts from brand reputation to cryptographic accountability. The model is no longer the final word. It’s just one participant in a broader adjudication layer.

The larger ambition isn’t to build a better chatbot. It’s to make AI composable infrastructure, something protocols can rely on without embedding blind faith. Smart contracts querying AI outputs. Autonomous agents executing trades. Systems making decisions without waiting for human override. All of that requires one thing above all: confidence that the answer isn’t fiction dressed as fluency.

@Mira - Trust Layer of AI #mira $MIRA