#mira || #Mira || @Mira - Trust Layer of AI || $MIRA

The AI boom has pretty much been all about one thing: what these systems can do. We’ve watched language models write like people, and AI tools pump out code, create art, and handle all sorts of tasks. The pace has been wild. But now, as more people start relying on AI, a new worry is taking over: can we trust it? That’s where this whole “Verified AI” idea comes in.

For a long time, the race was about making bigger, faster, more impressive models. Companies like OpenAI and Anthropic kept pushing limits, trying to outdo each other. But even the best systems still mess up. They make things up, get facts wrong, or sound confident while being totally off base. If you’re just messing around or using AI for fun, you might let it slide. But in areas like finance, healthcare, law, or anything else that actually matters, you really can’t.

That’s the big gap: AI can do a lot, but can you count on it? That’s where Verified AI steps in.

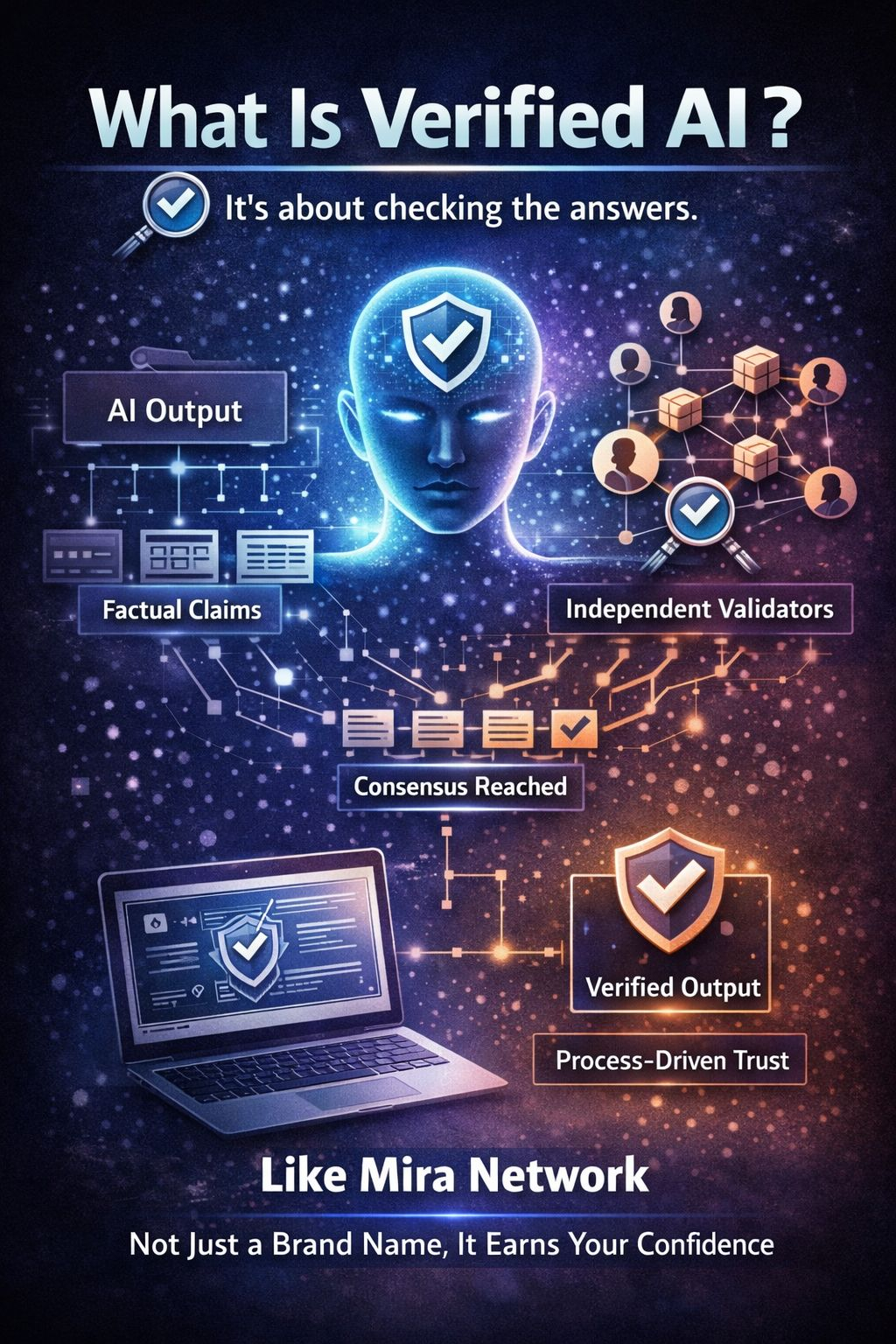

So, what’s Verified AI?

It’s not just about generating answers. It’s about checking them. Verified AI means systems that don’t just spit out a response; they back it up. Sometimes that means cryptography, sometimes a group of models double-checking each other, sometimes both. The point is, you don’t have to just trust what the model says; the system itself checks the answer before you see it.

Take the Mira Network, for example. Mira splits up what the AI says into small factual claims and spreads them out to independent validators. The validators check each claim, and only when enough of them agree does the system say, “Yep, this is verified.” So now, trust isn’t about a big brand name; it’s about a process that earns your confidence.
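To make that flow concrete, here is a minimal sketch of "split into claims, ask several validators, accept on agreement." It is not Mira's actual implementation: the sentence-based claim splitter, the `Validator` type, the function names, and the two-thirds threshold are all assumptions made up for illustration.

```python
from typing import Callable, Dict, List

# Hypothetical validator: takes a claim and returns True if it judges the claim correct.
Validator = Callable[[str], bool]


def split_into_claims(answer: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def verify_claim(claim: str, validators: List[Validator], threshold: float = 2 / 3) -> bool:
    """A claim counts as verified when the share of approving validators meets the threshold."""
    if not validators:
        raise ValueError("at least one validator is required")
    approvals = sum(1 for validate in validators if validate(claim))
    return approvals / len(validators) >= threshold


def verify_answer(answer: str, validators: List[Validator], threshold: float = 2 / 3) -> Dict[str, bool]:
    """Check every claim in the answer independently and report a verdict per claim."""
    return {
        claim: verify_claim(claim, validators, threshold)
        for claim in split_into_claims(answer)
    }


if __name__ == "__main__":
    # Toy validators (assumed): two only accept claims mentioning Paris, one accepts everything.
    toy_validators = [
        lambda claim: "Paris" in claim,
        lambda claim: "Paris" in claim,
        lambda claim: True,
    ]
    text = "Paris is the capital of France. Berlin is the capital of Spain."
    for claim, verified in verify_answer(text, toy_validators).items():
        print(f"{'verified' if verified else 'rejected'}: {claim}")
```

In a real network the validators would be independent models or nodes rather than local functions, and claim extraction would be far more careful than splitting on periods.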

That changes everything. AI stops being just about generating content and starts being about answers that have been independently checked and agreed upon.

Why is this happening now?

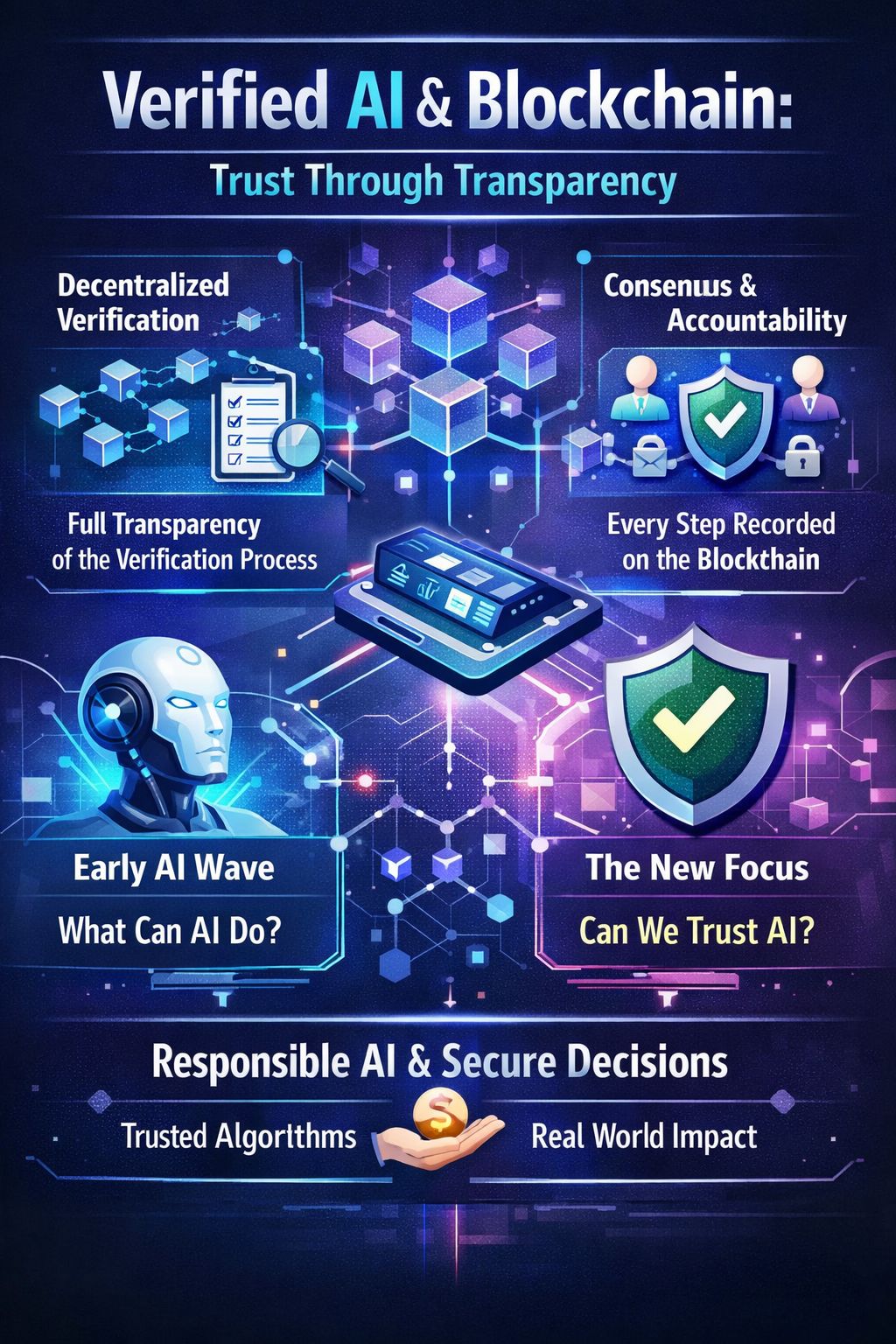

It’s all about timing. As companies build AI deeper into the way they work, they’re feeling pressure from regulators, from risk, and from the need to show their work. Governments are looking at how to manage AI, and businesses want systems they can audit.

Verified AI fits right in with blockchain tech. Both are built on the idea of consensus and transparency. With a decentralized setup, you can record every step of the verification process, so you always know how a decision got made. It’s a mashup of two of the biggest tech shifts in recent years: artificial intelligence and decentralized networks.
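One simple way to picture "recording every step" is a hash-chained log, where each entry commits to the one before it, so later tampering is detectable. This is only a sketch of the general idea, not how any particular blockchain or Mira stores its records; the field names and helper functions are assumptions.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder hash used before the first entry


def _entry_hash(prev_hash: str, step: Dict[str, Any]) -> str:
    """Hash the previous entry's hash together with this step's content."""
    payload = json.dumps({"prev": prev_hash, "step": step}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: List[Dict[str, Any]], step: Dict[str, Any]) -> Dict[str, Any]:
    """Append a verification step, linking it to the previous entry by hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"prev": prev_hash, "step": step, "hash": _entry_hash(prev_hash, step)}
    log.append(entry)
    return entry


def chain_is_valid(log: List[Dict[str, Any]]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["step"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    audit_log: List[Dict[str, Any]] = []
    append_record(audit_log, {"claim": "Paris is the capital of France", "verdict": True})
    append_record(audit_log, {"claim": "Berlin is the capital of Spain", "verdict": False})
    print(chain_is_valid(audit_log))
```

A decentralized network adds replication and consensus on top of this, so no single party can quietly rewrite the log.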

Plus, the market has grown up. The first wave of AI was all about what it could do. Now, the focus is shifting to whether we can trust it, especially as AI starts making real decisions and moving actual money.

The trust economy

There’s another reason people are talking about Verified AI: incentives. Old-school AI relies on a handful of people watching over things. With Verified AI, especially the decentralized kind, you can build in economic rewards and penalties. Validators get paid for accuracy and lose out if they try to cheat. Suddenly, telling the truth isn’t just good practice; it’s how you get paid.
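Here is a rough sketch of what that reward-and-penalty loop could look like. The numbers and names are invented for illustration (a flat reward, a 10% slash, simple majority as the "right" answer); real networks set these rules in their own protocol, and nothing here is taken from Mira's design.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ValidatorAccount:
    name: str
    stake: float  # tokens the validator has locked up (assumed unit)


def settle_round(
    accounts: List[ValidatorAccount],
    votes: Dict[str, bool],
    reward: float = 1.0,
    slash_fraction: float = 0.1,
) -> bool:
    """Pay validators who voted with the majority and slash those who did not.

    Assumption: the strict majority vote is treated as the consensus outcome;
    a tie counts as "not verified" (False).
    """
    yes_votes = sum(1 for vote in votes.values() if vote)
    consensus = yes_votes * 2 > len(votes)
    for account in accounts:
        if votes[account.name] == consensus:
            account.stake += reward
        else:
            account.stake -= account.stake * slash_fraction
    return consensus


if __name__ == "__main__":
    validators = [
        ValidatorAccount("a", 100.0),
        ValidatorAccount("b", 100.0),
        ValidatorAccount("c", 100.0),
    ]
    outcome = settle_round(validators, {"a": True, "b": True, "c": False})
    print(outcome, [(v.name, v.stake) for v in validators])
```

The design point is that a dishonest or careless vote costs more than an honest one earns, so cheating only pays if a validator can reliably sway the majority.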

If this model scales, it could seriously cut down on AI making stuff up, and boost trust without needing to retrain the models from scratch.

So, is this the next big thing?

Tech stories always chase whatever problem nobody’s solved yet. Crypto needed to scale, so Layer 2s happened. Privacy and provable computation were the gap, so zero-knowledge proofs exploded. In AI, reliability is the headache.

Verified AI goes right at that.

If decentralized checking can really make AI more accurate without bogging it down, it could become the backbone for enterprise AI. The real question isn’t whether AI will keep getting better; it will. The question is, will anyone accept unverified answers when the stakes are high?

As we move from playing with AI to actually letting it run things, trust is everything. Verified AI isn’t just a buzzword. It’s probably the next step AI has to take.