I’ve lost count of how many times I’ve had to double-check something that should have been simple.

A transaction that “went through,” but didn’t show up where I expected. An in-game item that I owned, but couldn’t prove outside that specific environment. Even something as basic as account activity—visible in one place, completely invisible in another. So you end up refreshing pages, taking screenshots, asking others to confirm what you already know happened.

It’s a strange kind of uncertainty. Not because the action didn’t happen, but because there’s no shared way to verify it.

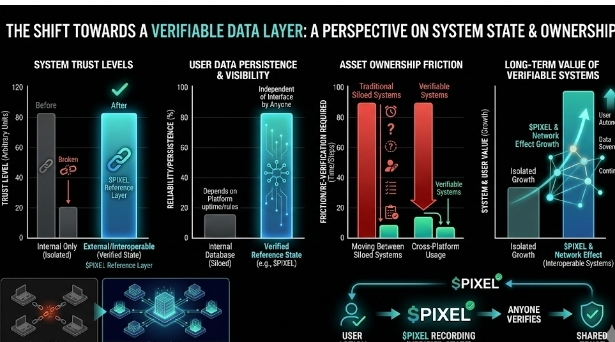

At some point I realized that most systems don’t really care about being verifiable beyond their own boundaries. They just need to function internally, and as long as their own database agrees with itself, that’s enough. But the moment you step outside that system, the “truth” becomes harder to carry with you.

That’s where something like @PIXEL started to feel relevant, even if I didn’t fully trust it at first.

Initially, I thought the idea of making everything “verifiable” sounded excessive. Not every action needs to be tracked or proven externally. In many cases, it just adds friction. More steps, more structure, more things that can go wrong.

It felt like solving a problem that most users weren’t actively complaining about.

But then I kept coming back to the same pattern—systems agreeing internally, but conflicting externally. And that’s where the idea of something being both verified and verifiable started to land differently.

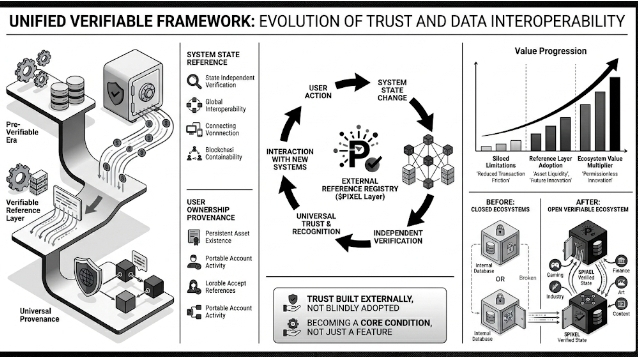

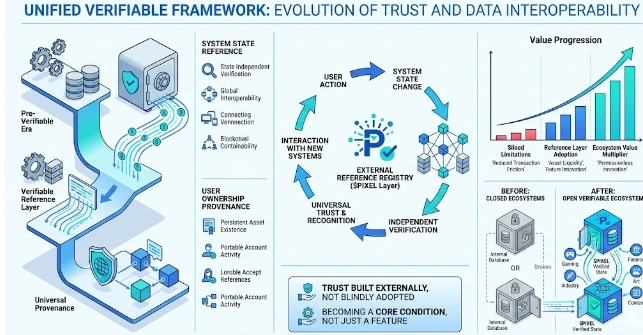

The role of $PIXEL, at least as I understand it now, isn’t just to represent value. It acts more like a reference layer for state: a way for actions, ownership, or participation to be recorded in a form that can be checked, not just assumed.

So instead of trusting a platform because you’re inside it, you can verify outcomes from outside it.

That shift is subtle, but it matters.

A completed task isn’t just “done” because the system says so—it’s something that can be proven. An asset isn’t just visible in your inventory—it’s something that exists independently of that interface. And participation isn’t just remembered—it’s recorded in a way others can recognize.
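To make the difference between “the system says so” and “it can be checked” concrete for myself, I sketched a toy version in Python. This is only an illustration under my own assumptions, not how $PIXEL actually works: `SharedReference`, `fingerprint`, and the record fields are all hypothetical names, and a plain in-memory set stands in for whatever external layer would really hold the records.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """Return a deterministic SHA-256 digest of a record."""
    # Sorting keys makes the digest independent of field order.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class SharedReference:
    """Hypothetical stand-in for an external reference layer (not a real API)."""

    def __init__(self) -> None:
        self._digests: set[str] = set()

    def publish(self, record: dict) -> str:
        """Record the digest of an action so that others can check it later."""
        digest = fingerprint(record)
        self._digests.add(digest)
        return digest

    def verify(self, record: dict) -> bool:
        """Check a claimed record without access to the original system."""
        return fingerprint(record) in self._digests


reference = SharedReference()

# The game publishes a completed task (field names are made up).
claim = {"player": "alice", "action": "task_completed", "task_id": 17}
reference.publish(claim)

# A second system, with no access to the game's database, checks the claim.
print(reference.verify(claim))                    # True
print(reference.verify({**claim, "task_id": 18})) # False
```

The point of the sketch is only that the second check doesn’t depend on being inside the first system. Anything that can see the shared reference can confirm or reject the claim.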

What I find interesting is that this doesn’t necessarily change how users behave immediately.

Most people won’t think about verification layers while playing a game or interacting with a system. They just expect things to work. So adding something like $PIXEL risks feeling unnecessary, especially if the core experience already feels smooth.

And honestly, that was my hesitation.

If everything is already functioning, why introduce another layer? Why complicate something that users have already adapted to?

But upon reflection, the value doesn’t show up in isolated moments. It shows up when systems start overlapping.

When different environments need to recognize the same action. When ownership needs to persist beyond a single platform. When users move between systems and expect continuity, not resets.

That’s where being verifiable starts to matter.

If @PIXEL can act as a shared point of reference, then systems don’t need to rebuild trust every time. They can rely on something external, something already established. Not blindly, but consistently.

And if that works, it opens up a different kind of structure.

You get environments that don’t need tight integrations to interact. Assets that don’t lose meaning when moved. Progress that doesn’t disappear when the interface changes. Everything starts to feel a bit less temporary.

But I don’t think this plays out easily, at least not yet.

There are real constraints. Most systems are still closed by design. Adoption requires coordination, and coordination is slow. There’s also the question of whether users actually demand this level of verification, or whether it remains more of an infrastructure concern than a user-facing need.

Right now, I’m still observing.

I hold a small amount of $PIXEL, mostly as a way to stay connected to how the system develops. I’m not fully convinced the verification layer will become essential, but I can see where it might start to matter.

For me, the proof isn’t in announcements or technical claims.

It’s in behavior.

If users stop questioning whether their actions “count,” if systems begin referencing external states without friction, if verification becomes something that’s used rather than advertised—then something real is forming.

Not because it was designed that way, but because systems slowly started depending on it.

That’s when “verified and verifiable” stops being a concept and starts becoming a condition.