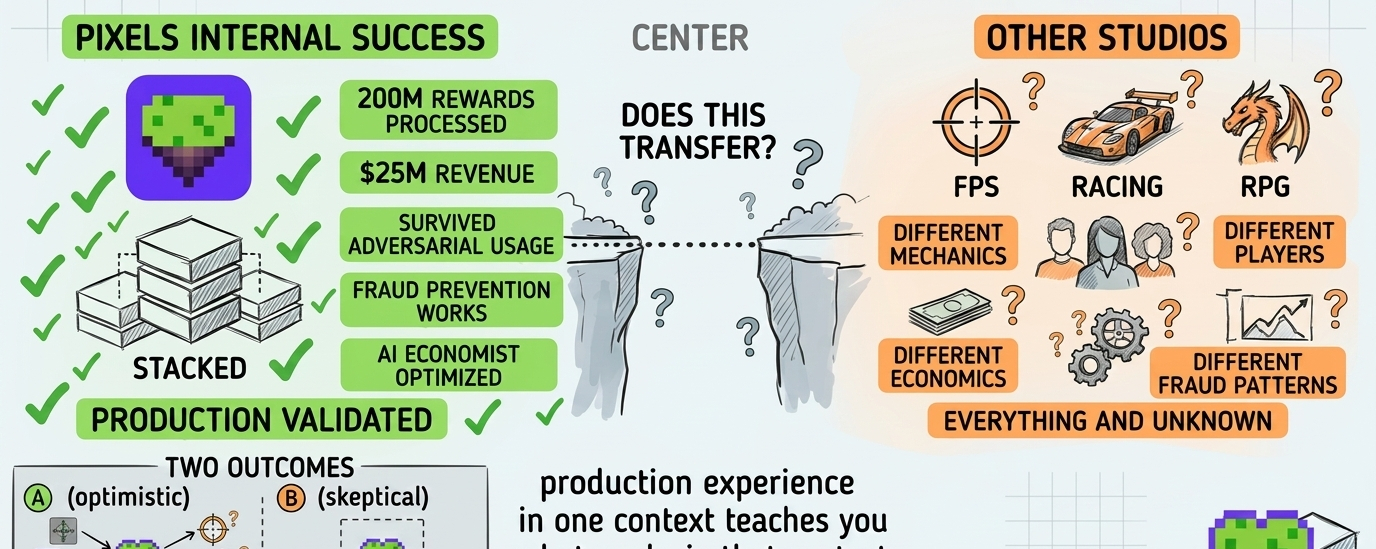

I keep watching @Pixels and trying to figure out whether Stacked working for Pixels internally proves it works for other studios, or whether it just proves Pixels solved Pixels-specific problems that don't transfer.

What I'm watching isn't whether "built in production" is better than "built on slides." It is. What I'm watching is whether production-proven in one context means production-ready for different contexts.

The transferability question in B2B infrastructure.

Not the validation narrative. The reality where companies build internal tools that work perfectly for their use case, then discover those tools don't transfer cleanly to external customers with different requirements.

That's where most internal-tools-turned-products struggle.

Pixels says Stacked is battle-tested. It powered their ecosystem through 200 million rewards and $25 million in revenue. It survived adversarial usage, fraud attempts, bot armies.

All legitimate validation. What I can't tell is whether it's the right kind of validation.

The challenge is that Pixels optimized Stacked for Pixels. They built it to solve problems they were experiencing. They tested it against fraud patterns they were seeing. They tuned it for player behavior in their games.

That's how good internal tools get built. You solve your own problems. You iterate based on your own data.

But your problems might not be everyone's problems.

@Pixels has specific game mechanics. Specific player demographics. Specific economic dynamics. The infrastructure that keeps those specific dynamics sustainable might not transfer to games with different mechanics, different players, different economics.

Most B2B infrastructure companies discover this gap between internal validation and external adoption. What works perfectly for your use case works partially for similar use cases and breaks for different use cases.

The question is how different other studios' use cases are from Pixels'.

If they're building different game types with different audiences, the transferability is less obvious. Does behavioral data from farming game players inform reward strategies for FPS players? Does fraud prevention tuned for one game type work for another?

Most of that's unknowable until external studios actually deploy it.

What keeps me coming back is that Pixels isn't claiming universal applicability. They're positioning Stacked as infrastructure that worked for them and might work for others.

But honest positioning and actual transferability are different things.

The "built in production" claim is powerful marketing. It distinguishes Stacked from vaporware. It proves something works somewhere. But it doesn't prove which parts work or how broadly the learnings apply.

Production experience in one context teaches you what works in that context. It doesn't necessarily teach you what works everywhere. The fraud patterns Pixels saw might be specific to their player base. The reward mechanisms might be specific to their game mechanics.

Extrapolating from one production environment to all production environments is how most B2B infrastructure overestimates product-market fit.

Maybe Stacked's learnings are generalizable. Maybe the fraud prevention works across game types. Maybe the AI economist finds patterns that transfer.

Or maybe the learnings are more specific than the positioning suggests, and external studios discover Stacked needs significant customization for their requirements.

I'm watching to see which one.

What I'm particularly watching is the early external deployments. Do other studios adopt Stacked as-is, or do they need extensive customization? Do the fraud systems work for their player bases, or do they need retraining?

The gap between "works for Pixels" and "works for everyone" determines whether Stacked becomes category-defining infrastructure or niche tooling for Pixels-like games.

$PIXEL's value depends on that gap. If Stacked works broadly, $PIXEL becomes a cross-game rewards currency with expanding utility. If Stacked works narrowly, $PIXEL stays concentrated in the Pixels ecosystem.

Most infrastructure tokens optimized for one use case don't expand successfully to different use cases. The infrastructure is too specific. The learnings don't transfer.

I'd prefer Stacked's production learnings transfer broadly. I'm just not convinced internal validation proves external applicability as much as the positioning suggests.

The transferability question is fundamental. You can prove something works in production. That doesn't prove it works in different production environments with different constraints.

And honestly, I trust teams that acknowledge context-dependency more than teams that assume production experience in one environment validates all environments.