If Vanar runs into network lag or an RPC failure, will users lose their money? Honestly, after losing data a few days ago when I canceled a cloud account, I've been wrestling with this question: however impressive a chain is, can it actually be relied on when something unexpected happens?

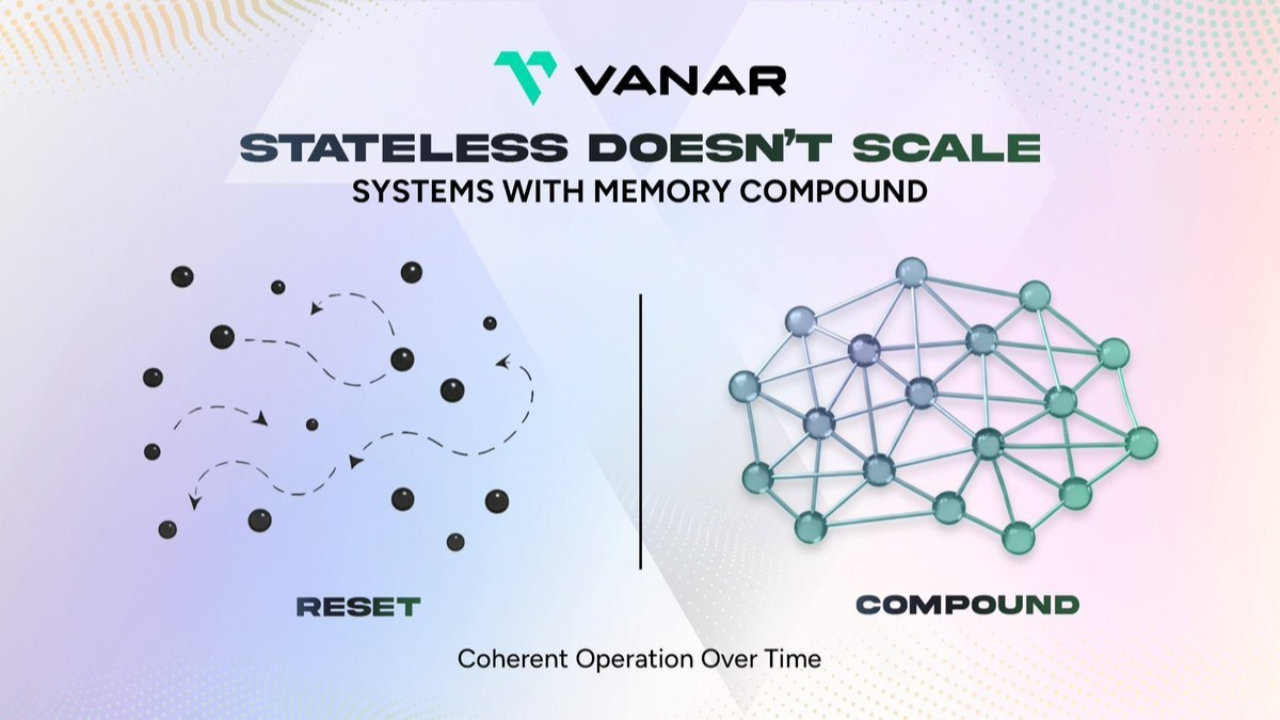

It struck me that Vanar's greatest strength is not its flashy AI but the way it builds 'stability' into its core. Neutron compresses AI memories and key data into verifiable Seeds stored directly on-chain, removing the single point of failure that a centralized server represents.

Kayon's on-chain reasoning means every transaction and every upgrade leaves a complete, traceable on-chain record. Users can check historical operating status, the start and end times of an incident, and its scope at any time, instead of being brushed off with an official "service has been restored".

Multisignature approval plus delayed execution makes fee adjustments and contract upgrades transparent and auditable, and gives you enough time to respond. @Vanarchain $VANRY #Vanar

After an incident there is also a formal post-mortem report that publicly details the causes, the fix, and the follow-up improvements, and the monitoring data (block delay, failure rate) is open to the community as well. This is the real safety barrier!

Unlike chains that treat incidents dismissively or scatter updates across various chat groups, Vanar builds transparency and traceability into its underlying architecture.

When millions of AI agents are trading on-chain at high frequency, a design that lets you "check anytime, pause anytime, trace anytime" is the real moat.

With the market cap still this low, could it simply be that people haven't yet recognized the value of 'stability'?

To tell whether a chain is stable, watch how it communicates after an incident.

For the past two days the community has been discussing Vanar's CreatorPad and AI infrastructure, but I'm more concerned with something else: if the network ever gets truly congested and RPC becomes unstable, will users panic?

Vanar's answer is pretty hardcore: it designs 'information chaos' out of the system from the start.

A single official channel for announcements, advance notice before system upgrades, and clear descriptions of the affected scope during emergency fixes mean users are never left guessing.

Permission management is stricter still: key operations require multisig approval plus a buffer period before they take effect, and the audit trail is kept on-chain permanently.
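To make the "multisig + buffer period" idea concrete, here is a minimal Python sketch, assuming a simple M-of-N approval threshold and a fixed delay that starts once enough signers have approved. The class and method names (`TimelockMultisig`, `propose`, `approve`, `execute`) and all parameters are hypothetical illustrations, not Vanar's actual contracts or API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Operation:
    """A queued admin action, e.g. a fee change (hypothetical example)."""
    op_id: int
    description: str
    approvals: set = field(default_factory=set)
    queued_at: Optional[float] = None  # set once enough signers approve
    executed: bool = False


class TimelockMultisig:
    """Toy M-of-N multisig with a mandatory delay before execution.

    Assumption: this only illustrates the 'multisig + buffer period' idea;
    it is not Vanar's on-chain logic.
    """

    def __init__(self, signers, threshold, delay_seconds):
        if not 0 < threshold <= len(signers):
            raise ValueError("threshold must be between 1 and the number of signers")
        self.signers = set(signers)
        self.threshold = threshold
        self.delay = delay_seconds
        self.ops = {}
        self.audit_log = []  # append-only record of every successful action

    def propose(self, op_id, description, proposer, now):
        self._check_signer(proposer)
        self.ops[op_id] = Operation(op_id, description)
        self.audit_log.append((now, "propose", op_id, proposer))
        self.approve(op_id, proposer, now)  # the proposer's own approval

    def approve(self, op_id, signer, now):
        self._check_signer(signer)
        op = self.ops[op_id]
        op.approvals.add(signer)
        self.audit_log.append((now, "approve", op_id, signer))
        # The buffer period starts the moment the threshold is first reached.
        if op.queued_at is None and len(op.approvals) >= self.threshold:
            op.queued_at = now
            self.audit_log.append((now, "queued", op_id, None))

    def execute(self, op_id, now):
        op = self.ops[op_id]
        if op.executed:
            raise RuntimeError("already executed")
        if op.queued_at is None:
            raise PermissionError("not enough approvals")
        if now < op.queued_at + self.delay:
            raise PermissionError("buffer period has not elapsed")
        op.executed = True
        self.audit_log.append((now, "execute", op_id, None))
        return op.description

    def _check_signer(self, who):
        if who not in self.signers:
            raise PermissionError(f"{who} is not a signer")


# Usage: 2-of-3 signers, 1-hour buffer period.
ms = TimelockMultisig(["alice", "bob", "carol"], threshold=2, delay_seconds=3600)
ms.propose(1, "raise base fee", "alice", now=0)
ms.approve(1, "bob", now=10)    # threshold reached, the 1-hour clock starts at t=10
print(ms.execute(1, now=3610))  # prints "raise base fee"
```

The delay gives everyone watching the audit log a window to see a queued change before it can take effect; executing at `now=100` in the example above would raise `PermissionError`.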

In extreme cases, throttling and stop-loss mechanisms quickly contain losses to a small area, so a minor issue doesn't snowball into network-wide panic.
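Throttling plus stop-loss is essentially a rate limiter combined with a circuit breaker. Below is a minimal Python sketch of that pattern for an RPC endpoint; the class name `CircuitBreaker` and its parameters (`max_requests`, `window`, `sample_size`, `trip_ratio`, `cooldown`) are hypothetical values for illustration, not Vanar's actual settings or implementation.

```python
from collections import deque


class CircuitBreaker:
    """Toy throttle + stop-loss guard for an RPC endpoint (hypothetical).

    - Throttling: at most `max_requests` calls per `window` seconds.
    - Stop-loss: if the failure rate over the last `sample_size` calls
      reaches `trip_ratio`, the breaker opens and rejects calls for
      `cooldown` seconds, isolating the fault instead of letting it spread.
    """

    def __init__(self, max_requests, window, sample_size, trip_ratio, cooldown):
        self.max_requests = max_requests
        self.window = window
        self.trip_ratio = trip_ratio
        self.cooldown = cooldown
        self.request_times = deque()
        self.results = deque(maxlen=sample_size)  # True = success
        self.open_until = None

    def allow(self, now):
        """Return True if a call may proceed at time `now`."""
        if self.open_until is not None:
            if now < self.open_until:
                return False          # breaker open: stop-loss in effect
            self.open_until = None    # cooldown over: close and reset stats
            self.results.clear()
        # Drop timestamps that have slid out of the throttling window.
        while self.request_times and now - self.request_times[0] >= self.window:
            self.request_times.popleft()
        if len(self.request_times) >= self.max_requests:
            return False              # throttled
        self.request_times.append(now)
        return True

    def record(self, success, now):
        """Record a call's outcome and trip the breaker if failures pile up."""
        self.results.append(success)
        if len(self.results) == self.results.maxlen:
            failures = self.results.count(False)
            if failures / len(self.results) >= self.trip_ratio:
                self.open_until = now + self.cooldown


# Usage: trip when at least half of the last 4 calls fail.
cb = CircuitBreaker(max_requests=100, window=1.0, sample_size=4, trip_ratio=0.5, cooldown=30)
for t, ok in [(0.0, True), (0.1, False), (0.2, False), (0.3, True)]:
    if cb.allow(t):
        cb.record(ok, t)
# 2 of the last 4 calls failed, so the breaker stays open for 30 s.
print(cb.allow(1.0))   # False
print(cb.allow(31.0))  # True
```

Failing fast while the breaker is open keeps a struggling endpoint from being hammered by retries, which is the "isolate losses within a small range" idea in miniature.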

This playbook is more practical than any "we never go down" slogan.

KOLs say Vanar is building a chain that is 'usable in the real world', and to me the most real part is that it makes the 'black box moments' users fear most transparent.

Once AI agents are running payments and data transactions at scale, the ability to "stay stable, explain clearly, and trace back" when something goes wrong is what gives enterprises and ordinary users alike the confidence to put their money in.