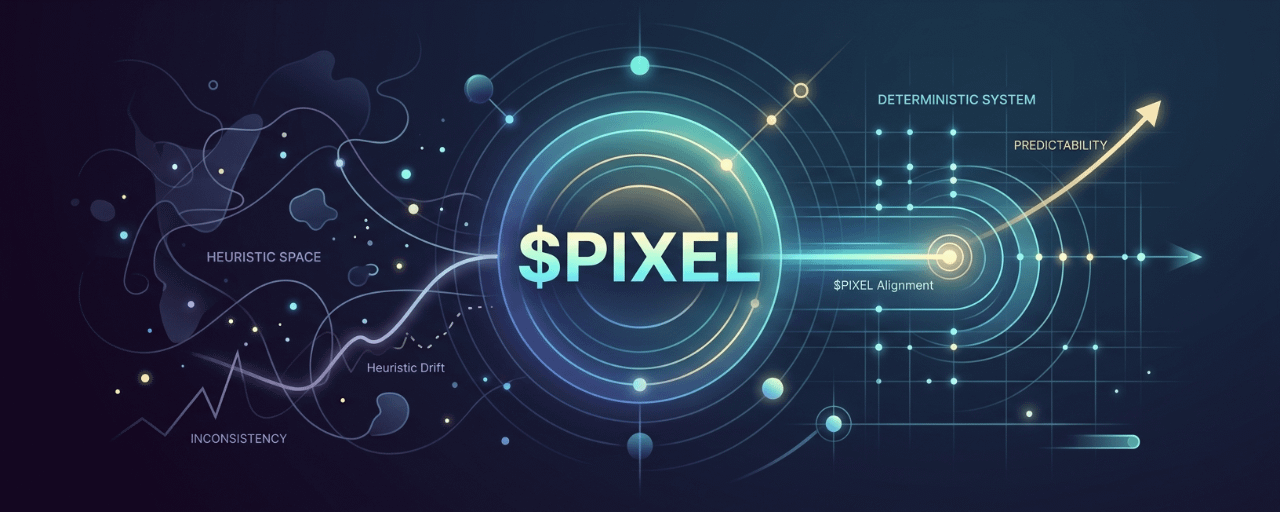

There is something I kept noticing when observing how Pixels behaves over time, and it has less to do with features and more to do with consistency under similar conditions. Most Web3 systems rely heavily on heuristics. They react based on approximations, thresholds, and loosely defined rules. That makes them flexible, but also unpredictable when pushed outside normal conditions.

Pixels does not feel entirely like that. I started paying attention to how the system behaves when similar conditions are recreated. Not identical, because real usage never is, but close enough in structure. What stood out is that outcomes tend to stay within a relatively stable range instead of drifting unpredictably. That suggests the system is not relying purely on heuristic adjustments, but on a more deterministic core where inputs map to outputs in a controlled way.

The difference between those two approaches is subtle but important. Heuristic systems adapt quickly but can become inconsistent as complexity increases. Deterministic systems are harder to design upfront, but once established, they provide a more stable foundation for scaling because behavior does not shift arbitrarily.
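To make that distinction concrete, here is a minimal sketch in Python. It is not Pixels code and says nothing about how Pixels is actually implemented. The names (`deterministic_reward`, `HeuristicReward`, `rate_pct`, `target`) and all numbers are hypothetical, chosen only to show how a fixed input-to-output mapping differs from a rule that keeps adjusting itself.

```python
from dataclasses import dataclass


def deterministic_reward(activity: int, rate_pct: int = 50, cap: int = 100) -> int:
    """Pure mapping: the same activity always produces the same reward."""
    return min(activity * rate_pct // 100, cap)


@dataclass
class HeuristicReward:
    """Stateful rule: the rate is nudged after every call, so the result
    depends on what happened before, not only on the current input."""
    rate_pct: int = 50   # hypothetical starting rate, in percent
    target: int = 20     # hypothetical payout the rule tries to steer toward
    step_pct: int = 5    # size of each adjustment

    def reward(self, activity: int) -> int:
        payout = activity * self.rate_pct // 100
        # Adaptive part: move the rate toward the target payout.
        if payout > self.target:
            self.rate_pct -= self.step_pct
        elif payout < self.target:
            self.rate_pct += self.step_pct
        return payout


if __name__ == "__main__":
    same_inputs = [100, 100, 100]

    # Identical inputs, identical outputs.
    print([deterministic_reward(a) for a in same_inputs])

    # Identical inputs, but each output depends on the call history.
    heuristic = HeuristicReward()
    print([heuristic.reward(a) for a in same_inputs])
```

With the same input repeated three times, the first function returns the same value every time, while the second returns a different value on each call because its internal rate has moved. That history dependence is the kind of drift I mean when I say heuristic systems become inconsistent as complexity grows.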

This becomes more relevant when thinking about how different layers interact. If the core logic is deterministic enough, external systems can integrate without constantly recalibrating for unexpected behavior. That is likely part of why something like the Stacked app can operate alongside the main environment without introducing excessive noise into the system.

Another detail that reinforces this is how edge cases are handled. In many systems, unusual patterns tend to break expected outcomes or create exploitable gaps. Here, those edge conditions still produce bounded results. Not perfectly controlled, but not chaotic either. That usually indicates that constraints are defined at a deeper level rather than patched at the surface.
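One way to picture constraints defined at a deeper level is a core function that enforces its bounds itself, so no caller can get an out-of-range result regardless of input. The sketch below is purely illustrative. `settle`, `MULTIPLIER`, `LOWER`, and `UPPER` are assumptions of mine, not anything taken from Pixels.

```python
LOWER = 0        # hypothetical floor for any outcome
UPPER = 1_000    # hypothetical ceiling for any outcome
MULTIPLIER = 3   # hypothetical scaling applied to raw activity


def settle(activity: int) -> int:
    """Compute an outcome and clamp it inside [LOWER, UPPER].

    The bound lives in the core function, so unusual inputs
    cannot push the result outside the allowed range.
    """
    raw = activity * MULTIPLIER
    return max(LOWER, min(raw, UPPER))


if __name__ == "__main__":
    # Typical input, negative input, and an extreme input.
    for activity in (10, -5, 10**9):
        print(activity, settle(activity))
```

A typical input passes through unchanged, while a negative or extreme input lands on the floor or the ceiling instead of producing something chaotic. Patching at the surface would mean each caller adding its own checks, which is where gaps tend to appear.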

From a structural perspective, $PIXEL operates inside this framework as a variable that follows those constraints. Its movement reflects the boundaries set by the system rather than reacting freely to every fluctuation in activity. That alignment is difficult to maintain unless the underlying logic is consistent.

I am not assuming this is fully deterministic in the strict sense, because real world systems always introduce some level of variability. But the direction is clear enough. Pixels seems to be reducing reliance on loose heuristics and moving toward a model where behavior is more predictable under defined conditions.

In a space where most systems drift over time as complexity grows, that kind of stability is not easy to achieve, and even harder to maintain as the system expands.