Mira Network: Learning to Trust Machines Without Blind Faith

Mira Network did not come from a big announcement or a loud promise. It grew from a quiet frustration that many people working with AI eventually face. The answers look polished. The tone feels confident. Yet when you slow down and check, something feels off. Facts are stretched. Details are invented. Bias slips in unnoticed. The problem is not that AI is useless. The problem is that it speaks too confidently when it should hesitate.

This gap between confidence and correctness is where Mira begins. Instead of asking AI to be smarter, the project asks it to be accountable. An AI response is treated as a claim, not a final answer. Claims can be questioned. They can be tested. They can fail. This simple shift changes everything.

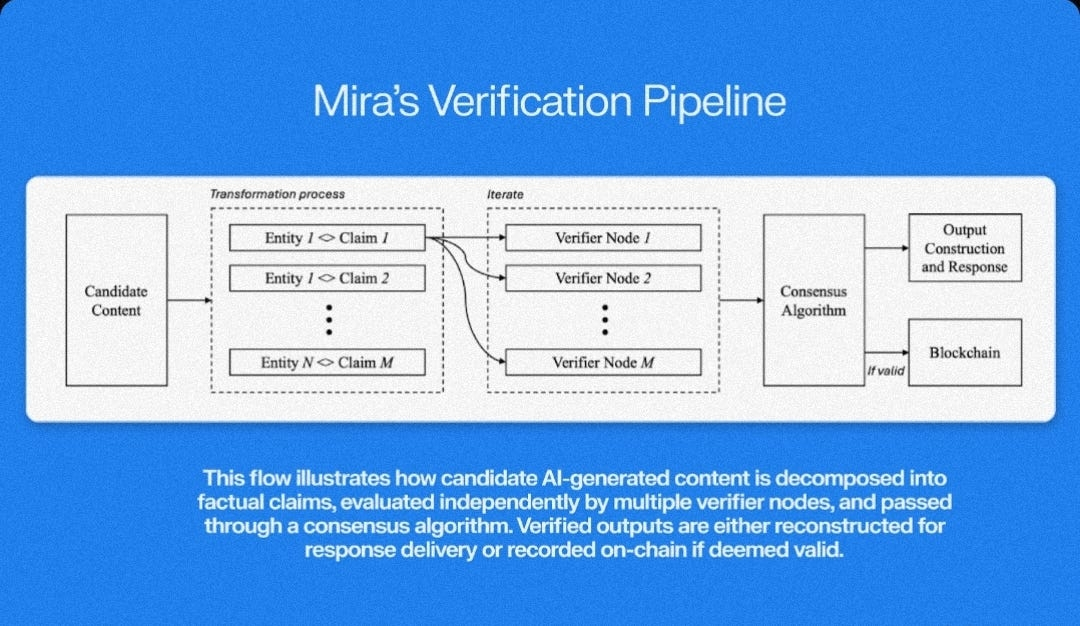

The purpose of Mira is not to compete with existing models or replace them. It accepts that AI will always make mistakes. What it challenges is the idea that those mistakes must remain hidden. Mira turns AI output into something closer to evidence. Each response is broken into smaller pieces that can be checked independently. A broad statement becomes a set of narrow claims. Narrow claims are easier to verify.

Once these claims exist, they are shared across a network of independent AI models. No single model decides what is true. Each one evaluates the claim from its own perspective. Some agree. Some disagree. Mira does not force harmony. Disagreement is recorded, measured, and priced into the result. If certainty is low, the system admits it.

One of the most practical ideas in Mira is its dual data delivery system. Not every application needs the same level of trust. Some systems value speed. Others need certainty. Mira separates these needs. One stream delivers fast responses. The other delivers verified outcomes. Developers can choose which to use. In critical situations, they can wait. In low-risk cases, they can move quickly.

Verification itself is assisted by AI, but tightly constrained. Models do not simply vote yes or no. They explain confidence. They flag ambiguity. They expose weak points. If results conflict too much, the claim does not pass. It stays unresolved. This avoids the illusion of accuracy, which is often more dangerous than admitting uncertainty.
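
To make the "unresolved" outcome concrete, here is a minimal sketch of how evaluations from several models might be combined. It is an illustration under stated assumptions, not Mira's actual algorithm: the `Evaluation` fields, the `aggregate` function, and both thresholds are hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Evaluation:
    """One model's opinion on a single claim (hypothetical structure)."""
    model_id: str
    supports: bool          # does the model think the claim holds?
    confidence: float       # self-reported confidence, 0.0 to 1.0
    ambiguous: bool = False # model flagged the claim as unclear


def aggregate(evaluations, min_agreement=0.75, min_confidence=0.6):
    """Return "verified", "rejected", or "unresolved".

    Thresholds are assumptions for illustration only.
    """
    # Evaluations flagged as ambiguous count toward neither side.
    decided = [e for e in evaluations if not e.ambiguous]
    if not evaluations or not decided:
        return "unresolved"

    total = len(evaluations)
    support = sum(e.supports for e in decided) / total
    oppose = sum(not e.supports for e in decided) / total

    # Low average confidence means the network admits it does not know.
    if mean(e.confidence for e in decided) < min_confidence:
        return "unresolved"
    if support >= min_agreement:
        return "verified"
    if oppose >= min_agreement:
        return "rejected"
    # Too much conflict: the claim neither passes nor fails.
    return "unresolved"
```

The design choice worth noticing is the third outcome. A plain majority vote would always return an answer; here a split panel or a hesitant one leaves the claim open.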

Verifiable randomness strengthens this process. No one knows in advance which models will review which claims. The selection is random, provable, and resistant to manipulation. This makes coordinated attacks difficult. It also prevents quiet collusion. Over time, it keeps the system honest by design rather than by trust.
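
The idea of a selection that anyone can re-check can be sketched in a few lines. This is a simplified stand-in, not a real verifiable random function and not Mira's implementation: it assumes a seed that is published only after claims are submitted, and the function name `select_verifiers` and its parameters are hypothetical.

```python
import hashlib


def select_verifiers(seed: bytes, claim_id: str, model_ids: list[str], k: int) -> list[str]:
    """Pick k models for a claim by ranking them on a hash of (seed, claim, model).

    Assumption: `seed` is unpredictable before the claim exists and public
    afterwards, so anyone can recompute and audit the selection.
    """
    def score(model_id: str) -> bytes:
        material = seed + b"|" + claim_id.encode() + b"|" + model_id.encode()
        return hashlib.sha256(material).digest()

    # Sorting by hash gives an ordering that depends only on the inputs,
    # not on the order in which models were listed.
    return sorted(model_ids, key=score)[:k]
```

Because the ranking depends only on the seed, the claim, and each model's identifier, a model cannot improve its odds of reviewing a particular claim without knowing the seed in advance.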

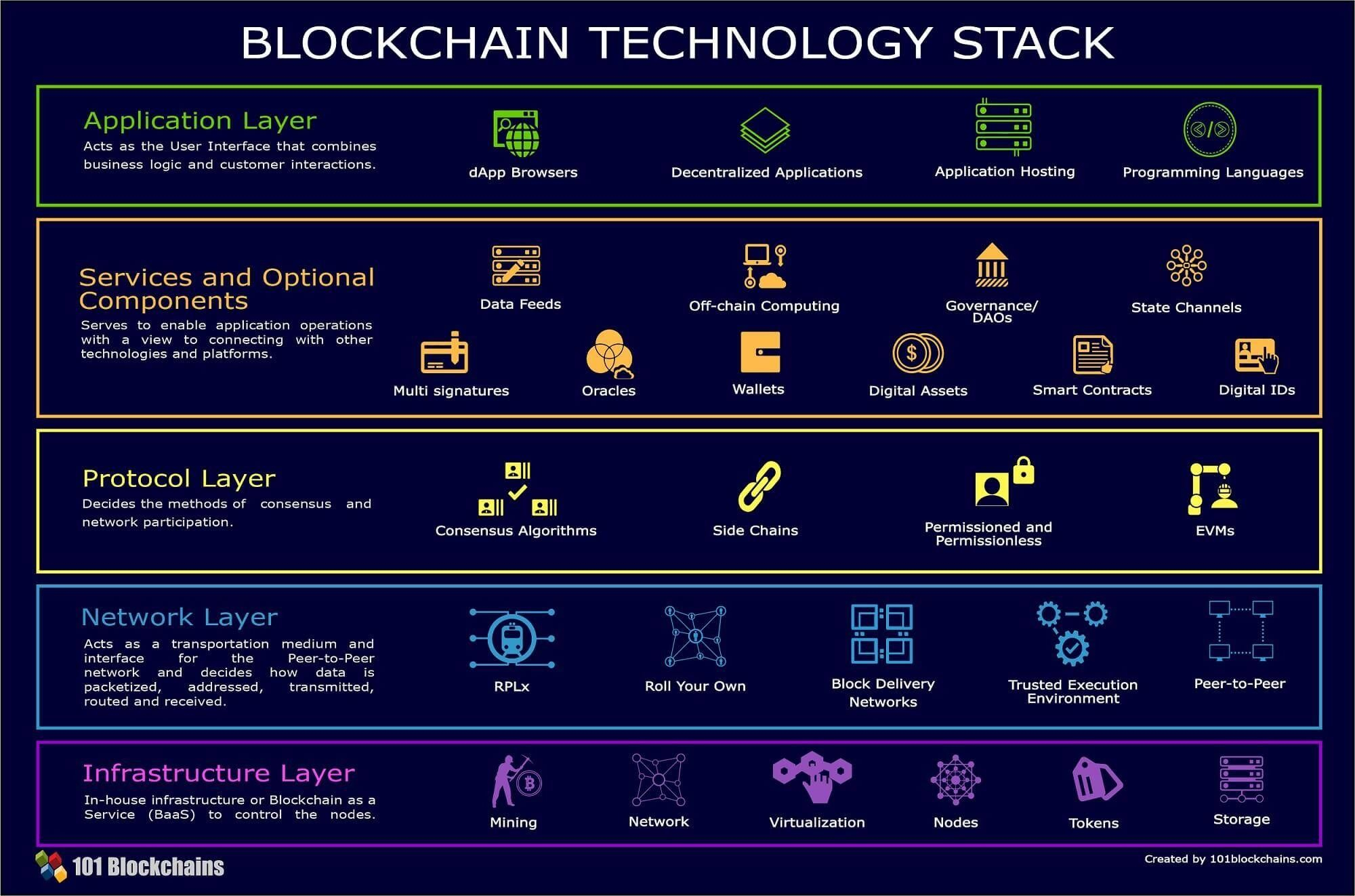

Mira’s architecture reflects its mindset. The network runs in two layers. The base layer is careful and stable. It handles consensus, staking, and final verification. Above it sits a faster layer focused on execution. This separation allows Mira to scale without sacrificing reliability. Speed and trust no longer fight each other. They live in different places.

Cross-chain support was a natural step. Mira does not assume one blockchain will dominate. Verified results are designed to travel. Proofs can be used across different networks and applications. This turns Mira into shared infrastructure rather than a closed system. It becomes something others can build on quietly.

The token plays a practical role. Participants stake value to verify claims. If they act dishonestly, they lose. If they are consistently accurate, they earn. Models that produce low-quality or misleading evaluations become expensive to operate. Over time, the economics shape behavior more effectively than rules ever could.
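
A rough sketch of that incentive loop follows. The numbers and the rule itself are assumptions for illustration: the article does not specify reward sizes, slashing rates, or how dishonesty is detected, so this example simply treats a verdict that contradicts the final outcome as the penalized case. The `settle` function and its parameters are hypothetical.

```python
def settle(stakes, verdicts, outcome, reward=1.0, slash_rate=0.1):
    """Return updated stakes after one claim is finalized.

    stakes:   {verifier_id: staked amount}
    verdicts: {verifier_id: True/False verdict on the claim}
    outcome:  the final verified result for the claim
    reward and slash_rate are illustrative values, not protocol parameters.
    """
    updated = {}
    for verifier_id, stake in stakes.items():
        if verdicts.get(verifier_id) == outcome:
            # Accurate verifiers earn a flat reward.
            updated[verifier_id] = stake + reward
        else:
            # Wrong or missing verdicts cost a fraction of the stake.
            updated[verifier_id] = stake * (1 - slash_rate)
    return updated
```

Repeated over many claims, a verifier that is wrong more often than it is right sees its stake shrink, which is the sense in which poor evaluations become expensive to operate.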

Developers adopted Mira not because of ideology, but usefulness. Early integrations focused on simple APIs. Submit an AI output. Receive a verification result. As tools improved, more complex systems emerged. Risk engines. Autonomous agents with safety checks. Decision systems that pause when confidence drops. These were not marketing demos. They were solutions to real problems.
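
The "pause when confidence drops" pattern can be sketched without reference to any particular API. Everything named here is hypothetical: `VerificationResult`, `guarded_act`, and the `verify` callable stand in for whatever a real integration would use, and the threshold is an arbitrary example value.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VerificationResult:
    """Hypothetical shape of a verification response."""
    status: str        # "verified", "rejected", or "unresolved"
    confidence: float  # 0.0 to 1.0


def guarded_act(
    output: str,
    verify: Callable[[str], VerificationResult],
    act: Callable[[str], None],
    min_confidence: float = 0.8,
) -> str:
    """Run `act` only if the AI output is verified with enough confidence."""
    result = verify(output)
    if result.status == "verified" and result.confidence >= min_confidence:
        act(output)
        return "executed"
    # Anything else (rejected, unresolved, or low confidence) pauses the system.
    return "paused"
```

In use, `verify` would wrap the call that submits an AI output and returns a verification result, and `act` would be the downstream step, such as placing an order or sending a message.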

What makes Mira different is its honesty about limits. It does not promise perfect truth. It does not claim to eliminate bias. It accepts that uncertainty is part of intelligence. The goal is not to remove doubt, but to expose it clearly and price it fairly.

In a world racing to make AI faster and louder, Mira takes a slower path. It asks machines to prove what they say. It allows them to say “I’m not sure.” That may seem small. Over time, it may be the difference between systems we admire and systems we actually trust.