I am a motion designer. In the past I worked on post-production effects and 3D animation, and polishing a single high-quality shot could take several days. Now that I run a complete AIGC workflow, output no longer depends on sheer manual effort but on how precise my instructions are.

This article walks you through AIGC from scratch, and I believe you will take something useful away from it.

At this point, some students might ask: "Teacher, aren't you a Web3 blogger? Why are you talking about AIGC?"

Me: 🙂

What is AIGC?

AIGC stands for Artificial Intelligence Generated Content. It is a way of producing content with artificial intelligence, especially large generative AI models: you give the AI instructions, and it either creates entirely new content on its own or helps a human create it.

It is currently applied mainly in the following core fields:

Text generation: For example, having AI write novels, scripts, press releases, business copy, and even computer code.

Image generation: You only need to input a text description (like 'a cat playing guitar in space'), and the AI can create highly detailed illustrations, photos, or 3D renderings in seconds.

Video generation: Animating static images, or generating a coherent short video directly from a text description.

Audio generation: This includes AI singing, text-to-speech (TTS), and generating background music and songs to match a requested mood and style.

This article mainly focuses on the fields of image generation and video generation.

In practice, it only takes three steps:

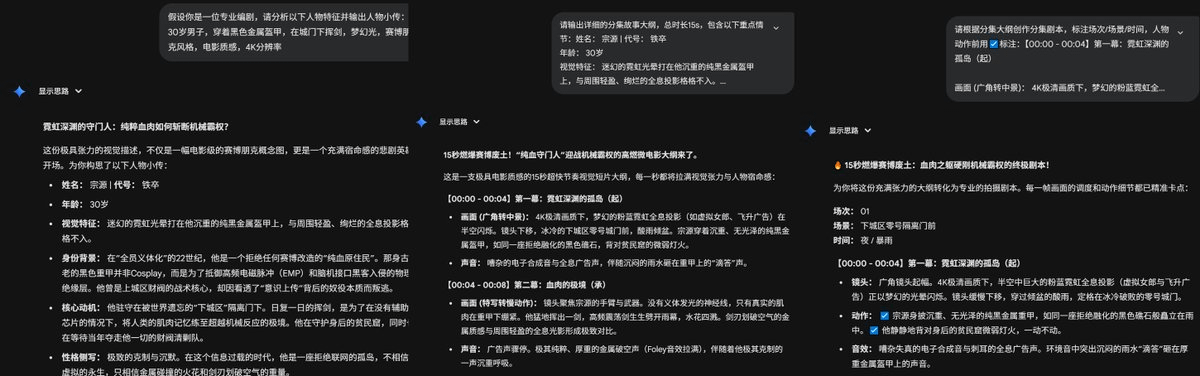

First, use Gemini to finalize the character profile and a script with action annotations.

Next, produce the character design images. The secret to keeping the character's appearance consistent is to first generate a half-body image that locks in the face, and then strictly use that image as the reference for the full-body, multi-angle, and scene images.

Finally, use Dream to animate the static images. A simple turn can be done with 'first frame-driven generation'; if you need precise control over the motion trajectory, you must use 'first and last frame generation'. Combine this with 'close-up shot' camera work and 'Tyndall effect' lighting for a cinematic look. And now that Seedance 2.0 is available, the AI brings its own directorial thinking, so working with it is smoother than ever.
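The choice between the two Dream modes can be written down as a small decision rule. This is a minimal Python sketch for planning shots; the function names and the `has_last_frame` check are my own illustrative assumptions, not anything provided by Dream, which you operate through its own interface.

```python
from enum import Enum


class VideoMode(Enum):
    """The two image-to-video modes named in the workflow."""
    FIRST_FRAME = "first frame-driven generation"
    FIRST_AND_LAST_FRAME = "first and last frame generation"


def choose_mode(needs_precise_trajectory: bool, has_last_frame: bool) -> VideoMode:
    """Simple moves need only a start frame; precise paths need both ends."""
    if not needs_precise_trajectory:
        return VideoMode.FIRST_FRAME
    if not has_last_frame:
        # Precise trajectory control means preparing the end-frame image first.
        raise ValueError("precise trajectory control needs an end-frame image")
    return VideoMode.FIRST_AND_LAST_FRAME


def shot_request(motion: str, needs_precise_trajectory: bool, has_last_frame: bool) -> dict:
    """Bundle the chosen mode with the close-up / Tyndall-effect wording."""
    mode = choose_mode(needs_precise_trajectory, has_last_frame)
    return {
        "mode": mode.value,
        "prompt": f"{motion}, close-up shot, Tyndall effect lighting",
    }


print(shot_request("the swordsman turns his head", False, False))
```

The point of raising an error is to catch the common mistake of asking for precise motion before the end frame has been generated.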

Chapter one: script creation

Step one: establish the character profile. Feed your story outline to the AI so that it clearly understands the protagonist's personality, background, and appearance.

Copy the prompt: 'Suppose you are a professional screenwriter. Please analyze the following character traits and output a character profile: [paste your story content]'.
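If you reuse the same prompts across many stories, it can help to keep them as templates. A minimal Python sketch, assuming you still paste the result into Gemini by hand; `character_profile_prompt` is a hypothetical helper, and the template text paraphrases the step-one prompt.

```python
# Step-one prompt kept as a reusable template.
PROFILE_TEMPLATE = (
    "Suppose you are a professional screenwriter. Please analyze the following "
    "character traits and output a character profile: {story}"
)


def character_profile_prompt(story: str) -> str:
    """Fill the step-one template with your story text (hypothetical helper)."""
    story = story.strip()
    if not story:
        raise ValueError("paste your story content first")
    return PROFILE_TEMPLATE.format(story=story)


print(character_profile_prompt("A young swordsman defends the city gate alone at night."))
```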

Step two: build the plot framework from the character profile and the main plot. Let the AI set the pacing and structure so that the plot stays coherent.

Copy the prompt: 'Please output a detailed episode-by-episode story outline, for example with a total duration of 5 minutes and about 1 minute per episode, including the following key content: [paste the character profile from step one]'.
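The durations in the step-two prompt decide how many episodes you get: 5 minutes at about 1 minute per episode works out to 5 episodes. A small Python sketch that does this arithmetic and fills in the prompt; rounding a partial episode up to a whole one is my own assumption, and the helper names are hypothetical.

```python
import math


def episode_count(total_minutes: float, minutes_per_episode: float) -> int:
    """Number of episodes needed, rounding a leftover partial episode up."""
    if total_minutes <= 0 or minutes_per_episode <= 0:
        raise ValueError("durations must be positive")
    return math.ceil(total_minutes / minutes_per_episode)


def outline_prompt(profile: str, total_minutes: float = 5, minutes_per_episode: float = 1) -> str:
    """Build the step-two prompt with the episode count spelled out."""
    n = episode_count(total_minutes, minutes_per_episode)
    return (
        "Please output a detailed episode-by-episode story outline: "
        f"{n} episodes, a total duration of {total_minutes:g} minutes, "
        f"about {minutes_per_episode:g} minute(s) per episode, "
        f"including the following key content: {profile.strip()}"
    )


print(outline_prompt("Zongyuan, a quiet swordsman who guards the city gate."))
```

Stating the episode count explicitly leaves the model less room to drift from the intended length.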

Review the outline first! If it doesn't interest you, don't move on. Only generate the script once you are happy with the outline.

Step three: with the first two steps done, have the AI write the script in a fixed format, labeling the scene, location, and time for every scene.

Copy the prompt: 'Please write episode scripts based on the episode outline, labeling scene/location/time, and use ☑️ to mark character actions: [paste outline]'.
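Because the step-three prompt asks for character actions marked with ☑️, you can pull those lines out of the returned script and use them as starting points for the image prompts in the next chapter. A minimal Python sketch, assuming each action sits on its own line that begins with the ☑️ mark; the model will not always follow that layout exactly, and `extract_actions` is a hypothetical helper.

```python
def extract_actions(script: str) -> list:
    """Return the text of every line that starts with the ☑️ action mark."""
    actions = []
    for raw_line in script.splitlines():
        line = raw_line.strip()
        # ☑️ is U+2611, optionally followed by the emoji variation selector U+FE0F.
        if line.startswith("\u2611"):
            text = line[1:].lstrip("\ufe0f").strip()
            if text:
                actions.append(text)
    return actions


sample = """Episode 1 / Scene 1 / City gate / Night
\u2611\ufe0f Zongyuan draws his sword and steps forward
ZONGYUAN: No one passes tonight.
\u2611 He raises the blade toward the gate"""

for action in extract_actions(sample):
    print(action)
```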

You can do this whole chapter directly in Gemini.

Chapter two: image generation

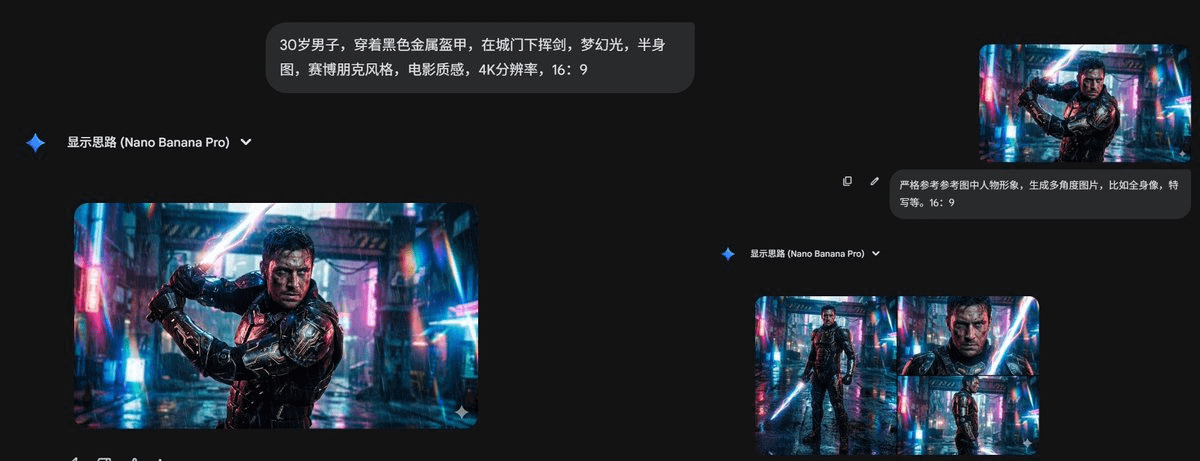

Now for the main event: entering the instructions. These instructions are what we usually call the prompt or keywords, and we jokingly call writing prompts "casting spells". Writing a prompt follows a very simple golden rule:

Main subject description + action + environment/scene + lighting and composition + art style words + image quality words.

Never write something meaningless like 'a good-looking modern scene'. Give specific details instead, such as 'a 30-year-old man wearing black metal armor, swinging a sword under the city gate, dreamy light, half-body image, cyberpunk style, cinematic quality, 4K resolution.'
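The golden rule is essentially six slots in a fixed order, joined into one prompt. One way to enforce it, as a sketch: a Python dataclass with one field per slot that refuses to render while any slot is empty. The field names are my own labels for the six parts, and the example values come from the armored-swordsman prompt.

```python
from dataclasses import dataclass, fields


@dataclass
class ImagePrompt:
    """One field per part of the golden rule, in the order they should appear."""
    subject: str
    action: str
    environment: str
    lighting_composition: str
    style: str
    quality: str

    def render(self) -> str:
        parts = [(f.name, getattr(self, f.name).strip()) for f in fields(self)]
        missing = [name for name, text in parts if not text]
        if missing:
            # An empty slot usually means the prompt is too vague to be useful.
            raise ValueError(f"empty prompt parts: {', '.join(missing)}")
        return ", ".join(text for _, text in parts)


prompt = ImagePrompt(
    subject="a 30-year-old man wearing black metal armor",
    action="swinging a sword",
    environment="under the city gate",
    lighting_composition="dreamy light, half-body image",
    style="cyberpunk style",
    quality="cinematic quality, 4K resolution",
)
print(prompt.render())
```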

Next, use Gemini to generate multi-angle images from the character image you created, adding the instruction 'strictly reference the character image in the reference picture'. Then generate images for the content of the script, again following the golden rule.
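To keep the character consistent, every follow-up prompt carries the same reference instruction. A Python sketch that generates one prompt per camera angle; the angle list is only my example, not a required set, and the reference image itself still has to be attached in Gemini.

```python
REFERENCE_PHRASE = "strictly reference the character image in the reference picture"

# Example angles only; pick whatever views your storyboard needs.
DEFAULT_ANGLES = ("front view", "side view", "back view", "three-quarter view")


def multi_angle_prompts(character: str, angles=DEFAULT_ANGLES) -> list:
    """One full-body prompt per angle, each starting with the reference instruction."""
    character = character.strip()
    return [f"{REFERENCE_PHRASE}, {character}, full-body image, {angle}" for angle in angles]


for p in multi_angle_prompts("the man in black metal armor"):
    print(p)
```

Putting the reference instruction first in every prompt makes it harder to forget when you generate dozens of images.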

Tips for filling in prompts for text-to-image or image-to-image generation:

Main subject description: character, age, hairstyle, hair color, emotional expression, clothing, and what they are doing.

Environment, scene, and composition: a coffee shop on a rainy day, front view, close-up of the character.

Lighting style: golden hour sunlight, Rembrandt lighting, dreamy lighting effects, volumetric light, natural light.

Art style words: cyberpunk, pixel art, Miyazaki style, Pixar style, ink wash painting, 3D style.

Image quality words: cinematic quality, 4K clarity, finely detailed, master-level works, masterpieces.
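The keyword lists above also work as a menu for exploring different looks. A Python sketch that keeps the subject and scene fixed and samples one lighting, style, and quality word at random; the random sampling is my own addition, and passing a seed makes a run repeatable.

```python
import random
from typing import List, Optional

# Keyword lists taken from the tips above.
LIGHTING = ["golden hour sunlight", "Rembrandt lighting", "dreamy lighting effects",
            "volumetric light", "natural light"]
STYLES = ["cyberpunk", "pixel art", "Miyazaki style", "Pixar style",
          "ink wash painting", "3D style"]
QUALITY = ["cinematic quality", "4K clarity", "finely detailed",
           "master-level works", "masterpieces"]


def style_variants(subject_and_scene: str, n: int = 3, seed: Optional[int] = None) -> List[str]:
    """Return n prompts that share the subject/scene but vary lighting, style, and quality."""
    rng = random.Random(seed)
    return [
        ", ".join([subject_and_scene, rng.choice(LIGHTING), rng.choice(STYLES), rng.choice(QUALITY)])
        for _ in range(n)
    ]


for v in style_variants("a girl in a coffee shop on a rainy day, front view, close-up", seed=7):
    print(v)
```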

I recommend using Gemini for generation.

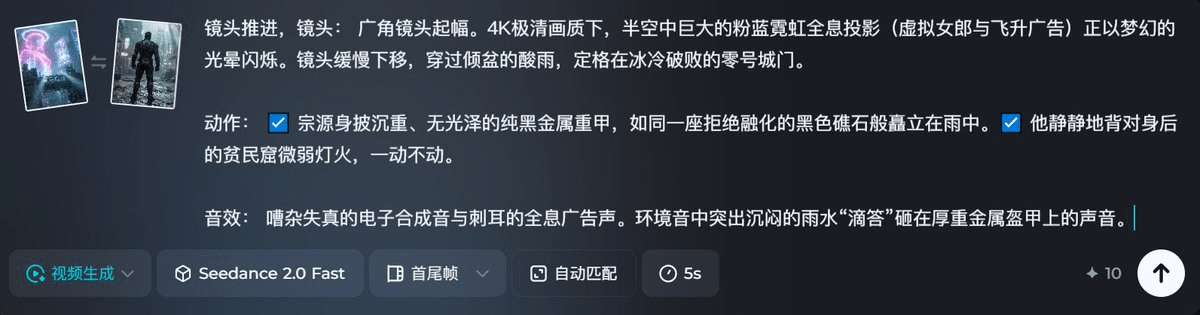

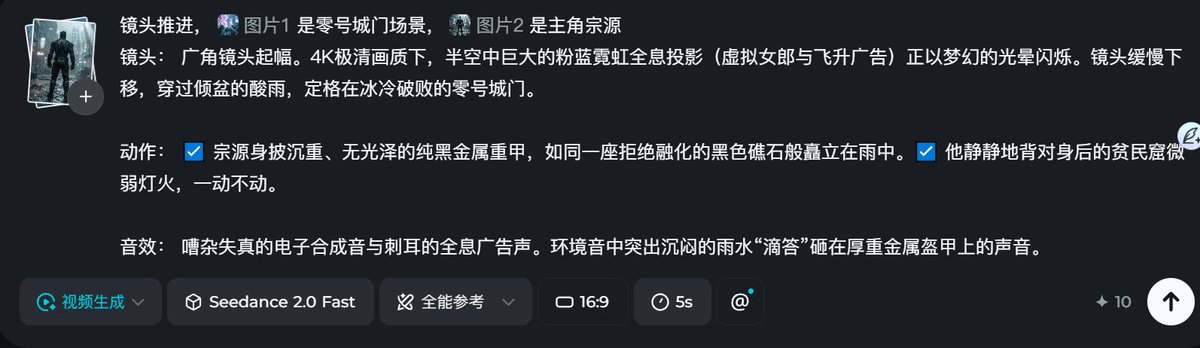

Chapter three: video generation

Open Dream, select Seedance 2.0, and start with the first and last frame mode.

Also try universal references. In universal reference mode you can type the @ shortcut in the prompt to tag a specific reference, which is very convenient. For example, here I tagged image 2 as the protagonist Zongyuan and image 1 as scene zero, the city gate. If you have no specific camera requirements, universal reference mode lets the AI make full use of its directorial thinking.
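When several images are attached, it helps to write out which reference plays which role before typing the prompt. A Python sketch that assembles that text; the `@image1` spelling is an assumption for illustration only, since the tag Dream actually inserts when you type @ may look different in its editor.

```python
def reference_prompt(roles: dict, action: str = "") -> str:
    """List each reference image with its role (by image number), then the shot description."""
    if not roles:
        raise ValueError("attach at least one reference image")
    tags = "; ".join(f"@image{index} as {role}" for index, role in sorted(roles.items()))
    action = action.strip()
    return f"{tags}. {action}" if action else tags


print(reference_prompt(
    {2: "the protagonist Zongyuan", 1: "the city gate scene"},
    "Zongyuan walks slowly toward the gate",
))
```

Sorting by image number keeps the role list in the same order as the attachments, which makes mismatches easy to spot.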

Time is limited, so I only generated one segment here. After watching it, don't you think Seedance 2.0 is amazing?

After watching it, I believe everyone now knows how to approach the "Six Major Factions Attack the Bright Summit" creation campaign!

Summary

The real power of AIGC is that it breaks down professional barriers. Even people who can't draw can produce polished work, and when you hit a creative wall, it can offer completely unexpected angles, greatly boosting creative efficiency.

Binance Square articles can't include videos, so if you want to see the generated video, check the pinned post on my Twitter homepage.
