There was one night when I sat with Mira's benchmark suite longer than I had sat with the market on some of its most panicked days. Not because it was loud or exciting, but because after enough cycles, the thing that makes me stop is no longer a grand promise, but numbers dry enough to strip away every outer layer of paint. Maybe anyone who stays in this market long enough reaches that point, when instinct stops being led by narrative and starts being pulled toward the very real limits of engineering.

What caught my attention about Mira is that the project chose to begin where almost nobody wants to begin. Prover cost, latency, and memory are not the kind of things that are easy to make sound compelling, yet they are exactly the three places that decide whether a pipeline can survive. To be honest, zkML sounds beautiful when placed inside big visions about verifiable AI, about trust being replaced by proof, about a new infrastructure layer for intelligent systems. But I have seen too many technologies die quietly simply because the cost of execution exceeded what users, teams, and the product itself could bear.

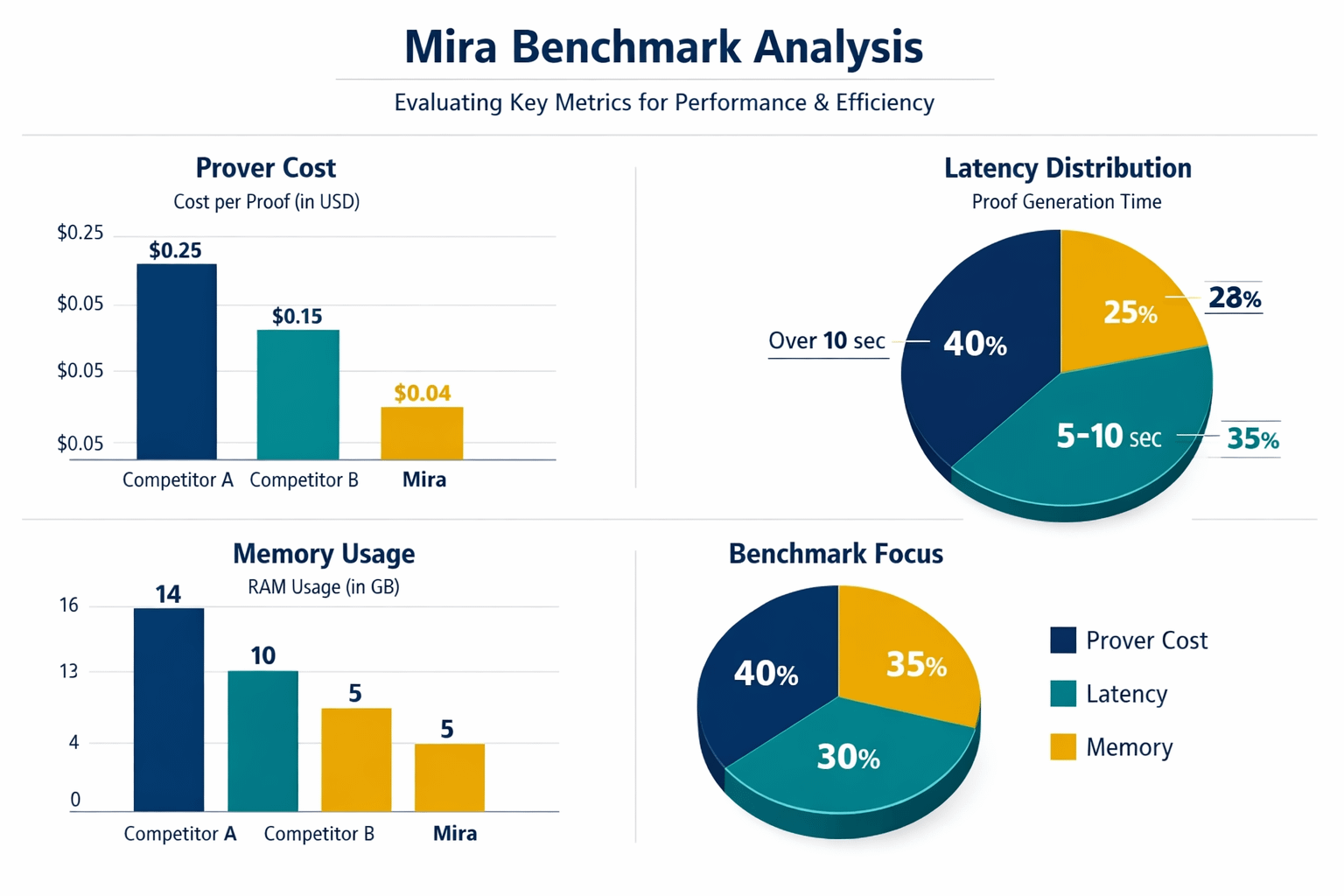

If you look closely at prover cost, I think this is the most important layer in Mira's benchmark, because it forces the project to answer the hardest question in a language that cannot be dodged. A proof is not just a technical achievement. It is cost, energy, time, and the pressure of repeating all of that thousands of times over if the system ever hopes to operate at scale. The market is often drawn to the sophistication of architecture diagrams, while longtime builders care about something much rougher: whether the operating bill can actually be paid. If every proof becomes a heavier burden as scale increases, then the larger the system gets, the more it starts to choke itself.

With Mira, what made it feel serious to me is that they placed proving cost exactly where it belongs, as a foundational constraint rather than a detail to optimize later. That is a very big difference between a team building a demo and a team thinking about production. Because in practice, nobody uses a pipeline simply because it is correct in theory. People use it when the value it creates is larger than the friction it introduces. Or, to say it more plainly, technology only deserves deployment when the price of correctness is not so high that it makes the whole system economically irrational. On that point, Mira's approach makes me feel they understand the real price of ambition.

Latency is another layer, and maybe an even more brutal one because it touches experience directly. A system can be correct, secure, and elegant in design, but if it responds too slowly, all of those strengths erode very quickly. I have seen technically strong products fail not because they were wrong, but because they arrived too late for the moment the user needed them. Mira is right to treat latency not as a footnote in a performance table, but as the center of usability itself. Few people expect that being only a few seconds slower than the acceptable threshold can turn a pipeline from valuable into expensive, from useful into annoying.

Then there is memory, the part outsiders often overlook but builders never dare to take lightly. Memory is where every architectural decision reveals itself most clearly. How the circuit is designed, how data moves through the pipeline, where optimization really sits, how disciplined the engineering actually is, all of it becomes visible once memory starts tightening. In Mira, the fact that memory stands alongside prover cost and latency shows that they do not think about performance in a one-dimensional way. To me, that is the mark of a team that understands bottlenecks in infrastructure never arrive alone. A little waste in memory can very quickly turn into higher machine costs, weaker stability, and a far more fragile path to scale than anyone first imagined.
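To make the three metrics a little more concrete, here is a minimal sketch of how one might measure them together in Python. It is not Mira's benchmark harness, and nothing here comes from the project itself: `measure`, `dummy_prove`, and the `usd_per_hour` machine price are all hypothetical names and numbers I made up for illustration, and the workload is a stand-in rather than a real prover.

```python
import time
import tracemalloc


def measure(prove, *, usd_per_hour, runs=3):
    """Time a hypothetical prove() callable and report the three metrics.

    Assumptions: cost is approximated as wall-clock time multiplied by an
    hourly machine price, and memory is the peak of Python-level allocations
    seen by tracemalloc (native allocations are not counted).
    """
    latencies = []
    peak_bytes = 0
    for _ in range(runs):
        tracemalloc.start()
        start = time.perf_counter()
        prove()
        latencies.append(time.perf_counter() - start)
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
        peak_bytes = max(peak_bytes, peak)

    mean_latency = sum(latencies) / len(latencies)
    return {
        "mean_latency_s": mean_latency,
        "peak_memory_bytes": peak_bytes,
        # Seconds of machine time converted to dollars at the assumed rate.
        "cost_per_proof_usd": mean_latency * usd_per_hour / 3600,
    }


def dummy_prove():
    # Stand-in workload, not a real prover: build a list and sum it.
    data = [i * i for i in range(200_000)]
    return sum(data)


if __name__ == "__main__":
    # The hourly price is an invented figure, not a quoted cloud rate.
    print(measure(dummy_prove, usd_per_hour=1.50))
```

Even in a toy like this, the three numbers move together: tracing memory slows the run, a slower run costs more machine time, and a larger peak pushes you toward a more expensive machine. That coupling is the reason measuring only one of them tells you so little.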

What I value most in Mira is not only the three metrics being measured, but the attitude behind the decision to measure them. After many years in this market, I have less faith in projects that try to persuade people with vision first and only return to execution later. Because execution is where every illusion gets filtered out. A practical benchmark does not make a project look more glamorous. It makes the project easier to question, easier to compare, and much harder to excuse when the results are not good enough. But maybe that willingness to step into discomfort is exactly what maturity looks like.

The biggest lesson I take from Mira is that technology only truly begins once it accepts being measured ruthlessly. Not everything that can be measured is valuable, but almost everything with long-term value must pass through a stage where it is forced to answer with concrete numbers. I have been around long enough to know that the most durable things rarely appear with the loudest applause. They appear in dry benchmark tables, where a team patiently grinds away at cost, latency, and memory until the system is strong enough to survive in the real world. The remaining question is whether Mira is stubborn enough to go all the way down the road it has chosen.