I spend a lot of time testing AI tools. Different models, different prompts, different outputs. And one thing keeps repeating itself.

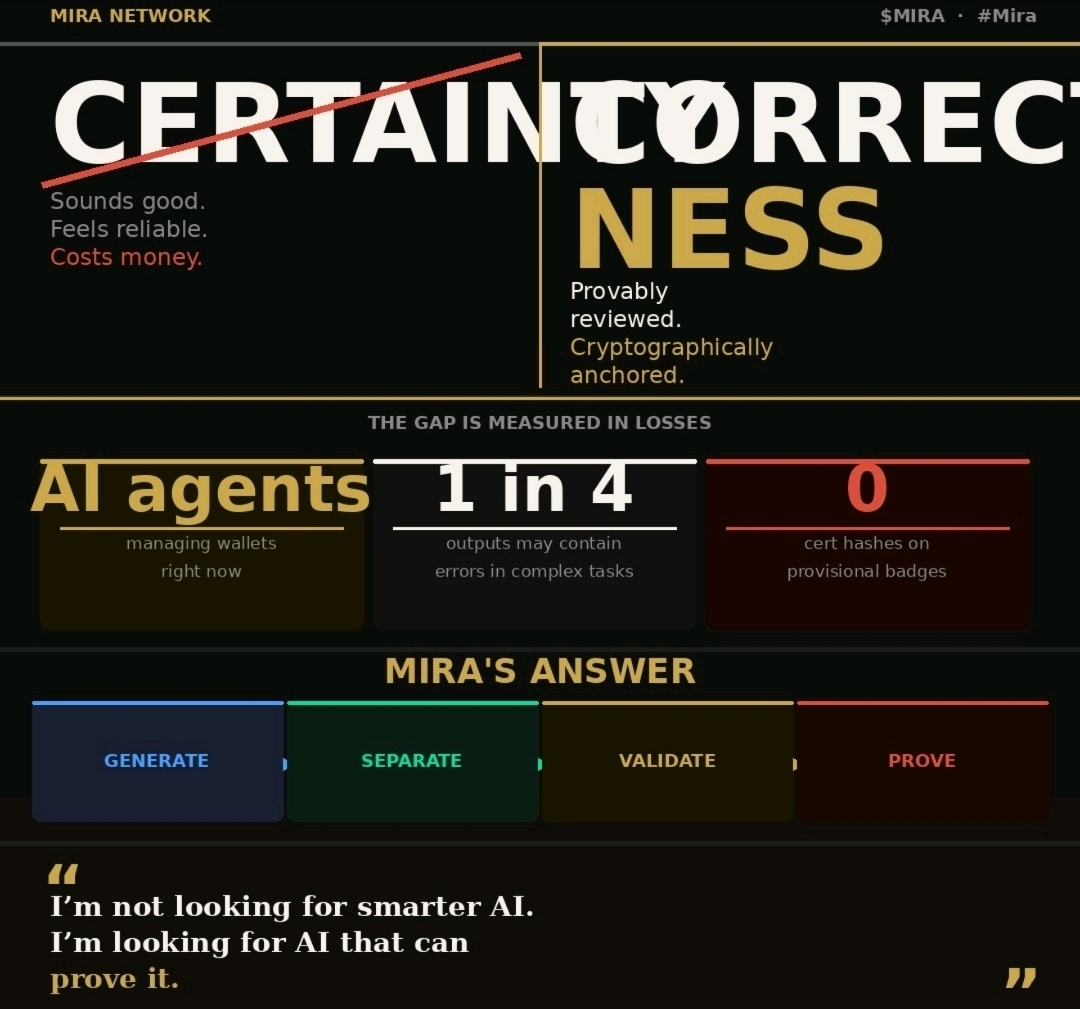

The answers often sound certain.

Clean structure. Confident tone. Convincing logic. But when I slow down and actually check things, small cracks appear. A statistic slightly off. A conclusion stretched too far. Sometimes just subtle bias hiding inside an otherwise good explanation.

That’s when I started looking more closely at $MIRA Network.

What caught my attention is that Mira doesn’t assume AI outputs should be trusted immediately. The system treats every response as something unfinished. Almost like a draft waiting for review.

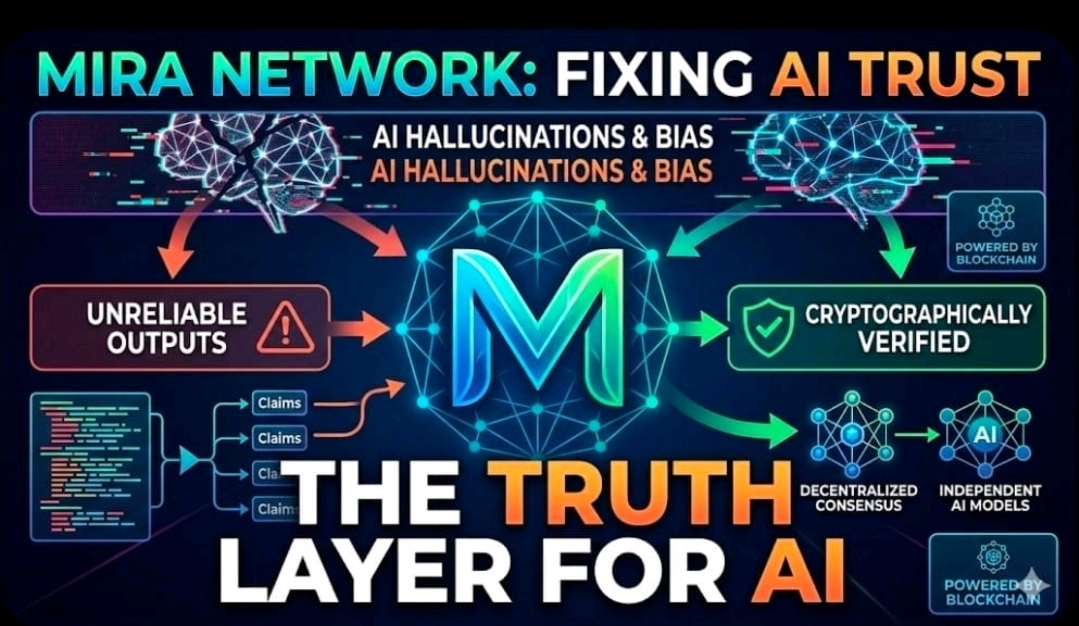

Instead of accepting a single answer, the network breaks the output into smaller claims. Each claim can then be examined independently. Different models. Different validators. Multiple perspectives looking at the same statement.

Only after enough agreement forms does the system move closer to calling the information verified.
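To make that flow concrete for myself, I sketched it in Python. This is only a toy illustration of the idea, not Mira's actual implementation: the names `split_into_claims`, `verify_output`, and `ClaimResult`, the one-sentence-per-claim splitting, and the two-thirds agreement threshold are all my own assumptions, and the "validators" are simple stand-in functions rather than real models or nodes.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical validator: takes a claim and returns True if it accepts it.
# In a real network this would be an independent model or node.
Validator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    verified: bool


def split_into_claims(output: str) -> List[str]:
    # Naive assumption: each sentence is one independently checkable claim.
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_output(
    output: str,
    validators: List[Validator],
    threshold: float = 2 / 3,  # assumed agreement level, not a documented value
) -> List[ClaimResult]:
    results = []
    total = len(validators)
    for claim in split_into_claims(output):
        # Every validator examines the same claim independently.
        approvals = sum(1 for validate in validators if validate(claim))
        verified = total > 0 and approvals / total >= threshold
        results.append(ClaimResult(claim, approvals, total, verified))
    return results


# Toy validators: two reject anything mentioning "cheese", one accepts everything.
validators = [
    lambda claim: "cheese" not in claim,
    lambda claim: "cheese" not in claim,
    lambda claim: True,
]

answer = "Water boils at 100 C at sea level. The moon is made of cheese"
results = verify_output(answer, validators)
for r in results:
    print(f"{r.claim!r}: {r.approvals}/{r.total} verified={r.verified}")
```

The point of the sketch is that one overly agreeable validator cannot push a weak claim through on its own. The claim only counts as verified once enough independent checks agree.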

To me that feels closer to how real knowledge works. Not one voice declaring the truth, but multiple checks forming confidence over time.

The decentralized structure matters too. Verification isn’t controlled by a single authority. Independent validator nodes participate in the process, which reduces the risk of a single model’s bias shaping the outcome.

There’s also a transparency layer built on blockchain infrastructure. Validation activity, confirmation records, and participation data are all recorded on a ledger. That makes the process observable rather than hidden inside a black box.

The $MIRA token ties into that ecosystem as well. Staking, validator participation, and governance decisions are all connected through economic incentives. When participants commit resources to the network, accuracy becomes something they are financially motivated to protect.

I also find the hybrid security approach interesting. It combines elements of computational contribution with staking mechanics, as a way of balancing technical verification with economic alignment across the network.

Where this becomes really important is in real-world applications. Think about healthcare analysis, financial compliance, legal document review, enterprise risk modeling. In those areas, a confident AI answer isn’t enough. The output needs to stand up to scrutiny.

That’s why the concept behind @Mira - Trust Layer of AI resonates with me.

AI capability is already improving fast.

But intelligence without verification can scale mistakes just as quickly.

What the ecosystem really needs now is something different.

Systems that don’t just generate answers.

Systems that can prove them.