Imagine a future where artificial intelligence systems assist judges in analyzing cases, reviewing evidence, and suggesting rulings. This is not entirely science fiction: some systems already support legal work today and can analyze large volumes of documents far faster than a human reviewer.

But this raises a question more serious than the technology itself:

What happens if artificial intelligence makes a mistake?

The problem with many modern AI systems is that they operate like a "black box." They present the final result with high confidence, but it can be difficult to trace how the system reached that decision.

In everyday applications, a mistake may be tolerable…

But in fields like law or healthcare, a mistake can be extremely costly.

This is where a new concept in the world of artificial intelligence comes in: the Trust Layer.

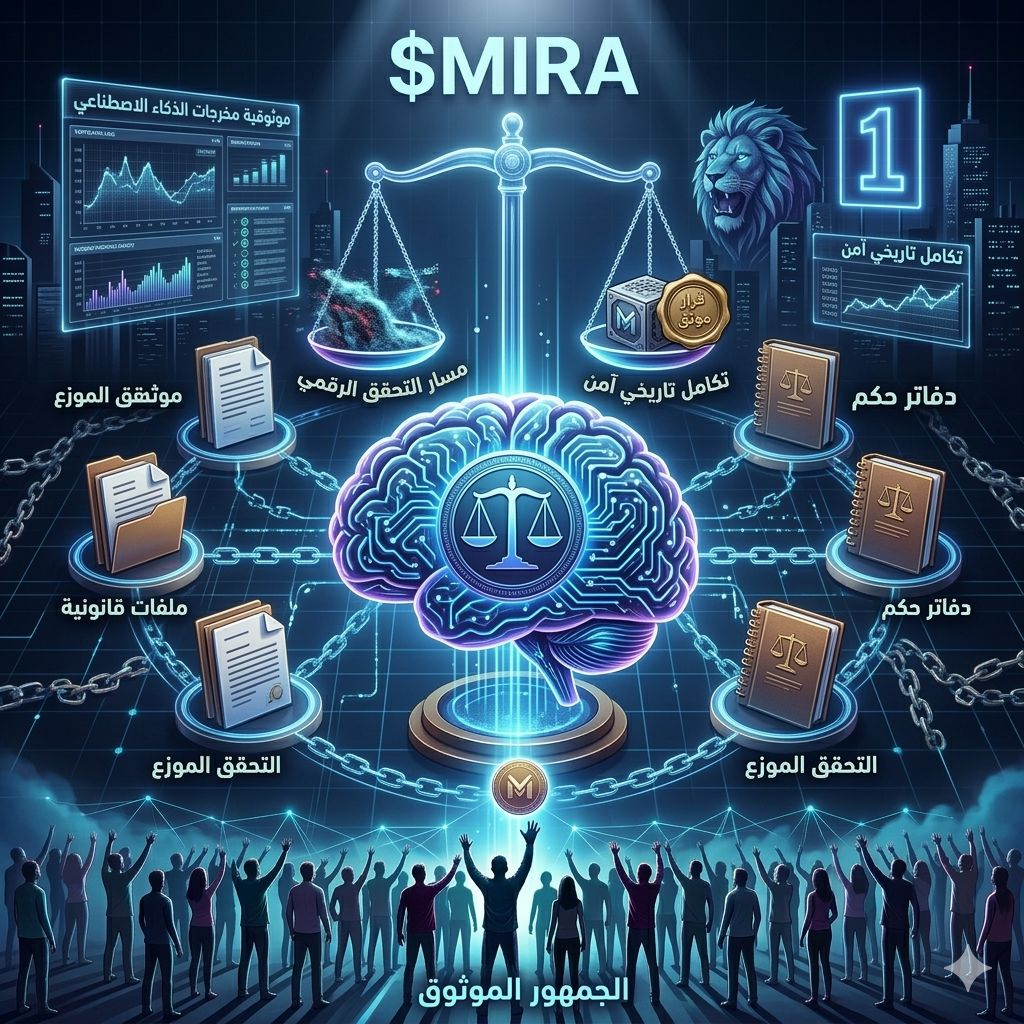

Rather than relying on users' trust in the model itself, projects like $MIRA are trying to build a verification layer on top of artificial intelligence.

This layer focuses not only on the result, but also on the reasoning process itself:

🔍 How did the system arrive at this result?

⚙️ Was the data used correctly?

⛓️ And can this path be recorded on the blockchain to ensure it is not tampered with?
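To make the tamper-evidence idea concrete, here is a minimal sketch of a hash chain over reasoning steps. It is an illustration only and not $MIRA's actual protocol: the function names (`record_trace`, `verify_trace`), the step format, and the `anchored_hash` parameter are all hypothetical. The assumption is that only the final hash would be published somewhere immutable, such as a blockchain, while the full trace is stored elsewhere.

```python
import hashlib
import json

# Hypothetical starting value for the chain (assumption, not a real protocol constant).
GENESIS_HASH = "0" * 64


def step_hash(prev_hash, step):
    """Hash one reasoning step together with the hash of the step before it."""
    payload = json.dumps({"prev": prev_hash, "step": step}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def record_trace(steps):
    """Link each step to its predecessor so that later edits become detectable."""
    records = []
    prev = GENESIS_HASH
    for step in steps:
        current = step_hash(prev, step)
        records.append({"step": step, "prev": prev, "hash": current})
        prev = current
    return records


def verify_trace(records, anchored_hash=None):
    """Recompute every link; optionally compare the final hash to an anchored copy."""
    prev = GENESIS_HASH
    for rec in records:
        if rec["prev"] != prev or rec["hash"] != step_hash(prev, rec["step"]):
            return False
        prev = rec["hash"]
    # anchored_hash stands in for a value published on-chain (assumption).
    return anchored_hash is None or prev == anchored_hash


# Made-up example steps, for illustration only.
trace = record_trace([
    {"stage": "input", "detail": "case file received"},
    {"stage": "analysis", "detail": "precedents compared"},
    {"stage": "output", "detail": "suggestion drafted"},
])
anchor = trace[-1]["hash"]

print(verify_trace(trace, anchored_hash=anchor))  # True

trace[1]["step"]["detail"] = "precedents ignored"
print(verify_trace(trace, anchored_hash=anchor))  # False
```

Editing any recorded step changes its hash and breaks every link after it. Even if someone rebuilt the whole chain from altered steps, the final hash would no longer match the anchored one, which is the property the third question above is asking for.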

The idea here is not to replace judges with machines, but to build systems that can be reviewed and technically verified.

In this way, artificial intelligence becomes a powerful tool that assists in decision-making, backed by a system that ensures transparency and allows review.

Perhaps in the future the question will no longer be only:

"Is artificial intelligence smart enough?"

The more important question will be:

"Can we trust the way it thinks?"

💬 A question for you:

Could artificial intelligence someday become part of the judicial system? Or should critical decisions always remain in human hands?