I didn’t start thinking about verification because of some grand theory about artificial intelligence. It started with a small moment of doubt. I asked an AI system for something simple—nothing complicated, nothing controversial. The answer looked polished, confident, and perfectly structured. But it was wrong. Not obviously wrong. The kind of wrong that hides behind good grammar and convincing tone.

What bothered me wasn’t the mistake itself. Humans make mistakes all the time. What stayed with me was how difficult it was to know whether the answer was reliable without checking it somewhere else. If AI is supposed to move into more autonomous roles—helping with research, writing code, making operational decisions—how often are we supposed to double-check it? Every time?

That question kept pulling at me. If every AI answer needs verification, then the real bottleneck isn’t intelligence. It’s trust.

That line of thinking eventually led me to something called Mira Network, though I didn’t immediately understand what problem it was actually trying to solve. At first glance it looked like another blockchain project mixed with artificial intelligence. But the more I looked at it, the more it felt like it was addressing a quieter problem that sits underneath most AI conversations: the gap between generating information and being able to rely on it.

Large language models are impressive, but they operate on probabilities. They predict what words should come next based on patterns in data. That makes them incredibly good at producing coherent answers, but coherence and correctness are not the same thing. A model can sound absolutely certain while quietly fabricating details. The industry calls these hallucinations, but the word almost makes them sound harmless.

The uncomfortable truth is that the more convincing AI becomes, the harder it becomes to notice when it’s wrong.

For a while I assumed the solution would simply be better models. Bigger training sets, better architecture, more compute. Eventually the errors would shrink enough that we could trust the outputs most of the time.

But that assumption started to feel fragile. Even very advanced systems still produce mistakes. Not because they’re poorly built, but because prediction systems don’t inherently know the difference between speculation and fact. They’re designed to generate plausible language, not to prove truth.

That realization made me look at Mira differently.

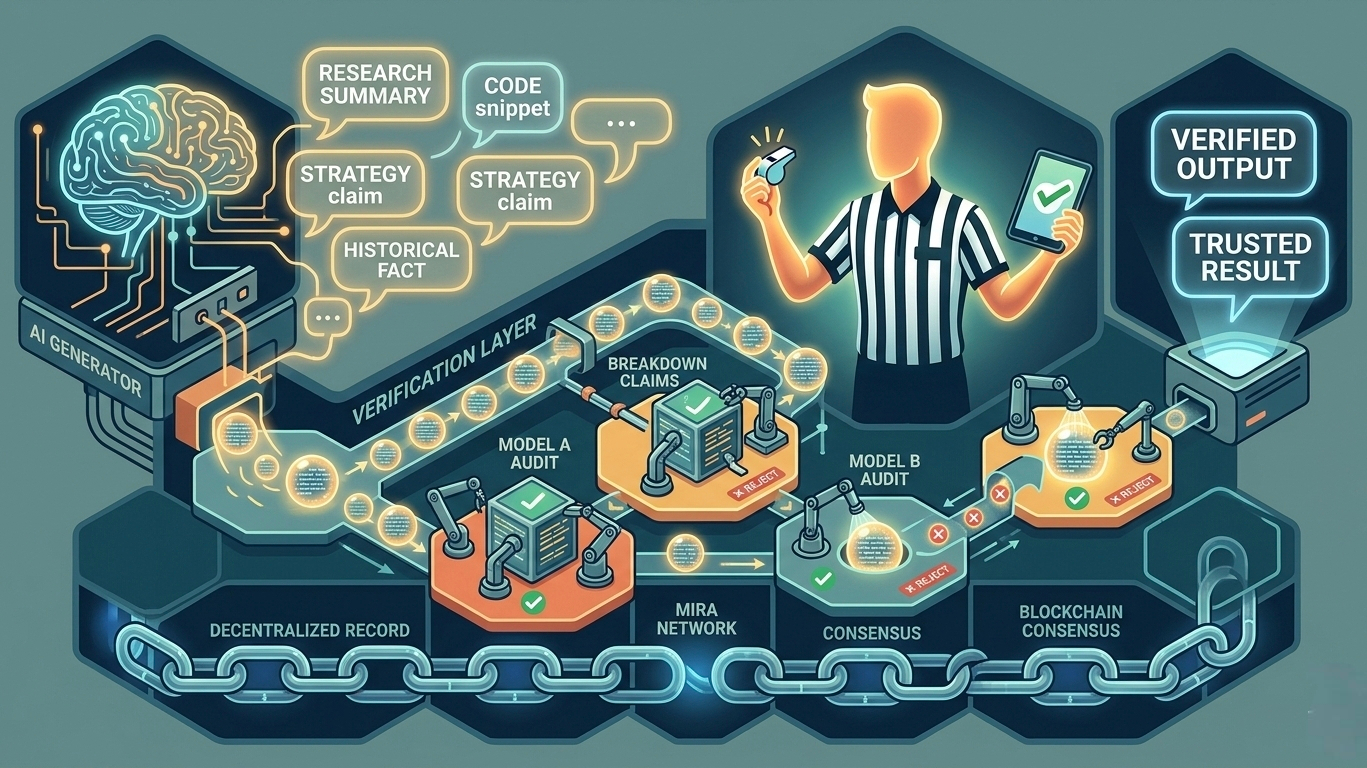

Instead of trying to make a single AI perfectly reliable, Mira seems to treat reliability as something established after the answer is generated. The system doesn’t assume the output is correct. It treats the output as a set of claims that need to be checked.

That shift sounds subtle, but it changes the architecture entirely.

A complex AI response can be broken into smaller statements—claims that can be evaluated individually. Those claims are then sent through a network where independent AI systems attempt to verify them. Rather than trusting the original model, the network asks multiple models whether the statements hold up.

In other words, the AI answer gets audited.
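To make the audit idea concrete for myself, I sketched it in a few lines of Python. This is only my own toy illustration, not Mira’s actual protocol: the sentence-based `split_into_claims`, the `Verifier` type, and the simple majority rule are all assumptions I made up for the example.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical verifier: takes a claim, returns True if it judges the claim to hold.
Verifier = Callable[[str], bool]

def split_into_claims(answer: str) -> List[str]:
    """Naive decomposition: one claim per sentence (an assumption for this sketch)."""
    return [s.strip() for s in answer.split(".") if s.strip()]

def audit(answer: str, verifiers: List[Verifier]) -> Dict[str, bool]:
    """Ask every verifier about every claim and keep the majority verdict."""
    verdicts = {}
    for claim in split_into_claims(answer):
        votes = Counter(v(claim) for v in verifiers)
        verdicts[claim] = votes[True] > votes[False]
    return verdicts

# Toy verifiers standing in for independent models.
known_facts = {"Paris is the capital of France"}
strict = lambda claim: claim in known_facts
lenient = lambda claim: True  # accepts everything, so it can be outvoted
also_strict = lambda claim: claim in known_facts

result = audit(
    "Paris is the capital of France. The Seine flows through Berlin.",
    [strict, lenient, also_strict],
)
```

Here the first claim passes unanimously and the second is rejected two to one, even though one verifier waved it through. The original answer is never trusted as a whole; each statement stands or falls on its own.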

At first I wondered why this had to involve a decentralized network at all. If verification is the goal, couldn’t a single trusted system do the job? A large company could run a verification model internally and provide certified outputs.

But the more I thought about it, the more that solution started to look like another black box. If one entity controls both the model and the verification layer, then we’re simply shifting trust from one opaque system to another. The user still has to believe someone’s internal process.

Mira seems to approach this differently. Instead of one verifier, it distributes the process across a network where multiple participants evaluate the claims. The results are recorded through blockchain consensus, which means the verification process becomes visible and tamper-resistant rather than hidden behind an API.

The blockchain piece initially sounded like technical decoration, but in this context it plays a coordination role. It allows many independent participants to contribute verification results while keeping a shared record of what the network agreed on.

The system becomes less like a single judge and more like a panel.

But then another question appeared: why would anyone spend resources verifying AI claims in the first place?

That’s where the economic layer enters the picture. Participants in the network are rewarded when their evaluations align with the final consensus. If a verifier’s judgment matches what the network settles on, it earns rewards. If it doesn’t, it earns nothing.

The effect is that verification becomes a market activity rather than a purely technical function. Independent operators can run verification models and earn incentives for contributing accurate judgments.
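A rough way to picture the incentive is a single settlement round. The sketch below is hypothetical: the fixed `reward_pool`, the equal split, and the tie rule are my assumptions, and I don’t know how Mira actually sizes or distributes rewards.

```python
from typing import Dict

def settle_round(votes: Dict[str, bool], reward_pool: float) -> Dict[str, float]:
    """Split reward_pool equally among verifiers whose vote matches the majority."""
    yes = sum(votes.values())
    consensus = yes > len(votes) - yes  # ties resolve to False in this sketch
    winners = [name for name, vote in votes.items() if vote == consensus]
    share = reward_pool / len(winners) if winners else 0.0
    return {name: (share if name in winners else 0.0) for name in votes}

# Hypothetical node names; two agree, one dissents.
payouts = settle_round(
    {"node_a": True, "node_b": True, "node_c": False},
    reward_pool=10.0,
)
```

In this round `node_a` and `node_b` each receive 5.0 and `node_c` receives nothing. Note what the rule really pays for: agreement with the majority, which only tracks accuracy to the extent that the majority tends to be right.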

The interesting part isn’t just the reward mechanism. It’s the behavioral shift it creates. Instead of relying on a small internal team to review information, the network encourages a distributed pool of verifiers who are financially motivated to be correct.

Truth, in a strange way, becomes something the system pays for.

Once that idea settled in my mind, I started thinking about the second-order effects. If verification networks like this actually become efficient, AI systems might begin treating verification as a standard step in their workflow.

Imagine an AI generating a research summary, automatically breaking its statements into claims, sending those claims through a verification network, and attaching cryptographic proof that the statements were validated before presenting the final result.

In that scenario, trust doesn’t come from believing the model itself. It comes from the fact that the model’s output passed through a verification process.
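As a minimal sketch of what “attaching proof” could look like, the snippet below bundles a summary with its per-claim verdicts and a SHA-256 digest of both. This is an assumption-heavy stand-in: a bare hash only lets a reader detect that the bundle was altered, and it is not a signature, a consensus record, or whatever format Mira actually uses. The function names are mine.

```python
import hashlib
import json
from typing import Dict

def _digest(summary: str, verdicts: Dict[str, bool]) -> str:
    """Hash a canonical JSON encoding of the summary and its verdicts."""
    canonical = json.dumps(
        {"summary": summary, "verdicts": verdicts}, sort_keys=True
    ).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()

def attach_record(summary: str, verdicts: Dict[str, bool]) -> Dict[str, object]:
    """Return the summary, its verdicts, and a digest covering both."""
    return {
        "summary": summary,
        "verdicts": verdicts,
        "digest": _digest(summary, verdicts),
    }

def digest_matches(package: Dict[str, object]) -> bool:
    """Recompute the digest and compare it with the one stored in the package."""
    return _digest(package["summary"], package["verdicts"]) == package["digest"]

package = attach_record(
    "Paris is the capital of France.",
    {"Paris is the capital of France": True},
)
tampered = dict(package, summary="Berlin is the capital of France.")
```

`digest_matches(package)` holds, while the tampered copy fails the check. In a real network the digest would need to be anchored somewhere the publisher cannot rewrite, which is the role the shared ledger is meant to play.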

That possibility also reveals the tradeoffs. Verification introduces cost and delay. Not every application will want that friction. A casual chatbot probably doesn’t need cryptographic proof for every sentence it generates. But in areas like finance, research, governance, or automated systems making real decisions, the cost of being wrong may be higher than the cost of verifying.
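The tradeoff can be written as a one-line expected-cost comparison. The numbers below are invented purely for illustration, and the rule assumes verification catches every error, which is generous.

```python
def worth_verifying(p_wrong: float, cost_of_error: float, cost_of_check: float) -> bool:
    """Verify when the expected loss from an unchecked error exceeds the check's cost."""
    return p_wrong * cost_of_error > cost_of_check

# Made-up figures: same 5% error rate and same check cost, very different stakes.
casual_chatbot = worth_verifying(p_wrong=0.05, cost_of_error=0.10, cost_of_check=0.02)
trade_execution = worth_verifying(p_wrong=0.05, cost_of_error=10_000.0, cost_of_check=0.02)
```

With these figures the chatbot case comes out `False` and the trading case `True`. The model and the check are identical in both; only the price of being wrong changes.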

Which suggests that Mira isn’t trying to replace AI systems. It’s trying to sit underneath them as a reliability layer that some applications will choose to use.

The design also raises deeper questions about how information gets validated at scale. Even if verification is decentralized, the network still needs rules. It needs to decide how claims are structured, which models can participate, and how disagreements between verifiers are resolved.

Those choices quietly turn governance into part of the product.

The moment a network decides how consensus around information works, it begins shaping the definition of credible knowledge within that system. That may not matter at small scale, but if a verification network became widely used, those governance decisions could carry real influence.

Another uncertainty sits inside the models themselves. Mira distributes verification across multiple AI systems to avoid relying on a single one. But if those systems share similar training data or biases, they might still converge on the same incorrect conclusions.

Decentralization reduces single points of failure, but it doesn’t automatically guarantee diversity of perspective.

So the long-term strength of the system may depend less on how many verifiers exist and more on how different they are from each other.
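A small calculation shows why difference matters more than headcount. It compares two idealized extremes that I am assuming for the sake of argument: verifiers that err completely independently, and verifiers that always make the same mistakes. Real networks would sit somewhere in between.

```python
from math import comb

def majority_wrong_if_independent(n: int, e: float) -> float:
    """Probability that more than half of n independent verifiers err,
    when each one errs with probability e."""
    need = n // 2 + 1
    return sum(comb(n, k) * e**k * (1 - e) ** (n - k) for k in range(need, n + 1))

error_rate = 0.1  # assumed per-verifier error rate
independent = majority_wrong_if_independent(5, error_rate)

# If all five verifiers share the same blind spots, they fail together,
# so the panel is wrong exactly as often as any single member.
fully_correlated = error_rate
```

With five independent verifiers at a 10% error rate, the majority is wrong less than 1% of the time (about 0.0086). With five perfectly correlated ones it is still wrong 10% of the time, no matter how many more are added.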

The more I think about it, the less this feels like a purely technical experiment and the more it feels like an infrastructure question. Not “Can AI generate answers?” but “What systems do we need around AI to make those answers dependable?”

Mira proposes one possible answer: treat AI outputs as claims that must earn credibility through verification.

Whether that approach becomes standard practice is still an open question. For now, I’m mostly watching for signals. I want to see whether verification through networks like this actually becomes cheaper than manual fact-checking. I want to see whether independent participants truly join the ecosystem or whether a few dominant actors end up controlling the process. And I’m curious whether developers start building applications that rely on verified AI outputs rather than raw ones.

Those signals will probably matter more than any early promises.

Because the real test isn’t whether AI can produce information faster.

It’s whether we can build systems that make that information trustworthy enough for people—and eventually machines—to act on.
