Not honest like a person. More like… consistent. Traceable. Something you can actually reason about without needing to know the whole backstory.

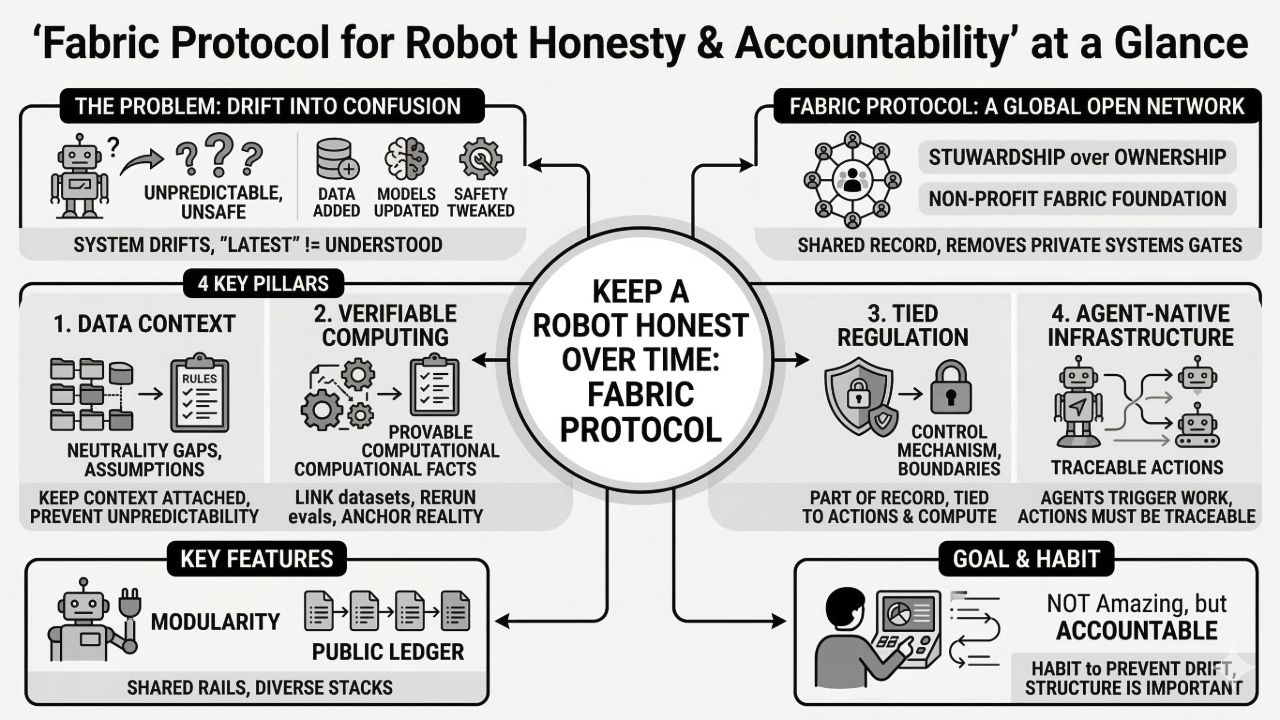

Because robots, especially general-purpose ones, don’t stay still. They change constantly. New data gets added. Models get updated. Agents get improved. Safety rules get tweaked. And most of those changes are small enough that nobody feels the need to make a big announcement. But the system as a whole drifts.

After a while, you’re not even sure what you’re looking at anymore. You’re looking at the latest version of something, but “latest” doesn’t mean “understood.”

That’s where Fabric Protocol feels like it’s trying to land.

It’s described as a global open network supported by the non-profit Fabric Foundation. The protocol coordinates data, computation, and regulation through a public ledger, using verifiable computing and agent-native infrastructure. That’s a mouthful, but the underlying problem is pretty plain: robot development creates a lot of claims, and without structure, those claims become hard to verify.

People claim a model was trained on approved data.

People claim an agent operated within certain constraints.

People claim a safety evaluation was run and passed.

People claim a new update didn’t change behavior “in meaningful ways.”

And you can usually tell when you’re in trouble because those claims start to matter more than the code itself.

So Fabric’s idea of a public ledger reads like a way to give claims a backbone. Not just “someone said it,” but “here’s a record that can be checked.” It’s a shared place to write down what happened in a form that other participants—other teams, other labs, other organizations—can verify without needing access to private systems.
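To make "a record that can be checked" a bit more concrete, here is a minimal sketch of a tamper-evident claim log. It is not Fabric's actual ledger design, which the descriptions I've seen don't spell out. The `ClaimLedger` class, the `entry_hash` helper, and the claim fields are all made up for illustration. The only idea it shows is that each entry commits to the one before it, so quietly rewriting history becomes detectable.

```python
import hashlib
import json


def entry_hash(prev_hash: str, claim: dict) -> str:
    """Hash a claim together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "claim": claim}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ClaimLedger:
    """Toy append-only log (hypothetical): each entry commits to all earlier ones."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []  # list of (claim, hash) tuples

    def append(self, claim: dict) -> str:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        digest = entry_hash(prev, claim)
        self.entries.append((claim, digest))
        return digest

    def verify(self) -> bool:
        """Recompute every hash; an edited claim breaks the chain."""
        prev = self.GENESIS
        for claim, digest in self.entries:
            if entry_hash(prev, claim) != digest:
                return False
            prev = digest
        return True


ledger = ClaimLedger()
ledger.append({"who": "lab-a", "what": "trained model v3 on approved dataset"})
ledger.append({"who": "lab-b", "what": "safety evaluation run and passed"})
intact = ledger.verify()

# Rewriting an old claim while keeping its old hash is caught by verify().
ledger.entries[0] = ({"who": "lab-a", "what": "something else"}, ledger.entries[0][1])
tampered_ok = ledger.verify()
```

A real shared ledger would also need signatures and some agreement among participants about which copy of the log is the true one. This toy version only covers the "did someone edit it afterwards" part.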

That becomes important when robots are built collaboratively. Not only because collaboration is messy, but because collaboration multiplies ambiguity. Every hand-off introduces a little uncertainty. Every new contributor changes the shape of the system. Every new integration adds another place where the story can get lost.

Fabric tries to keep that story attached.

Data is the first piece. Robots learn from data, but data is rarely neutral. It comes with assumptions. It comes with gaps. It comes with “we collected this in these conditions” and “don’t use that segment, it’s corrupted” and “this is only safe for training, not for deployment.” Those details often live in people’s heads.

If you lose that context, the robot doesn’t just become worse. It becomes unpredictable. And unpredictable is usually what people mean when they say “unsafe,” even if they don’t phrase it that way.
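One way to picture getting those details out of people's heads is to attach them to the dataset as structured fields instead of tribal knowledge. This is only a sketch under my own assumptions: `DatasetRecord`, `check_use`, and every field name are hypothetical, not part of any Fabric schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetRecord:
    """Hypothetical record that keeps a dataset's context next to the data."""
    name: str
    collection_conditions: str
    allowed_uses: frozenset          # e.g. {"training"}
    excluded_segments: tuple = ()    # segments known to be corrupted


def check_use(record: DatasetRecord, use: str, segment: str) -> bool:
    """Return True only if this segment may be used for this purpose."""
    if segment in record.excluded_segments:
        return False
    return use in record.allowed_uses


warehouse_runs = DatasetRecord(
    name="warehouse-runs",
    collection_conditions="indoor, daylight, flat floors",
    allowed_uses=frozenset({"training"}),
    excluded_segments=("segment-07",),
)

ok_training = check_use(warehouse_runs, "training", "segment-01")
ok_corrupted = check_use(warehouse_runs, "training", "segment-07")
ok_deployment = check_use(warehouse_runs, "deployment", "segment-01")
```

The point is less the code than the habit: "don't use that segment" and "training only" become things a pipeline can check, not things someone has to remember.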

Computation is the second piece. The same model name can hide a thousand differences: hyperparameters, environment versions, training schedules, filtering steps, even the order of operations. And because computation is expensive and time-consuming, people rarely rerun it just to confirm details. They trust the record they have.

But if the record is incomplete or private, trust becomes fragile.

That’s why verifiable computing is interesting here. It suggests Fabric wants to make certain computational facts provable. Not in the “prove everything” sense. More in the “enough proof to anchor reality” sense. If a run happened, you can verify it happened. If it used a certain dataset, you can verify that link. If an evaluation was done under certain constraints, you can verify those conditions were real, not just written in a report.
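A very rough sketch of the "verify that link" part, assuming nothing about how Fabric actually does it: record a digest of the dataset and the configuration when a run happens, then check later whether a given dataset matches what was recorded. The function names and record fields are hypothetical. Note this is only a hash commitment. It shows that a record points at specific bytes, not that the computation itself was executed correctly, which is what verifiable computing in the stricter sense is about.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def make_run_record(run_id: str, dataset_bytes: bytes, config: dict) -> dict:
    """Record what a run used, as digests rather than the raw contents."""
    config_bytes = json.dumps(config, sort_keys=True).encode("utf-8")
    return {
        "run_id": run_id,
        "dataset_sha256": sha256_hex(dataset_bytes),
        "config_sha256": sha256_hex(config_bytes),
    }


def verify_dataset_link(record: dict, candidate_bytes: bytes) -> bool:
    """Check whether the candidate dataset is the one the run recorded."""
    return record["dataset_sha256"] == sha256_hex(candidate_bytes)


approved = b"frame-001,frame-002,frame-003"
record = make_run_record("run-42", approved, {"lr": 0.001, "epochs": 10})

same_data = verify_dataset_link(record, approved)
other_data = verify_dataset_link(record, b"frame-001,frame-002,frame-999")
```

Because only digests are stored, someone outside the team can check the link without ever seeing the private dataset, as long as whoever holds the data can produce matching bytes.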

Then regulation comes in. And regulation is really about boundaries. Permissions. Constraints. What’s allowed. What’s not allowed. When a human must be involved. Where the robot can operate. The thing is, regulation is usually treated as an external layer—like a set of guidelines people hope everyone follows.

But in real systems, “hope” isn’t a control mechanism.

Fabric’s approach implies regulation should be part of the coordinated record. Not just written down somewhere, but tied to actual actions and computations. That way you can say, “this agent ran this task under this rule set,” instead of vaguely assuming it.
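Here is a small sketch of what "this agent ran this task under this rule set" could look like as data. Everything in it is an assumption for illustration: the rule format, the `is_permitted` check, and the record fields are invented, not taken from Fabric.

```python
import hashlib
import json


def ruleset_digest(rules: dict) -> str:
    """Stable fingerprint of a rule set, so a record can point at it."""
    encoded = json.dumps(rules, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def is_permitted(rules: dict, task: str, zone: str, human_present: bool) -> bool:
    """Toy policy check: zone must be allowed, some tasks need a human."""
    if zone not in rules["allowed_zones"]:
        return False
    if task in rules["human_required_tasks"] and not human_present:
        return False
    return True


def record_action(agent: str, task: str, zone: str,
                  human_present: bool, rules: dict) -> dict:
    """Bind the action, the decision, and the exact rule set together."""
    return {
        "agent": agent,
        "task": task,
        "zone": zone,
        "human_present": human_present,
        "ruleset_sha256": ruleset_digest(rules),
        "permitted": is_permitted(rules, task, zone, human_present),
    }


def was_run_under(record: dict, rules: dict) -> bool:
    """Check the record against a rule set someone claims was in force."""
    return record["ruleset_sha256"] == ruleset_digest(rules)


rules_v1 = {"allowed_zones": ["lab", "warehouse"],
            "human_required_tasks": ["deploy_update"]}
rules_v2 = {"allowed_zones": ["lab"],
            "human_required_tasks": ["deploy_update"]}

action = record_action("agent-7", "deploy_update", "warehouse", False, rules_v1)
```

In this example `action["permitted"]` comes out `False`, because the task needs a human and none was present. Checking the record against `rules_v2` fails too, since the fingerprint only matches the rule set that was actually used.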

The agent-native infrastructure part matters because agents are increasingly the ones doing the work. They move data. They trigger compute jobs. They schedule evaluations. They may even decide when to deploy an update. So if agents are part of the system, their actions need to be traceable in the same shared way.

Otherwise you get a modern version of the same old problem: “nobody knows why it changed, but it changed.”

The Fabric Foundation being a non-profit supporter plays into a different kind of honesty: institutional. If this is meant to be open infrastructure for many parties, people need to feel like it won’t suddenly become someone’s private gate. Governance is unavoidable. Standards change. Conflicts happen. But a foundation suggests stewardship over ownership, at least as a starting posture.

And modularity is what makes all this practical. Robotics is too diverse for one stack. Different bodies, different sensors, different use cases. If Fabric is modular, it can act like shared rails underneath many different implementations, rather than forcing everyone into the same shape.

So this angle isn’t about “robots becoming amazing.” It’s more about robots becoming accountable as they evolve. Making it easier to check what happened, what was used, what was enforced, and what claims are actually supported.

And I don’t think it ends with some clean, final state. It feels more like a habit you build into a system so it doesn’t drift into confusion. The kind of structure that only seems important once you’ve lived through the opposite for long enough.



I’ve been thinking about Fabric Protocol as “how do you keep a robot honest over time?”
