I want to believe in what Midnight is building. I really do. The problem it identifies is real, and anyone who has thought seriously about where blockchain infrastructure needs to go understands that the transparency-first model has limits. Public ledgers work well for trustless verification. They work poorly for anything involving sensitive commercial data, personal information, or heavily regulated organizations. Midnight looks at that gap and offers something specific: zero-knowledge proofs integrated into a programmable smart contract environment, a language that feels familiar to developers, and privacy treated as architecture rather than an afterthought. On paper, the case is coherent.

But there is a tension underneath all of this that I have not seen the project address honestly enough.

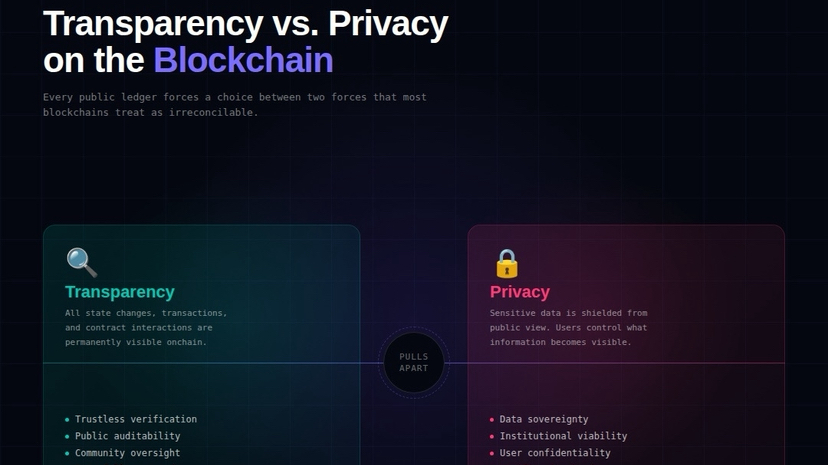

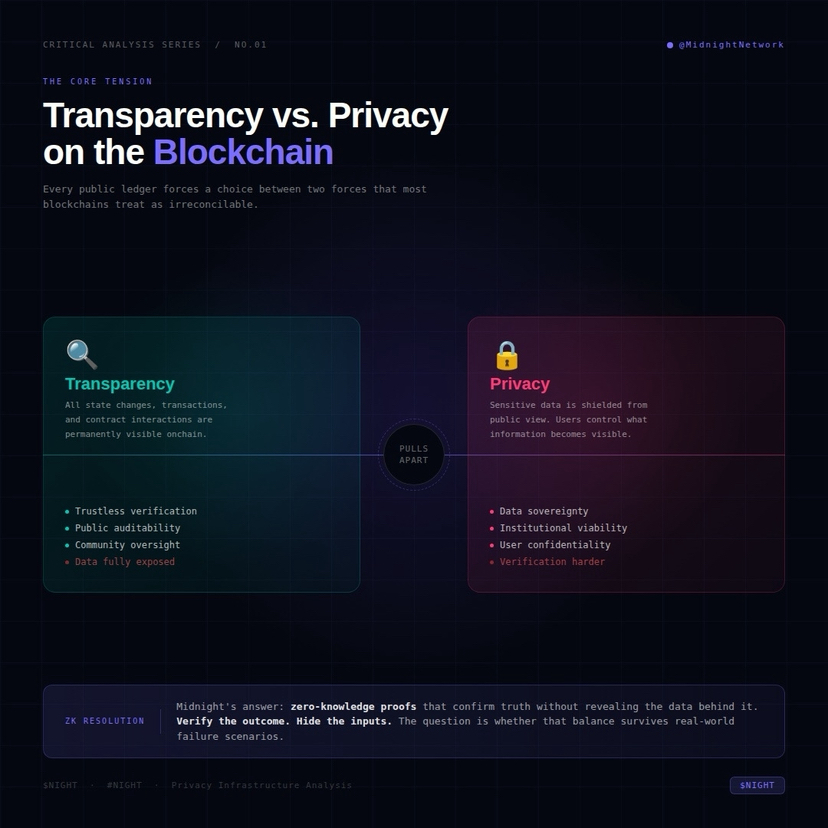

Privacy and verifiability are not just technical opposites. They are social opposites. And how Midnight navigates that opposition matters more than any specific cryptographic implementation.

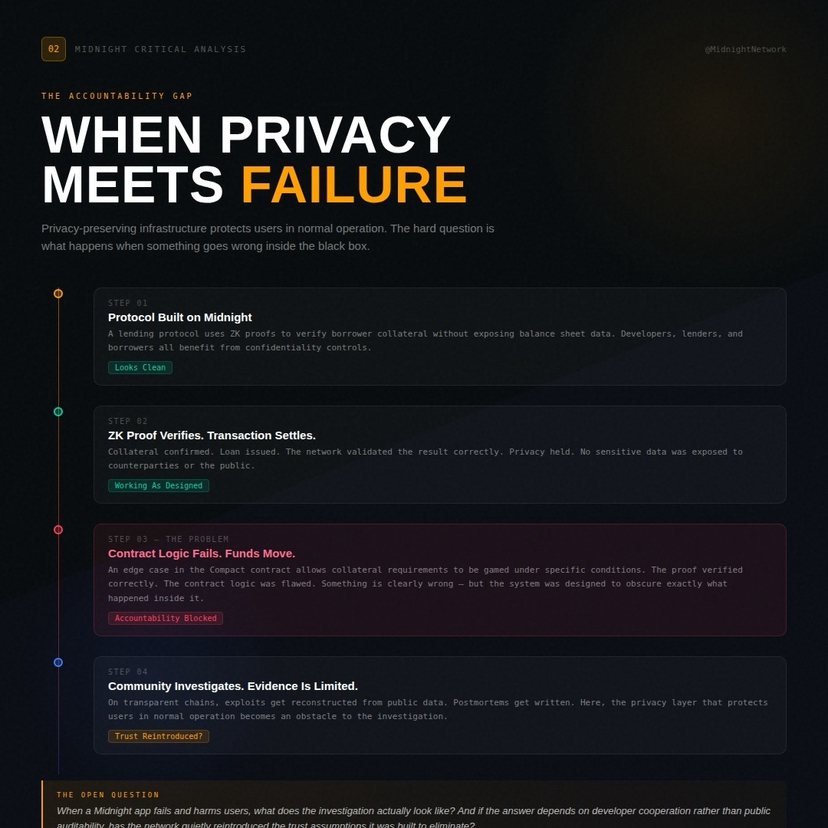

Here is what I mean. Imagine a lending protocol built on Midnight. A borrower can prove that they meet the collateral requirements without disclosing their full balance sheet. The lender gets confirmation without ever seeing the underlying information. Both parties benefit from this arrangement. The ZK proofs do their job. It sounds clean.

Now imagine that the same lending protocol is exploited. Perhaps there is a logic flaw, an edge case the developers did not foresee. Perhaps there is a subtle bug in the Compact contract that lets someone manipulate collateral verification under specific conditions. Funds move. Clearly, something went wrong. And now the community must investigate what happened inside a system designed specifically to obscure the details of what happens within it.

This is not a hypothetical edge case. It is the central design conflict of privacy-preserving financial infrastructure.

Traditional blockchains fail badly, but they are transparent about how they fail. Every transaction, every contract interaction, every state change is recorded on a public ledger that independent analysts can review. Exploits are reproducible. Post-mortem reports get written. The community learns because the evidence is visible. Midnight's privacy protections, by design, limit that visibility. The same features that protect user data in normal operation become an obstacle to accountability when something goes wrong.
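To make the contrast concrete, here is a minimal sketch. It is not Midnight's actual ledger format. It assumes a hypothetical shielded log that publishes only salted SHA-256 commitments to each transfer, and compares what an outside analyst can reconstruct from it with what a transparent log gives away for free. The helper names (`commit`, `replay_public`, `check_disclosure`) and the record layout are illustrative assumptions.

```python
import hashlib
import secrets


def commit(sender: str, receiver: str, amount: int, salt: bytes) -> str:
    """Salted SHA-256 commitment to a transfer (hypothetical record format)."""
    payload = f"{sender}|{receiver}|{amount}".encode()
    return hashlib.sha256(salt + payload).hexdigest()


# The same three transfers on both ledgers, including one that drains the pool.
transfers = [
    ("alice", "pool", 100),
    ("bob", "pool", 50),
    ("pool", "mallory", 150),
]

# Transparent ledger: every field is public.
public_log = [{"from": s, "to": r, "amount": a} for s, r, a in transfers]

# Shielded ledger (toy model): only commitments are public.
# The openings (fields plus salt) stay with the transacting parties.
openings = [(s, r, a, secrets.token_bytes(16)) for s, r, a in transfers]
shielded_log = [commit(s, r, a, salt) for s, r, a, salt in openings]


def replay_public(log):
    """An outside analyst rebuilds net balances from public data alone."""
    balances = {}
    for tx in log:
        balances[tx["from"]] = balances.get(tx["from"], 0) - tx["amount"]
        balances[tx["to"]] = balances.get(tx["to"], 0) + tx["amount"]
    return balances


def check_disclosure(log_entry, sender, receiver, amount, salt) -> bool:
    """An analyst can check a shielded record only if a party hands over its opening."""
    return commit(sender, receiver, amount, salt) == log_entry


balances = replay_public(public_log)
drain_confirmed = check_disclosure(shielded_log[2], *openings[2])
```

On the transparent log, anyone can run `replay_public` and see where the funds went. On the shielded log, the same conclusion is reachable only through `check_disclosure`, which needs an opening that some participant chooses to reveal.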

The project may respond that the ZK proofs themselves provide a verification layer, that the network can validate correctness without revealing information. But that answer sidesteps the harder question. A proof system verifies what it is programmed to verify. It does not detect what it was never designed to check. When a contract behaves unexpectedly, the question is not whether the proofs verify correctly. The question is whether the contract logic was sound from the start. And auditing logic whose execution you cannot fully inspect from the outside is a different and harder problem than auditing a transparent contract.
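A toy illustration of that gap follows. It is neither a zero-knowledge proof nor Compact code. It is plain Python that models only the information flow, in which the outside world learns a single boolean about a predicate and nothing about the private inputs. The predicate `collateral_ok_buggy`, its flaw (trusting a borrower-supplied price), and the value of `ORACLE_PRICE` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PrivateWitness:
    """Data only the borrower sees (hypothetical fields)."""
    collateral_units: int
    claimed_price: int  # price per unit, supplied by the borrower


ORACLE_PRICE = 10  # the price the protocol intended to use (assumed)


def collateral_ok_buggy(w: PrivateWitness, loan: int) -> bool:
    # Bug: trusts the borrower's claimed price instead of the oracle price.
    return w.collateral_units * w.claimed_price >= loan


def collateral_ok_intended(w: PrivateWitness, loan: int) -> bool:
    # What the developers meant to enforce.
    return w.collateral_units * ORACLE_PRICE >= loan


def prove_and_verify(predicate, w: PrivateWitness, loan: int) -> bool:
    """Stand-in for proof generation plus verification.

    The outside world learns only this boolean, never the witness.
    """
    return predicate(w, loan)


honest = PrivateWitness(collateral_units=20, claimed_price=10)
attacker = PrivateWitness(collateral_units=1, claimed_price=1_000)
loan = 150

honest_result = prove_and_verify(collateral_ok_buggy, honest, loan)
attacker_result = prove_and_verify(collateral_ok_buggy, attacker, loan)
intended_result = prove_and_verify(collateral_ok_intended, attacker, loan)
```

Against the deployed (buggy) predicate, the honest borrower and the attacker both produce an accepting result, and an observer who sees only the boolean cannot tell them apart. The attacker fails only against the predicate the developers intended, which is exactly the one that was never deployed.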

Compact's lowering of the barrier for developers is genuinely useful. But a lower barrier means more developers, with widely varying levels of expertise, writing contracts that users will rely on for privacy guarantees. The combination of accessible tools and opaque execution is not obviously safe.

Midnight positions rational privacy as the solution. But rational privacy requires rational implementation. And rational implementation requires accountability mechanisms that do not contradict the privacy model itself.

The question I keep coming back to is simple. When a Midnight-based application fails in a way that harms users, what does the investigation look like? And if the answer relies heavily on developer cooperation rather than public auditability, has the network quietly reintroduced the trust assumptions it was supposed to eliminate? @MidnightNetwork $NIGHT #night