I remember standing in front of an elevator in an old building in Prague. The buttons were brass, and the display was a mechanical dial that ticked as the car moved. Next to the floor buttons was a small, red circular knob labeled "Emergency Stop." I found myself staring at it. I didn't need to stop the elevator, but its presence settled my heart rate. I knew that if the cables snapped or the lights flickered, I could reach out and exert my will upon the machine.

Years later, I learned that in many modern buildings, those buttons are disconnected. They are "placebo buttons," wired to nothing but our need for agency. The elevator is controlled by an algorithm optimized for traffic flow, and the machine will not let a human interrupt its logic for anything less than a fire sensor trigger. Yet, we feel safer in the car with the fake button than in the one with a smooth, untouchable glass wall. We crave the illusion of a throat to choke.

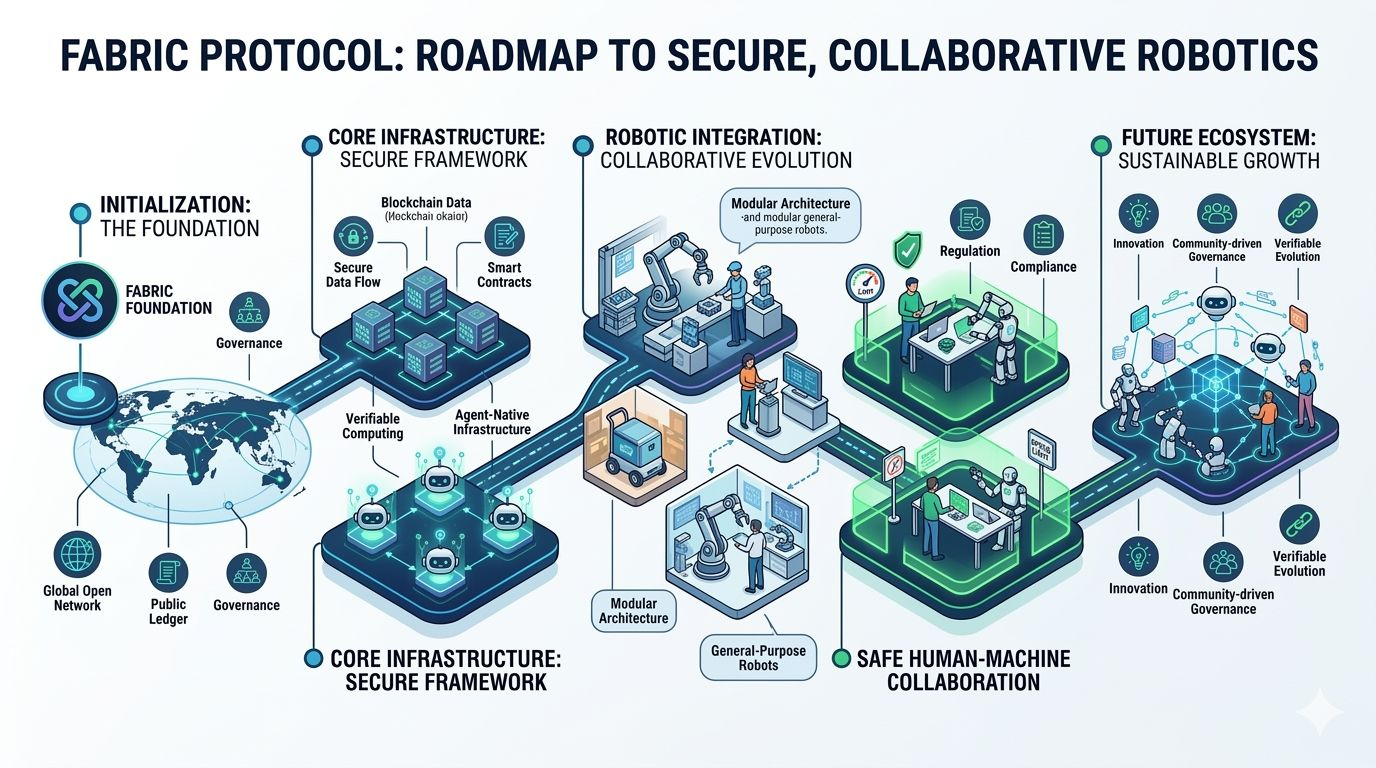

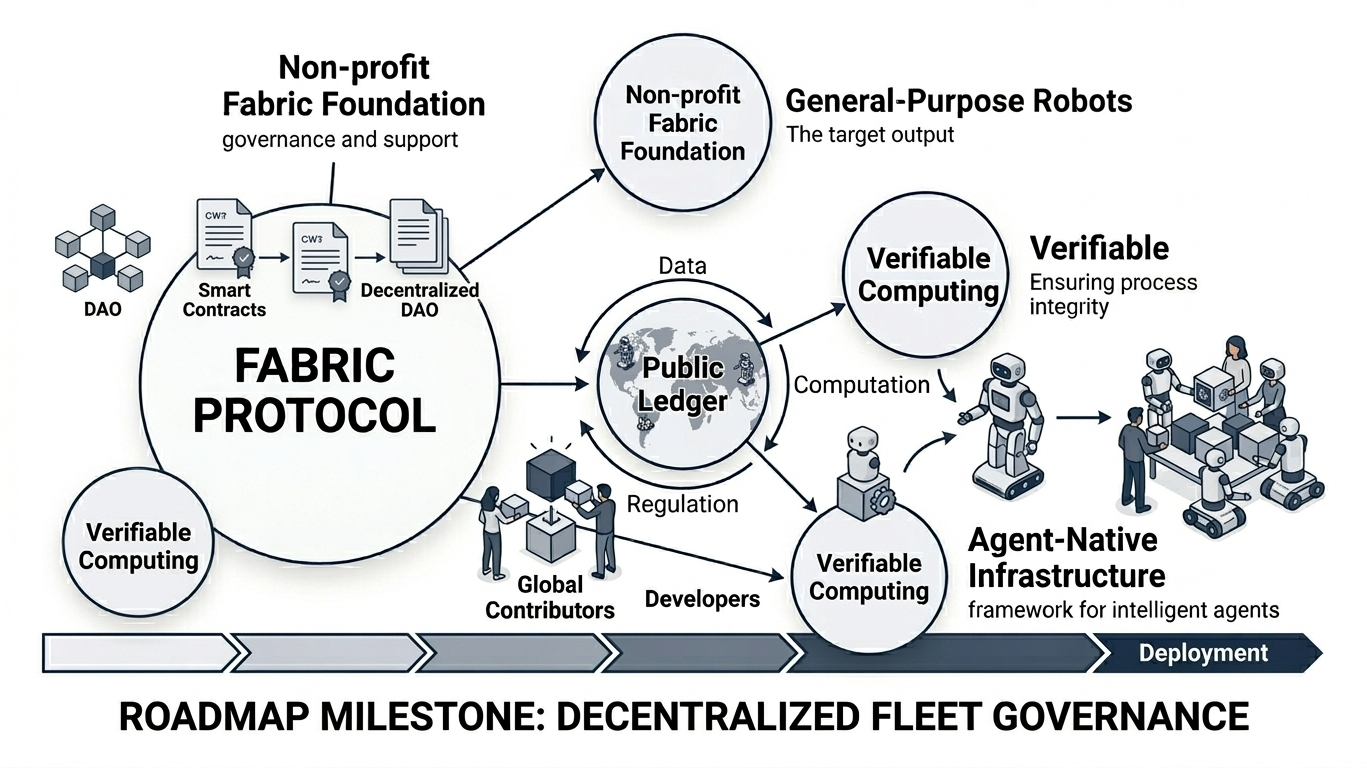

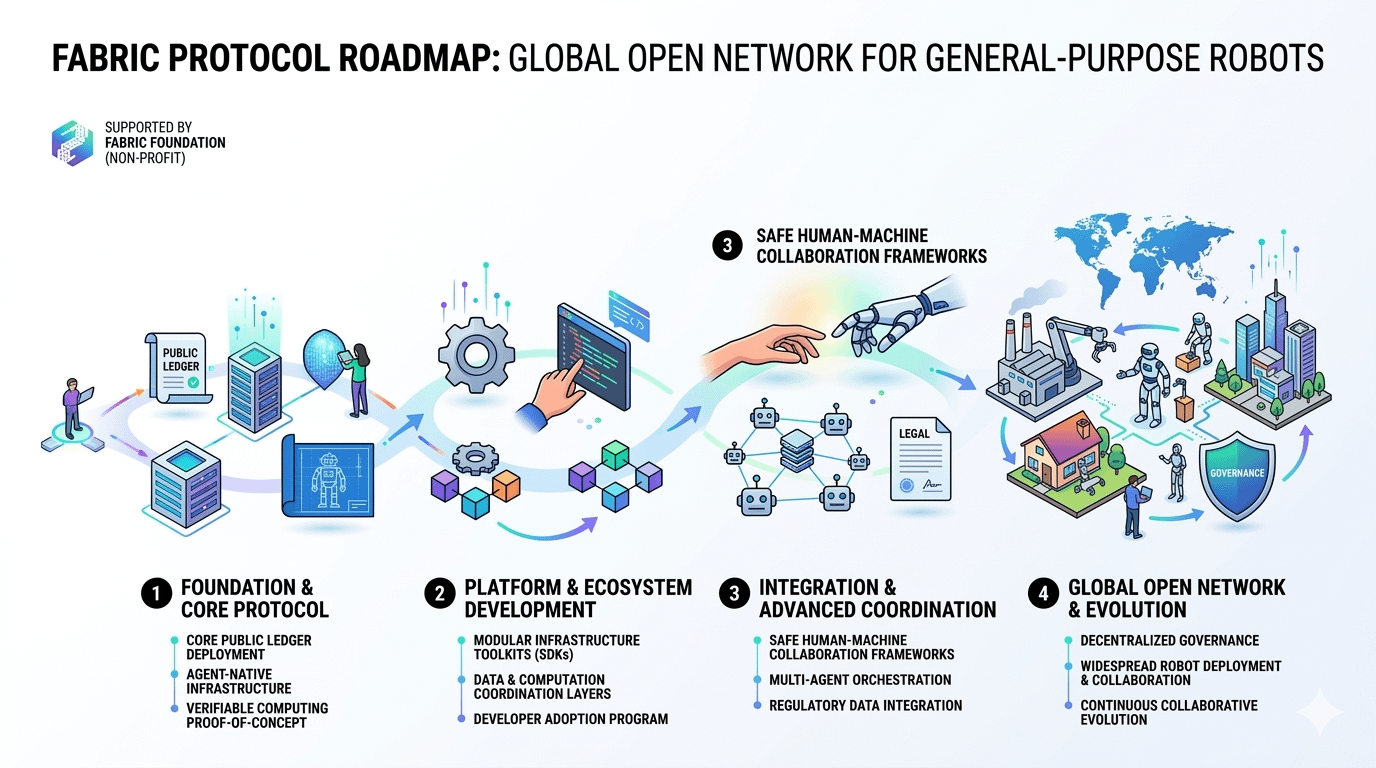

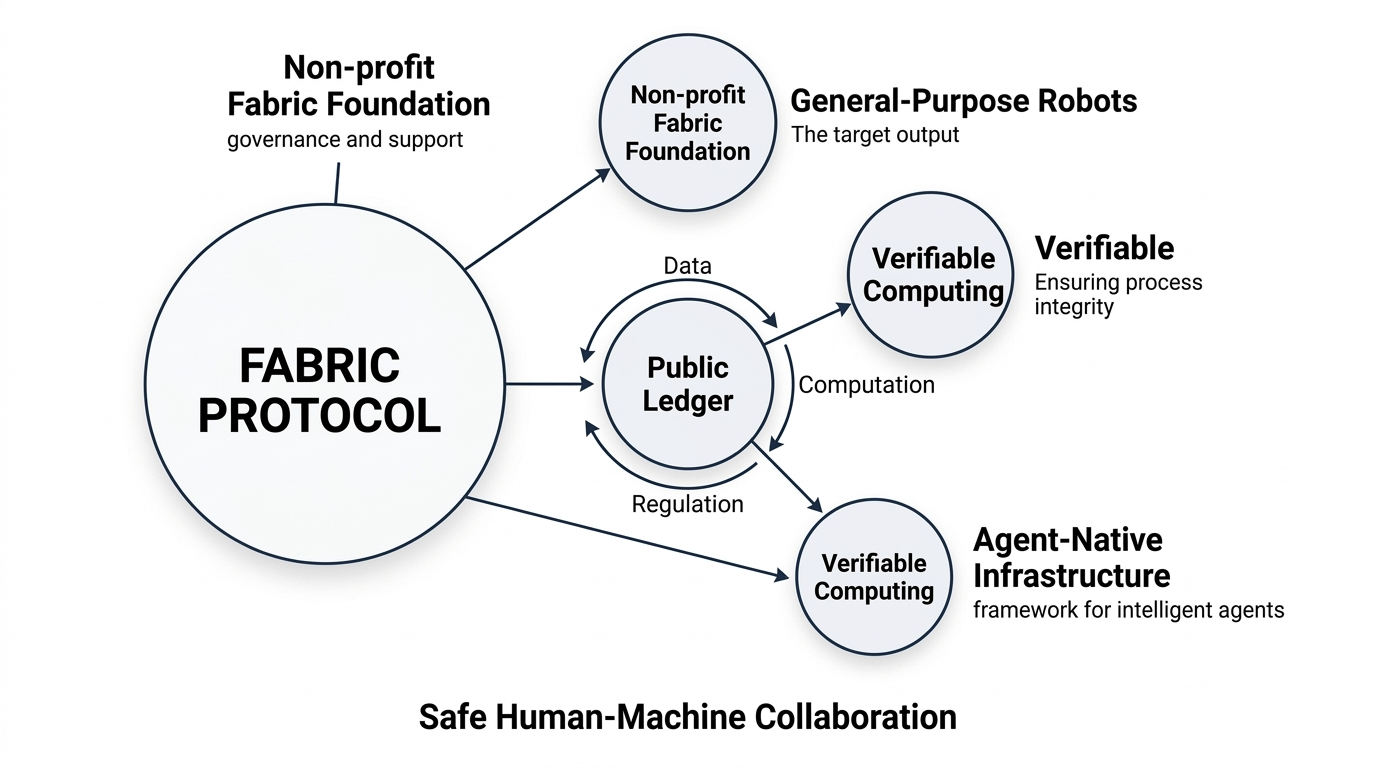

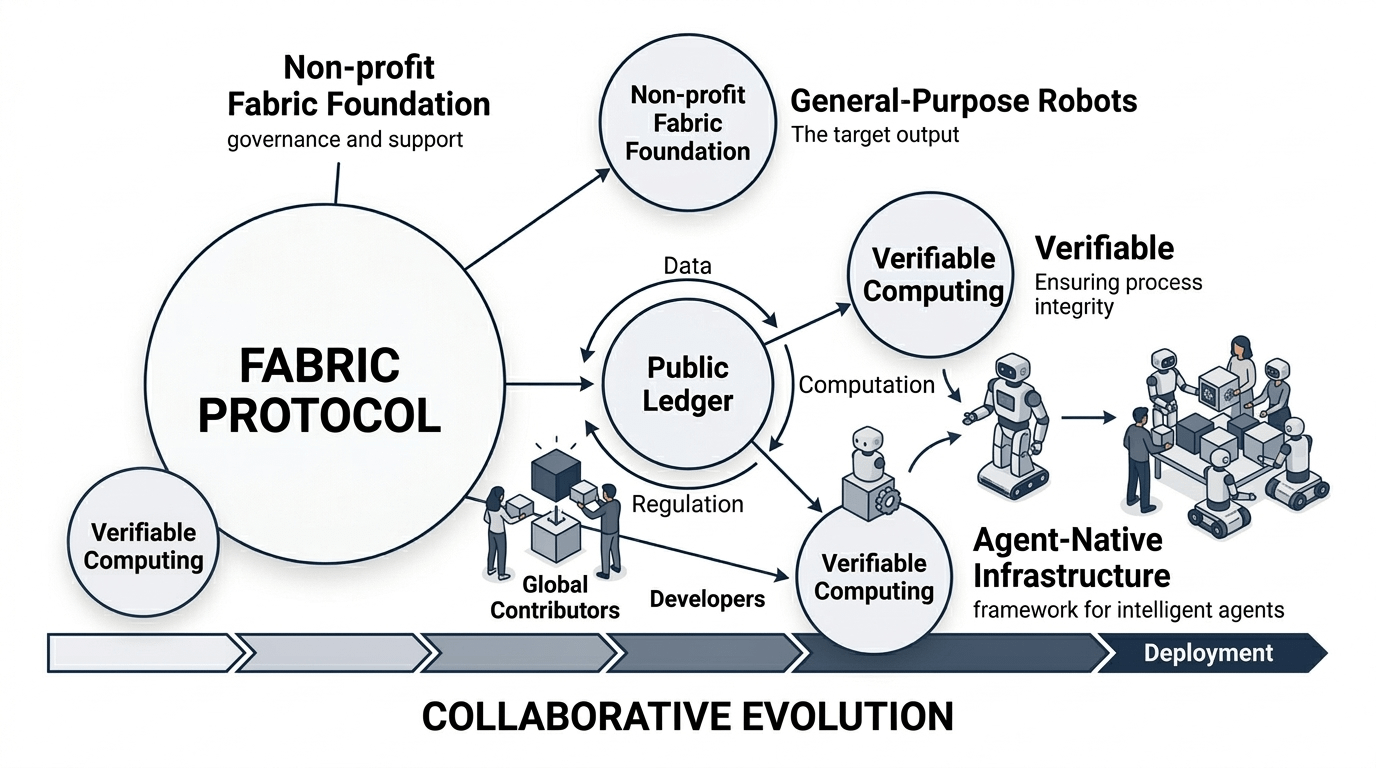

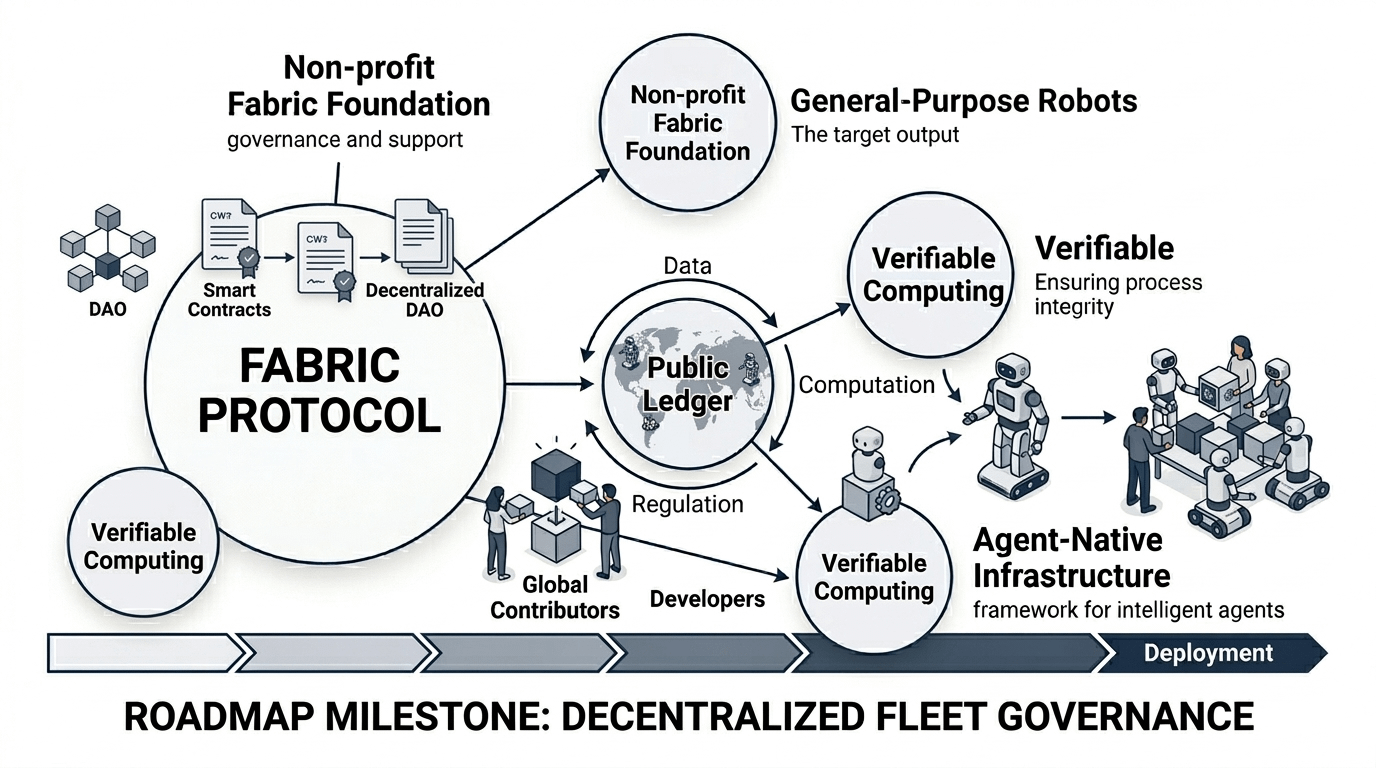

This is the psychological precipice we stand on as we transition from the era of institutions to the era of autonomous protocols like Fabric. Fabric, a system designed to coordinate the physical movements of general-purpose robots through a decentralized public ledger, is often discussed as a triumph of engineering. But beneath the talk of verifiable computing lies a profound and unsettling shift in the human experience of safety. We are moving from a world where we trust people to a world where we are asked to trust math, and we are finding that the human brain is poorly evolved for the latter.

Decentralization is not a technical upgrade; it is a psychological experiment in the removal of visible authority.

For a century, we have outsourced our sense of security to the "Visible Man." When a bank loses our money, we envision a CEO in a suit. When a plane malfunctions, we look for the black box to find the pilot's last words. We find comfort in the concentration of responsibility because it provides a target for our grievances. Fabric removes this target. By distributing the governance and evolution of robotic agents across a global, ownerless network, it creates a "God’s-eye view" of infrastructure where no single entity holds the kill switch.

This leads us to the first pressure point: the friction between emotional trust and mathematical trust.

To a cryptographer, transparency is the ultimate safeguard. If the code is open and the ledger is public, the system is "trustless." But to the average human, transparency often feels like exposure. Imagine a robot walking through your home, its every joint movement and sensory input mediated by a decentralized protocol. On paper, you are safer because no single corporation can "brick" your robot or vacuum up your data for a private server. Everything is visible.

However, visibility is not the same as understanding. When we see the raw clockwork of a system—the endless stream of hashes and proofs—we do not feel empowered; we feel small. We confuse transparency with safety, yet the more transparent a system becomes, the more we realize how little we actually control. In a centralized system, I trust the "Expert" to watch the dial. In a decentralized system like Fabric, I am told I can watch the dial myself, but I do not know how to read it. The result is a cold, ambient anxiety. We are surrounded by machines that are mathematically secure but emotionally orphaned. We miss the "fake button" because the protocol is too honest to give us one.

This honesty creates a vacuum where blame used to live. This is our second pressure point: blame concentration versus blame diffusion.

In the old world, centralization acted as a lightning rod. If a centralized robotic fleet malfunctions, there is a headquarters to picket. There is a support desk to call. There is a human on the other end of the line who can be yelled at, pleaded with, or sued. This is "Blame Concentration." It is a vital social lubricant. It allows us to process failure by assigning it to a specific soul.

In an agent-native infrastructure like Fabric, blame is diffused across a thousand nodes and a million lines of immutable code. If the system fails, who is the villain? The non-profit foundation that doesn't "run" the code? The anonymous validators? The math itself? When there is no CEO to blame, our anger has nowhere to land. It reflects back onto us. We find that we would rather be oppressed by a visible tyrant than be inconvenienced by an invisible, perfect logic. We find centralization comforting because it gives us a clear exit strategy for our frustration.

The protocol is silent. The ledger moves. No one is home. The lights stay on. But the house feels empty. You cannot argue with a ghost. You cannot sue a sequence. It is cold. It is fair. It is terrifying.

There is a structural trade-off here that no amount of "user-friendly" interface design can solve: the inverse relationship between system resilience and human closure. To make a system truly resilient, to make it a "Fabric" that cannot be torn by a single bad actor, you must make it impossible for any single actor to "fix" it on a whim. To remove the single point of failure, you must remove the single point of contact. You cannot have a system that is both truly decentralized and capable of offering a human apology.

We are entering an era where our physical world—the robots that move our goods and perhaps eventually mind our children—will be governed by these "ownerless" systems. We will be told this is the ultimate form of freedom, a liberation from the whims of corporate overlords. And yet, as we watch these machines operate with a precision that no human could ever coordinate, we will find ourselves looking for the brass knob. We will look for the red button that doesn't go anywhere, just so we can feel like we are still the ones in the car.

The ledger provides a perfect record of what happened, but it offers no explanation for why it felt so wrong. We are building a world where we can verify everything and feel certain of nothing.
