I keep returning to the same question whenever a new coordination protocol enters the market: what breaks first when the environment stops being friendly? Not when liquidity is abundant or narratives are expanding, but when capital begins to retreat and everyone involved suddenly cares more about survival than about ideals. Protocols built around privacy and zero-knowledge coordination, like Midnight Network, often present themselves as infrastructure for a world where sensitive information, capital, and identity can interact without intermediaries. The architecture is elegant. The mathematics is convincing. But coordination systems are rarely stress-tested by mathematics; they are stress-tested by behavior.

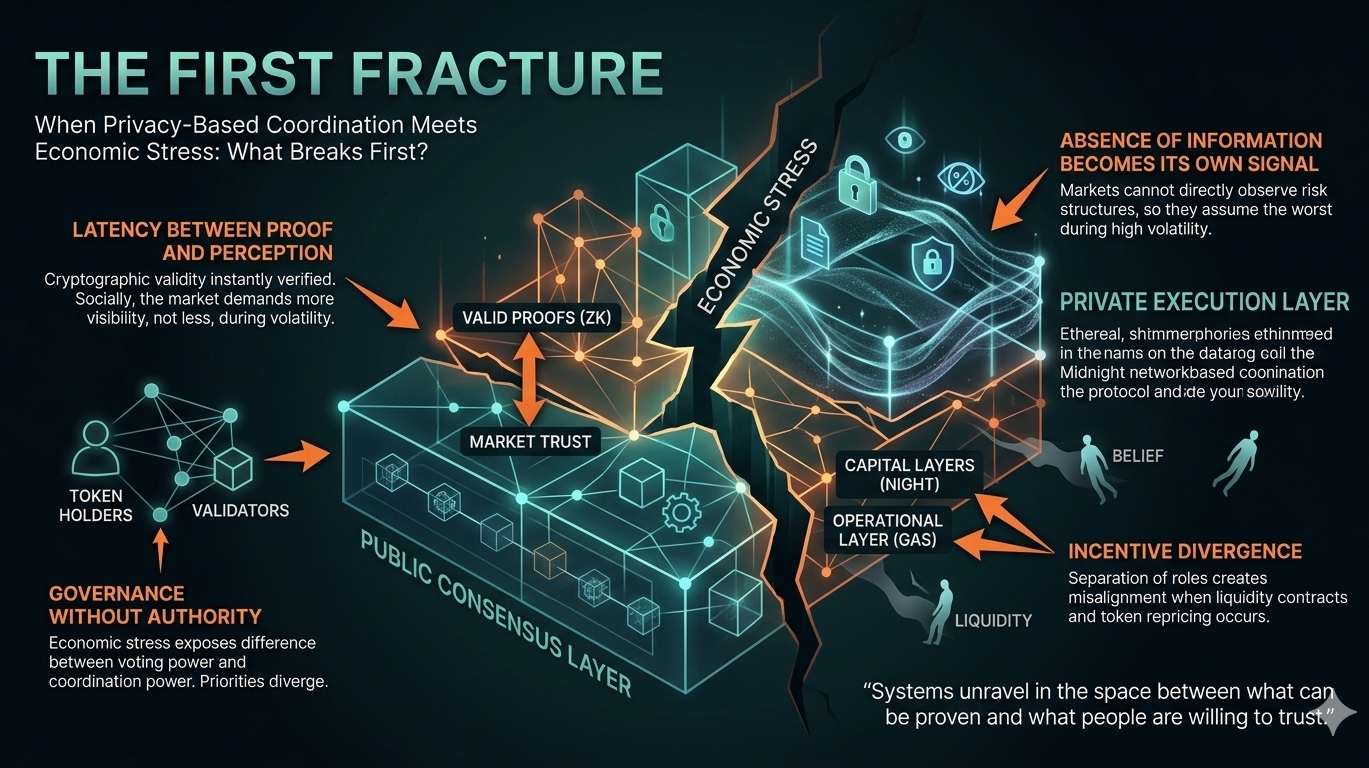

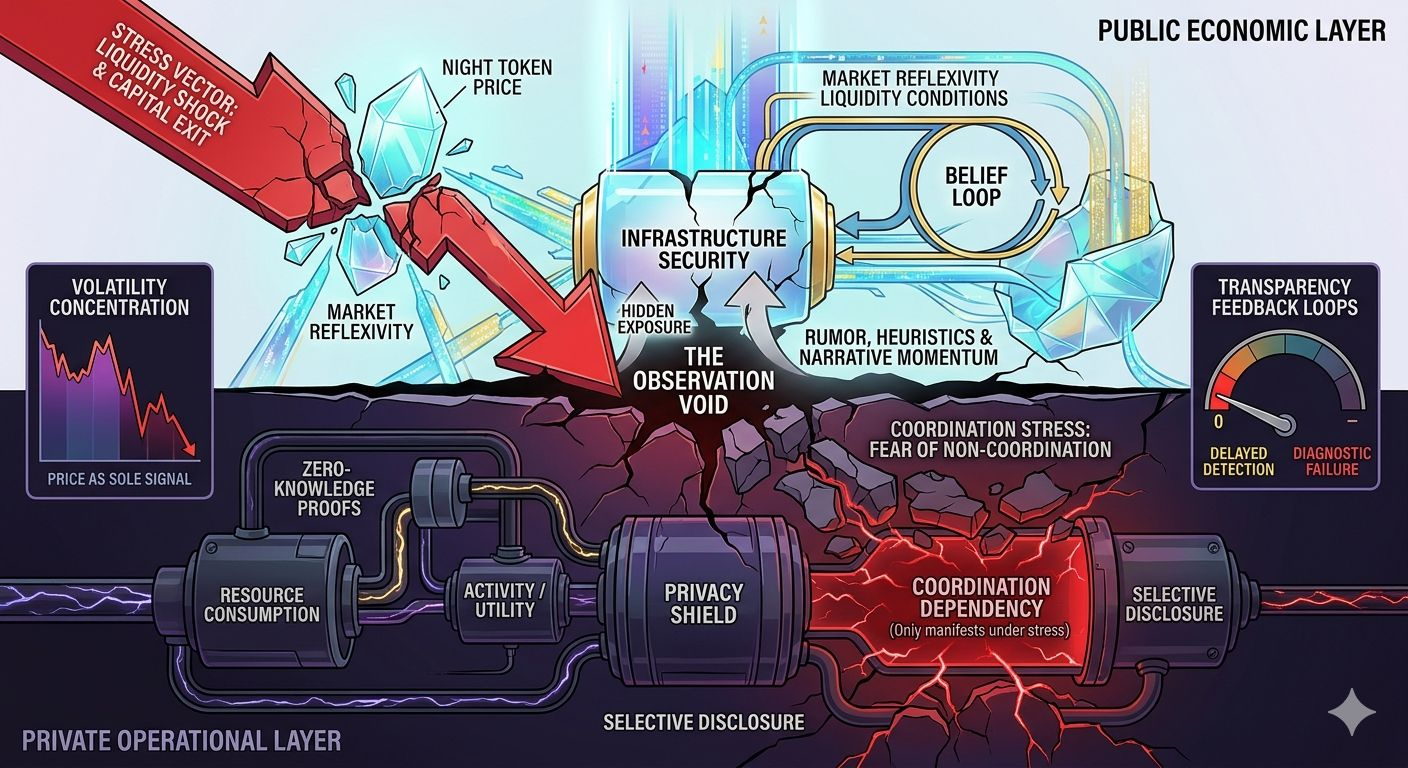

The first structural pressure point emerges from the way trust is redistributed when visibility disappears. Midnight relies on zero-knowledge proofs to allow participants to prove that something is valid without revealing the underlying information. The network’s architecture separates a transparent public ledger from a private execution environment, where sensitive state transitions occur locally and only cryptographic proofs reach the chain. From a technical perspective, this is an impressive compression of trust: the network no longer needs to know the data, only the proof that the data satisfies the rules. But in markets, trust rarely disappears—it migrates. When information becomes cryptographically hidden, participants begin substituting other signals: reputation, liquidity depth, validator behavior, or simply social consensus. Under economic stress, those substitute signals start carrying more weight than the proofs themselves.

I’ve watched enough market cycles to know that coordination systems rarely fail because their rules are unclear. They fail because incentives start diverging faster than the system can reconcile them. Midnight attempts to mitigate one common problem by separating capital ownership from operational resources. Its native token, NIGHT, functions as governance and staking infrastructure while generating a consumable resource used for transaction execution. On paper, this separation reduces speculative pressure on the operational layer of the network. In practice, it also creates a subtle behavioral dynamic: the actors who control the capital layer are not always the same actors who depend on the operational layer. When stress hits (say, liquidity contraction or a sudden repricing of the token), the alignment between those groups becomes uncertain.
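To make that dynamic concrete, here is a toy model in Python. It is a sketch under stated assumptions, not Midnight's implementation: the linear generation rate, the per-token cap, the rule that withdrawn capital forfeits any resource above the new cap, and every name below (`Account`, `accrue`, `withdraw_capital`, `pay_fee`) are hypothetical. The capital is treated as belonging to a holder who backs an application; the application only spends the resource.

```python
from dataclasses import dataclass

# Hypothetical parameters for illustration only; they are NOT Midnight's real values.
RATE_PER_TOKEN_PER_BLOCK = 0.01  # resource generated per capital token per block
CAP_PER_TOKEN = 5.0              # maximum resource a single token can back


@dataclass
class Account:
    """Toy account: a capital-layer balance backs a consumable operational resource."""
    capital: float          # illustrative stand-in for NIGHT held by a backer
    resource: float = 0.0   # illustrative stand-in for the consumable execution resource

    def accrue(self, blocks: int) -> None:
        # Resource grows linearly with held capital, up to a cap proportional to capital.
        cap = self.capital * CAP_PER_TOKEN
        generated = self.capital * RATE_PER_TOKEN_PER_BLOCK * blocks
        self.resource = min(cap, self.resource + generated)

    def withdraw_capital(self, amount: float) -> None:
        # Hypothetical rule: pulling capital shrinks the cap, and resource above it is lost.
        self.capital = max(0.0, self.capital - amount)
        self.resource = min(self.resource, self.capital * CAP_PER_TOKEN)

    def pay_fee(self, fee: float) -> bool:
        # Transactions consume resource, not capital; the call fails if resource is short.
        if fee > self.resource:
            return False
        self.resource -= fee
        return True


# An application whose transaction capacity is backed by a holder's capital.
app = Account(capital=1_000.0)
app.accrue(blocks=100)         # resource = min(5000, 1000 * 0.01 * 100) = 1000
print(app.pay_fee(400.0))      # True; resource drops to 600
app.withdraw_capital(900.0)    # the holder exits: capital 100, cap 500, resource 500
print(app.resource)            # 500.0
print(app.pay_fee(600.0))      # False: capacity shrank even though demand did not
```

The last line carries the point: the application's demand never changed, but its capacity did, because the decision that mattered was made on the capital layer by someone else.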

The uncomfortable part of privacy-centric coordination is that it changes how markets interpret solvency. Traditional blockchains rely heavily on radical transparency. Positions, balances, and flows are visible enough that markets can react before failures become catastrophic. Midnight deliberately inverts that model. Its design allows participants to prove properties of data—compliance, solvency thresholds, identity attributes—without revealing the raw data itself. The intention is rational: businesses and institutions cannot operate if every balance sheet detail is permanently public. Yet the behavioral consequence is less obvious. When markets cannot see the structure of risk directly, they tend to assume the worst during volatility. The absence of information becomes its own signal.

This leads to the first real fracture point: latency between proof and perception. Cryptographically, a system like Midnight can confirm correctness as fast as it can verify a proof. Socially, the market may not accept that proof as sufficient. I’ve seen this pattern across multiple narratives. When participants lose confidence in an underlying assumption (liquidity, collateral quality, validator neutrality), they begin demanding more visibility, not less. A protocol built to minimize information leakage suddenly faces a paradox: the stronger its privacy guarantees, the harder it becomes for the market to rebuild confidence during uncertainty.

The second pressure point sits deeper in the coordination layer: governance without authority. Midnight frames its token as infrastructure for network security and decision-making rather than as a payment asset. That distinction matters. In theory, governance tokens coordinate incentives across validators, developers, and users. In practice, governance becomes meaningful only when participants believe the system can enforce outcomes. Economic stress exposes the difference between voting power and coordination power. If liquidity providers, application developers, and token holders respond to stress with different priorities, the network’s governance process becomes a negotiation rather than a command structure.

I tend to think about governance systems the same way I think about liquidity pools. They look stable until the moment participants try to exit simultaneously. Privacy layers complicate this dynamic because they reduce the informational feedback loops that normally guide coordination. Participants cannot easily observe how others are positioning themselves. They only see the resulting proofs and state transitions. That means collective behavior becomes harder to anticipate. Coordination slows down precisely when it needs to accelerate.

There is also a structural trade-off embedded in the design that rarely gets discussed openly. Midnight tries to balance privacy with auditability by keeping settlement and consensus transparent while shielding sensitive data within proofs. This hybrid approach attempts to preserve regulatory compliance while protecting individual data. But every hybrid architecture introduces friction between its two halves. Transparency enables market discipline; privacy protects participant autonomy. Under normal conditions these goals coexist. Under stress they begin to conflict.

I’ve seen capital rotate through enough narratives—DeFi summer, algorithmic stablecoins, modular infrastructure—to recognize that belief is itself a form of liquidity. Systems that rely on belief rarely notice it until it starts evaporating. In a privacy-preserving coordination protocol, belief operates on two layers simultaneously. Participants must believe that the cryptography is sound, and they must also believe that everyone else will continue respecting the incentives embedded in the system. The first problem is mathematical. The second is social.

The uncomfortable question is simple: what happens when participants begin to suspect that proofs are correct but incentives are failing?

That distinction matters more than most people realize. A system like Midnight can guarantee that computations are valid without exposing their inputs. It cannot guarantee that participants will continue behaving in ways that preserve collective coordination. Incentives drift quietly. Liquidity migrates silently. By the time the market notices the shift, the proofs are still valid—but the assumptions behind them are no longer shared.

And coordination systems, once belief fractures, rarely fail all at once. They unravel gradually, in the space between what can be proven and what people are willing to trust.

#night @MidnightNetwork $NIGHT