and it's not the one most people expect. It's not "will robots take our jobs?" or "when will they be smarter than us?" Those get the headlines, but they're not the question that actually matters right now.

The question that matters is simpler, and harder. How do you trust a machine you didn't build?

Sit with that for a second. Because it changes everything about how you think about the future of robotics.

Right now, if you use a robot in a factory, a warehouse, or a research lab, you generally know where it came from. You bought it from a company. That company trained its models, wrote its software, tested its systems. Your trust is based on the company's reputation, maybe some certifications, maybe a track record. It's not that different from buying a car. You trust the brand.

But general-purpose robots are going to be different. That's where things get interesting. A general-purpose robot isn't locked to one task or one environment. It's meant to adapt. Learn. Operate in places its builders never anticipated. And to get there, it's going to need contributions from many sources: training data from different geographies, models improved by different teams, software updates from different developers.

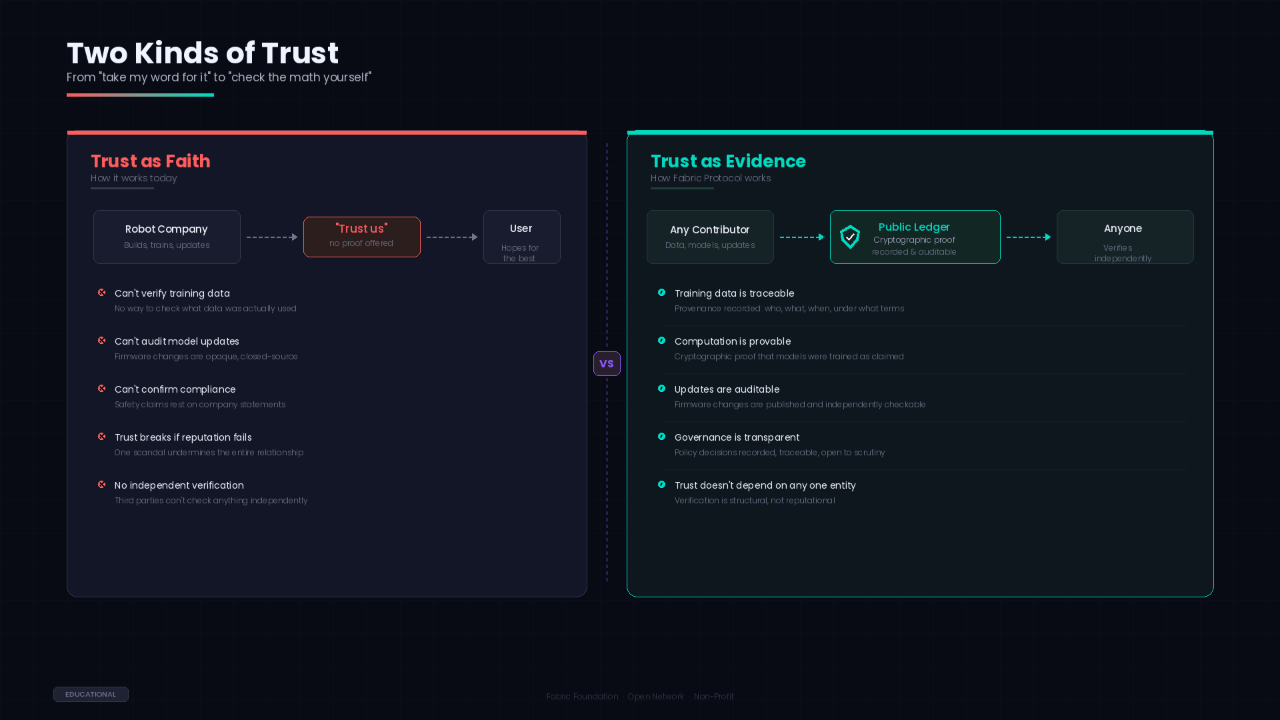

So the question stops being "do I trust this company?" and becomes "do I trust this system?" A system with many contributors, many moving parts, many decisions layered on top of each other. And that's a fundamentally different kind of trust.

You can't solve it with a brand name. You need something structural.

@Fabric Foundation Protocol is one attempt at building that structure. It's a global open network, backed by the non-profit Fabric Foundation, designed to coordinate the development of general-purpose robots through shared, verifiable infrastructure.

The word "verifiable" is doing a lot of work in that sentence. More than it might seem.

In most systems, when someone says "trust me," what they really mean is "you can't check, so take my word for it." Verifiable computing flips that. It means producing mathematical proof that a computation happened exactly as described. Not approximately. Not "our internal tests confirmed it." Actually, checkably, provably correct.

So when a model gets trained on Fabric's network, the claim isn't "we trained it properly." The claim is "here's the cryptographic proof that the training used this data, followed this process, and produced this result. Check it yourself."

That's a different conversation. And it becomes obvious after a while why it matters so much for robots specifically. A bug in a web app is annoying. A bug in a robot operating in a hospital is dangerous. The stakes demand a level of verification that reputation alone can't provide.

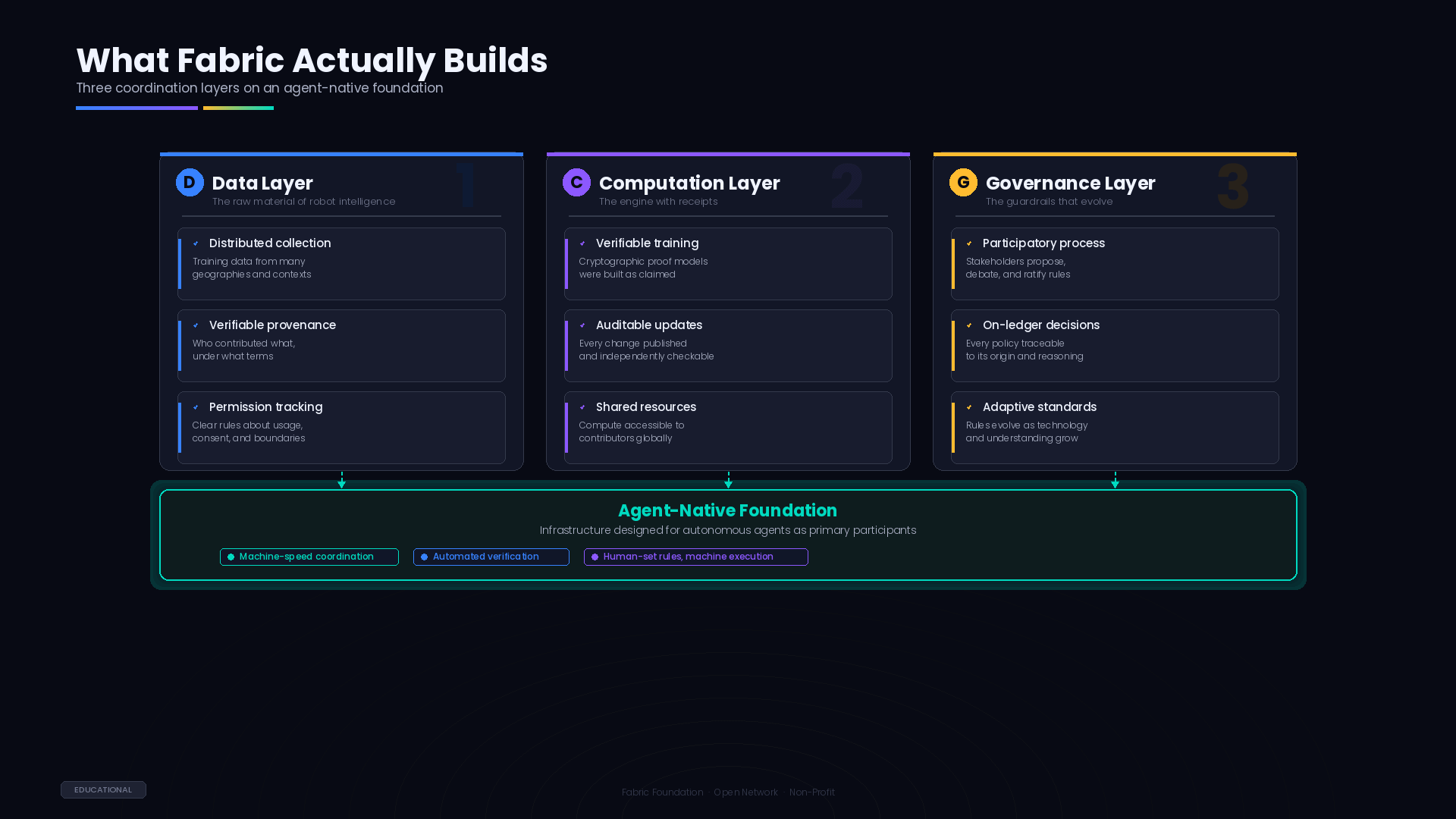

Let me back up and explain how the pieces fit together, because it's more modular than you might expect.

Fabric coordinates three things through a public ledger: data, computation, and governance. The ledger is the shared record, the place where contributions, decisions, and proofs all get recorded in a way that anyone can audit.

Data is the first layer. General-purpose robots need to learn from the real world, and the real world is impossibly varied. The sounds, textures, layouts, social norms, and physical objects all differ from one city block to the next, let alone from one continent to another. Collecting all that data in one place, under one organization, isn't realistic. It has to be distributed. Many contributors, many contexts, many perspectives.

But distributed data only works if you can track it. Fabric's ledger records who contributed what, under what terms, with what permissions. It's not a perfect solution to the trust problem (nothing is), but it gives the process a spine. A record you can actually look at.
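To make "a record you can actually look at" a bit more concrete, here's a minimal sketch of an append-only, hash-chained record of contributions. Everything in it is my own assumption for illustration: the `Contribution` fields, the `Ledger` class, and the chaining scheme are hypothetical and not taken from Fabric's actual design.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Contribution:
    # All field names are hypothetical, chosen to mirror
    # "who contributed what, under what terms, with what permissions".
    contributor: str
    data_digest: str      # SHA-256 hex digest of the contributed dataset
    license_terms: str
    permissions: tuple


class Ledger:
    """Toy append-only log where each entry commits to the one before it."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, record: Contribution) -> str:
        prev = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        payload = json.dumps(asdict(record), sort_keys=True)
        entry_hash = hashlib.sha256((prev + payload).encode()).hexdigest()
        self.entries.append(
            {"prev": prev, "payload": payload, "entry_hash": entry_hash}
        )
        return entry_hash

    def audit(self) -> bool:
        """Recompute every hash; any edited entry breaks the chain."""
        prev = self.GENESIS
        for entry in self.entries:
            expected = hashlib.sha256((prev + entry["payload"]).encode()).hexdigest()
            if entry["prev"] != prev or entry["entry_hash"] != expected:
                return False
            prev = entry["entry_hash"]
        return True
```

The point of the chaining is that nobody can quietly rewrite an old entry: changing one record changes its hash, which no longer matches what the next entry committed to, so `audit()` fails for anyone who runs it.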

Computation is the second layer. This is where the verifiable computing comes in. When models are trained or updated using the network's resources, the process generates proofs. Those proofs live on the ledger. Anyone who wants to verify that a particular model was trained as claimed can do so. Not by asking the person who trained it. By checking the math.
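Here's a rough sketch of what "checking the math" can look like in the simplest possible case. A loud caveat: real verifiable computing typically relies on succinct cryptographic proofs so the verifier doesn't have to redo the work. This toy swaps that for plain deterministic re-execution plus hash comparison, and all the names (`train`, `make_claim`, `verify_claim`) are hypothetical, not Fabric's API.

```python
import hashlib
import json


def digest(obj) -> str:
    """SHA-256 over a canonical JSON encoding of obj."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def train(data, steps, lr):
    """Toy deterministic 'training': gradient descent on mean (w - x)^2."""
    w = 0.0
    for _ in range(steps):
        grad = sum(2 * (w - x) for x in data) / len(data)
        w -= lr * grad
    return w


def make_claim(data, steps, lr):
    """What a trainer would publish: commitments to data, config, and result."""
    return {
        "data_digest": digest(data),
        "config": {"steps": steps, "lr": lr},
        "model_digest": digest(train(data, steps, lr)),
    }


def verify_claim(claim, data) -> bool:
    """Stand-in verifier: re-run the training and compare digests."""
    if digest(data) != claim["data_digest"]:
        return False  # not the data the claim committed to
    rerun = train(data, **claim["config"])
    return digest(rerun) == claim["model_digest"]
```

Even in this crude form, the shape of the conversation changes: the verifier never asks the trainer anything. It only needs the published claim and the data.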

I keep coming back to how different this is from how things work today. Today, you update a robot's software because the manufacturer pushed an update. You trust them. With Fabric, you could verify the update independently. That's not a small difference. It's the difference between trust as faith and trust as evidence.
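As a small illustration of "trust as evidence", here's a sketch of checking a downloaded update against a digest published on a shared record. It assumes, hypothetically, that the ledger exposes a SHA-256 hex digest for each update; the function name is mine, not part of any real Fabric interface.

```python
import hashlib
import hmac


def update_matches_ledger(update_bytes: bytes, ledger_digest_hex: str) -> bool:
    """Return True only if the update hashes to the digest recorded on the ledger."""
    actual = hashlib.sha256(update_bytes).hexdigest()
    # compare_digest does a constant-time comparison of the two hex strings.
    return hmac.compare_digest(actual, ledger_digest_hex.lower())
```

A robot (or its operator) would run this before installing anything: if the bytes that arrived aren't the bytes that were recorded, the update gets rejected no matter who sent it.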

Governance is the third layer, and it's the one that's easiest to underestimate. Rules about how robots should behave, what data practices are acceptable, what safety standards apply: these decisions are typically made behind closed doors by companies and regulators, often after something has already gone wrong. Fabric tries to make governance participatory and transparent. Proposals are made, debated, voted on, and recorded. The history is public. The reasoning is traceable.
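The "made, voted on, and recorded" loop can be sketched in a few lines. This is only an illustration under my own assumptions: the `Proposal` class, the simple-majority rule, and the quorum parameter are all hypothetical, and the source doesn't say how Fabric actually tallies votes.

```python
from dataclasses import dataclass, field


@dataclass
class Proposal:
    title: str
    votes: dict = field(default_factory=dict)    # voter id -> True (for) / False (against)
    history: list = field(default_factory=list)  # every vote event, in order, kept public
    status: str = "open"

    def vote(self, voter: str, approve: bool) -> None:
        if self.status != "open":
            raise ValueError("voting is closed")
        self.votes[voter] = approve              # a later vote replaces an earlier one
        self.history.append((voter, approve))    # but the earlier one stays in the history

    def close(self, quorum: int) -> str:
        """Assumed rule: needs `quorum` voters, then a simple majority decides."""
        yes = sum(self.votes.values())
        no = len(self.votes) - yes
        if len(self.votes) < quorum:
            self.status = "failed_quorum"
        else:
            self.status = "accepted" if yes > no else "rejected"
        return self.status
```

Notice that `history` keeps changed votes too. That's the traceability part: you can see not just the outcome, but how it was reached.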

Whether transparent governance produces good governance is an open question. Transparency is necessary but not sufficient. You still need wisdom, compromise, good faith. A ledger can't supply those. But it can create the conditions where their absence is visible, which is its own kind of accountability.

There's an aspect of Fabric's design that I find genuinely interesting, and it's the one most people skip over. The system is agent-native. That means the infrastructure is designed with the assumption that the primary participants aren't humans logging in and clicking buttons. They're autonomous software agents: programs that request resources, submit data, negotiate with other agents, and execute tasks on their own.

This matters more than it sounds like it should. When you design a system for human users, you build in things like interfaces, prompts, confirmation dialogs. When you design for agents, those things disappear. Instead, you need machine-readable protocols, automated verification, and conflict resolution systems that operate at speeds no human could match.
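Here's a tiny sketch of what replacing a confirmation dialog with automated verification might look like. The message fields, the allowed actions, and the budget check are all hypothetical, invented for illustration rather than drawn from Fabric's protocol.

```python
from dataclasses import dataclass

# Hypothetical set of actions an agent is allowed to request.
ALLOWED_ACTIONS = {"request_compute", "submit_data"}


@dataclass(frozen=True)
class AgentMessage:
    sender: str
    action: str
    amount: int   # e.g. compute units requested or records submitted


def validate(msg: AgentMessage, budget: dict) -> tuple:
    """Accept or reject a message mechanically, with a machine-readable reason."""
    if msg.action not in ALLOWED_ACTIONS:
        return (False, "unknown_action")
    if msg.amount <= 0:
        return (False, "invalid_amount")
    if msg.amount > budget.get(msg.sender, 0):
        return (False, "over_budget")
    return (True, "ok")
```

There's no "Are you sure?" prompt anywhere. The rules are explicit, the rejection reasons are codes another program can act on, and the whole exchange can run without a person in the loop.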

The question changes from "how does a person use this" to "how do machines coordinate safely without a person watching every interaction." And that question, honestly, is one of the most important questions in technology right now. Not just for robotics. For everything that involves autonomous systems interacting with each other and with the physical world.

Fabric doesn't answer that question completely. I'm not sure anyone can yet. But it's building the infrastructure that makes the question tractable, which is a necessary first step.

Here's the thing about infrastructure, though. It's invisible when it works. Nobody thinks about the water pipes until they break. Nobody thinks about internet protocols until the connection drops. If Fabric succeeds, really succeeds, most people will never know it exists. They'll just notice that robots seem to work well together, that safety standards seem coherent, that the whole ecosystem feels surprisingly well-coordinated for something so complex.

The credit will go elsewhere. To the companies that build the robots. To the AI labs that train the models. To the governments that set the regulations. The infrastructure will sit underneath, unnoticed, doing the boring work of making coordination possible.

And that's fine. That's what good infrastructure does. It disappears into the background, holding everything up.

I want to be honest about the uncertainty here. Fabric Protocol is early. The problems it's tackling (global data coordination, verifiable computation at scale, participatory governance for autonomous systems) are genuinely hard. Not just technically hard. Socially hard. Politically hard. The kind of hard that takes decades, not quarters.

There will be false starts. Competing approaches. Heated debates about standards that sound trivial to outsiders but matter enormously to the people building the systems. That's how infrastructure gets built. Slowly, messily, with a lot of boring meetings and incremental progress.

But the underlying insight feels right to me. Robots that operate everywhere, for everyone, built by many hands: that project needs shared rails. It needs a coordination layer that nobody owns and everybody can verify. It needs trust that's structural, not reputational.

Whether Fabric is the project that delivers that, or just one of the early efforts that maps the territory for whatever comes next, I genuinely don't know. Nobody does. These things reveal themselves slowly, over time, through use and failure and iteration.

The thought doesn't really finish. It just opens into the next question, which is probably the right place to leave it.

#ROBO $ROBO