If an LLM (Large Language Model) is a genius trapped in a glass room, then Skills are the 'digital prosthetics' that let it break the glass and act on the real world.

1️⃣ What are Skills? From 'predicting the next word' to 'driving the next action'

At its core, a large model is essentially a probability-based text prediction engine. It can tell you 'how to fix a computer,' but it has no hands to hold a screwdriver.

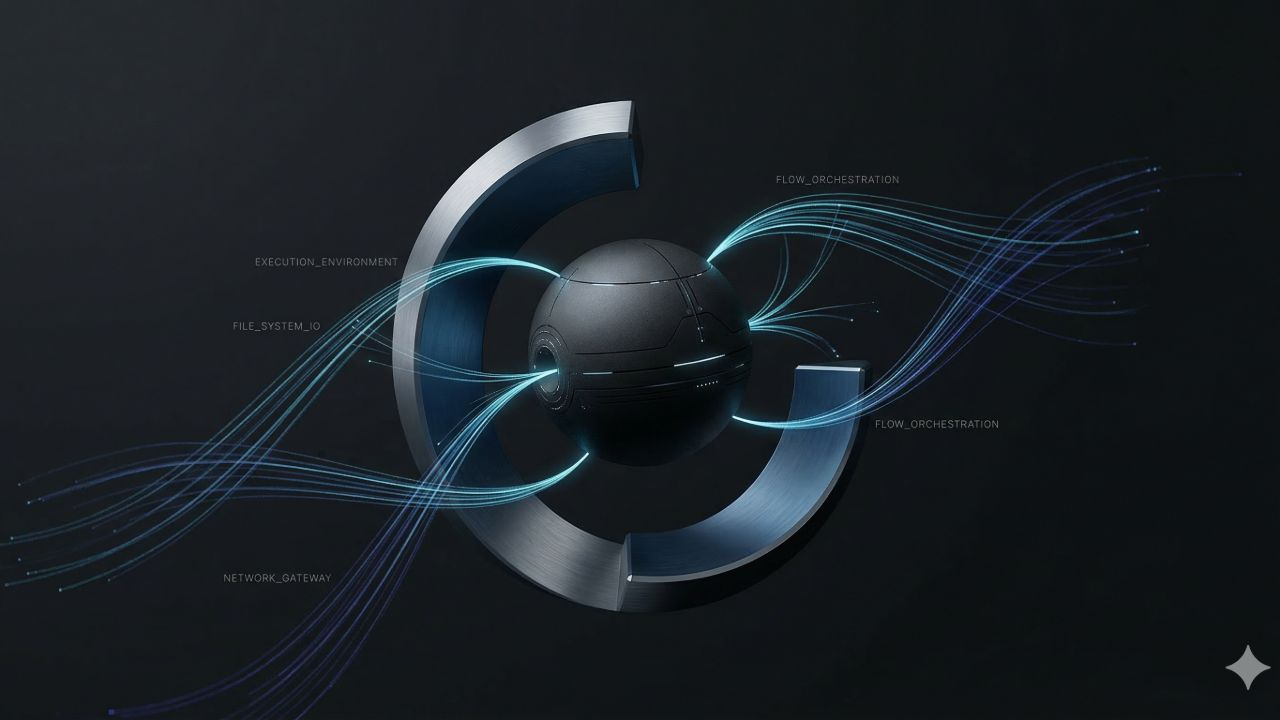

Skills (commonly called Tools or Functions in technical documentation) are structured encapsulations of external capabilities. Each Skill consists of two parts:

Semantic description (Interface Declaration): Natural language that tells the AI: 'Who I am, what problems I can solve, and what parameters I need in order to be called.'

Execution logic (Implementation): The code that actually runs, such as Python, JavaScript, or an API request.
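The two parts can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not any particular framework's API: the `Skill` class, the `get_weather` function, and its canned data are all hypothetical, and the parameter description is written in a JSON Schema style.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Skill:
    """A skill = a description the model reads + code the host executes."""
    name: str
    description: str             # semantic description, in natural language
    parameters: dict[str, Any]   # expected arguments, JSON Schema style
    run: Callable[..., Any]      # execution logic


def get_weather(city: str) -> str:
    # Hypothetical implementation: a real skill would call a weather API here.
    canned = {"Paris": "18C, cloudy"}
    return canned.get(city, "unknown")


weather_skill = Skill(
    name="get_weather",
    description="Return the current weather for a city.",
    parameters={
        "type": "object",
        "properties": {"city": {"type": "string", "description": "City name"}},
        "required": ["city"],
    },
    run=get_weather,
)

# Only name, description, and parameters are shown to the model;
# `run` stays on the host side.
print(weather_skill.run(city="Paris"))
```

Keeping the description and the implementation in one object makes it harder for the two to drift apart, which matters because the model only ever sees the description.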

When the AI recognizes that it cannot solve a problem with 'brainpower' alone (e.g., querying real-time cryptocurrency prices in 2026), it outputs a structured call request instead of a prose answer. The host application parses that request, runs the Skill, and feeds the result back to the model.
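The call-and-execute step might look roughly like this on the host side. The JSON shape of the model's output (`name` plus `arguments`), the `SKILLS` registry, and the `get_price` stub are assumptions made for illustration; each vendor defines its own exact format.

```python
import json


def get_price(symbol: str) -> str:
    # Hypothetical stand-in for a real-time price API.
    return f"{symbol}: price lookup not implemented in this sketch"


# Hypothetical registry mapping skill names to implementations.
SKILLS = {"get_price": get_price}


def dispatch(model_output: str) -> str:
    """Parse a structured call emitted by the model and run the matching skill."""
    call = json.loads(model_output)
    skill = SKILLS.get(call["name"])
    if skill is None:
        return f"error: unknown skill {call['name']!r}"
    return skill(**call["arguments"])


# Pretend the model emitted this instead of a prose answer.
model_output = '{"name": "get_price", "arguments": {"symbol": "BTC"}}'
result = dispatch(model_output)
print(result)  # this text would be handed back to the model as the tool result
```

Note that the model never executes anything itself: it only produces text, and the host decides whether and how to run the requested skill.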

2️⃣ Evolution history: From 'black box plugins' to 'industrial protocols'

The development of AI skills has gone through three key stages, which explains why today's OpenClaw can 'do it all' with such a variety of skills:

Phase 1: The era of closed plugins (early 2023)

Represented by ChatGPT Plugins. Each platform had its own standards, and the code was highly coupled. Developers had to write different code for different platforms, resulting in extreme fragmentation of the ecosystem.

Phase 2: Standardization of function calls (Function Calling, 2023-2024)

OpenAI introduced function calling and the tools parameter, normalizing the description of skills into JSON Schema. This has become the de facto 'common language' of the industry. Instead of guessing at free-form text, the model outputs calls in a strictly defined format.

Phase 3: Unified protocols (MCP era, 2025-2026)

The emergence of the Model Context Protocol (MCP) is a turning point. It separates 'skills' from 'Agent software.'

Previously: You wrote a plugin for OpenClaw, and it could only be used by OpenClaw.

Now: You run an MCP Server (e.g., a Google Search skill server), and OpenClaw, Claude Desktop, Cursor, and even your IDE can connect to it via the same protocol. This is the underlying reason skills can be 'universal.'
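To make the decoupling concrete, here is a heavily simplified sketch of the messages a client exchanges with such a server. MCP is built on JSON-RPC 2.0 and exposes `tools/list` and `tools/call` methods; everything else here (the `web_search` tool, the `handle` function, the stub result) is hypothetical, and the initialization handshake, transport, and most error handling of a real server are omitted.

```python
import json

# Hypothetical tool table; a real server would wrap an actual search backend.
TOOLS = {
    "web_search": {
        "description": "Search the web and return a short summary.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
        "fn": lambda query: f"results for {query!r} (stub)",
    }
}


def handle(request: dict) -> dict:
    """Answer a JSON-RPC request the way a tiny tool server might."""
    method, params = request["method"], request.get("params", {})
    if method == "tools/list":
        # Advertise each tool's name, description, and input schema.
        result = {"tools": [
            {"name": n, "description": t["description"], "inputSchema": t["inputSchema"]}
            for n, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        text = TOOLS[params["name"]]["fn"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        # -32601 is the standard JSON-RPC "Method not found" code.
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# Any client that speaks the protocol can send the same two messages.
print(json.dumps(handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
print(json.dumps(handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                         "params": {"name": "web_search",
                                    "arguments": {"query": "MCP"}}})))
```

Because the client only depends on these message shapes and not on the server's code, the same skill server can sit behind several different Agent applications.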

3️⃣ Why is the universality of Skills epoch-making?

Why can OpenClaw use a protocol developed by Anthropic, the company behind Claude? Behind this is the victory of **decoupling**:

Separation of capability and brain: The model does not need to learn 'how to operate the Binance API' during training. It only needs to learn 'how to read the API documentation.' This means the model can be smaller and more specialized, while its capabilities can keep growing as new Skills are added.

Cross-platform 'Lego-ization': Just as the USB interface unified peripherals, MCP unifies AI skills. Developers only need to build a 'skill package' once to deploy it to any Agent framework that supports the protocol.

Controllability of the secure sandbox: A universal protocol allows permission checks to be performed at the 'interface layer.' For example, we can specify that a Guest ID can call only Weather_Skill, while Shell_Skill is reserved for an Admin ID.
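An interface-layer permission check can be as small as an allowlist consulted before any skill runs. The sketch below is an assumption-laden illustration: the `PERMISSIONS` table, the role names, and the `call_skill` wrapper are hypothetical, and the exact set of skills granted to each role is a choice made for the example.

```python
# Hypothetical role-to-skill allowlist, enforced before any skill runs.
PERMISSIONS = {
    "guest": {"Weather_Skill"},
    "admin": {"Weather_Skill", "Shell_Skill"},
}


def authorize(role: str, skill_name: str) -> bool:
    """Return True only if the role is explicitly allowed to call the skill."""
    return skill_name in PERMISSIONS.get(role, set())


def call_skill(role: str, skill_name: str) -> str:
    """Gatekeeper that every skill call passes through."""
    if not authorize(role, skill_name):
        raise PermissionError(f"{role} may not call {skill_name}")
    return f"{skill_name} executed for {role}"  # placeholder for the real call


print(call_skill("guest", "Weather_Skill"))
print(authorize("guest", "Shell_Skill"))
```

Unknown roles get an empty allowlist, so the check denies by default rather than relying on every role being listed.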

4️⃣ Commercial and ecological significance: The 'App Store' moment for AI

The maturity of Skills means that AI is shifting from Content Generation to Workflow Automation.

For individuals: You can combine different Skills to create a super avatar that can help you write code (Cursor Skill), monitor large on-chain transactions (Binance Skill), and even automatically tweet (Twitter Skill).

For developers: Skills are the new entry point for traffic. Search in the future may no longer mean Google, but whichever Skill the AI calls while solving a task.

5️⃣ The necessary path to Agentic Workflow

The power of AI lies not in how much it 'knows' but in how much it can 'mobilize.' The rise of open-source tools like OpenClaw is essentially a race to occupy the role of the Agent's scheduling center.

When the brain (LLM) is smart enough and the interface (MCP/Skills) is unified enough, all that’s left is imagination.