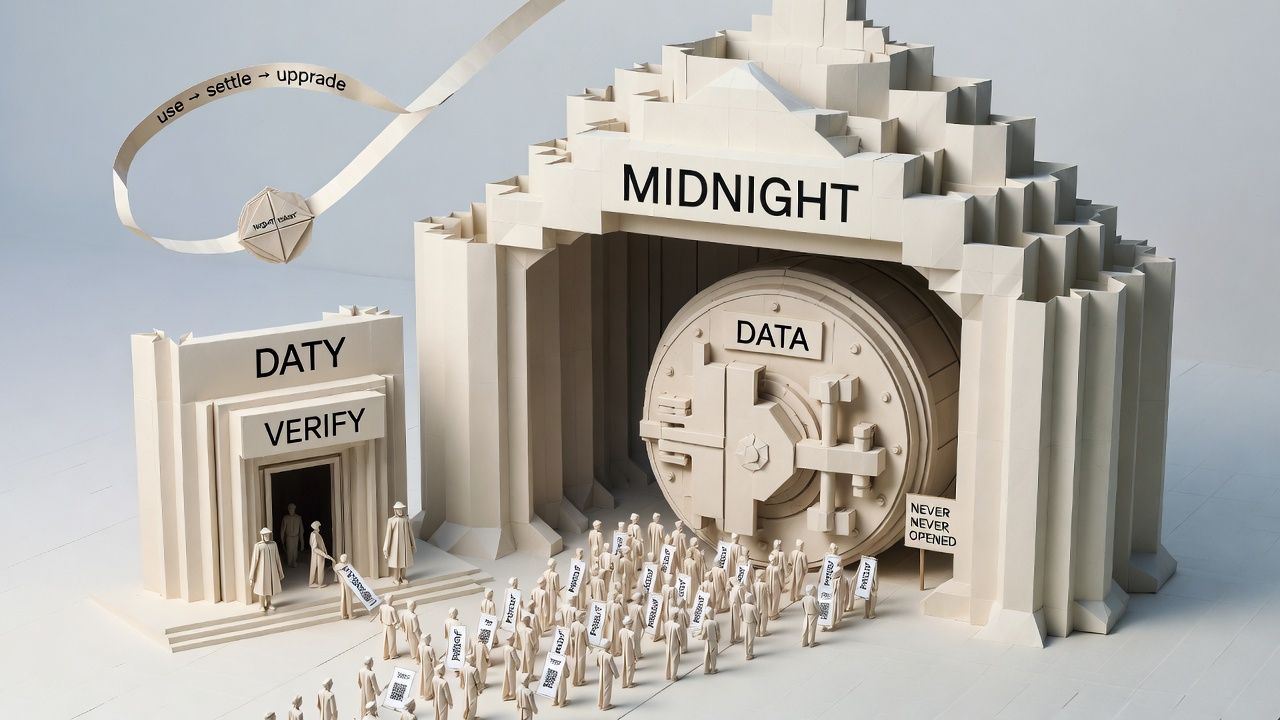

There is an invisible tax on-chain that gets harder to notice the longer you pay it: every verification asks for a little more information. You only want to clear a threshold, comply with a rule, or pass a review, but the process assumes you should hand over the original materials. Once those originals enter the system, control starts to leak: they are archived, copied, correlated, and re-analyzed until they become a profile in someone else's hands. You have not "done anything wrong," yet you are forced to become transparent, and transparency is irreversible.

@MidnightNetwork's pitch is restrained: utility without compromising data protection or ownership. It does not ask you to hide; it lets you keep doing your work while taking "disclosure" out of the default interaction.

1) What ZK means here: from 'trust you' to 'verify you'

Many real-world processes need only a single conclusion: you meet the conditions, you have not exceeded a limit, you do hold the rights, you have followed the rules. The traditional on-chain approach trades originals for trust: the more raw information you hand over, the more the system is willing to permit. Midnight uses ZK to reverse that logic: a verifiable conclusion is enough, and the originals never need to leave your hands.

This is not metaphysics; it is an upgrade to the interaction model. What you submit is a 'verifiable proof', not 'reusable fragments of private data'. The more cross-platform collaboration and multi-party review there is, the more this model looks like infrastructure rather than a decorative feature.
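To make 'submit a proof, not the original' concrete, here is a minimal sketch in Python. It is not Midnight's proof system: it is a toy Schnorr-style proof of knowledge, made non-interactive with the Fiat-Shamir heuristic, and the parameters and function names (`keygen`, `prove`, `verify`) are assumptions chosen only for illustration. The prover convinces a verifier that it knows the secret behind a public value without ever sending that secret.

```python
import hashlib
import secrets

# Toy parameters (assumption: far too small for real use).
# P is a safe prime, Q = (P - 1) // 2 is prime, and G generates
# the subgroup of order Q inside the integers modulo P.
P = 2039
Q = 1019
G = 4


def challenge(public_key: int, commitment: int) -> int:
    """Derive the challenge by hashing the public transcript (Fiat-Shamir)."""
    data = f"{G}:{public_key}:{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen() -> tuple[int, int]:
    """Return (secret, public_key); the secret never leaves the user's side."""
    secret = secrets.randbelow(Q - 1) + 1
    return secret, pow(G, secret, P)


def prove(secret: int, public_key: int) -> tuple[int, int]:
    """Produce a proof of knowing `secret` without revealing it."""
    nonce = secrets.randbelow(Q - 1) + 1
    commitment = pow(G, nonce, P)
    c = challenge(public_key, commitment)
    response = (nonce + c * secret) % Q
    return commitment, response


def verify(public_key: int, proof: tuple[int, int]) -> bool:
    """Check the proof using public values only."""
    commitment, response = proof
    c = challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P


if __name__ == "__main__":
    secret, public_key = keygen()
    proof = prove(secret, public_key)
    print("proof accepted:", verify(public_key, proof))
```

The verifier sees only `public_key` and the proof; the secret stays with the user, which is the 'conclusion instead of original' pattern the section describes. A production system would use a vetted proving library and full-size parameters rather than this sketch.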

2) Why data ownership is so easily lost: processes love to 'keep copies'

What steals ownership is usually not a hacker but routine process. Logs, forms, audit materials, risk-control samples... To make review easier, systems naturally want to store more and keep it longer. So a user's privacy is not disclosed all at once; it is handed over in installments. Once the fragments pile up past a certain point, you have been profiled, and even you can no longer say exactly which boundaries have been exposed.

@MidnightNetwork aims to make 'verifiable' the alternative path: whatever needs verifying still gets verified, but what is released should, wherever possible, be the conclusion. Only when the originals stay on the user's side or within a controlled domain does it make sense to talk about ownership.

3) The real job of the token: turning 'proof capability' into a long-term supply

Proof generation, verification, infrastructure maintenance, toolchain support: none of these are free. If the network is to make 'submit a conclusion' the default experience, the capability must be supplied over the long term, the costs must be sustainable, and the rules must be able to evolve and be corrected. $NIGHT reads here more like a network component: it has to cover the consumption that usage creates, and also support long-running tasks such as upgrades, parameter adjustments, and exception handling. Otherwise the system meets a familiar ending: the concept is right, the experience is heavy, and in the end everyone retreats to 'disclose first, then run'.

4) The harshest test: it has to work as well as an ordinary product to count as infrastructure

ZK projects most often fail by being 'correct but hard to use': complex integration, unpredictable costs, interactions that feel like solving a puzzle. @MidnightNetwork has to pass the product gate: developers treat proofs as components, users treat verification as a routine action, and the whole process is as seamless as possible. Only when 'less exposure, and things still get done' becomes the default experience will Midnight be more than a privacy narrative and become a more rational on-chain order.

Ending

The value of Midnight Network lies not in making the world mysterious but in making verification possible without disclosure: data protection and ownership stop being a cost, and usability stops being a trade-off. Its aim is that people finally no longer have to choose between 'usable' and 'not exposed'.