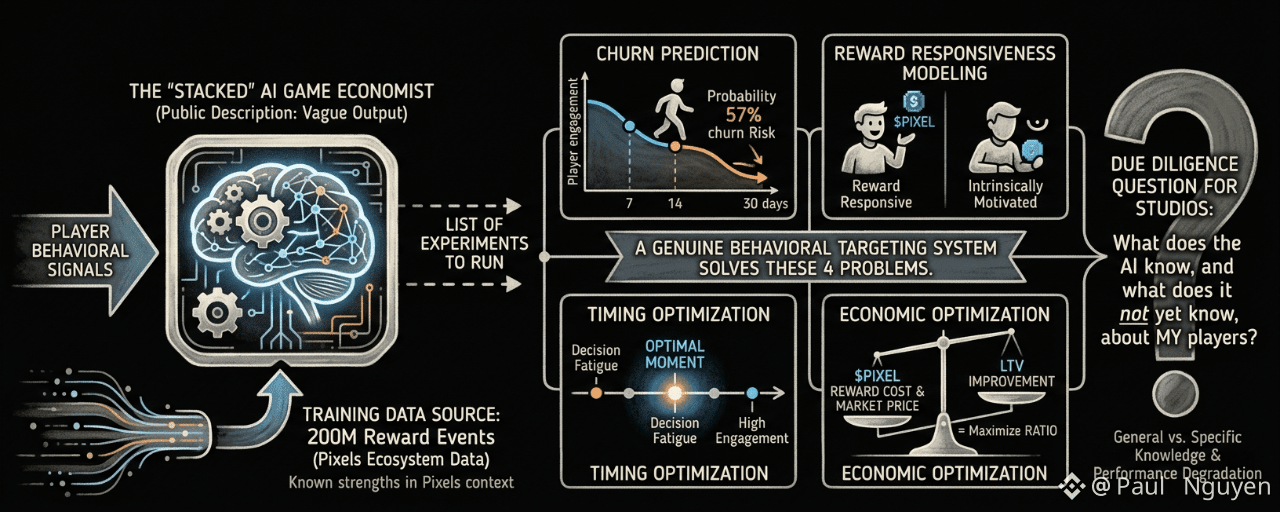

The AI game economist is the most differentiated feature in the Stacked pitch. It's also the feature that gets the least specific description of what it actually does. "Analyzes player behavior to surface experiments worth running" tells you the output: a list of experiments. It doesn't tell you the mechanism: what behavioral signals it analyzes, how those signals are converted into experiment suggestions, what confidence levels those suggestions carry, and how the confidence changes over time as the model processes more data from a specific studio.

Understanding the mechanism matters because the mechanism determines when to trust the recommendations, when to be skeptical of them, and how to detect when the model is generating low-quality suggestions that look plausible but aren't grounded in sufficient data.

Let me describe what a well-designed behavioral targeting system for gaming LiveOps actually needs to do, and then compare that against what we can infer about Stacked's AI from its public description.

A genuine behavioral targeting system needs to solve at least four problems simultaneously.

Churn prediction: given a player's current behavioral state, what is the probability that they will churn within the next 7, 14, or 30 days? This requires a time-series model of player engagement, calibrated to the specific game's session patterns and content cadence. The model needs to distinguish between "this player is taking a normal break" and "this player is showing early churn signals," and those patterns are specific to each game's typical engagement rhythm.
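To make the "normal break" versus "early churn signal" distinction concrete, here is a minimal sketch that scores a player's current absence against their own historical rhythm. It is an illustrative heuristic, not a description of Stacked's model. The function name, the `min_spread_days` floor, and the example data are all assumptions.

```python
from statistics import mean, stdev


def absence_anomaly(session_days, today, min_spread_days=0.5):
    """Score how unusual the current absence is for this player.

    session_days: ascending day numbers on which the player had a session.
    Returns a z-score-like value: the current gap minus the player's mean
    gap, divided by the spread of their gaps (floored at min_spread_days,
    a hypothetical guard for very regular players).
    """
    gaps = [later - earlier for earlier, later in zip(session_days, session_days[1:])]
    if len(gaps) < 2:
        raise ValueError("need at least three sessions to estimate a rhythm")
    spread = max(stdev(gaps), min_spread_days)
    current_gap = today - session_days[-1]
    return (current_gap - mean(gaps)) / spread


# The same four-day absence, read against two different rhythms (made-up data).
daily_player = [0, 1, 2, 4, 5, 6]
weekly_player = [0, 7, 13, 21, 28]
print(absence_anomaly(daily_player, today=10))   # large positive: unusual for this player
print(absence_anomaly(weekly_player, today=32))  # negative: still inside the usual rhythm
```

A production churn model would predict a probability over a 7, 14, or 30 day horizon rather than a single score, but the point carries over: the same absence means different things in different games.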

Reward responsiveness modeling: given a player's profile, how likely are they to respond to a $PIXEL reward, and how large does that reward need to be to change their behavior? Not every player responds to rewards equally. Some players are highly reward-responsive: a small reward at the right moment significantly changes their behavior. Others are primarily intrinsically motivated and treat rewards as pleasant surprises rather than behavioral nudges. A targeting system that doesn't distinguish between these profiles wastes rewards on the intrinsically motivated and under-rewards the reward-responsive.
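One simple way to put a number on responsiveness is uplift: the return rate of rewarded players minus the return rate of comparable unrewarded players, computed per segment. The sketch below assumes a holdout group exists for each segment. The segment labels and the data are invented for illustration and are not drawn from Stacked.

```python
from collections import defaultdict


def uplift_by_segment(records):
    """Return {segment: return rate with reward - return rate without}.

    records: iterable of (segment, rewarded, returned) tuples, where
    rewarded and returned are booleans. Segments lacking either a
    rewarded or an unrewarded group are skipped.
    """
    # counts[segment][rewarded] = [players who returned, players total]
    counts = defaultdict(lambda: {True: [0, 0], False: [0, 0]})
    for segment, rewarded, returned in records:
        cell = counts[segment][rewarded]
        cell[0] += int(returned)
        cell[1] += 1

    uplift = {}
    for segment, cells in counts.items():
        (ret_t, n_t), (ret_c, n_c) = cells[True], cells[False]
        if n_t and n_c:
            uplift[segment] = ret_t / n_t - ret_c / n_c
    return uplift


# Made-up data: (segment, rewarded, returned)
records = (
    [("reward_responsive", True, True)] * 8 + [("reward_responsive", True, False)] * 2
    + [("reward_responsive", False, True)] * 4 + [("reward_responsive", False, False)] * 6
    + [("intrinsic", True, True)] * 9 + [("intrinsic", True, False)] * 1
    + [("intrinsic", False, True)] * 9 + [("intrinsic", False, False)] * 1
)
print(uplift_by_segment(records))
```

In this toy data the intrinsically motivated segment returns at the same rate with or without the reward, so every token spent there buys nothing.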

Timing optimization: given that a player is both a churn risk and reward-responsive, what is the optimal moment in their session, or in the gap between sessions, to fire the reward? Timing matters in behavioral economics. The same reward has a different effect when offered at a moment of high engagement than when offered at a moment of low engagement or decision fatigue.
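A rough sketch of the timing question: compare response rates across candidate moments, while keeping a thinly observed moment from winning on a couple of lucky offers. The slot names, the counts, and the prior values are hypothetical, and the comparison is observational rather than causal.

```python
def best_timing_slot(outcomes, prior_responses=3, prior_offers=10):
    """Pick the slot with the highest smoothed response rate.

    outcomes: {slot_name: (responses, offers)}.
    The prior acts like ten imaginary offers at a 30% response rate
    (hypothetical values), so sparsely observed slots are pulled toward
    that rate instead of winning on two lucky observations.
    """
    scores = {
        slot: (responses + prior_responses) / (offers + prior_offers)
        for slot, (responses, offers) in outcomes.items()
    }
    return max(scores, key=scores.get), scores


outcomes = {
    "session_start": (30, 100),
    "after_quest_complete": (45, 100),
    "two_days_idle": (2, 2),  # 100% raw rate, but only two offers
}
slot, scores = best_timing_slot(outcomes)
print(slot, scores)
```

Without the smoothing, `two_days_idle` would look like the best moment on the strength of two observations.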

Economic optimization: given that rewards have a real cost in $PIXEL, and $PIXEL has a market price, what is the reward size that maximizes the ratio of LTV improvement to reward cost? This requires the AI to reason about not just behavioral outcomes but economic outcomes, integrating the cost of the reward into the optimization function.
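The ratio described above can be sketched in a few lines. Everything here is assumed for illustration: the candidate reward sizes, the LTV lift estimates, and the token prices are invented, and holding the lift estimates fixed while the price moves is a simplification.

```python
def best_reward_size(ltv_lift_usd, token_price_usd, min_ratio=1.0):
    """Return (tokens, ratio) maximizing LTV lift per dollar of reward cost.

    ltv_lift_usd: {reward size in tokens: expected LTV lift in USD}.
    Returns None when no size reaches min_ratio (break-even by default).
    """
    best = None
    for tokens, lift in ltv_lift_usd.items():
        cost = tokens * token_price_usd
        if cost <= 0:
            continue
        ratio = lift / cost
        if ratio >= min_ratio and (best is None or ratio > best[1]):
            best = (tokens, ratio)
    return best


# Hypothetical lift estimates per reward size, evaluated at two token prices.
lifts = {10: 0.30, 50: 0.90, 200: 1.50}
print(best_reward_size(lifts, token_price_usd=0.01))
print(best_reward_size(lifts, token_price_usd=0.05))
```

With these numbers the smallest reward is the most efficient at the lower price, and no reward size breaks even at the higher one. The behavioral estimates did not change between the two calls; only the token price did.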

A system that genuinely solves all four of these problems simultaneously, for multiple player types, across varying game contexts, is a sophisticated piece of behavioral economics engineering. The Stacked AI may do all of this. The public description doesn't tell you.

What we can infer: Stacked has been running in the Pixels ecosystem for long enough to have trained the model on substantial behavioral data. The 200M reward events provide a large supervised learning dataset: for each reward event, the model knows what the player's pre-reward behavioral state was, what reward was given, and what the player's post-reward behavior looked like. This is genuinely good training data for learning reward responsiveness patterns in the Pixels context.
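As a sketch of what one such training example might look like, the record below pairs a pre-reward state with the reward given and the observed outcome. The field names are hypothetical; Stacked's actual schema is not public.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardEvent:
    # Pre-reward behavioral state (hypothetical features)
    sessions_last_7d: int
    days_since_last_session: float
    # The intervention
    reward_tokens: float
    # Post-reward behavior (hypothetical label)
    returned_within_7d: bool


def to_training_pair(event):
    """Split an event into (features, label) for a supervised learner."""
    features = (event.sessions_last_7d, event.days_since_last_session, event.reward_tokens)
    return features, int(event.returned_within_7d)


event = RewardEvent(sessions_last_7d=3, days_since_last_session=2.5,
                    reward_tokens=10.0, returned_within_7d=True)
print(to_training_pair(event))
```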

What we can't infer: how well the four problems above are solved for player types outside the Pixels ecosystem. The churn prediction model trained on Pixels' daily session patterns may generalize poorly to a game with weekly engagement cycles. The reward responsiveness model trained on crypto-native players may generalize poorly to casual players who are less familiar with $PIXEL. The timing optimization trained on Pixels' specific session structure may generalize poorly to a different game's session patterns. The economic optimization doesn't generalize at all because it depends on the $PIXEL price, which changes independently of any game-specific factors.
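A studio can at least measure how far its own players sit from the training population before trusting a transferred model. The sketch below computes the two-sample Kolmogorov-Smirnov statistic on inter-session gaps. Both samples are invented, and using this statistic as a shift check is my suggestion rather than anything Stacked documents.

```python
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between
    the two empirical CDFs. 0 means the empirical distributions coincide,
    1 means they do not overlap at all."""
    a, b = sorted(sample_a), sorted(sample_b)
    if not a or not b:
        raise ValueError("both samples must be non-empty")
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )


# Days between sessions (made-up): a daily-cadence game vs a weekly-cadence game.
daily_gaps = [1, 1, 2, 1, 1, 3]
weekly_gaps = [7, 6, 8, 7, 7, 9]
print(ks_statistic(daily_gaps, weekly_gaps))
```

A value near 1 on a feature the churn model relies on is a signal that the model is being asked about players it has effectively never seen.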

The honest representation of the AI game economist's capability would include a description of which of these four problems it has been built to solve, what performance it has achieved on each in the Pixels context, and what the expected performance degradation is when deployed in a new game context.

Instead, the public description gives you the output: "surface experiments worth running." That's the right output. The mechanism behind it determines whether you should trust those surfaced experiments on day one, on day thirty, or on day one hundred of your integration.
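One way to reason about day one versus day one hundred is shrinkage: blend a prior learned elsewhere with the studio's own observations, weighting local data more as it accumulates. This is a generic technique, not a claim about how Stacked works. The `prior_weight` constant, the rates, and the offer counts are all hypothetical.

```python
def blended_estimate(prior_rate, local_responses, local_offers, prior_weight=200):
    """Blend a prior response rate with a studio's own observations.

    prior_weight is a hypothetical tuning constant: the number of local
    offers at which local data and the prior count equally.
    Returns (estimate, share of the estimate driven by local data).
    """
    local_share = local_offers / (local_offers + prior_weight)
    local_rate = local_responses / local_offers if local_offers else 0.0
    estimate = local_share * local_rate + (1 - local_share) * prior_rate
    return estimate, local_share


# Hypothetical studio whose players respond at 20% against a 40% prior.
for day, offers in [(1, 50), (30, 1500), (100, 5000)]:
    estimate, share = blended_estimate(0.40, round(0.20 * offers), offers)
    print(f"day {day}: estimate={estimate:.3f}, local share={share:.2f}")
```

Early on, the estimate mostly restates what was learned elsewhere. A studio that knows this can treat early recommendations as inherited assumptions rather than findings about its own players.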

I find the AI game economist concept genuinely compelling. A behavioral targeting system with 200M training examples is not a toy. The institutional knowledge embedded in that training data is real. The question is how much of that knowledge is general and how much is specific, and the answer to that question is somewhere inside Stacked's model architecture documentation, which doesn't appear to be public.

For studios evaluating Stacked, this is the due diligence question worth asking directly. Not "does the AI work?" It clearly works in Pixels. But "what does the AI know, and what does it not yet know, about my players?"