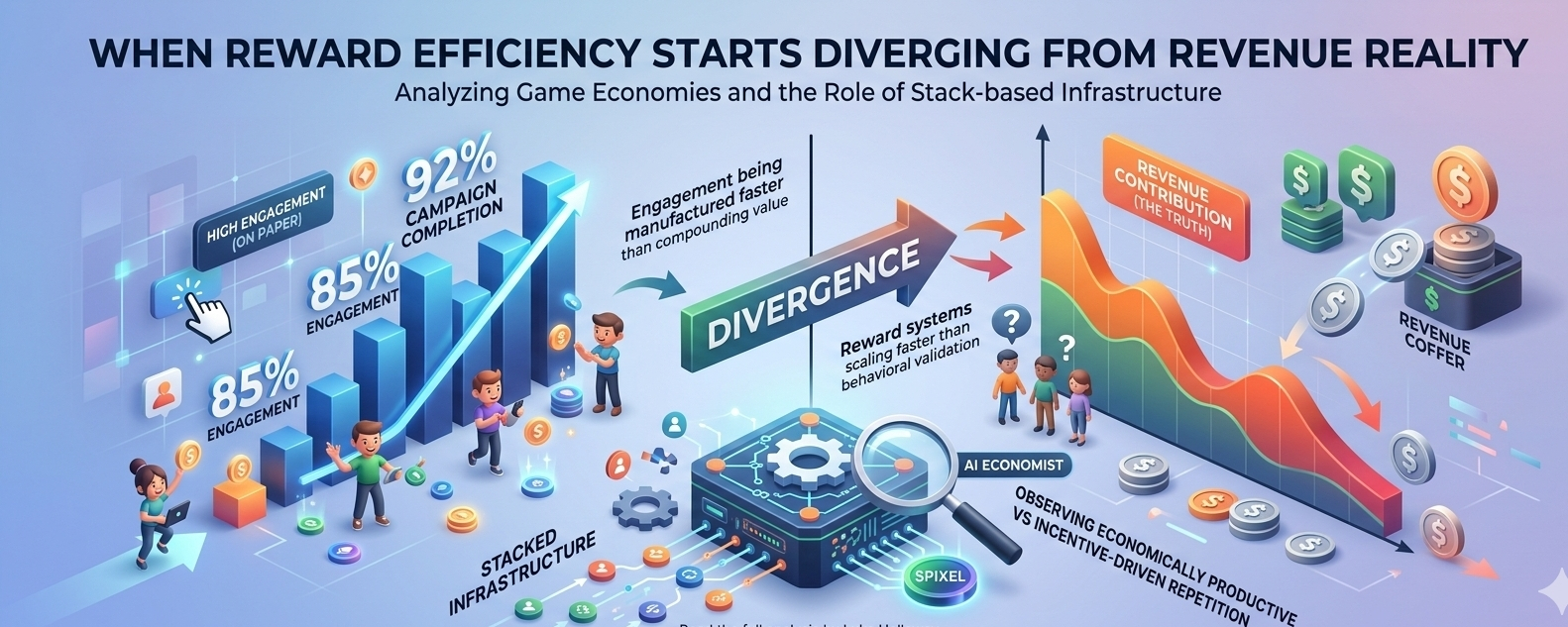

Last week, I was reviewing a cohort report where retention looked unusually strong on paper. Users were “active,” reward participation was high, and campaign completion rates were near peak levels. But when I traced it back to revenue contribution, the curve didn’t match. Engagement was being manufactured faster than it was compounding into real value.

That mismatch is something I’ve seen before in older game economies, especially when reward systems scale faster than behavioral validation.

Inside @Pixels’ Stacked infrastructure, this is exactly the type of signal the system is designed to surface. The platform doesn’t treat engagement as a flat metric. It breaks it down into how much of that engagement is economically productive versus how much is incentive-driven repetition. That distinction becomes critical when reward loops expand across multiple games.
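To make that split concrete, here is a minimal sketch of how I would approximate it by hand. It is not how Stacked classifies anything internally. The session fields (`revenue`, `reward_claimed`) and the three labels are my own assumptions for illustration.

```python
from collections import Counter

LABELS = ("productive", "incentive_driven", "organic")

def classify_session(revenue, reward_claimed):
    """Label one session. The rules here are illustrative assumptions."""
    if revenue > 0:
        return "productive"        # the session contributed revenue
    if reward_claimed:
        return "incentive_driven"  # reward claimed, no revenue contribution
    return "organic"               # neither revenue nor reward

def engagement_breakdown(sessions):
    """Return the share of sessions falling under each label."""
    counts = Counter(
        classify_session(s["revenue"], s["reward_claimed"]) for s in sessions
    )
    total = sum(counts.values())
    if total == 0:
        return {label: 0.0 for label in LABELS}
    return {label: counts[label] / total for label in LABELS}

# Hypothetical sample data, not taken from any real report.
sample_sessions = [
    {"revenue": 2.5, "reward_claimed": True},
    {"revenue": 0.0, "reward_claimed": True},
    {"revenue": 0.0, "reward_claimed": True},
    {"revenue": 0.0, "reward_claimed": False},
]
breakdown = engagement_breakdown(sample_sessions)
```

With this sample, half of the sessions are reward claims with no revenue behind them, which is the kind of gap a flat "active users" count hides.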

From an operator’s view, Stacked behaves less like a rewards engine and more like a live adjustment layer on top of game economies. It sits between player behavior and studio economics, constantly recalibrating how rewards are distributed based on observed outcomes. The AI economist layer is not just descriptive — it actively suggests where reward leakage is happening, which cohorts are over-incentivized, and which mechanics are quietly driving long-term retention decay.

On the technical side, the system’s strength comes from feedback loops. Rewards are not static distributions; they are conditional outputs tied to behavior clusters, time windows, and cohort response curves. That’s where $PIXEL indirectly becomes more than a token — it functions as a coordination asset across reward flows, allowing value to move between different experiences while still being measurable at the system level.

When I think about scalability, I keep coming back to a simple question: how many games can be plugged into this before signal degradation starts to happen? Because even well-designed systems break when input noise exceeds validation capacity. The more reward surfaces you open, the harder it becomes to ensure that what looks like “retention” is actually persistence rather than optimized farming behavior.

The risks here are subtle. Over-rewarding early engagement can flatten long-term curves. Poor cohort segmentation can make churn invisible until it compounds. And if fraud resistance lags behind behavioral adaptation, the system ends up training adversarial users instead of loyal ones.

What matters most in these environments isn’t DAU or even raw participation rates. It’s retention shape over time, reward efficiency per retained user, and whether engagement survives after incentive pressure decreases. Those are the only signals that tell you if the system is actually stabilizing or just inflating.
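As a rough sketch of those three signals, assuming you have weekly rows per cohort: the `CohortWeek` fields, the function names, and the numbers below are all hypothetical and are not part of any Stacked API.

```python
from dataclasses import dataclass

@dataclass
class CohortWeek:
    week: int            # weeks since the cohort started
    active_users: int    # users from the cohort active that week
    rewards_paid: float  # reward value distributed that week (any unit)

def retention_curve(weeks, cohort_size):
    """Fraction of the original cohort still active in each week."""
    return [w.active_users / cohort_size for w in weeks]

def reward_cost_per_retained_user(weeks):
    """Total rewards paid divided by users still active in the final week."""
    total = sum(w.rewards_paid for w in weeks)
    final_active = weeks[-1].active_users
    return total / final_active if final_active else float("inf")

def post_incentive_survival(weeks, cut_week):
    """Final-week actives relative to the week just before rewards were cut."""
    before = next(w for w in weeks if w.week == cut_week - 1)
    return weeks[-1].active_users / before.active_users

# Hypothetical cohort of 1000 users; rewards are cut sharply from week 3.
weeks = [
    CohortWeek(0, 1000, 500.0),
    CohortWeek(1, 600, 300.0),
    CohortWeek(2, 450, 225.0),
    CohortWeek(3, 200, 20.0),
    CohortWeek(4, 150, 15.0),
]
curve = retention_curve(weeks, cohort_size=1000)
cost = reward_cost_per_retained_user(weeks)
survival = post_incentive_survival(weeks, cut_week=3)
```

In this made-up cohort, only a third of the users who were active just before the reward cut are still around at the end, which is the "inflating, not stabilizing" pattern the retention headline would have hidden.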

At scale, Stacked is closer to infrastructure than application logic. It’s a layer that tries to make game economies observable, adjustable, and partially self-correcting — but still dependent on the quality of human-designed parameters feeding into it. That tension never fully disappears.

And maybe that’s the most important takeaway: no matter how advanced the system becomes, it still reflects the discipline of its inputs more than the intelligence of its outputs. $PIXEL