I'll be honest. When I first read "AI game economist" I rolled my eyes a little 🙄. Every protocol slaps AI on something right now and calls it a differentiator. So I went digging into what this actually does mechanically, because the label means nothing without the function.

Here's what I found.

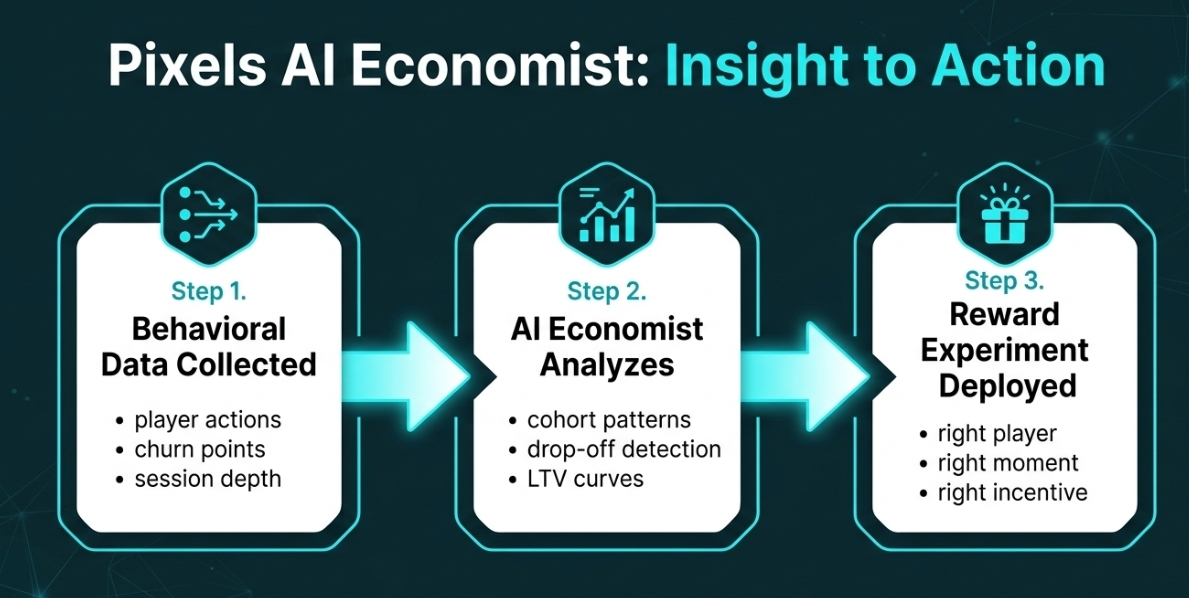

The AI layer in Pixels isn't a chatbot. It isn't a dashboard. It's a behavioral analysis engine sitting on top of the entire reward system. Studios using the platform can ask it real operational questions: Why are players dropping between day 3 and day 7? What are the most loyal users doing before day 30? Which mechanics actually correlate with long-term retention? And critically, it doesn't just answer those questions. It suggests reward experiments worth running next, then lets you act on those answers inside the same system.

That last part is the bit most people miss.

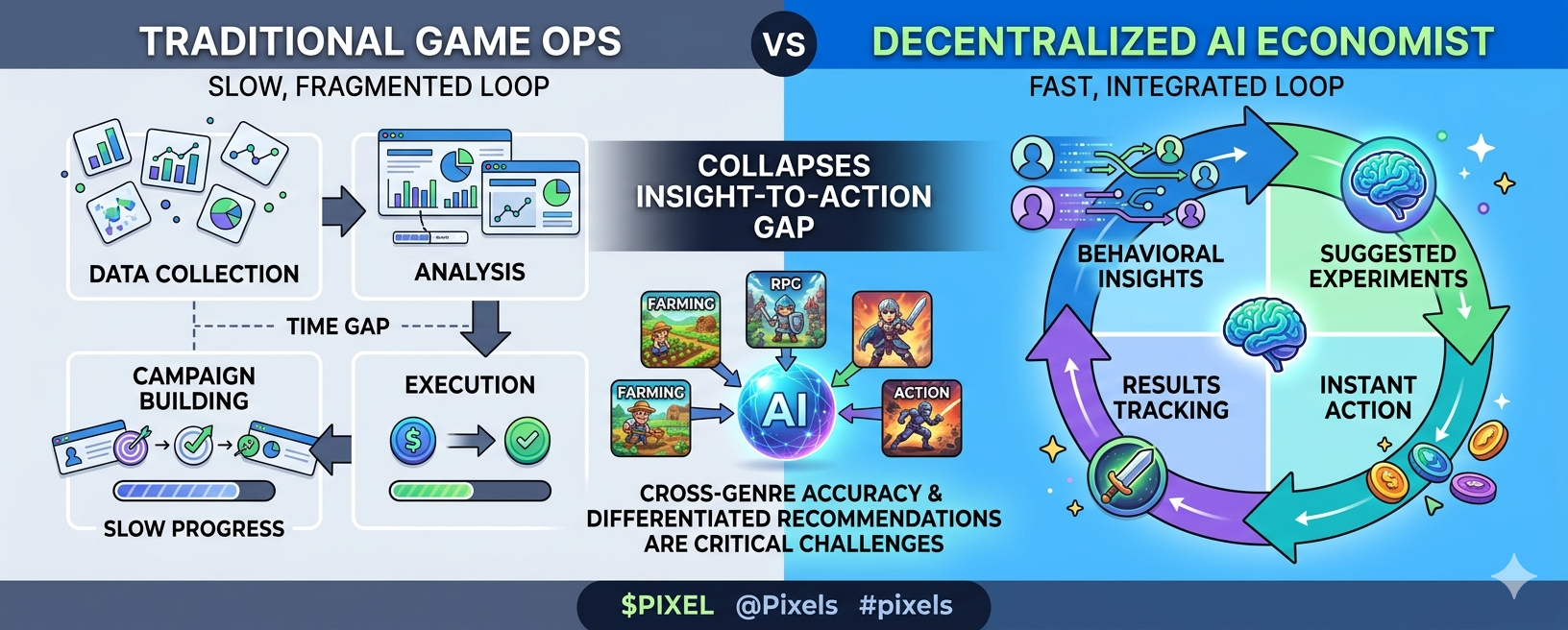

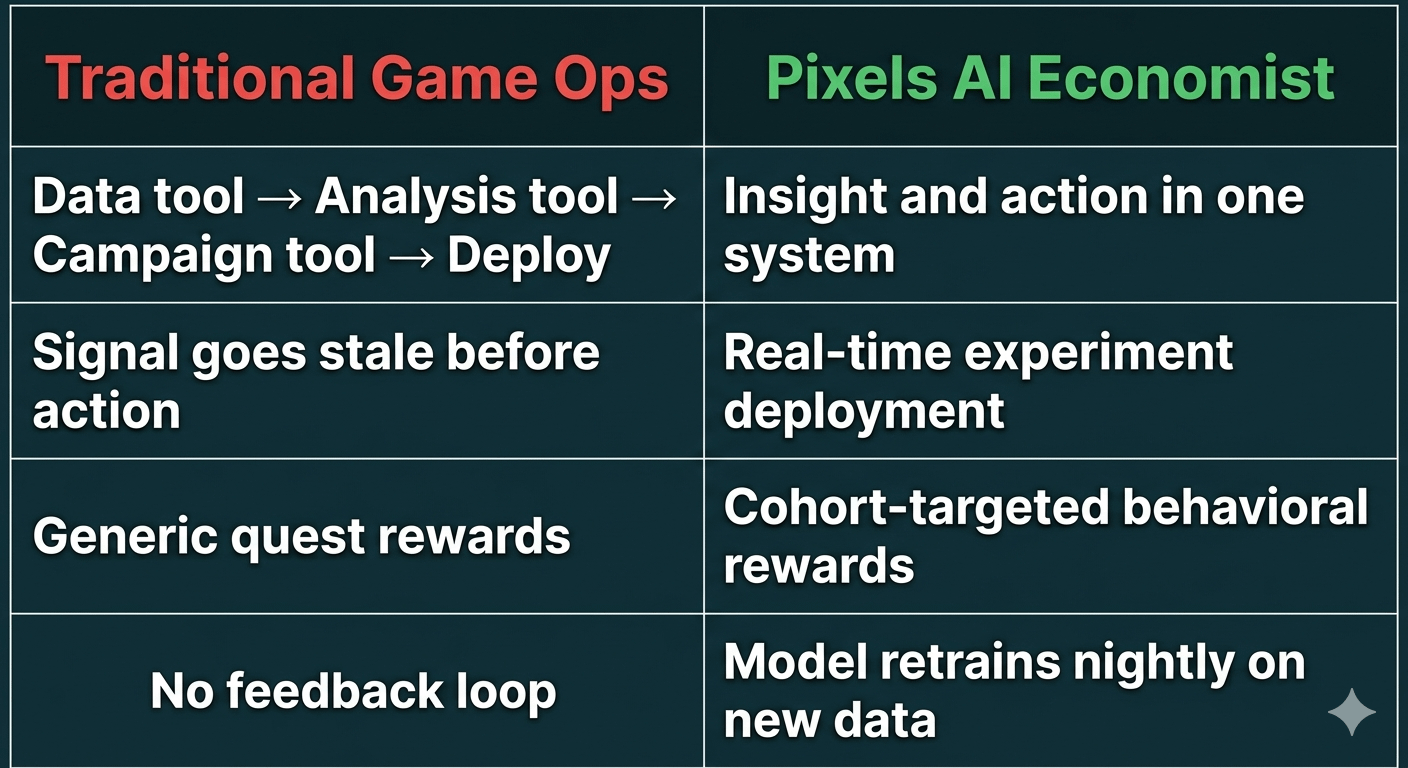

In traditional game operations, insight and action are separated. You pull data from one tool, analyze it in another, build a campaign in a third, deploy it through a fourth. By the time the experiment runs, the cohort you identified has already churned or converted. The signal is stale.

Pixels collapses that gap. The same system that surfaces the insight is the system that executes the reward. Insight to action with no waiting. I'm not sure people are appreciating how operationally significant that is for a live game studio trying to move fast.
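To make the "day 3 to day 7" question concrete, here's a minimal sketch of what insight and action in one place could look like. Everything in it is my own assumption: the function names, the session format, and the experiment spec are hypothetical, and this is not Pixels' API. Retention here means "seen on or after day N", which is only one of several common definitions.

```python
from collections import defaultdict


def retention_curve(sessions, days=(1, 3, 7, 30)):
    """sessions: iterable of (player_id, days_since_install) pairs.

    Returns {day: fraction of players seen on or after that day}.
    """
    last_seen = defaultdict(int)
    for player, day in sessions:
        last_seen[player] = max(last_seen[player], day)
    total = len(last_seen)
    return {d: sum(1 for v in last_seen.values() if v >= d) / total for d in days}


def steepest_drop(curve):
    """Return the (start_day, end_day) window with the largest retention loss."""
    days = sorted(curve)
    return max(zip(days, days[1:]), key=lambda w: curve[w[0]] - curve[w[1]])


def propose_experiment(window):
    """Hypothetical experiment spec; a real system would define its own schema."""
    start, end = window
    return {
        "target": f"players still active on day {start}",
        "trigger_day": start,
        "measure_day": end,
    }


# Toy data: four players, made up purely for illustration.
sessions = [
    ("a", 0), ("a", 1), ("a", 3), ("a", 8),
    ("b", 0), ("b", 1), ("b", 3),
    ("c", 0), ("c", 1), ("c", 4),
    ("d", 0), ("d", 1),
]

curve = retention_curve(sessions)
window = steepest_drop(curve)          # the day 3 to day 7 window in this toy data
experiment = propose_experiment(window)
```

The point isn't the math, which is trivial. It's that the output of the analysis feeds straight into the experiment definition, with no export and no second tool in between.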

But here's where I start asking harder questions.

The AI economist is only as good as the data it trains on. And right now, that data comes primarily from the Pixels ecosystem: core Pixels, Pixel Dungeons, and a handful of partner titles. That's not nothing; it has processed over 200 million rewards across millions of players. But behavioral patterns from a farming game don't necessarily generalize cleanly to an action RPG or a competitive title. The model might be confidently wrong when applied to a genre it hasn't seen enough of.

I've been thinking about this for a couple of days and I can't find where Pixels directly addresses cross-genre model accuracy. They talk about the dataset expanding as more games join, which is the right long-term answer. But a studio integrating today is betting on a model that was trained on a pretty narrow slice of game types.

There's also something worth sitting with about what "suggesting experiments" actually means at scale. If the AI is making similar recommendations across multiple games simultaneously, because they're all seeing similar churn patterns, you could end up with homogenized reward structures across the ecosystem. Every game running the same re-engagement campaign at day 5 because the model flagged the same drop-off point. That's not necessarily better game design. It might just be synchronized mediocrity.

I'm genuinely uncertain whether that risk is real or theoretical. It depends entirely on how the model personalizes recommendations per game versus how much it generalizes from the pooled dataset.
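One way to picture the personalize-versus-generalize tradeoff is partial pooling, a generic statistical technique where a per-game estimate gets blended with the pooled one based on how much data the game has. To be clear, I have no idea whether Pixels does anything like this. The function, the `prior_strength` knob, and the numbers below are all my assumptions, purely for illustration.

```python
def blended_estimate(game_value, game_n, pooled_value, prior_strength=1000):
    """Shrink a per-game estimate toward the pooled estimate.

    The weight on the game's own data is n / (n + prior_strength), so
    small games lean on the pool and large games lean on themselves.
    """
    weight = game_n / (game_n + prior_strength)
    return weight * game_value + (1 - weight) * pooled_value


# Made-up scenario: the pooled data says churn peaks around day 5,
# but a new action RPG's own data points at day 2.
small_game = blended_estimate(game_value=2.0, game_n=200, pooled_value=5.0)
large_game = blended_estimate(game_value=2.0, game_n=9000, pooled_value=5.0)
```

With only 200 players the estimate stays close to the pooled day 5, and with 9,000 it moves most of the way to the game's own day 2. If the real model sits near the pooled end for every new title, you get the synchronized playbook. If it shifts toward each game's own data as that data accumulates, the homogenization risk shrinks over time.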

What Pixels gets right here is the architecture. Collapsing insight and action into one system is genuinely hard to build and genuinely valuable when it works. The questions are about depth: how accurate is the model across genres it hasn't fully seen, and does the "suggest experiments" layer actually produce differentiated recommendations or does it converge toward the same playbook for every game?

Those aren't dealbreaker concerns. They're the kind of questions that only get answered after more games are live and more genres are in the dataset. Right now we're working with a system that's proven in one genre and theoretically extensible to others.

Does the AI economist get smarter and more differentiated as the ecosystem expands, or does it just get more confident about the same narrow set of patterns it already knows?