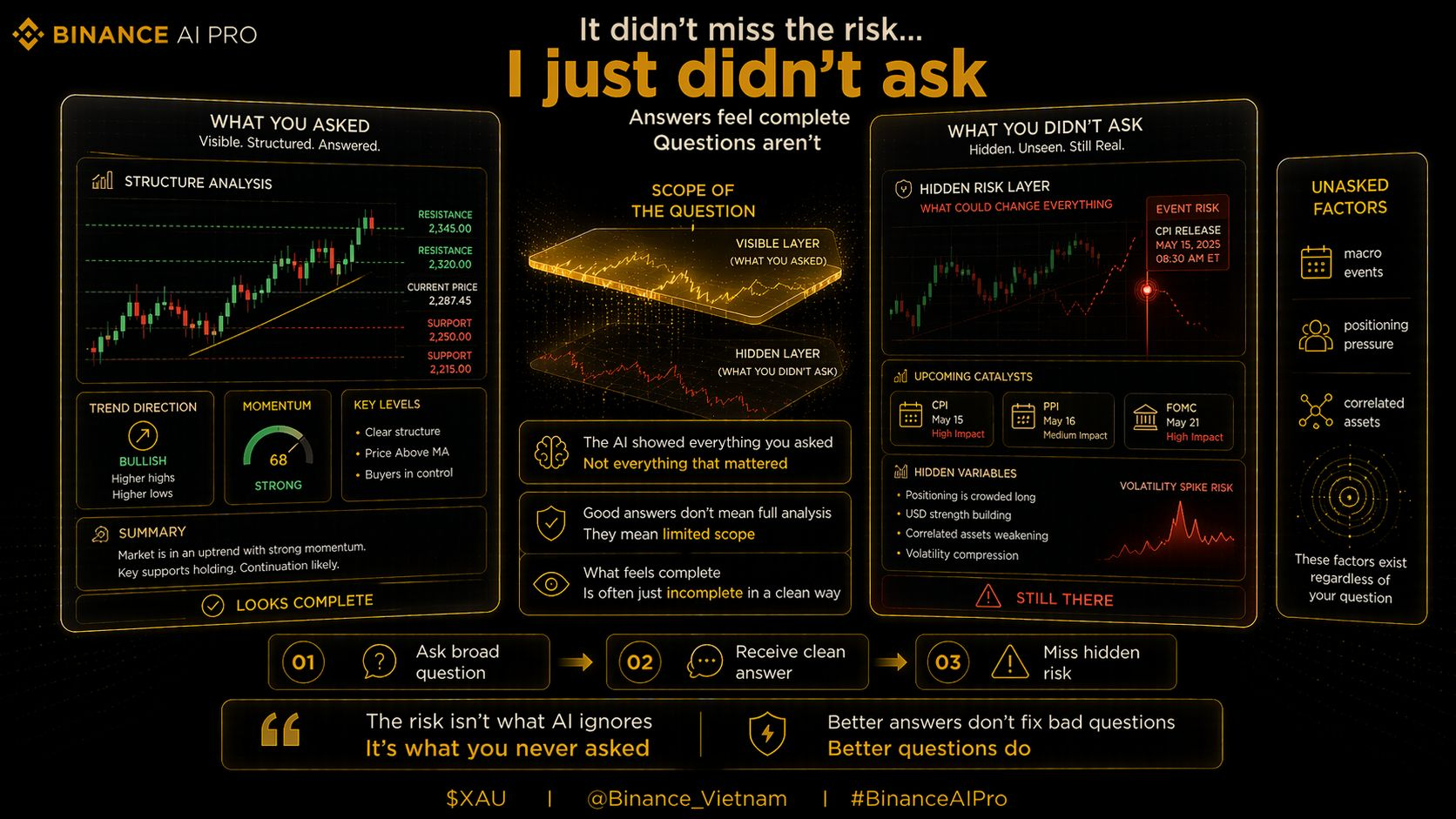

I used to think a “good session” with Binance AI Pro meant getting a clean, structured answer about XAU. Support, resistance, momentum, maybe some context. If it looked complete, I treated it like I had done proper analysis.

Then one trade made me rethink that completely.

I had a long position open. I asked the usual question, something like “what does the structure look like right now?” The answer came back solid. Nothing alarming. So I held.

Next day, the CPI numbers came out. Price moved against me pretty fast.

It wasn’t a black swan. The event was scheduled. I just… didn’t ask about it. And because I didn’t ask, AI Pro didn’t mention it.

That was the moment it clicked.

The tool didn’t miss anything. I did.

Since then I’ve started noticing a pattern in my sessions. Most of my questions were broad. And broad questions give broad answers. They feel complete, but they quietly leave out anything you didn’t explicitly open the door to.

Things like upcoming macro events, funding shifts, positioning pressure… they’re all there in the data. But they don’t show up unless you ask.

So now before entering anything on $XAU, I force myself to go a bit deeper.

Not just structure. I ask what events are coming up, whether positioning is crowded, and whether correlated assets are moving in a way that could drag price.

Takes a few extra minutes. But the output feels very different.

Less “clean”, maybe. But more real.

I think that’s the uncomfortable part. A well-written answer feels complete, even when it isn’t.

AI Pro gives you exactly what you ask for.

The risk is everything you didn’t think to ask.

Still learning this, still missing things sometimes.

But now I don’t really trust a session just because it looks thorough.

@Binance Vietnam #BinanceAIPro $XAU $PIEVERSE $GUN

Trading always carries risk. AI-generated suggestions do not constitute financial advice. Past performance does not reflect future results. Please check product availability in your region.