I’ve seen enough GameFi whitepapers promise intelligent incentive alignment while quietly falling back on blunt emission schedules and blanket multipliers.

They outline reward tiers.

They mention data collection.

They claim behavioral targeting.

Then real player actions hit the system, rewards feel arbitrary, and engagement metrics flatline like they always do.

Pixels’ whitepaper leans into a conceptually sharper technical foundation.

It starts with a simple, almost stubborn assumption: rewards must be allocated through large-scale data analysis and machine learning that identify the actions genuinely driving long-term ecosystem value, rather than raw activity volume. The whitepaper describes a comprehensive data-driven infrastructure, akin to a next-generation ad network, that uses real-time player telemetry, behavioral patterns, and contribution signals to distribute incentives dynamically. Smart Reward Targeting uses ML models to score player actions such as meaningful quest completion, user-generated content creation, consistent social engagement, and resource contributions that strengthen overall ecosystem health. It then directs the capped daily PIXEL emissions (100,000 new tokens) toward those actions instead of toward passive farming loops.
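
To make the allocation idea concrete, here is a minimal Python sketch of how a capped daily pool could be split in proportion to per-player contribution scores. Only the 100,000-token daily cap comes from the text above. The action names, the hand-picked `ACTION_WEIGHTS` (a linear stand-in for a trained model), and the `PlayerDay`, `score`, and `allocate` names are hypothetical illustrations, not part of any Pixels or Stacked API.

```python
from dataclasses import dataclass, field

# Daily emission cap quoted in the text: 100,000 new tokens per day.
DAILY_CAP = 100_000

# HYPOTHETICAL per-action weights standing in for a trained ML value model.
# Passive farming is deliberately weighted at zero.
ACTION_WEIGHTS = {
    "quest_completed": 3.0,
    "ugc_created": 5.0,
    "social_interaction": 1.0,
    "resource_contributed": 2.0,
    "passive_farm_tick": 0.0,
}


@dataclass
class PlayerDay:
    """One player's action counts for a single day (hypothetical schema)."""
    player_id: str
    actions: dict[str, int] = field(default_factory=dict)  # action name -> count


def score(day: PlayerDay) -> float:
    """Linear stand-in for a model score: sum of weight * count per action."""
    return sum(ACTION_WEIGHTS.get(a, 0.0) * n for a, n in day.actions.items())


def allocate(days: list[PlayerDay], cap: int = DAILY_CAP) -> dict[str, int]:
    """Split `cap` whole tokens in proportion to score (largest-remainder rounding)."""
    scores = {d.player_id: score(d) for d in days}
    total = sum(scores.values())
    if total == 0:
        return {pid: 0 for pid in scores}  # nothing worth rewarding today
    exact = {pid: cap * s / total for pid, s in scores.items()}
    alloc = {pid: int(x) for pid, x in exact.items()}  # floor of each share
    leftover = cap - sum(alloc.values())
    # Give the remaining whole tokens to the largest fractional remainders.
    ranked = sorted(exact, key=lambda pid: exact[pid] - alloc[pid], reverse=True)
    for pid in ranked[:leftover]:
        alloc[pid] += 1
    return alloc


if __name__ == "__main__":
    days = [
        PlayerDay("alice", {"quest_completed": 2, "ugc_created": 1}),            # score 11
        PlayerDay("bob", {"social_interaction": 4, "resource_contributed": 1}),  # score 6
        PlayerDay("carol", {"passive_farm_tick": 500}),                          # score 0
    ]
    print(allocate(days))  # {'alice': 64706, 'bob': 35294, 'carol': 0}
```

Largest-remainder rounding keeps every payout a whole token while making the payouts sum exactly to the cap, and a player who only farms passively scores zero and receives nothing, however much raw activity they log.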

The broader ambition goes beyond static token mechanics. Pixels integrates this targeting layer into a hardened ecosystem where machine learning continuously refines reward logic based on retention signals, churn predictors, and value-creation metrics. Recent execution through the Stacked AI-powered infrastructure extends this capability with an embedded “AI game economist” that analyzes player movements captured through its SDK in real time, suggests optimal LiveOps campaigns, and personalizes offers without manual intervention. Stacked already powers internal titles and is opening to external studios, allowing plain-language queries for churn analysis or budget optimization, while shifting some payouts toward USDC to ease direct PIXEL selling pressure.
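
The "continuously refines reward logic based on retention signals" step can be pictured as a feedback loop that nudges action weights toward whatever correlates with players coming back. The sketch below is a deliberately naive, hypothetical heuristic (per-action retention lift applied with a learning rate `lr`), not the Stacked implementation, which the text does not describe at this level; `retention_lift`, `update_weights`, and the observation format are all assumptions.

```python
from typing import Optional

# HYPOTHETICAL observation format, one per player:
# (set of actions performed this week, retained next week?)
Observation = tuple[frozenset, bool]


def retention_lift(obs: list[Observation], action: str) -> Optional[float]:
    """Retention rate among players who did `action`, divided by overall retention.

    A naive correlational signal: > 1 means the action co-occurs with staying,
    < 1 means it co-occurs with churn. Returns None when there is no evidence.
    """
    if not obs:
        return None
    base = sum(retained for _, retained in obs) / len(obs)
    did = [retained for acts, retained in obs if action in acts]
    if not did or base == 0:
        return None
    return (sum(did) / len(did)) / base


def update_weights(weights: dict[str, float], obs: list[Observation],
                   lr: float = 0.2) -> dict[str, float]:
    """Nudge each action weight multiplicatively toward its retention lift."""
    new = {}
    for action, w in weights.items():
        lift = retention_lift(obs, action)
        if lift is None:
            new[action] = w  # no data this round: keep the old weight
        else:
            # Clamp at zero so a large learning rate can never produce a negative weight.
            new[action] = max(0.0, w * (1 + lr * (lift - 1)))
    return new


if __name__ == "__main__":
    weights = {"quest_completed": 3.0, "passive_farm_tick": 1.0, "resource_contributed": 2.0}
    week = [
        (frozenset({"quest_completed", "ugc_created"}), True),
        (frozenset({"quest_completed"}), True),
        (frozenset({"passive_farm_tick"}), False),
        (frozenset({"passive_farm_tick", "social_interaction"}), True),
    ]
    print(update_weights(weights, week))
```

In this toy week, quest completion co-occurs with retention, so its weight rises to about 3.2; passive farming co-occurs with churn and drops to about 0.93; `resource_contributed` keeps its weight because nobody in the sample did it. Because the lift is purely correlational, an action that merely co-occurs with already-loyal players gets boosted. A real system would need controls for that confounding, which is one concrete form of the scoring-bias problem discussed later in this piece.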

It’s a cleaner technical framework than most. Behavioral analytics become the core allocation engine, and adaptive ML loops let player data shape reward distribution: less brain-dead yield spraying, more precise alignment that could, in theory, sustain engagement once the initial hype normalizes and daily tasks lose their novelty.

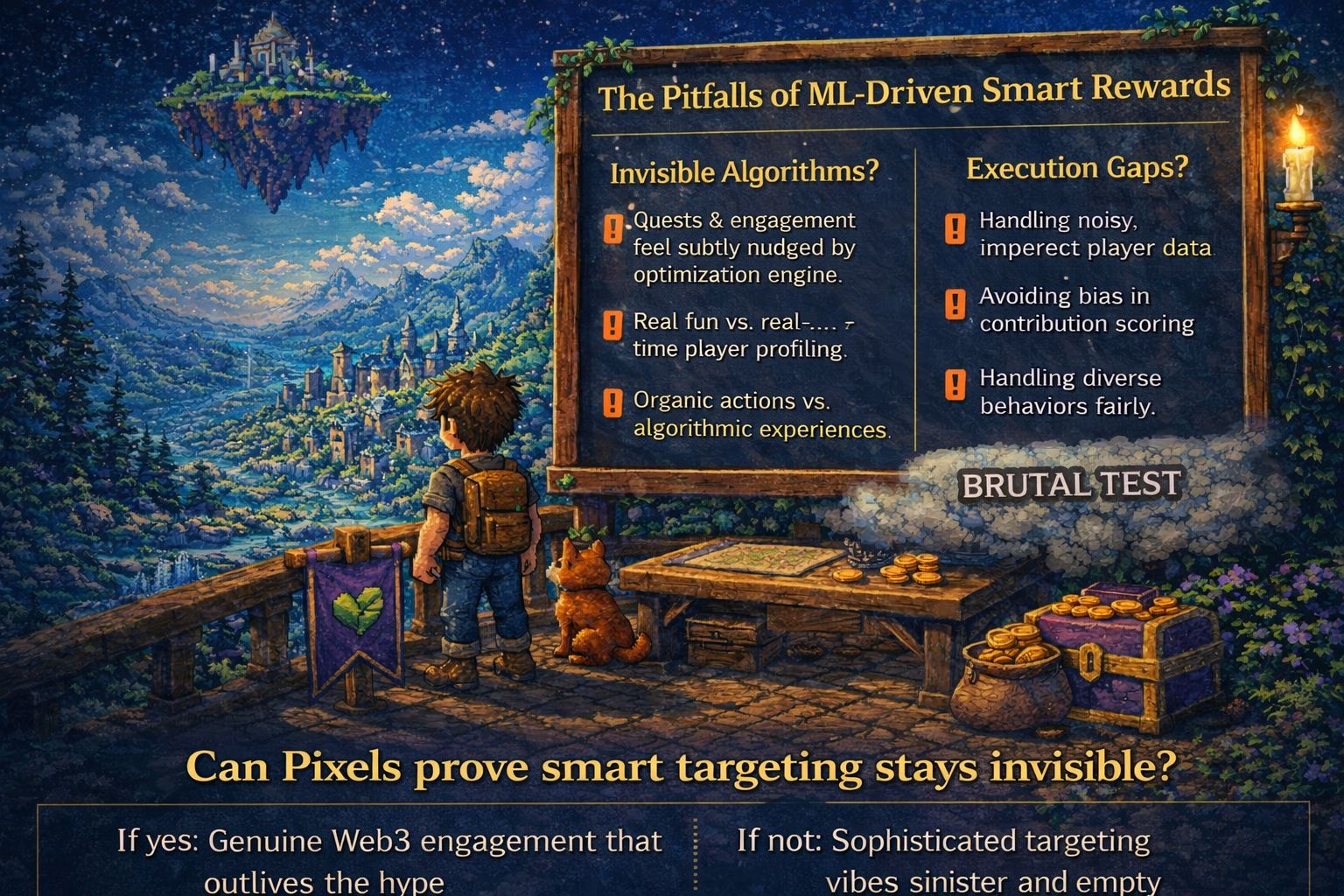

But here’s the deeper tension the whitepaper can’t fully paper over with ML architectures or telemetry pipelines.

The smarter and more granular ML-driven reward targeting becomes, the higher the risk that players eventually sense the optimization engine working underneath. When every quest, piece of content, or social interaction is scored and rewarded by models trained on ecosystem-health objectives, the experience can shift from joyful pixel-world exploration to participation in someone else’s real-time behavioral modification system. Players have a sharp nose for progression that feels subtly steered by algorithmic nudges rather than organic discovery. No amount of data-science precision, LiveOps automation, or USDC off-ramps can manufacture genuine attachment once the calculation behind personalized missions becomes perceptible.

Execution gaps remain, too. Advanced ML targeting sounds robust on paper, but handling noisy real-world player data, avoiding bias in contribution scoring, and maintaining fairness across diverse player behaviors are hard problems that don’t always survive contact with live game dynamics or community expectations. If the underlying gameplay loops aren’t compelling on their own, even the most sophisticated behavioral models may only delay the familiar disengagement. Analytics help allocate rewards better, but they can’t engineer intrinsic motivation where core fun is absent.

So the real test the whitepaper quietly sets up is brutal and conceptual:

Can Pixels implement smart reward targeting through ML-driven behavioral analytics so intelligently — with real-time telemetry, contribution scoring, and adaptive distribution — that the technical machinery stays completely invisible? Can the vision of a data-hardened, value-aligned incentive layer actually produce organic, long-term player engagement without anyone ever feeling like they’re inside a finely tuned algorithmic retention system?


If the core gameplay leads and the ML quietly enhances meaningful actions, if targeted rewards feel like natural progression rather than engineered prompts, this could evolve into something that genuinely outlives most GameFi experiments and reshapes how technical incentive systems operate across Web3 gaming.

If not, even the most advanced ML-driven whitepaper risks becoming another smartly packaged version of the same old story — prettier behavioral models, more sophisticated targeting infrastructure, but the identical quiet exit when players detect the analytics layer and the gameplay was never quite deep enough to stand alone.

I’ve read too many of these documents. Pixels at least confronts the old blunt-incentive failures head-on with harder technical questions about what actually survives when reward logic must scale with real behavioral complexity. Whether the on-chain and off-chain reality of ML-powered targeting matches the theory is what player data, engagement curves, and time will judge next.
