Honestly… I didn’t expect to find myself paying such close attention while reading how Binance AI Pro describes its platform architecture.

Not worry. Not doubt. More like the feeling you get when a technical decision that sounds like a commitment to transparency carries an implication that nothing in the product announcement directly addresses.

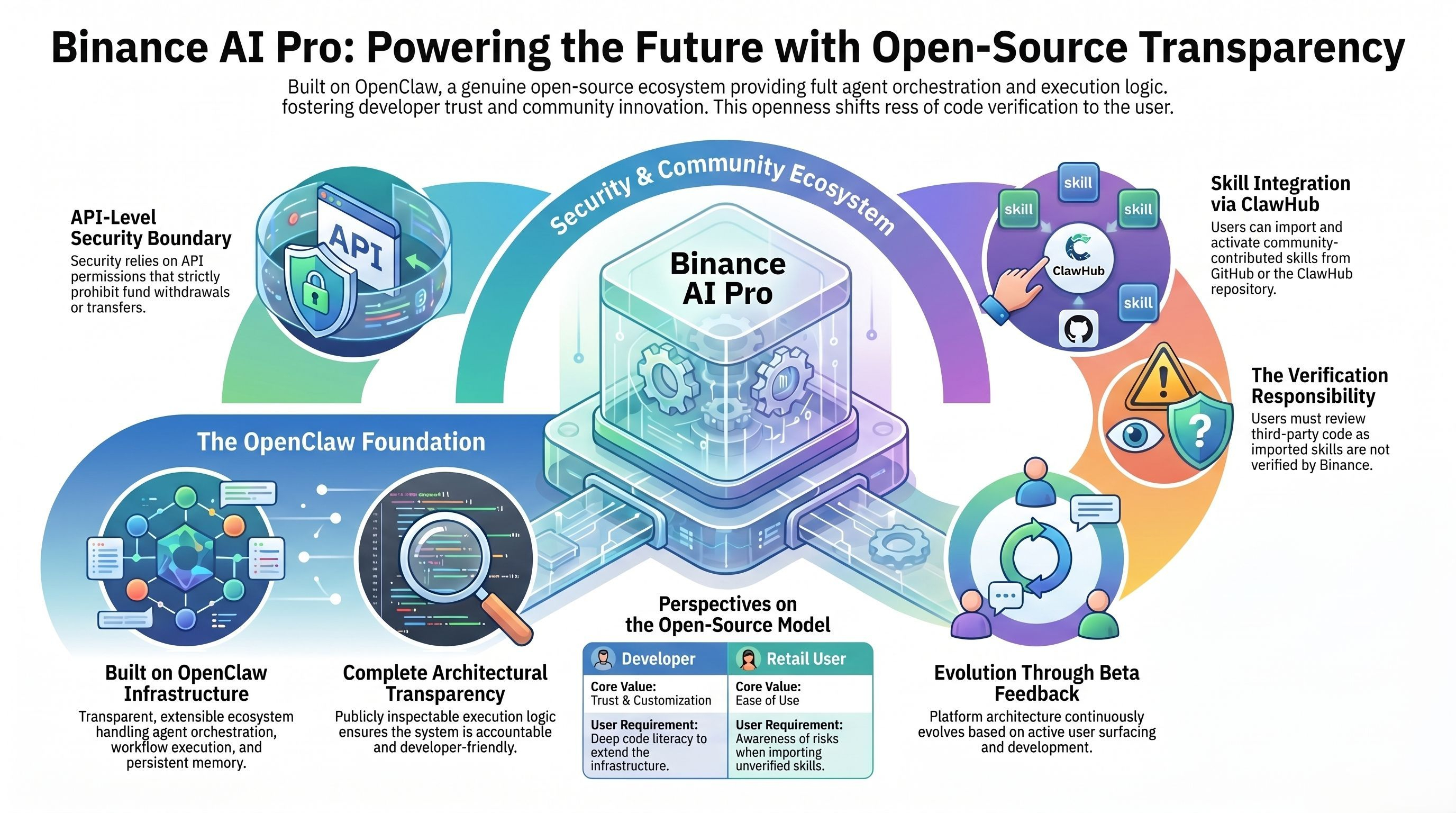

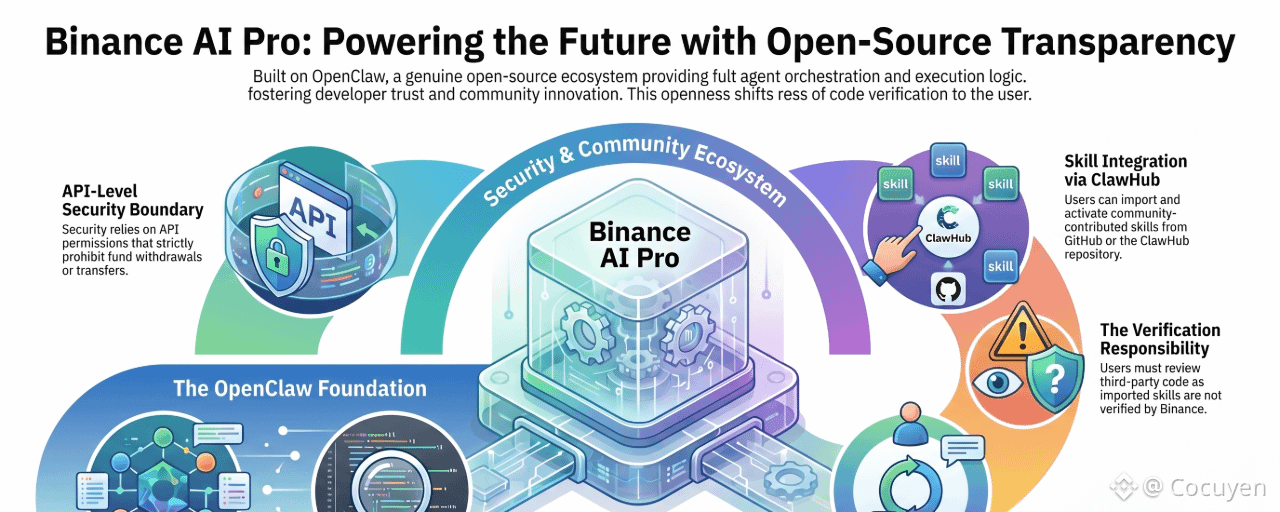

There is a template in how AI platforms describe open-source infrastructure, and the field accepts it without considering what openness really implies for a system that executes transactions directly. The introduction frames OpenClaw as a platform. An open-source ecosystem. Community-driven. Scalable. The kind of infrastructure language that signals reliability and collaboration at the same time.

However, open-source in a productivity tool and open-source in an automated trading system are not the same type of decision. When the source code of a note-taking application is public, anyone can inspect the code, suggest improvements, and clone the project. When the source code is public for an environment that coordinates AI agents managing real capital, anyone can check the execution logic, understand how Skills are loaded and validated, and study how the system handles transaction commands.

And the product they describe is real. Binance AI Pro is built on OpenClaw, a truly open-source ecosystem that handles agent coordination, skill loading, workflow execution, and a memory system maintained across sessions. The openness is real, and the infrastructure is thoroughly documented.

So… transparency is very important.

But transparency has never been the difficult part of open-source systems.

The difficulty lies in what sophisticated attackers will do once they fully understand how the system operates.

And this is precisely when the question that no one directly raises becomes difficult to ignore.

Because this is what I always return to. An open-source agent runtime environment means that the skill loading mechanisms are public. The workflow tool logic is readable. How the system handles instructions from an imported skill, validates permissions, and delegates execution to the API key is documented and can be audited by anyone who cares to look. That transparency is what makes open source trustworthy for developers building on it. It is also what makes the system legible to anyone trying to find where the true boundaries of execution lie.

The security model of Binance AI Pro rests on an API key permissions layer. No withdrawal rights. No transfer rights. Limits are enforced at the API level, not at the skill level. That means the question is not whether those limits hold against a skill that attempts to transfer money directly. The question is whether someone who has read the entire OpenClaw source code, and understands exactly how skill execution maps to specific API calls, can identify edge cases that the API permissions layer was not designed to anticipate.
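To make "enforced at the API level, not at the skill level" concrete, here is a minimal sketch. It is not OpenClaw or Binance code: `ApiKey`, `Permission`, `call_api`, `run_skill`, and the action names are all hypothetical, chosen only to show a single checkpoint that every skill request must pass through.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Permission(Enum):
    READ = auto()
    SPOT_TRADE = auto()
    WITHDRAW = auto()
    TRANSFER = auto()


@dataclass(frozen=True)
class ApiKey:
    # Hypothetical key object: grants are fixed when the key is created.
    granted: frozenset


class PermissionDenied(Exception):
    pass


# Hypothetical mapping from an API action to the permission it requires.
REQUIRED = {
    "get_balance": Permission.READ,
    "place_order": Permission.SPOT_TRADE,
    "withdraw": Permission.WITHDRAW,
    "transfer": Permission.TRANSFER,
}


def call_api(key: ApiKey, action: str) -> str:
    """Check the key's grants before any action runs, whichever skill asked."""
    required = REQUIRED.get(action)
    if required is None or required not in key.granted:
        raise PermissionDenied(action)
    return f"ok:{action}"


def run_skill(key: ApiKey, actions: list) -> list:
    """A skill here is just a list of requested actions routed through call_api."""
    results = []
    for action in actions:
        try:
            results.append(call_api(key, action))
        except PermissionDenied:
            results.append(f"denied:{action}")
    return results


# A key with no withdrawal and no transfer rights, as the post describes.
trading_key = ApiKey(granted=frozenset({Permission.READ, Permission.SPOT_TRADE}))
print(run_skill(trading_key, ["get_balance", "withdraw", "place_order"]))
# → ['ok:get_balance', 'denied:withdraw', 'ok:place_order']
```

The sketch covers only the direct case the layer is designed for: a `withdraw` request is refused no matter what the skill intends. It says nothing about what a sequence of permitted calls can do, which is where the open question sits.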

Then there is the issue of community scale. Of course.

And this is the moment that is hard to ignore. The OpenClaw ecosystem includes ClawHub, a community repository where skills are published and shared. Binance AI Pro’s documentation notes that third-party skills from GitHub can be imported, and Binance clearly warns that these skills are unverified and need to be reviewed before activation. That warning is very honest, and the information is published clearly.

But the relationship between the open-source runtime environment and the community skill repository is cumulative. The more the ecosystem grows, the more skills are published. The more skills are published, the harder it becomes for an ordinary user who is not a developer to conduct a thorough review. The advice to scrutinize each line of code before activation is accurate. However, that advice assumes a level of programming knowledge that most retail traders do not possess, and the one-click activation experience does nothing to supply it.
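The gap between "review before activation" and "one click" can be shown with a small sketch. Everything in it is an assumption for illustration: the `Skill` fields, the `can_activate` rule, and the skill name `grid-helper` are hypothetical and do not describe how ClawHub or Binance AI Pro actually gate activation.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    source: str            # hypothetical labels, e.g. "official" or "github"
    verified: bool
    reviewed: bool = False  # set by the user, not by any audit


def can_activate(skill: Skill) -> bool:
    """Verified skills activate directly; unverified ones need the review flag."""
    return skill.verified or skill.reviewed


community_skill = Skill(name="grid-helper", source="github", verified=False)
print(can_activate(community_skill))  # → False

# One click is enough to flip the flag.
community_skill.reviewed = True
print(can_activate(community_skill))  # → True
```

In a gate like this, the flag records that the user said the skill was reviewed. It cannot record whether the review was within that user's ability to perform.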

There is also a deeper tension that no one directly names.

This platform is currently in beta. A feedback-driven development model means the architecture is expected to change based on the issues users raise during the beta phase. Open-source infrastructure, active user feedback, and future scalability plans together mean that the execution environment six months from now will not resemble the current one. A user who assessed the risk of importing skills at the beta launch, and settled on a comfort level then, was evaluating a system that will keep evolving after that assessment.

However… I would still say this.

Building on the OpenClaw platform reflects an architectural philosophy that truly respects developer access and community contributions in ways that proprietary closed systems cannot. An execution environment for agents that anyone can audit will be more accountable than an environment that requires users to trust an execution process they cannot verify. The open-source platform is a genuine commitment, and the transparency it creates is of real value to developers who want to understand what they are deploying.

The question is whether a user activating Binance AI Pro through a one-click interface and importing a skill from the community ecosystem has the same relationship to that openness as a developer reading the source code and understanding its meaning.

And in this space, the gap between those two users is where the most critical risk questions arise.

Trading always carries risks. AI-generated suggestions are not financial advice. Past performance does not reflect future results. Please check the availability of the product in your area. @Binance Vietnam #BinanceAIPro $XAU $PIEVERSE $GUN