I keep coming back to a simple, uncomfortable question: why does interacting with regulated systems still feel like exposing more of yourself than necessary?

Whether it’s onboarding to a platform, moving funds, or even just proving eligibility, the default assumption seems to be that you hand over everything first, and only then earn the right to participate. It works, technically. But it never really feels right.

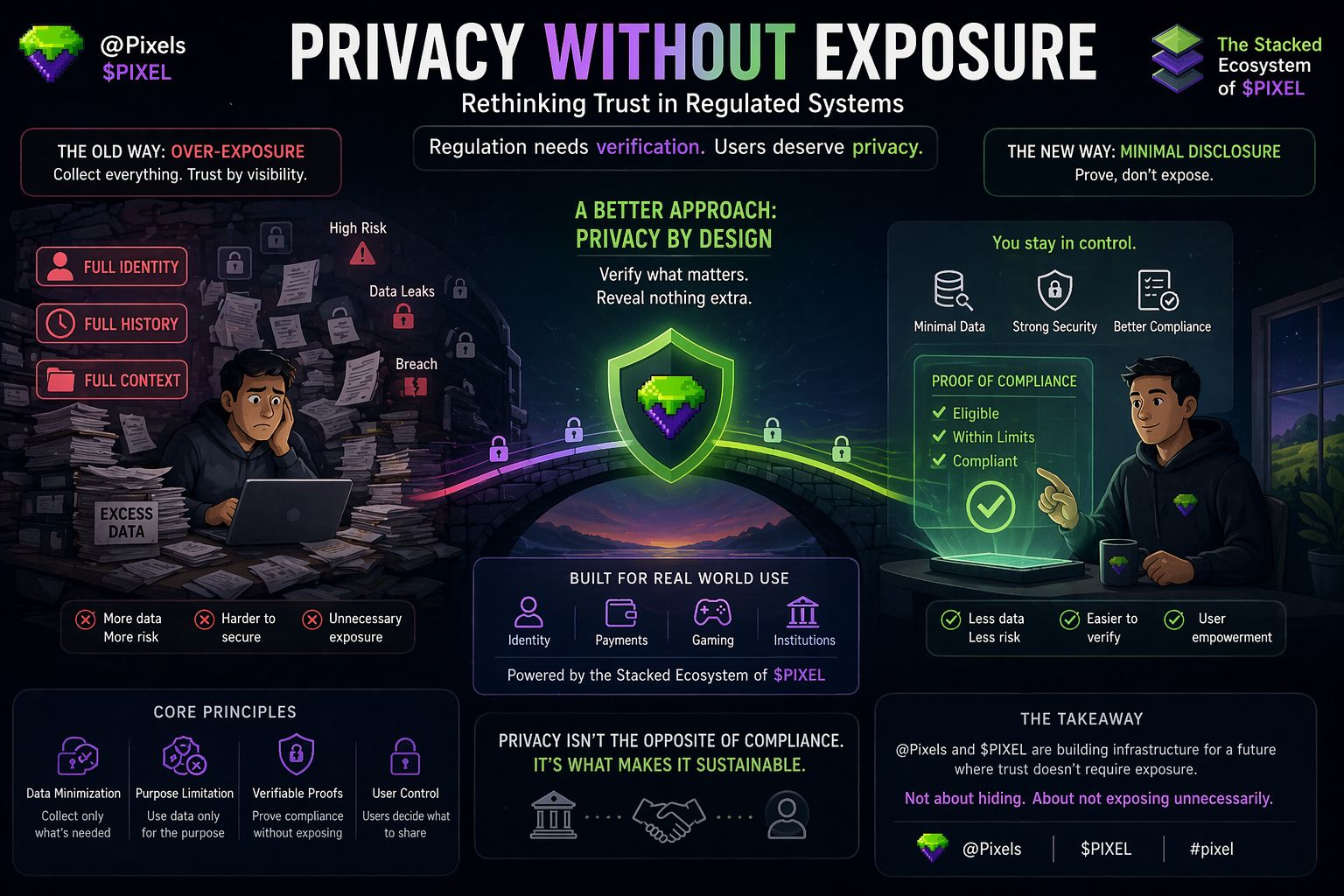

The problem isn’t that regulation exists. Most people understand why it does. It’s that the way we implement it often assumes trust is built through visibility, rather than control. So systems ask for full identity, full history, full context, even when only fragments are actually needed. Builders comply because it’s easier to over-collect than to design something precise. Regulators tolerate it because it’s auditable. Users accept it because there’s no alternative. But the result is awkward: too much data sitting in too many places, increasing risk without necessarily improving outcomes.

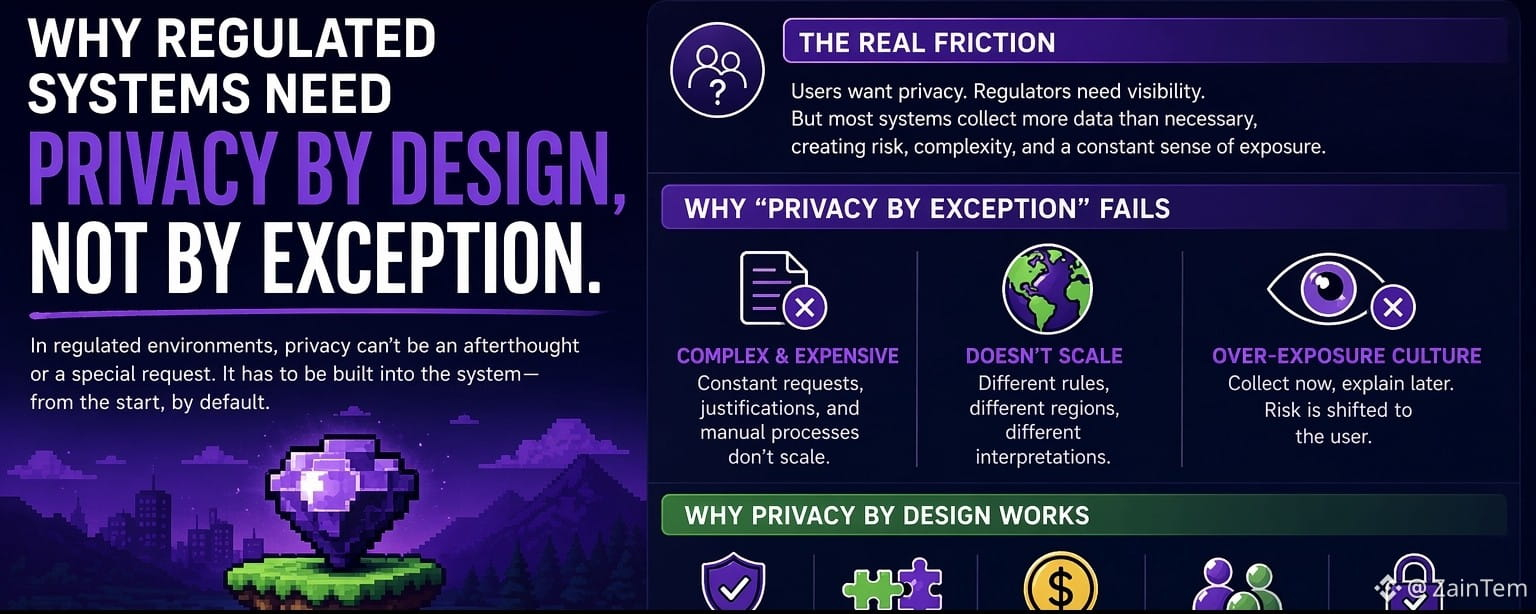

What makes this worse is that privacy is usually treated as an exception layer. Something added later. A patch. You see it in how systems bolt on encryption, or add selective disclosure features after the core architecture is already built around transparency. It feels incomplete because it is. The foundation wasn’t designed for it.

If you think about it from first principles, regulated systems don’t actually need to know everything. They need to verify specific conditions. Is this user allowed? Is this transaction compliant? Has this threshold been crossed? These are yes-or-no questions most of the time. But instead of designing for minimal proofs, we design for maximal exposure, and then try to reduce the damage.
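To make that concrete, here is a rough sketch of what designing for a yes-or-no answer could look like. It is not a zero-knowledge proof and not how any particular platform works. It assumes a trusted issuer who sees the raw data once and hands out a signed boolean, and a verifier who shares a key with that issuer. Every name in it (`ISSUER_KEY`, `Attestation`, `issue_age_attestation`, `verify`) is hypothetical and exists only for illustration.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass
from datetime import date

# Assumption: issuer and verifier share this key. A real system would
# more likely use public-key signatures and proper key management.
ISSUER_KEY = b"demo-key-not-for-production"


@dataclass(frozen=True)
class Attestation:
    claim: str    # the condition being checked, e.g. "age_over_18"
    result: bool  # the yes-or-no answer
    tag: str      # HMAC-SHA256 over claim and result


def _sign(claim: str, result: bool) -> str:
    """Compute the issuer's tag over the claim and its answer."""
    payload = json.dumps({"claim": claim, "result": result}, sort_keys=True)
    return hmac.new(ISSUER_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_age_attestation(birth_date: date, today: date, min_age: int = 18) -> Attestation:
    """The issuer sees the birth date; only a signed boolean leaves."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    years = today.year - birth_date.year - (0 if had_birthday else 1)
    claim = f"age_over_{min_age}"
    result = years >= min_age
    return Attestation(claim, result, _sign(claim, result))


def verify(att: Attestation) -> bool:
    """The verifier checks the tag and the answer, never the birth date."""
    expected = _sign(att.claim, att.result)
    return hmac.compare_digest(expected, att.tag) and att.result


adult = issue_age_attestation(date(2000, 1, 1), today=date(2024, 6, 1))
minor = issue_age_attestation(date(2010, 1, 1), today=date(2024, 6, 1))
forged = Attestation(minor.claim, True, minor.tag)  # flipped answer, stale tag
```

The platform that calls `verify` only ever holds the claim name, a boolean, and a tag. The trade-off is that the issuer still sees everything, so this moves the exposure rather than eliminating it; removing that dependency is where heavier cryptographic tools would come in.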

That’s where the idea of privacy by design starts to feel less like a feature and more like a requirement. Not in an idealistic sense, but in a practical one. Systems that minimize data at the core are easier to secure, easier to reason about, and arguably easier to regulate because the surface area is smaller. But getting there means rethinking how identity, assets, and interactions are structured from the beginning.

This is where something like Pixels, and the broader Stacked ecosystem around PIXEL, becomes interesting to think about: not as a game or a token narrative, but as infrastructure experimenting with ownership, interaction, and verification in a different way. If digital environments can separate what needs to be proven from what needs to be revealed, they start to model a system where compliance doesn’t automatically mean exposure.

I’m not convinced it’s solved yet. In fact, most attempts in this direction still feel early, sometimes even fragile. There are trade-offs everywhere: usability vs. security, flexibility vs. enforceability, decentralization vs. accountability. And there’s always the risk that systems drift back toward convenience, which usually means collecting more than they should.

But the direction matters. If Pixels and similar ecosystems can embed privacy into how interactions are structured, rather than treating it as an afterthought, they might align better with how real-world systems are supposed to function but often don’t. Not perfectly, but more honestly.

The real test isn’t whether it sounds good in theory. It’s whether institutions can actually plug into it without breaking their own requirements, whether users feel a tangible difference in control, and whether the system holds up under pressure: legal, technical, and economic.

If it works, it won’t be because it promised privacy. It’ll be because it made unnecessary exposure irrelevant.

And if it fails, it’ll probably be for the same reason most systems fail: taking the easier path when things get complicated.

For now, I see it less as a solution and more as an experiment worth watching. The kind that doesn’t try to remove regulation, but quietly questions how much of ourselves we really need to give up to satisfy it.