Honestly, I didn't think I'd pay attention to details like this when I read the Binance AI Pro description of their credits system. At first glance, it seemed just 'okay', pretty standard. But the more I think about it, it's like a slight shift in how I view things - not necessarily doubt or frustration. It's more of a momentary pause.

It's presented as flexible, fair, usage-based, and very 'user-friendly'. But the deeper you dig, the clearer it becomes that the real cost doesn't show up right away. It only starts to reveal itself once the system is running, once you're in the flow and already committed to part of it. To put it bluntly: at first it seems 'great, solid', but as you keep going, the bill no longer stays put.

At first, I thought something like '5M credits should last a whole month, no worries there.' But then I sat back for a moment and wondered: am I treating it like a fixed budget for real… or is it just a number that looks safe on the surface, so I’m comforting myself?

There’s a pretty familiar pattern in how many AI trading platforms talk about pricing, to the point where almost everyone glosses over it. Credits are packaged as a 'controllable' resource, which sounds great. You’re told you can set up strategies, run analyses, and execute trades, as if that’s all you need to understand and control the system. But what’s on the surface doesn’t reflect what matters in real usage, especially when credits burn unexpectedly, spiking and breaking the initial assumption that the monthly quota will behave like a stable budget.

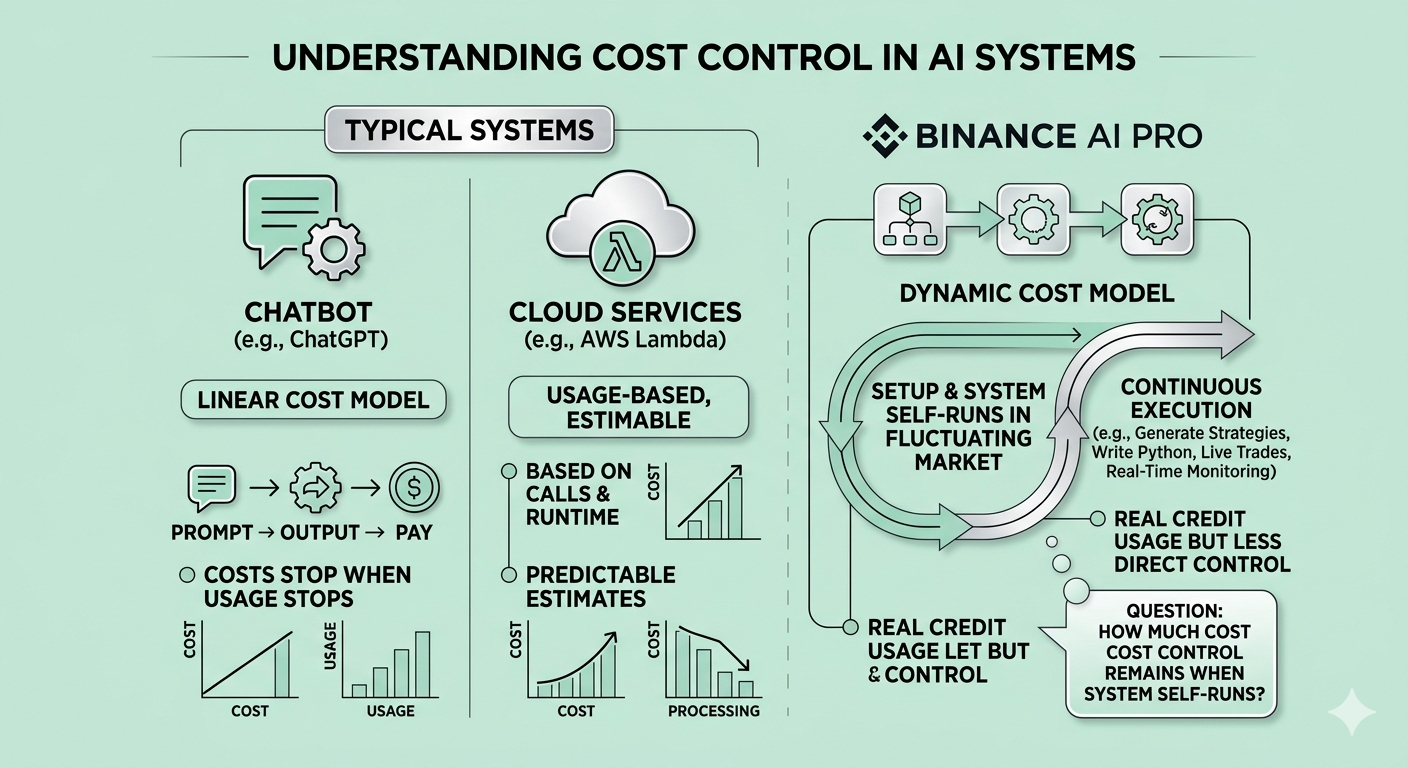

This point becomes clearer when comparing Binance AI Pro to more familiar systems.

At this point, I feel like something is off.

With chatbots like ChatGPT, costs are almost linear - you send a prompt, get output, and pay accordingly; when you stop, the costs stop too. With cloud services like AWS Lambda, even though it’s still usage-based, you can estimate based on the number of calls and runtime. But with Binance AI Pro, costs depend not only on whether you 'call the system', but also on whether the system continues to run on its own after you’ve set it up, in a constantly changing environment that you do not directly control. And it’s at this point that I start to feel something is a bit 'off' - if the system runs autonomously, how much control do I really have over the costs?

Because the reality is there's nothing 'fake' here - Binance AI Pro can indeed generate strategies, write Python code, execute directly on live positions, and monitor the market in real-time. And the credit system is also real in the sense that it measures usage costs quite clearly, not just a figment of imagination. So, if you look at the surface layer, it’s not obviously wrong. The issue is that it’s not the full picture. And this is quite common in such systems - it may look sufficient, but the deeper you go, the more layers you find that aren’t initially highlighted.

The more complex part lies in another layer, in the relationship between strategy complexity, execution frequency, and market conditions, where a seemingly simple credit counter is actually the result of many hidden variables that the user does not directly see. This becomes significant because when a strategy runs continuously, you are almost always caught between two questions: what happened, which you can revisit through logs or execution history, and why it happened, which lies beyond that layer and depends on runtime behavior, trigger frequency, and computational intensity.

A simple example: I once ran a pretty basic strategy, checking conditions every 5 minutes. When the market was stable, consumption was only around 100,000 - 200,000 credits per day, which felt quite 'comfortable'.

But when the market turned volatile, that same logic triggered much more frequently, and consumption rose to about 700,000 - 900,000 credits per day. Looking back at the dashboard, the feeling was quite clear: I didn’t change my strategy, but the cost changed completely. So, am I optimizing the strategy… or just inadvertently optimizing for the market conditions at that time?
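To put those numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It only uses the figures from my own example (a 5M monthly quota and the two daily burn ranges) and assumes a constant daily burn, which is a simplification. The function names and the 24/6 split of calm and volatile days are hypothetical, chosen purely for illustration; none of this reflects how Binance AI Pro actually meters credits.

```python
MONTHLY_QUOTA = 5_000_000  # the "5M credits" figure from earlier in the post

# Daily burn ranges from my own example (credits per day).
CALM = (100_000, 200_000)
VOLATILE = (700_000, 900_000)


def runway_days(quota: int, daily_burn: int) -> float:
    """Days until the quota is exhausted at a constant daily burn."""
    if daily_burn <= 0:
        raise ValueError("daily_burn must be positive")
    return quota / daily_burn


def monthly_burn(calm_days: int, volatile_days: int,
                 calm_rate: int, volatile_rate: int) -> int:
    """Total credits used in a month split between calm and volatile days."""
    return calm_days * calm_rate + volatile_days * volatile_rate


if __name__ == "__main__":
    for label, (low, high) in (("calm", CALM), ("volatile", VOLATILE)):
        shortest = runway_days(MONTHLY_QUOTA, high)
        longest = runway_days(MONTHLY_QUOTA, low)
        print(f"{label}: {shortest:.1f} to {longest:.1f} days of runway")

    # Hypothetical mix: 24 calm days and 6 volatile days at midpoint rates.
    total = monthly_burn(24, 6, 150_000, 800_000)
    print(f"mixed month: {total:,} credits "
          f"({total / MONTHLY_QUOTA:.0%} of quota)")
```

Under these assumptions, the same quota lasts roughly 25 to 50 days in a calm market but only about 5.6 to 7.1 days in a volatile one. In the hypothetical 24/6 split, six volatile days alone burn close to the whole monthly quota, which is why a single 'credits per month' figure says so little on its own.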

This gap is more important than it looks, because when users evaluate whether the strategy is behaving correctly, they don’t just look at the output but also try to judge alignment - whether what happened matches the original intent. In cases where credits burn faster than expected, that evaluation cannot rely on surface output but requires information about trigger frequency, execution complexity, and how the market affects runtime.

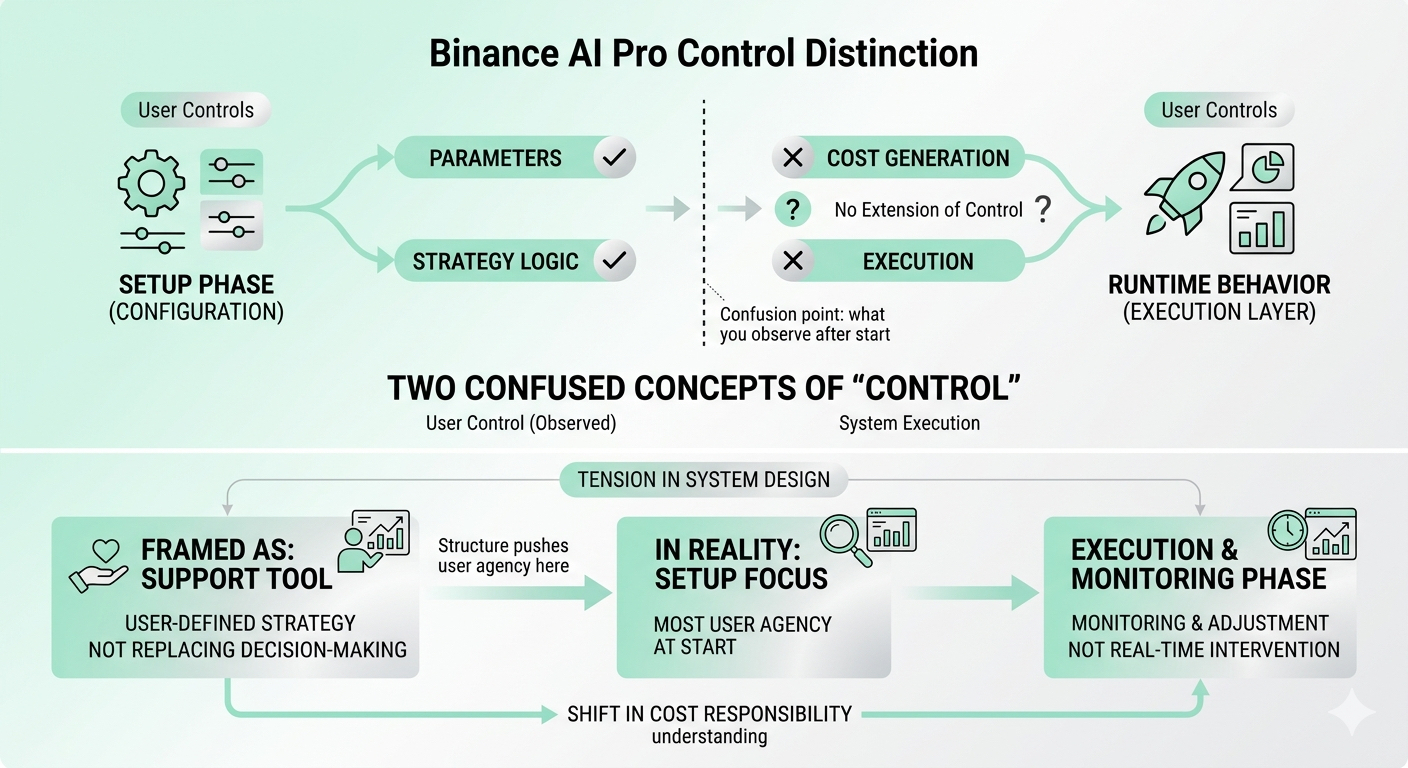

Binance AI Pro gives me control at the configuration level - from parameters to strategy logic, it feels like I have set everything up quite 'thoroughly' at first. But that control doesn’t extend down to the execution layer, where the actual costs are incurred.

And this made me start to see a slight deviation between the two concepts of 'control' that can easily be confused. One is in the setup phase - when I configure, it feels like I’m in control of everything. The other is runtime behavior - when the system starts running live. And to be honest, only the second is what I can still observe after everything is live. The 'control' at the input stage often turns out to be more of an assumption than something that can be verified directly while the system is operating.

Beneath all this, there’s another slight tension, where the system is framed as a tool supporting user-defined strategies, not replacing decision-making. But in practice, the structure pushes most user agency into the setup phase, and when execution starts, the rest is mainly monitoring and adjustment, not real-time intervention.

This shifts the understanding of cost responsibility in ways that are not always clearly articulated.

Still, it’s important to acknowledge that the usage-based credit model is a reasonable effort to align cost with actual consumption, whereas many other systems use flat pricing that obscures actual usage. The platform is also transparent that heavier workloads will consume credits faster, which distinguishes it from fully opaque variable-cost models, so the surface layer cannot be dismissed.

The remaining question, however, is whether what users see after credits drop quickly is enough to understand not only what happened, but also whether the system is operating within the strategy and expected cost boundaries, or whether they will need additional context that the current interface does not expose.

Because ultimately, the difference between a system that seems predictable under assumed usage conditions and a system that is actually predictable in real execution cannot be resolved just by looking at the credit balance.

It depends much more on whether the underlying cost dynamics are 'visible' enough for me to interpret. And to be honest, in most cases I've experienced, that hasn’t been the case. It’s that boundary where uncertainty exists. It’s not about the credit numbers, but how they are consumed in situations that weren't fully modeled at setup. And if the cost only truly becomes clear after the system has run for a while… then the final question is: are you controlling the system, or just gradually learning to adapt to how it behaves after each surprise?

And I might continue to monitor and delve deeper into how such systems operate at the execution layer behind the scenes, because the deeper I go, the more I see that there’s much more beneath the surface than how it was initially presented.

Trading always carries risks. AI-generated suggestions are not financial advice. Past performance does not guarantee future results. Please check the availability of products in your area.