Honestly... I didn't expect to be this intrigued by how Binance AI Pro describes the relationship between users and artificial intelligence.

It's not disagreement. It's not skepticism. It's more like the feeling you get when a product description uses a metaphor that is technically accurate yet quietly falls short.

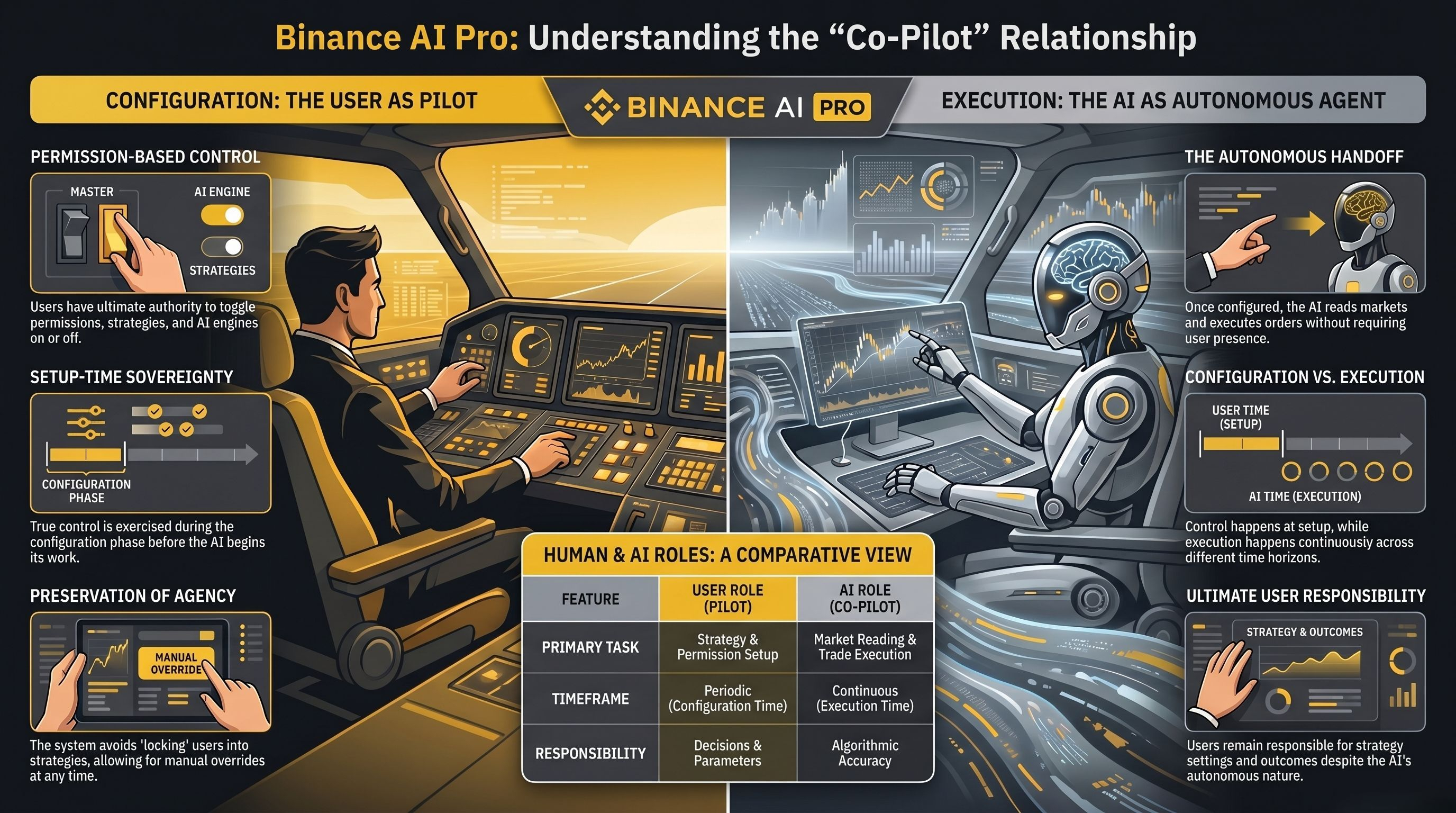

There is a pattern in how automated systems describe user control, one the field accepts without asking what control really means once execution begins. The presentation describes Binance AI Pro as a co-pilot assistant. Users have ultimate control over the permissions the AI holds. They can toggle those permissions on or off at any time. The presentation is clear, and the intention is sincere.

But a co-pilot on an airplane and a co-pilot in an automated trading system do not play the same role. On a plane, the captain and the co-pilot share the cockpit in real time. Both can see what is happening and intervene as decisions are made. That shared presence is what makes the co-pilot metaphor meaningful.

In an automated trading system, user control is exercised at configuration time. After that, the AI executes continuously. Those are two different time frames, and only one of them is in play when a trade order is placed.

The product being described is real. Binance AI Pro allows users to set permissions, configure strategies, select AI tools, and toggle access on and off at any time. The control mechanisms are real, and the decentralized model is transparent. Users who want to limit what the AI can do have real tools to do so.

So... control is real.

But having access to the toggle does not equate to being present at the moment of execution.

And this is where the architecture starts to matter more than how it is presented.

This is what I always come back to. A user who sets a strategy on Monday morning and lets it run for the whole week is not co-piloting minute by minute in any meaningful sense. The AI is reading the market, assessing conditions against the configured parameters, and placing orders without the user being present or paying attention. The user's control was exercised at setup. What happens between setup and the next time the user opens the platform is automated execution, not collaboration.

The expression 'co-pilot' implies a shared journey. The actual architecture is a transfer. The user sets the course, and the AI takes the wheel.

Then comes the question of intervention. Of course.

And this is where it gets harder to ignore. Users who want to exercise real-time control need to notice changing conditions, open the platform, assess the current state of the strategy, and decide whether to modify permissions or pause execution. Each of those steps takes time. And in a market that moves faster than users can track over a week, the gap between when a change occurs and when the user intervenes is not narrowed by the existence of a toggle. It is narrowed only if the user is actually present often enough to use it.
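To make that gap concrete, here is a minimal sketch in Python. Nothing in it comes from Binance AI Pro: the function name `intervention_delay`, the two check-in schedules, and the timing of the market shift are all assumptions made up for illustration. It only measures how long a market change sits unattended before the user's next visit.

```python
from bisect import bisect_left
from typing import Optional, Sequence


def intervention_delay(event_hour: float, check_ins: Sequence[float]) -> Optional[float]:
    """Hours from a market change to the user's next check-in (None if there is none)."""
    times = sorted(check_ins)
    i = bisect_left(times, event_hour)  # first check-in at or after the event
    if i == len(times):
        return None  # the user never comes back within the schedule
    return times[i] - event_hour


# Hours are counted from Monday 00:00; all values are hypothetical.
active_user = list(range(0, 169, 2))  # opens the platform every two hours
passive_user = [9, 153]               # sets up Monday 09:00, returns Sunday 09:00

market_shift = 31                     # Tuesday 07:00

print(intervention_delay(market_shift, active_user))   # 1
print(intervention_delay(market_shift, passive_user))  # 122
```

Both users have the same toggle. Under these assumed schedules, one can reach it within an hour and the other more than five days later.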

The documentation is honest about this. Binance AI Pro provides AI infrastructure as a tool, while users remain responsible for strategy setup and trading decisions. That statement accurately clarifies responsibility. It also places the full weight of that responsibility on the user in a relationship described as collaborative.

There is also a deeper tension that no one directly names.

The 'co-pilot' framing fits active users who monitor, engage, and treat the AI as an execution layer under continuous human oversight. It fits less neatly for users who have activated the platform, configured a strategy, and are now living their lives while the AI manages capital. Both of these users have access to the same controls. Their relationship with the 'co-pilot' metaphor is not the same.

However... I would still say this.

Describing Binance AI Pro as an assistant rather than an autopilot system reflects a significant philosophical choice about how AI should interact with human decision-making. A system that preserves the user's ability to intervene and doesn't lock users into strategies they can't escape respects user autonomy more than a system that treats activation as complete delegation. The wording matters, and the intention behind it is sound.

The question is whether a user who activates a strategy and then leaves the platform maintains the same co-piloting relationship as a user who actively monitors their positions throughout the day.

And in this space, the answer to that question will determine whether the control the platform provides actually changes what happens when market conditions shift unexpectedly.

Trading always carries risks. AI-generated suggestions are not financial advice. Past performance does not reflect future results. Please check the availability of the product in your area. @Binance Vietnam #BinanceAIPro $XAU $RAVE $UAI