I didn’t really question Pixels when loops broke the first time.

That’s normal in these systems. Something gets overfarmed, rewards lose weight, players drift, the team patches it.

What caught me off guard was when the same loop broke again, but for a different reason.

First it was too rewarding. Then it wasn’t rewarding in the right place. Same mechanic, different failure.

That’s when it clicked for me that Pixels wasn’t just adjusting numbers anymore.

The system itself was learning where incentives stop working, not just in volume but in direction, conversion efficiency, and retention impact across cohorts.

That’s the context Stacked comes from.

Not a feature drop. Not a new app.

More like a layer built after watching too many reward systems fail in live conditions: a layer designed to continuously reallocate incentive capital based on live behavioral data.

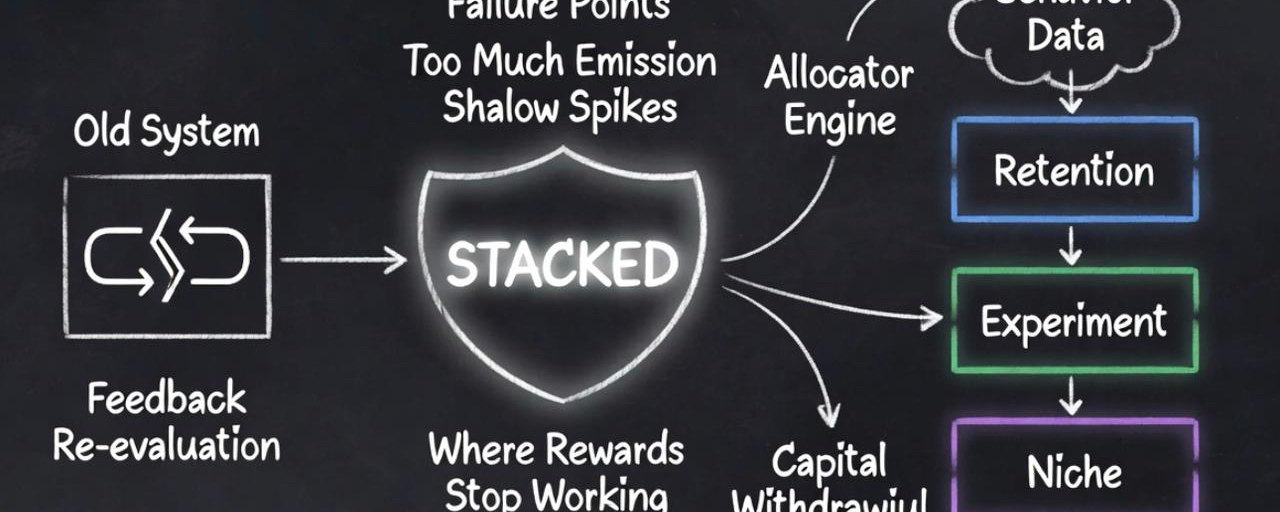

Stacked only makes sense if you look at Pixels as a system that has already gone through years of imbalance.

Too much emission, shallow engagement spikes, players optimizing for payouts instead of staying in the loop.

And, more importantly, distorted feedback signals: short-term activity outperformed long-term retention, creating false positives in growth metrics while underlying participation quality declined.

None of that shows up in theory.

It only shows up when real players push the system in directions you didn’t design for.

So instead of designing better rewards, Pixels is now trying to decide where rewards should even exist and where capital should be withdrawn entirely.

That’s the shift.

The problem was never just emissions. It was capital misallocation inside the incentive layer.

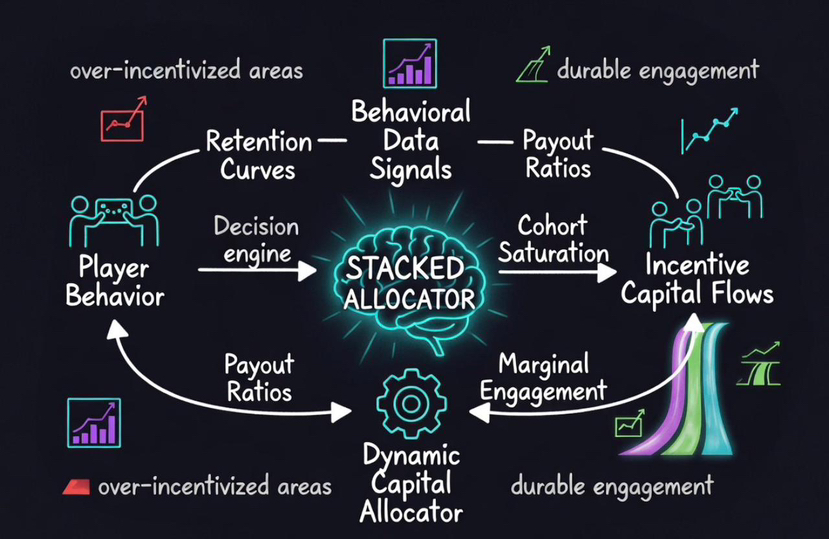

Stacked is basically a decision engine sitting on top of the game’s economy.

But more precisely, it acts as a capital allocator for incentives, dynamically distributing reward budget based on retention curves, payout-to-engagement ratios, cohort saturation levels, and behavioral depth signals.

It’s not there to create content.

It’s there to control how incentive capital flows through the system.

Which behaviors are worth paying for

Which ones generate activity but fail to compound

Which cohorts are already over-incentivized relative to their retention

Where marginal reward spend still produces incremental engagement

Where reward velocity supports circulation vs immediate extraction
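To make those questions concrete, here is a rough sketch of what "allocating by efficiency" could look like. It is not how Stacked actually works; the `LoopStats` fields, the `allocate_budget` function, and every number are made up for illustration. The only idea it borrows from the text is that budget follows retention per unit of spend, and that a loop producing no retention gets nothing at all.

```python
from dataclasses import dataclass


@dataclass
class LoopStats:
    """Hypothetical per-loop metrics; field names are illustrative only."""
    name: str
    reward_spend: float    # reward budget spent on this loop last period
    retained_players: int  # players still active some days after earning here


def allocate_budget(loops: list[LoopStats], total_budget: float,
                    min_efficiency: float = 0.0) -> dict[str, float]:
    """Split total_budget in proportion to retained players per unit of spend.

    Loops at or below min_efficiency receive nothing, which models
    withdrawing capital entirely rather than just trimming it.
    """
    efficiency = {}
    for loop in loops:
        if loop.reward_spend <= 0:
            continue  # no spend history, so no efficiency estimate
        score = loop.retained_players / loop.reward_spend
        if score > min_efficiency:
            efficiency[loop.name] = score

    allocation = {loop.name: 0.0 for loop in loops}
    total_score = sum(efficiency.values())
    if total_score == 0:
        return allocation  # nothing is worth paying for this period

    for name, score in efficiency.items():
        allocation[name] = total_budget * score / total_score
    return allocation


# Assumed example data: three loops with very different retention per spend.
loops = [
    LoopStats("farming", reward_spend=1000.0, retained_players=50),
    LoopStats("crafting", reward_spend=500.0, retained_players=75),
    LoopStats("daily_login", reward_spend=800.0, retained_players=0),
]
allocation = allocate_budget(loops, total_budget=1000.0)
```

In this toy data, crafting retains three times as many players per unit of spend as farming, so it ends up with three quarters of the budget, and the login loop that retained nobody is cut to zero.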

And the important part is this isn’t static.

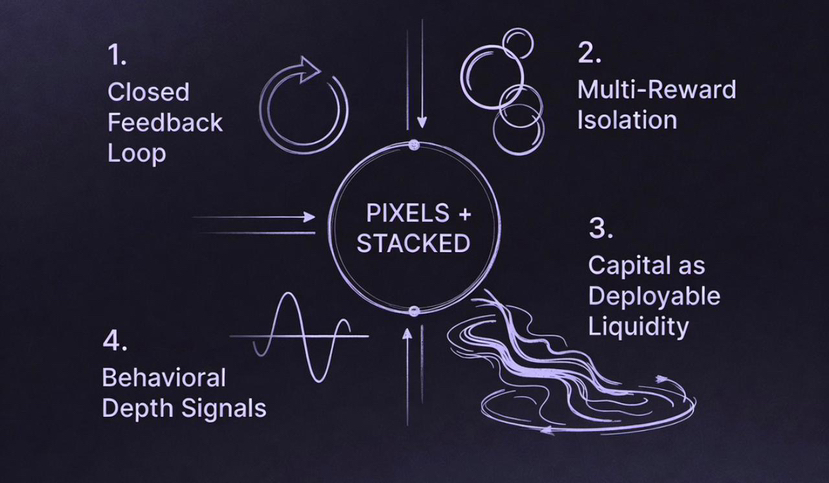

It operates as a closed feedback loop: player behavior generates data → data updates allocation logic → allocation shifts incentive capital → new behavior is observed and re-evaluated.

Every time players move differently, the system has to adjust.

What worked last week can become inefficient this week.

What looks like engagement in dashboards can actually be non-productive load inside the economy.
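The observe, update, reallocate cycle can be sketched in a few lines. Again, this is an assumption-heavy toy: `rebalance`, the `learning_rate` parameter, and the weekly numbers are all invented here, and a simple weighted blend stands in for whatever logic Pixels really uses.

```python
def rebalance(weights: dict[str, float], observed_efficiency: dict[str, float],
              learning_rate: float = 0.5) -> dict[str, float]:
    """One pass of the loop: blend each weight with its observed efficiency share."""
    total_eff = sum(observed_efficiency.values())
    if total_eff <= 0:
        return dict(weights)  # no usable signal; keep the current allocation
    updated = {}
    for name, weight in weights.items():
        target = observed_efficiency.get(name, 0.0) / total_eff
        updated[name] = (1 - learning_rate) * weight + learning_rate * target
    norm = sum(updated.values())
    return {name: w / norm for name, w in updated.items()}


weights = {"farming": 0.5, "crafting": 0.5}

# Hypothetical weekly observations: retained players per unit of reward spend.
weekly_observations = [
    {"farming": 0.02, "crafting": 0.06},
    {"farming": 0.02, "crafting": 0.06},
    {"farming": 0.08, "crafting": 0.02},  # behavior shifts; last week's winner fades
]

history = []
for observed in weekly_observations:
    weights = rebalance(weights, observed)
    history.append(dict(weights))
```

Crafting's share of the budget grows for two weeks, then farming overtakes it in week three once the observed behavior flips. That is the "what worked last week can become inefficient this week" point in miniature.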

Older game models don’t deal with this well.

They design a loop, attach rewards, and scale it. When it breaks, they patch around it.

Pixels seems to be doing something else entirely.

It’s treating rewards like deployable liquidity: capital that needs active positioning, rotation, and withdrawal, not just emission schedules.

That’s why the controlled rollout matters more than people think.

This isn’t hesitation. It’s calibration.

If you already know your loops are sensitive, then scaling too fast doesn’t give you growth. It degrades signal quality and obscures where incentive capital is leaking.

A smaller rollout creates cleaner data.

It allows isolation of variables, clearer attribution of outcomes, and precise identification of where incentives generate durable engagement vs temporary extraction.

And that’s where the multi-reward direction becomes important.

Most token-based games force one asset to do everything.

It has to reward players, attract speculators, support liquidity, and hold long-term value.

That creates conflicting economic pressure across functions, and eventually the system collapses toward the weakest use case.

Pixels is starting to separate those roles.

Instead of pushing everything through $PIXEL, different reward types can handle different jobs.

Some can target retention curves directly.

Some can incentivize experimentation without long-term distortion.

Some can reward niche behaviors without impacting global pricing dynamics.

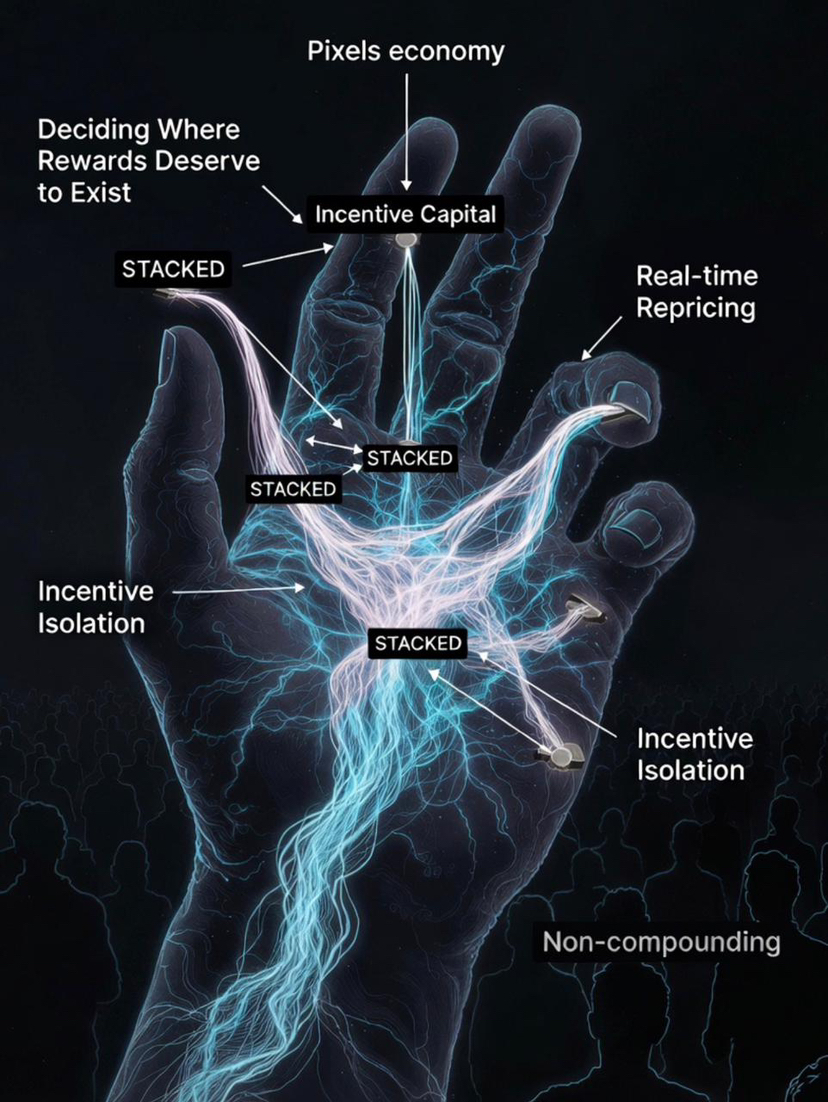

That changes how failure behaves.

In older models, when something breaks, it breaks everywhere.

Emission increases → value compresses → player quality degrades → loops collapse.

It’s systemic.

Here, failure becomes modular.

A specific loop can be over-incentivized without dragging the whole economy down.

A cohort can be mispriced without forcing a global rebalance.

You can test aggressively in one segment without destabilizing everything else.

That’s not just iteration.

That’s containment through incentive isolation.
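One way to picture that isolation is a separate, capped reward pool per loop. This is a minimal sketch under my own assumptions: the `SegmentBudget` class, the caps, and the payout amounts are hypothetical and not taken from Pixels.

```python
class SegmentBudget:
    """Hypothetical reward pool scoped to a single loop or cohort."""

    def __init__(self, name: str, cap: float):
        self.name = name
        self.cap = cap
        self.spent = 0.0

    def pay(self, amount: float) -> float:
        """Pay out up to the remaining cap and return what was actually paid."""
        remaining = max(self.cap - self.spent, 0.0)
        paid = min(amount, remaining)
        self.spent += paid
        return paid


budgets = {
    "farming": SegmentBudget("farming", cap=100.0),
    "crafting": SegmentBudget("crafting", cap=100.0),
}

# Farming gets overfarmed: players request five times what its pool holds.
farming_paid = sum(budgets["farming"].pay(50.0) for _ in range(10))

# Crafting draws from its own pool, so the farming rush cannot touch it.
crafting_paid = budgets["crafting"].pay(40.0)
```

Farming burns through its own cap and stops paying, while crafting still pays out in full. The failure stays inside the segment that caused it.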

And it only really becomes visible if you’ve seen how many times reward systems collapse when everything is tied to one flow.

What makes this credible is that Pixels isn’t asking people to imagine this working someday.

They’re pointing to what already happened: millions of players, hundreds of millions in rewards, thousands of iterations.

That history matters because it explains why the system is moving toward real spend and real burn, toward sinks, velocity control, and enforced economic cycling, instead of relying purely on emissions.

Not because it sounds better, but because they’ve already seen what happens when rewards don’t connect to sustained participation.

If I step back, Stacked doesn’t feel like a growth feature.

It feels like a correction layer built after years of watching incentives behave unpredictably: a system designed to detect inefficiency early, reprice behavior in real time, and reroute incentive capital before breakdown compounds.

At its core, it’s not a reward system.

It’s an allocator deciding where incentives deserve to exist.

And that’s probably the real shift here.

Pixels isn’t trying to design the perfect reward system anymore.

It’s building a system that knows when rewards stop working and moves them before the damage spreads.