When I first checked out PIXELS, I was pretty wary. We've all seen the usual pitfalls in blockchain games: when the rewards are high, they get farmed out, and when the rewards stop, everyone bounces. Then I switched up my perspective. Instead of viewing it as just a 'reward-distributing project', I started seeing it as a LiveOps engine in action, where the rewards are just tools. The real core is working out the rules for delivering those rewards to the right players, using data to check whether a given round of distribution actually boosts retention, engagement, and willingness to pay, and then feeding those results back into the system for the next iteration. Through this lens, I found it a bit more intriguing.

Let me clarify one thing: Stacked resembles a rewarded LiveOps engine more than a generalized rewards app. The focus isn't on 'what benefits to give,' but on 'how to give, to whom, when to give, and whether player behavior changes as expected afterward.' More critically, it also emphasizes the AI game economist layer, which you can understand as a more engineered operational decision-making mechanism: transforming reward deployment, player behavior, retention, and LTV improvement into a testable, reviewable, and sustainably optimized process. In simple terms, it’s not about operating based on gut feelings but turning operations into an experimental science.

Why do I feel this approach is rarer in blockchain gaming? Because the blockchain gaming environment is particularly brutal on reward systems. In regular games, if rewards are distributed incorrectly, it mostly harms retention; in blockchain gaming, incorrect reward distribution can directly destabilize the economic system. Bots, scripts, and farming turn tasks into industrial assembly lines. If the system cannot distinguish between real players and yield farmers, the most dangerous situation arises: the more generous you are, the more you attract the wrong crowd; the harder you work on events, the more certain the profit you hand to the bots. The end result isn’t 'more players' but an economy being hollowed out: content consumption falls behind reward output, real players get pushed away, and what’s left is traffic that is only there to calculate its ROI.

So, the challenge with LiveOps has never been 'how to make events lively' but rather 'how to deliver rewards accurately.' I prefer to break it down the way an operator would. What goal does a round of reward deployment serve: user acquisition, retention, or engagement? Who are the target users: newbies, returning players, or high-value players? What are the outreach methods, and how is the task chain designed? Then comes the most critical step, the review: did the data on the second and third days show a structural change, or was it just a temporary spike? True LiveOps isn't about running one event; it’s about forming a cycle: set goals, design rules, deliver to different groups, observe behavioral changes, review the reasons, then improve the rules and enter the next round. Being able to run this cycle is what real engineering capability looks like.
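
To make the review step concrete, here is a minimal sketch of how one might tell a structural change from a temporary spike. Everything in it is an assumption for illustration: the `CohortDay` record, the `classify_lift` helper, the choice of days 1 to 3, and the 2-point `min_lift` threshold are hypothetical and not taken from Stacked or Pixels.

```python
from dataclasses import dataclass


@dataclass
class CohortDay:
    day: int     # days since the reward round started
    active: int  # players from the cohort active on that day
    size: int    # players in the cohort on day 0


def retention(rows):
    """Map day -> fraction of the cohort still active on that day."""
    return {r.day: r.active / r.size for r in rows}


def classify_lift(control, treated, days=(1, 2, 3), min_lift=0.02):
    """Label a reward round as 'structural', 'spike', or 'no_effect'.

    The lift is the retention gap between the rewarded cohort and a
    control cohort. min_lift is an arbitrary illustrative threshold.
    """
    c, t = retention(control), retention(treated)
    lifts = [t[d] - c[d] for d in days]
    if lifts[0] < min_lift:
        return "no_effect"   # the round never moved retention
    if all(lift >= min_lift for lift in lifts[1:]):
        return "structural"  # the gap still holds on the later days
    return "spike"           # day-1 bump that faded afterwards


control = [CohortDay(1, 400, 1000), CohortDay(2, 300, 1000), CohortDay(3, 250, 1000)]
rewarded = [CohortDay(1, 550, 1000), CohortDay(2, 305, 1000), CohortDay(3, 250, 1000)]
print(classify_lift(control, rewarded))
```

In this made-up data the rewarded cohort jumps on day 1 and is back at the control level by day 3, which is exactly the pattern the review is supposed to catch.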

Here’s where the term cohort comes into play. I used to think 'segmentation' sounded too textbook, but in blockchain gaming, it’s a matter of life and death. A one-size-fits-all approach to rewards might look good short-term, but it tends to crash in the long run. New players need guidance and small victories to form habits; mid-stage players are prone to fatigue and need a sense of purpose and a more reasonable pace; high-value players seek scarce experiences and a sense of identity; returning players need a low-barrier path back instead of being forced to relearn everything. If you use the same reward logic for everyone, you will inevitably get bitten by farming. Stacked treats anti-cheat, anti-bot, and behavioral data as a moat, essentially so that rewards can more accurately distinguish between 'real players' and 'suspicious yield traffic,' lowering the ROI for the wrong crowd while improving the experience for real players.
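
As a rough illustration of what cohort-based delivery could look like, the sketch below assigns each player to one cohort and looks up a different reward plan per cohort. The `Player` fields, the `bot_score` signal, every threshold, and the `REWARD_PLAN` entries are hypothetical; the source does not describe how Stacked actually segments players.

```python
from dataclasses import dataclass


@dataclass
class Player:
    days_since_install: int
    days_since_last_session: int
    lifetime_spend: float
    bot_score: float  # hypothetical signal: 0.0 = clearly human, 1.0 = clearly scripted


def cohort(p: Player) -> str:
    """Assign a player to exactly one cohort (all thresholds are illustrative)."""
    if p.bot_score >= 0.8:
        return "suspicious"
    if p.days_since_last_session >= 14:
        return "returning"
    if p.days_since_install <= 7:
        return "new"
    if p.lifetime_spend >= 100:
        return "high_value"
    return "mid_stage"


# One reward plan per cohort, mirroring the needs listed in the paragraph above.
REWARD_PLAN = {
    "new": "small guided wins",
    "mid_stage": "goal-based quests at a slower pace",
    "high_value": "scarce, identity-bearing items",
    "returning": "low-barrier comeback path",
    "suspicious": "reduced or delayed payout",
}


def plan_for(p: Player) -> str:
    return REWARD_PLAN[cohort(p)]


print(plan_for(Player(days_since_install=3, days_since_last_session=0,
                      lifetime_spend=0.0, bot_score=0.1)))
```

The suspicion check runs first on purpose: a scripted account that happens to look like a new or high-value player should still land in the low-ROI bucket.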

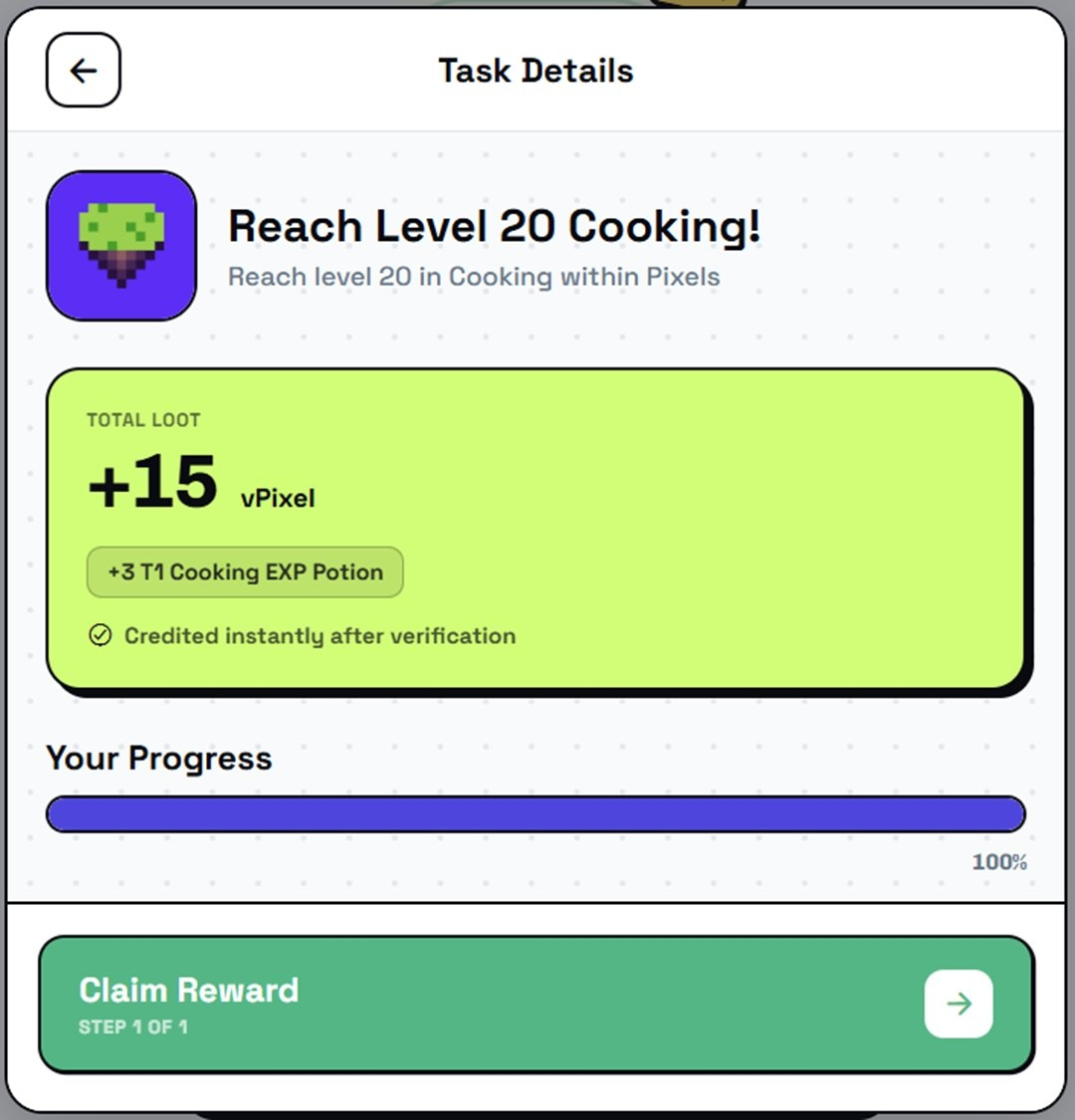

One point I care about is its repeated emphasis on being built in production and battle-tested: not just a white paper concept, but something that has run in real products, supporting titles like Pixels, Pixel Dungeons, and Chubkins, and handling operational complexity at the scale of 200M+ rewards and millions of players while anchoring results to commercial outcomes like 25M+ in revenue. I won’t dismiss this as boasting; I will treat it as a standard for judgment. If the system has truly operated at this scale, it must have gone through countless confrontations with bots, bouts of player fatigue, and repeated pressure to adjust rules because of economic imbalance. The larger the scale, the less you can 'get by with a single narrative'; you must solve problems with engineering details, or else the system itself will collapse.

Many people see 'AI game economist' and think it sounds too abstract; I prefer to break it down simply. It basically needs to do three things. First, identify problems: which stage has high churn, which task chain is broken, or which group’s LTV is declining. Next, propose hypotheses: perhaps the barriers are too high, the pace is too tight, or the reward structure is being exploited. Finally, design and execute experiments: give different incentives, tasks, and pacing to different groups, run them for a while to observe the differences, and then review. The real value of AI here isn't in appearing advanced, but in shifting operations from 'decisions based on experience' to 'decisions based on experiments,' shortening the link between insights and actions. In blockchain gaming, there’s too much noise and player motivations vary too widely; relying on subjective judgment leads to misjudgments. Treating rewards as a repeatable experimental process is a moat in itself.
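
One simple way to move from 'decisions based on experience' to 'decisions based on experiments' is an ordinary A/B comparison. The sketch below uses a standard two-proportion z-test on retained-player counts; it is a generic statistical technique chosen for illustration, not a description of how Stacked evaluates experiments, and the function name and sample numbers are made up.

```python
import math


def two_proportion_z(retained_a, n_a, retained_b, n_b):
    """Two-sided z-test for a difference in retention rates.

    Group A is the control, group B received the new reward rule.
    Returns (z, p_value).
    """
    p_a, p_b = retained_a / n_a, retained_b / n_b
    pooled = (retained_a + retained_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # everyone or no one retained: nothing to compare
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Hypothetical round: 300 of 1000 retained in control, 360 of 1000 in the variant.
z, p = two_proportion_z(300, 1000, 360, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value only says the gap is unlikely to be noise; whether the variant is worth keeping still depends on the reward cost and on whether the extra retained players are real players.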

I even think the real hard skill of LiveOps isn't in 'launching' but in 'stopping' and 'changing.' Many projects are afraid to stop rewards because once they do, the data drops; they also hesitate to change rules because one change could trigger a backlash. But in blockchain gaming, if you’re afraid to stop, you'll keep feeding the bots; if you're afraid to change, you'll always lag behind the scripts. A mature rewarded LiveOps engine should build 'stop' and 'change' into its system capabilities: fraud prevention, anti-bot measures, and behavioral data make the returns from suspicious traffic increasingly unstable, while smarter reward structures drive content consumption, social collaboration, and long-term goals rather than pure cash-outs. The bigger the rewards, the more dangerous they are, and the more the rules need to keep evolving to curb farming; that continuous evolution is what makes the system sustainable in the long run.

When it comes to PIXEL, I’ll make just one point that's closely tied to LiveOps: its 'role expansion' is about moving from a single-game token to a cross-ecosystem reward, a loyalty currency, and fuel for the reward layer. This isn't a promise or me shouting about the future; it's a deduction based on infrastructure logic. If Stacked is really building a rewarded LiveOps engine, then cross-game and cross-content reward scheduling will become increasingly important, and a unified, measurable reward medium will be more convenient. But whether it gets to that point ultimately depends on whether it can consistently demonstrate 'reward deployment leading to verifiable behavioral improvements' in real operations, rather than relying on narratives to keep people engaged.

I will score it on three very practical observation points. First, can the reward distribution keep distinguishing real players from bots over the long term? If farming resurfaces, no narrative can save it. Second, can the event pace sustain content consumption over the long term? The more rewards there are, the easier it is to burn through content; if the content can't keep up, players will tire sooner or later. Third, can the loop from insight to action get faster? That is, can the speed of discovering problems, adjusting rules, launching experiments, and iterating through reviews improve? Speed itself is a moat. If it can achieve these three points, I’d be more inclined to regard it as 'LiveOps infrastructure capability' rather than just 'a blockchain game that can dispense rewards.'

Writing this, I’ve actually become calmer. What's most worth discussing about PIXELS isn’t how big the rewards are, but whether it has engineered the rewards, transforming operations into a reusable system capability. The former can only make the data look good in the short term; the latter could allow the project to last longer. Blockchain games have been harshly criticized over the years for confusing 'short-term stimulation' with 'long-term operation.' If PIXELS can indeed run a rewarded LiveOps engine as a stable capability, it’s at least closer to an answer on 'how to sustain play' than many other projects.