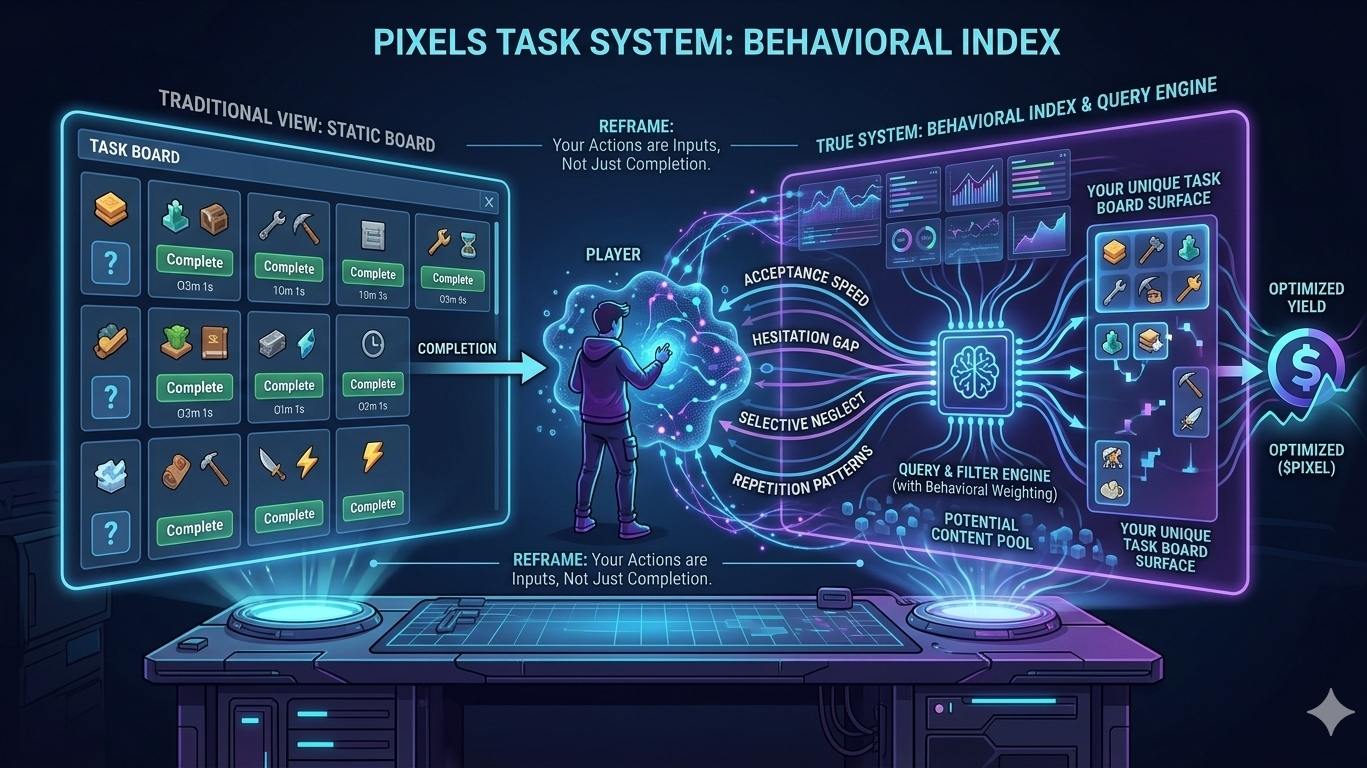

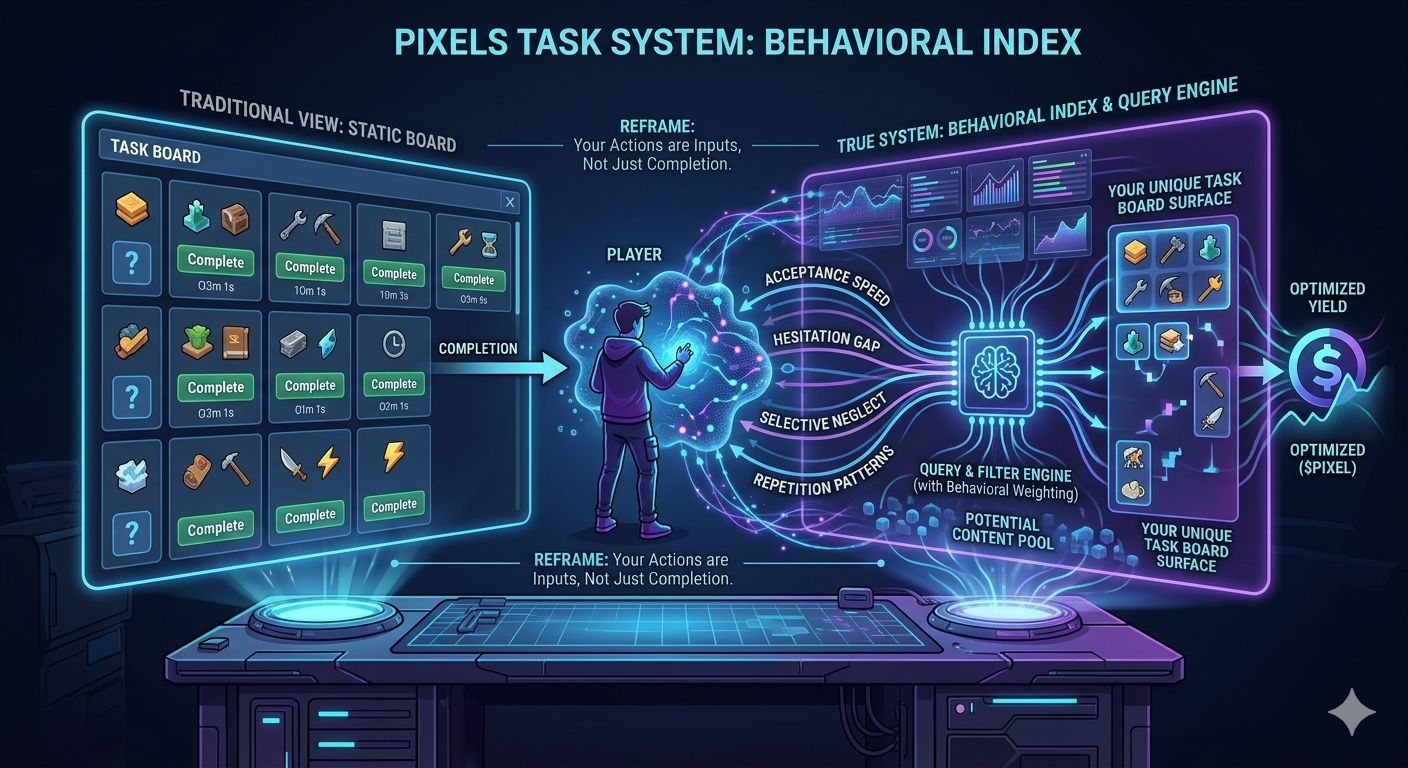

The $PIXEL task board looks like a familiar construct: a rotating set of objectives, refreshed on a timer, consumed for rewards. Most players approach it exactly that way—scan, select, complete, repeat. A static system with dynamic content.

That assumption doesn’t hold up under repetition.

The moment you stop treating the board as a list of tasks and start treating it as a system that *responds*, the framing breaks. The board isn’t just delivering content. It’s organizing it—based on you.

The shift becomes visible when you isolate interaction patterns and compare them side by side.

Run one session where you hesitate. Open the board, observe, close it. Delay commitment. Introduce gaps between interaction and execution. Then compare it to a session where you act instantly—accept everything, complete without pause, refresh aggressively.

Completion output stays roughly the same. But the *composition* of the board begins to diverge.

Delayed interaction tends to produce clustering. Tasks begin to share structural similarities—resource types, action loops, even implied pacing. It starts to feel curated, almost like the system is tightening its scope.

Immediate interaction does the opposite. The board becomes more fragmented, more heterogeneous. Task types scatter. The system appears less opinionated, less filtered.

Same account. Same progression. Different surface.
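One way to make "clustered" versus "fragmented" less of a feeling and more of a number is to log the category of each task on the board and compute the Shannon entropy of that mix. The sketch below is only an illustration: the category labels and the two snapshots are hypothetical, hand-recorded examples, not data from the game, and the game exposes no such function.

```python
import math
from collections import Counter


def composition_entropy(task_categories):
    """Shannon entropy (in bits) of the category mix in one board snapshot.

    Lower values mean the board is concentrated in few categories (clustered);
    higher values mean the categories are spread out (fragmented).
    """
    counts = Counter(task_categories)
    total = sum(counts.values())
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Hypothetical snapshots, written down by hand after each style of session.
hesitant_board = ["farming", "farming", "farming", "cooking", "farming", "cooking"]
instant_board = ["farming", "cooking", "mining", "woodwork", "fishing", "mining"]

print("hesitant:", round(composition_entropy(hesitant_board), 3))
print("instant: ", round(composition_entropy(instant_board), 3))
```

A single pair of snapshots proves nothing. The comparison only becomes meaningful if the gap between the two styles persists across many refresh cycles.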

That alone suggests the board is not a static distributor—it’s a responsive layer.

Push further.

Introduce selective neglect. Ignore a category, not once but consistently across multiple refresh cycles, while continuing to engage with everything else. Over time, the board doesn’t rebalance at random. It subtly deprioritizes what you’ve been ignoring and increases the presence of what you engage with.

Not dramatically. Not in a way that’s obvious in a single session. But enough to shift the texture of the board.
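To show how a small per-cycle nudge could add up to that kind of drift, here is a toy model. It is an assumption made for illustration, not the game's actual logic: the `update_weights` function, the `rate` parameter, and the category names are all hypothetical.

```python
def update_weights(weights, engaged, ignored, rate=0.1):
    """Nudge category weights up for engagement and down for neglect, then renormalize.

    `weights` maps category -> share of the board (sums to 1).
    `rate` is an assumed per-cycle adjustment, not a known game parameter.
    """
    updated = {}
    for category, weight in weights.items():
        if category in engaged:
            weight *= 1 + rate
        elif category in ignored:
            weight *= 1 - rate
        updated[category] = weight
    total = sum(updated.values())
    return {category: weight / total for category, weight in updated.items()}


# Start from an even board and ignore one category for ten refresh cycles.
weights = {"farming": 0.25, "cooking": 0.25, "mining": 0.25, "fishing": 0.25}
for _ in range(10):
    weights = update_weights(
        weights,
        engaged={"farming", "cooking", "fishing"},
        ignored={"mining"},
    )

for category, share in sorted(weights.items()):
    print(f"{category}: {share:.3f}")
```

Each individual step changes the mix only slightly, which matches the observation that nothing looks different within a single session. The shift shows up only once the cycles are compounded.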

That’s the tell.

This isn’t a quest system in the traditional sense. It behaves more like a *query engine with behavioral weighting*.

Your actions aren’t just completing tasks—they’re feeding signals into a filtering mechanism. Acceptance speed, hesitation, repetition, avoidance—these aren’t neutral behaviors. They function as implicit inputs.

The board you see is not *the* board. It’s *your version* of it.

This reframes a key question: where does the value in the system actually come from?

It’s not just in completing tasks. It’s in *surfacing the right ones*.

And that shifts the role of the player. You’re no longer just executing predefined objectives—you’re shaping the pool those objectives are drawn from. The interface stops being a passive display layer and becomes part of the logic itself.

Two players can play the same game, at the same progression level, and still describe the task system in completely different ways—not because one is mistaken, but because they are effectively querying different outputs from the same underlying structure.

From a systems perspective, this aligns less with static design and more with adaptive retrieval models. Content isn’t just generated—it’s ranked, filtered, and surfaced through interaction history.

Which brings us to the token layer.

$PIXEL, in this context, isn’t just a reward for completion. It’s downstream of a more important mechanism: *task exposure*. If your interaction patterns influence which tasks appear, and those tasks determine earning efficiency, then behavior indirectly shapes yield.
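The exposure argument can be written as a simple expected-value calculation. The sketch below assumes each category has a fixed average reward and that the board draws tasks according to exposure probabilities. Both are simplifications, and every number is hypothetical.

```python
def expected_yield(exposure, reward_per_task, tasks_per_cycle):
    """Expected reward per refresh cycle.

    `exposure` maps category -> probability that a surfaced task is of that category.
    `reward_per_task` maps category -> assumed average reward for one task.
    """
    mean_reward = sum(exposure[c] * reward_per_task[c] for c in exposure)
    return tasks_per_cycle * mean_reward


# Hypothetical average rewards per category (illustrative units).
rewards = {"farming": 2.0, "cooking": 5.0, "mining": 3.0}

# Same player, same number of completions, two different exposure profiles.
even_exposure = {"farming": 1 / 3, "cooking": 1 / 3, "mining": 1 / 3}
shaped_exposure = {"farming": 0.2, "cooking": 0.6, "mining": 0.2}

print("even:  ", round(expected_yield(even_exposure, rewards, tasks_per_cycle=6), 2))
print("shaped:", round(expected_yield(shaped_exposure, rewards, tasks_per_cycle=6), 2))
```

With completion count held constant, the only thing that differs between the two profiles is which tasks were surfaced, and under these assumptions that alone moves the total.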

You’re not just optimizing for completion speed.

You’re optimizing the system that decides what’s worth completing.

And that’s the real layer most players never touch.