Thinking out loud

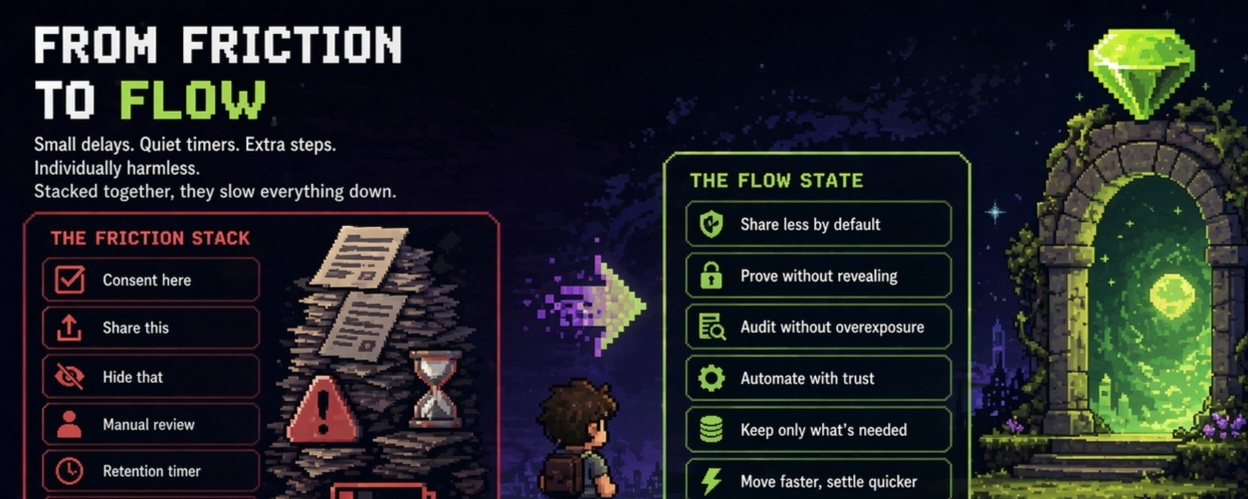

You sit there trying to onboard a new user to some financial service or submit documents for a regulated process, and suddenly there’s this checkbox dance: share this, hide that, consent here, revoke later. The friction isn’t one big wall; it’s a thousand small delays and workarounds. Regulated environments need privacy, but most solutions bolt it on as an exception: audit logs that still leak, selective disclosure that still requires trust in the middleman, or “zero-knowledge” proofs that feel like magic until compliance teams start asking for the underlying data anyway. The problem exists because human behavior and institutional inertia both reward visibility over discretion. Builders optimize for speed and settlement first, then retrofit privacy when regulators knock. Institutions fear fines more than they fear user friction, so they collect everything “just in case.” Users learn to accept leaky systems because the alternative is slower or more expensive. It all stacks up: the timers on data retention, the limits on what you’re allowed to keep private, the small pauses while something gets manually reviewed. Individually harmless. Together they create more drag than anyone admits.

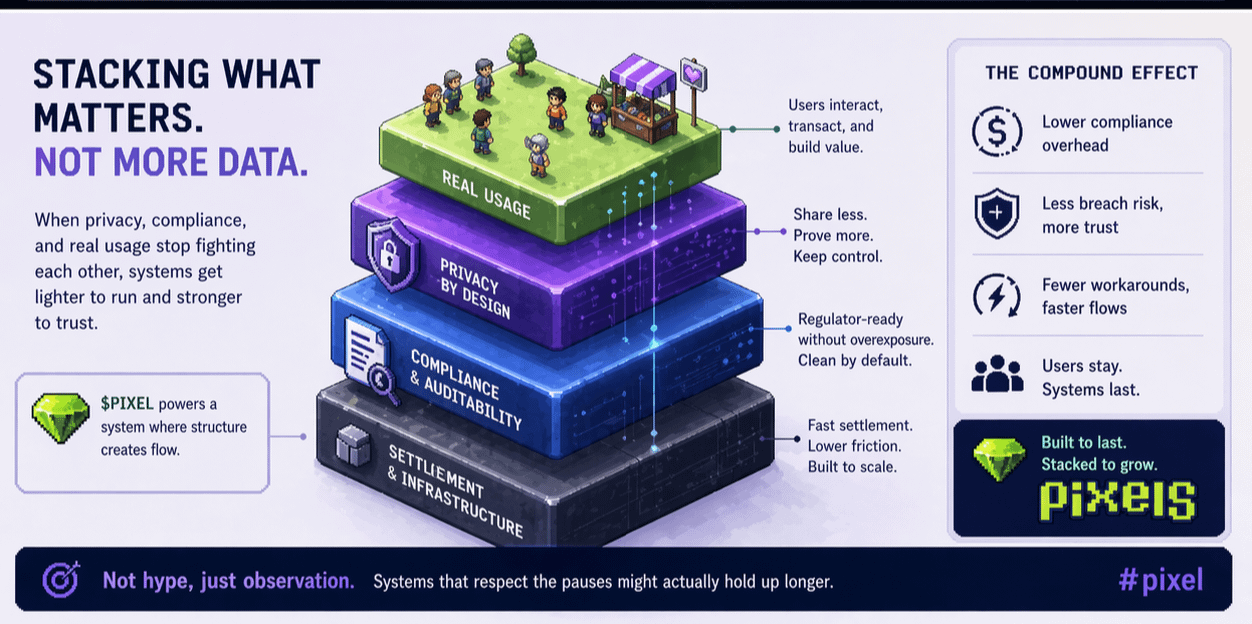

Treating something as infrastructure means asking how it actually sits inside real usage, law, settlement, and costs. Privacy by design, not by exception, would mean the default flow already minimizes what’s shared without breaking compliance or slowing legitimate verification. I’ve seen systems fail when privacy was an add-on: a breach happens, trust evaporates, costs spike. The skeptical part of me wonders whether it can truly scale without someone still holding a master key somewhere. But if it reduces the constant negotiation between visibility and protection, the payoff could be fewer workarounds, lower long-term compliance overhead, and less human exhaustion from managing consents.

I have been mulling this over for a couple of evenings now, coffee going cold while I scroll through the usual mix of regulatory updates and on-chain activity logs. Not in some grand theoretical way, but from the kind of everyday friction that actually slows things down in practice. Picture this: you're a player who's been grinding in a Web3 game for weeks. You hit your streak, complete the missions, and the reward hits your wallet. Simple enough. But behind the scenes, to make that reward feel fair and sustainable, the system had to track your play patterns, your drop-off points, and what kept you coming back. Now layer on regulation, whether GDPR in Europe, state-level consumer data rules in the US, or the creeping AML expectations around tokenized rewards, and suddenly that tracking isn't just operational. It's a liability. One misplaced log, one unintended share, and you're facing audits, fines, or a user exodus. The question isn't abstract: how do you run an ecosystem where rewards actually work without treating every bit of user behavior as data to be collected, packaged, and potentially exposed?

That's the rub I've watched play out too many times. Most projects start with the fun stuff (the gameplay loop, the token incentives), and privacy becomes the awkward afterthought. You bolt on a consent form here, a "we don't sell your data" clause there, but in reality the architecture was never built for it. Data ends up centralized anyway because the reward engine needs it to function. Builders end up paying lawyers to review every export, or they limit features to avoid compliance headaches, which kills engagement. Institutions on the regulatory side aren't villains in this; they're trying to protect users from the last wave of rug pulls and data leaks, but the tools they have force exceptions rather than systemic change. And users? We vote with our feet. I've seen it in earlier GameFi cycles: promises of privacy, followed by a breach or a quiet policy shift, and retention craters. The whole thing feels incomplete because it's reactive. Privacy by exception means you're always one regulator letter away from rewriting half your backend.

What keeps nagging at me is how rarely the infrastructure itself is designed to make privacy the default path rather than the expensive detour. Take something like the Stacked layer in the Pixels ecosystem. I'm not here to pitch it as magic; I've been around long enough to know better than to trust any single rollout. But the way it's been positioned, as shared rewards infrastructure rather than a flashy add-on, makes me pause. Stacked isn't about broadcasting user data outward. From what I've pieced together, gameplay signals stay internal to the system.

The AI-driven targeting for offers, the behavior matching that decides who gets what incentive and when, operates without the usual third-party data sales or external feeds. It's the kind of setup where the data serves the settlement of rewards, figuring out sustainable distribution across games, without turning into a commodity. In a regulated environment, that matters. Compliance isn't just about ticking boxes on a privacy policy; it's about minimizing what leaves the system in the first place. Data minimization isn't a buzzword here; it's baked into how rewards get calculated and delivered.

Think about the real-world costs this avoids. I've seen projects burn through budgets on external analytics vendors or compliance consultants because their core engine was leaky by design. Every time you export signals for "better personalization," you're inviting scrutiny: does this count as processing personal data under local law? Does the token reward now trigger additional financial reporting?

Human behavior complicates it further: players aren't idiots. They sense when their every click is being funneled somewhere external, and they disengage, or worse, they farm aggressively knowing the system is fragile. Stacked seems to approach this differently, from the inside out. Rewards flow based on internal models refined from actual play in the Pixels games: retention curves, cohort data, and what turns a casual user into someone who sticks around. There's no need to sell the dataset to advertisers or partners to make the economics work. The $PIXEL token sits at the center as the coordination asset for staking and pool allocation, but the privacy layer means the human element, actual play, doesn't get commoditized as an afterthought.

I'm skeptical, of course. I've watched too many "decentralized" systems quietly centralize data when scaling pressure hits. Regulators could still demand more transparency on how those internal AI decisions are made; opacity might breed its own distrust. And settlement in a regulated sense (the actual payout of rewards, whether in PIXEL or stablecoin equivalents) still has to reconcile with tax reporting and anti-fraud rules. If the infrastructure can't produce auditable trails without exposing individual behaviors, it won't hold up. Costs could still creep in if integrating new games requires heavy custom work to keep signals walled off. Human behavior is the wildcard too: some users want full visibility into how rewards are decided, especially if they feel the system is favoring whales. Privacy by design only works if it doesn't feel like a black box that hides unfairness.

Still, there's something grounded here that feels less fragile than the usual patchwork. The Stacked ecosystem didn't emerge from a whiteboard; it grew out of running the original Pixels game at scale, where they had to make rewards sustainable or watch the economy collapse. That internal testing, hundreds of experiments on what actually drives retention without over-emission, gives it a realism most privacy add-ons lack. In practice, for builders operating across jurisdictions, this could mean lower legal overhead: design the reward engine to keep data internal, and a chunk of compliance risk evaporates. For players, it means engaging without the constant low-level paranoia that your session data is tomorrow's data-broker asset. Institutions and regulators might even see it as a model: proof that you can have verifiable incentives and consumer protection without forcing everything onto public ledgers or into third-party silos.

I'm not certain it'll scale cleanly. Crypto has a habit of promising infrastructure and delivering hype until the first real stress test. But if it holds, the people who'd actually lean into this are the studios and developers trying to build games that can operate in regulated markets without choosing between growth and user trust. Not the pure speculators chasing the next token pump, but the ones who want playable economies that survive beyond one bull cycle. It might work precisely because it's treated as plumbing: quiet, functional, and focused on how rewards settle and how behavior compounds over time rather than on the front-page narrative. What would make it fail? If the internal models ever start leaking signals under competitive pressure, or if regulators shift to demanding explicit user-level audit rights that the design can't accommodate without breaking the privacy default. Or, more simply, if human nature wins out and players demand more transparency than the system is built to give, forcing those awkward exceptions back in.

Either way, it's the kind of quiet evolution worth watching, not because it's revolutionary, but because it tries to solve the friction at the root instead of papering over it. @Pixels

Pixels has been iterating on this with PIXEL as the anchor, and the Stacked approach feels like one of the less delusional attempts I've seen at making regulated reality and user-level privacy coexist without constant compromise. #pixel