I remember logging off one night thinking I had done everything right, and still feeling like something didn’t add up. Not in a dramatic way, just a quiet mismatch that stayed with me. I had followed the loop, stayed consistent, avoided obvious mistakes. And yet the outcome felt slightly disconnected from the effort. Not wrong, just interpreted differently than I expected. That’s what made it uncomfortable. It didn’t feel like failure, it felt like misalignment.

At first, I went where everyone goes. Maybe I wasn’t efficient enough. That’s almost the default belief in Web3 games. If rewards don’t match effort, then you assume someone else has optimized better. So I tightened everything. Shorter loops, cleaner routes, less wasted time. It slowly stopped feeling like a game and started feeling like maintaining a system. You repeat until it becomes predictable. And for a while, that explanation felt sufficient.

But then I started noticing players who didn’t fully fit that pattern. They weren’t grinding more, and they weren’t obviously more optimized. If anything, they looked less structured. Yet their progression felt smoother, as if they weren’t hitting the same invisible resistance. That’s when it stopped being about efficiency. If efficiency were the only variable, outcomes wouldn’t drift like that.

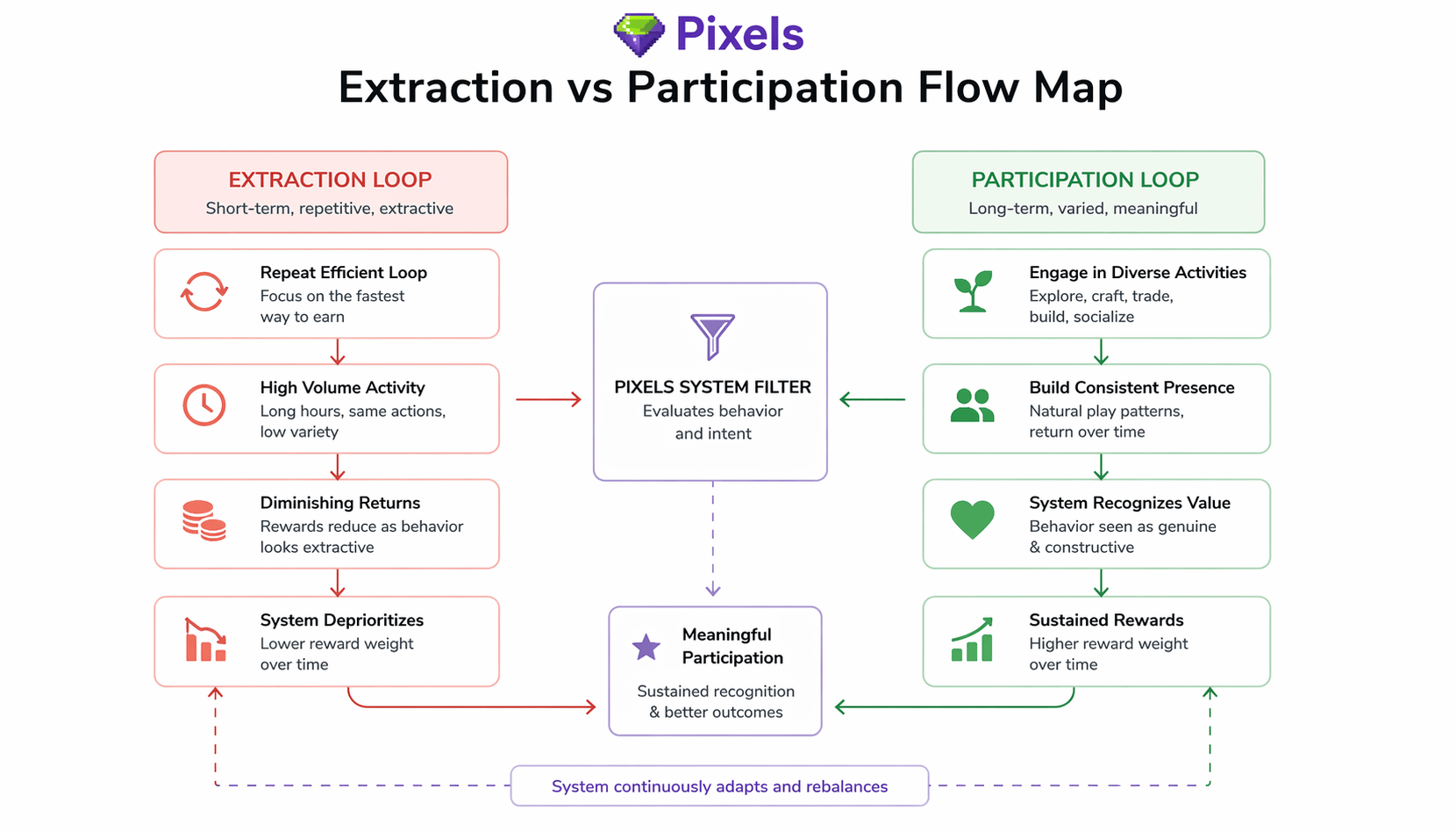

That shift made me look at these systems differently. Most GameFi environments aren’t really games; they’re economic machines. They reward throughput: the more cycles you complete, the more value you extract. Over time, players adapt to that and stop engaging with the game itself. They start operating it. The system doesn’t ask who you are as a player; it only measures how much you produce.

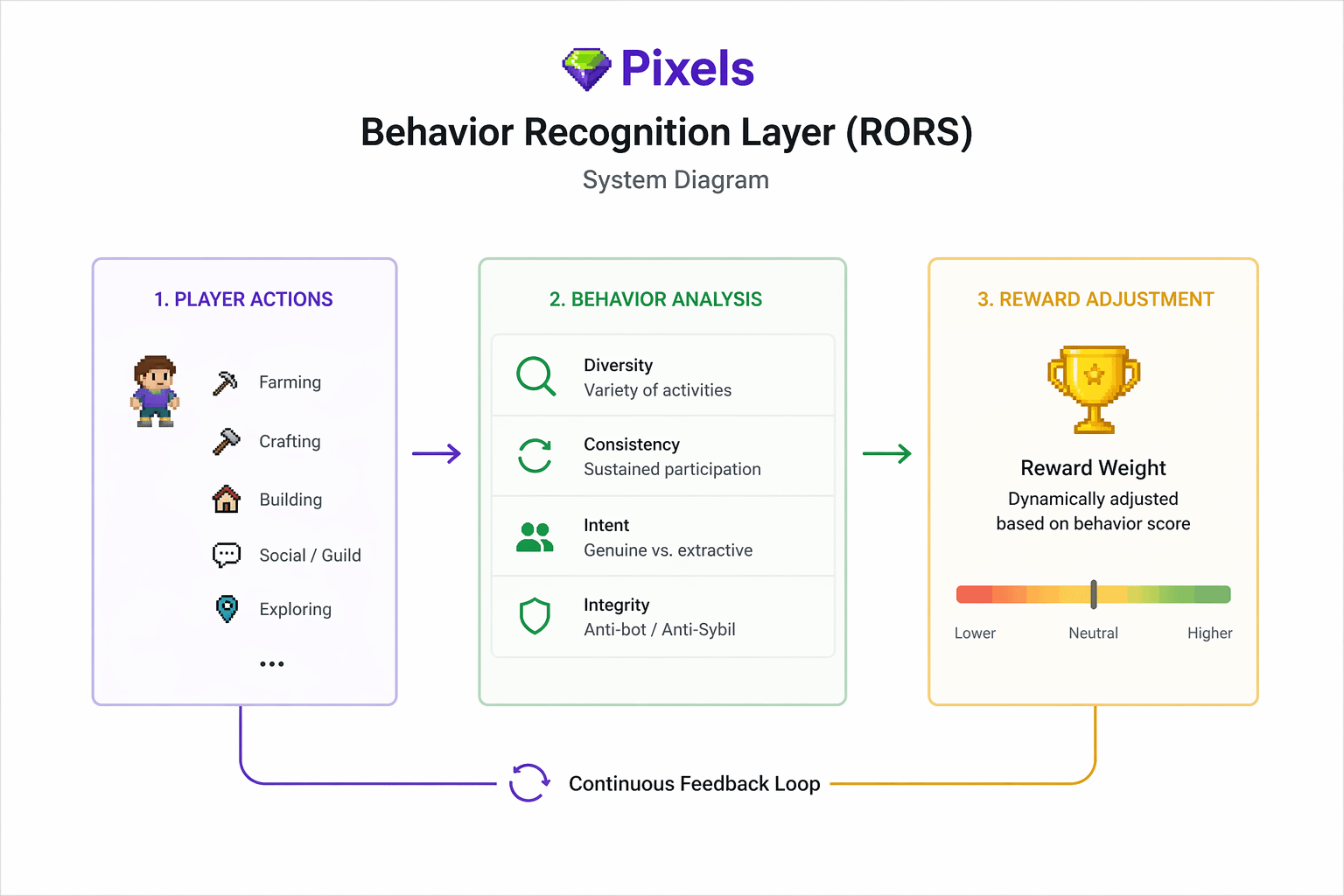

Pixels feels like it’s pushing against that, even if it doesn’t say it directly. The longer I spent in it, the more it felt like the system wasn’t neutral. Outcomes didn’t scale cleanly with effort. Sometimes rewards compressed, sometimes they held, sometimes they surprised you. It didn’t feel random. It felt like the system was forming an opinion about behavior. Not just what you did, but how you did it, and how consistently that pattern held over time.

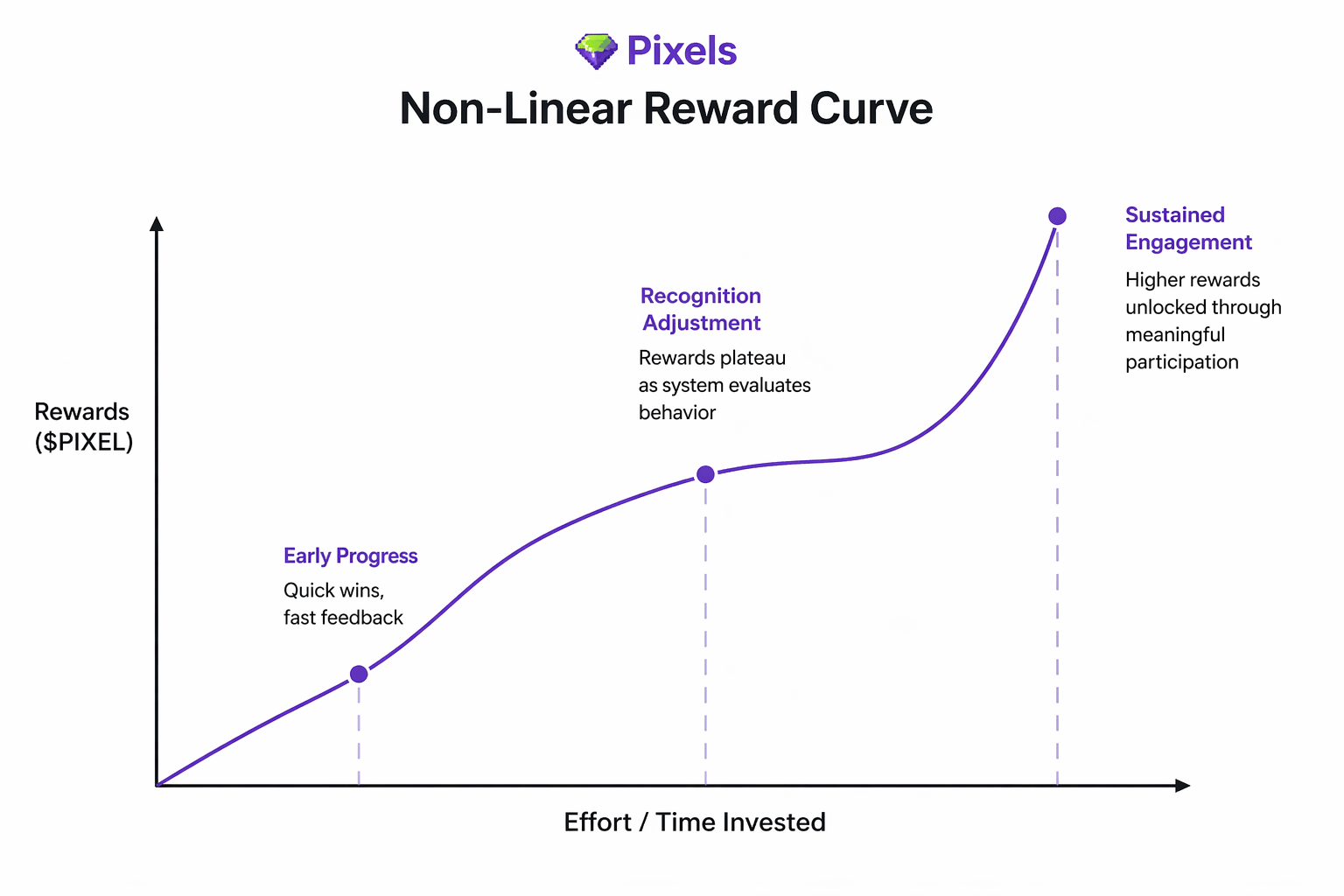

And that’s where the structure underneath starts to reveal itself. Rewards aren’t just distributed; they’re adjusted. If behavior starts to resemble extraction loops, returns begin to flatten. If it looks harder to replicate at scale and more embedded in the actual flow of the game, returns seem to hold up better. At the same time, progression isn’t free. Crafting, upgrading, maintaining land, and even participating in certain loops slowly pull value out of circulation. You feel it in small frictions, in costs that don’t always pay back immediately. It changes how you move. The system isn’t just giving; it’s also quietly taking, trying to keep the balance from breaking.
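To make that intuition concrete, here is a toy model in Python. It is not Pixels’ actual logic, which isn’t public; the repetitiveness score, the 50% damping cap, the flat per-session sink cost, and every name below are hypothetical assumptions for illustration. It contrasts a throughput-only payout with one that dampens rewards for repetitive, extraction-like patterns and then subtracts a sink each session.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Toy model only: Pixels does not publish its reward logic. Every name and
# constant here is hypothetical and chosen for illustration.
BASE_RATE = 10.0   # hypothetical reward per action under a linear payout
MAX_DAMPING = 0.5  # hypothetical: the most repetitive play loses up to 50%


@dataclass
class Player:
    name: str
    balance: float = 0.0
    history: list[str] = field(default_factory=list)


def repetitiveness(history: list[str]) -> float:
    """Share of all recorded actions taken by the single most common one (0..1)."""
    if not history:
        return 0.0
    return max(history.count(a) for a in set(history)) / len(history)


def linear_reward(actions: list[str]) -> float:
    """Throughput-only payout: every action pays the same, whoever does it."""
    return BASE_RATE * len(actions)


def adjusted_reward(player: Player, actions: list[str], sink_cost: float) -> float:
    """Behavior-weighted payout minus a flat sink (crafting, upgrades, land upkeep)."""
    player.history.extend(actions)
    # Damping is judged on the player's whole history, not just this session.
    damping = 1.0 - MAX_DAMPING * repetitiveness(player.history)
    net = linear_reward(actions) * damping - sink_cost
    player.balance += net
    return net


if __name__ == "__main__":
    grinder = Player("grinder")
    mixed = Player("mixed")
    for _ in range(5):
        adjusted_reward(grinder, ["harvest"] * 8, sink_cost=5.0)
        adjusted_reward(mixed, ["harvest", "craft", "quest", "trade"] * 2, sink_cost=5.0)
    print(grinder.balance, mixed.balance)  # 175.0 325.0
    print(linear_reward(["harvest"] * 8) * 5)  # 400.0
```

Over five sessions of the same length, the single-action grinder nets 175 and the mixed player 325, while a purely linear payout would have given both 400. The same toy also exposes the risk of misreading genuine players: someone who simply enjoys one activity every day gets scored exactly like the grinder.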

That balance matters more when you look at the token itself. With $PIXEL still moving through a post-launch phase, supply unlocking gradually and sentiment shifting with player activity, the economy feels sensitive. Not fragile, but reactive. If rewards were purely linear, it would be easy to overwhelm the system. So instead, behavior becomes the control layer. Not just how much activity exists, but what kind of activity the system decides to sustain.

What stands out most is how invisible that sorting process feels. There’s no clear signal telling you you’ve crossed a threshold. But over time, small differences compound. Two players can spend similar hours and still drift apart in outcomes. Not because one paid more, but because the system seems to categorize them differently. It reminds me of how recommendation systems work elsewhere. You’re not told what you did right or wrong, but your experience slowly changes based on patterns you barely notice.

Still, I’m not fully convinced it holds under pressure. Once a system starts recognizing behavior, it also becomes something that can be studied. And once it’s studied, it can be imitated. That’s where it gets tricky. What happens when extractors learn to behave like participants? Or worse, what if the system starts rewarding the appearance of good behavior more than the real thing? There’s also the risk that genuine players get misread, that steady, consistent play gets its rewards flattened because it looks too repetitive from the outside. The more adaptive the system becomes, the more fragile its judgment layer might be.

At some point, this stops being about rewards entirely. It becomes about whether players choose to stay, because even the most intelligent system doesn’t matter if people don’t come back. You can feel that tension underneath everything. Progression has cost, rewards have variance, and outcomes aren’t always predictable. So the real question is whether that experience creates enough meaning for someone to return the next day. Utility only works if they do. Otherwise, the system is just delaying extraction, not replacing it.

So the loop starts to feel different, even if it looks similar on the surface. You show up, you engage, the system responds, and over time it adjusts how it treats you. Not in a fixed way, but in a shifting one. It’s less about maximizing a single session and more about how your behavior accumulates. The outcome isn’t immediate, but it isn’t random either. It sits somewhere in between, shaped over time.

I don’t really see @Pixels as just a game, or even just a token economy. It feels more like an attempt to build a system that decides what kind of behavior it wants to keep, and then slowly reinforces it through outcomes rather than rules. Not perfectly, and not without risk, but deliberately.

Whether that actually works at scale is still unclear. Early players shape systems as much as systems shape players, and not everyone shows up with the same intention. Distribution, timing, and behavior all collide in ways design alone can’t control.

For now, it feels like the idea is ahead of certainty. And maybe that’s the point.

You don’t optimize for rewards here. You try to understand what the system is willing to keep.