I almost missed what was actually interesting about how Pixels handles fraud.

Most Web3 game coverage treats anti-bot systems as a technical footnote. Something the team mentions briefly to signal they thought about it. A checkbox. You build the game, you add fraud prevention, you move on. Nobody writes seriously about fraud prevention because it doesn't fit neatly into any compelling narrative. It's not exciting. It doesn't make good headlines.

But I kept coming back to it.

Because the more time I spent trying to understand why Pixels is still functioning when most comparable projects aren't, the more the fraud prevention piece kept appearing at the center of the answer rather than the edges of it.

Here's what I mean.

Every Web3 game economy has a fundamental vulnerability that traditional games don't have. In a traditional game, cheating affects your experience. Maybe you get banned. Maybe you ruin someone else's match. The consequences are contained. The economy of the game — if it has one — doesn't get structurally damaged by individual bad actors.

In a Web3 game, cheating is an economic attack.

A bot that farms rewards inside Pixels is not just breaking the rules. It's extracting real value from the ecosystem. Every token a bot pulls out is a token that didn't go to a genuine player. Every reward cycle a bot captures is a cycle that didn't strengthen genuine engagement. The damage isn't contained. It distributes across the entire economy, invisibly, in small increments, until the ratio shifts enough that genuine players start feeling it without being able to explain why.
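To make that dilution concrete for myself, I wrote a toy calculation. It assumes a fixed reward pool split evenly across every active account, which is my simplification and not a description of how Pixels actually distributes rewards. The function name and the numbers are hypothetical.

```python
def reward_per_genuine_player(pool, genuine_players, bots):
    """Share each genuine player gets when a fixed pool is split evenly
    across all active accounts (assumed model, not Pixels' real one)."""
    return pool / (genuine_players + bots)


# Hypothetical economy: 10,000 tokens per cycle, 1,000 genuine players.
for bots in (0, 100, 500, 1000):
    share = reward_per_genuine_player(10_000, 1_000, bots)
    print(f"{bots:>5} bots: {share:.2f} tokens per genuine player")
```

No single step in that loop looks like an exploit. The per-player number just keeps shrinking as the bot count grows.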

That's how most play-to-earn economies got hollowed out. Not by a single dramatic exploit. By thousands of small extractions running simultaneously that the system couldn't distinguish from legitimate activity.

Pixels lived through that with $BERRY. Watched it happen in real time. Watched the economy keep looking healthy on dashboards while something underneath was already deteriorating.

What came out of that experience wasn't a better bot detection algorithm. It was a different philosophy about what fraud prevention actually needs to be inside a live game economy.

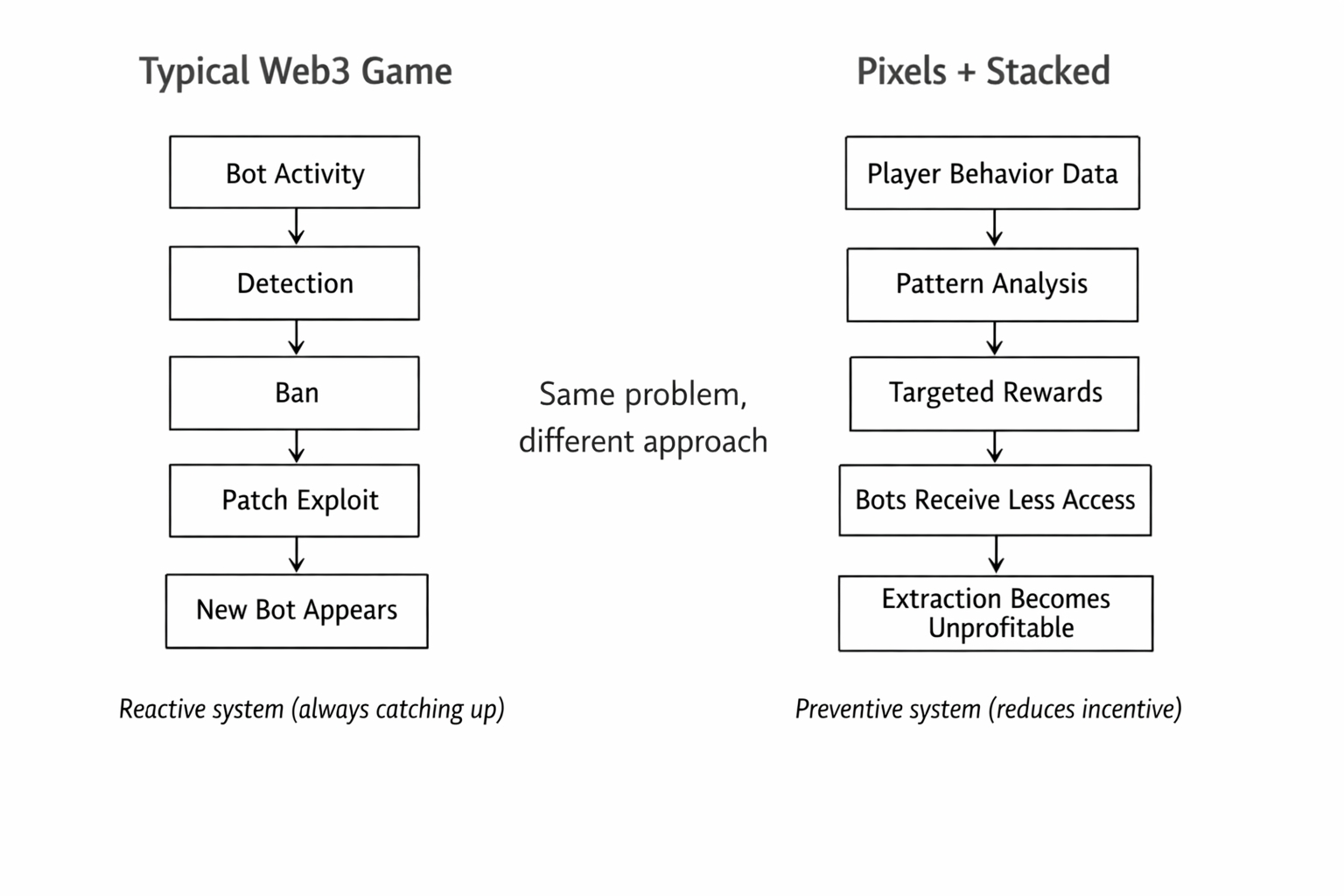

Most fraud prevention systems are reactive. Something looks wrong. You investigate. You ban the accounts. You patch the exploit. You move on until the next one. The system is always catching up to behavior that already happened.

What Pixels built inside Stacked operates differently. The behavioral data layer isn't just tracking what players do. It's building a picture of what genuine engagement looks like at the pattern level — across sessions, across time, across different types of activity. And it's constantly comparing new behavior against that picture.

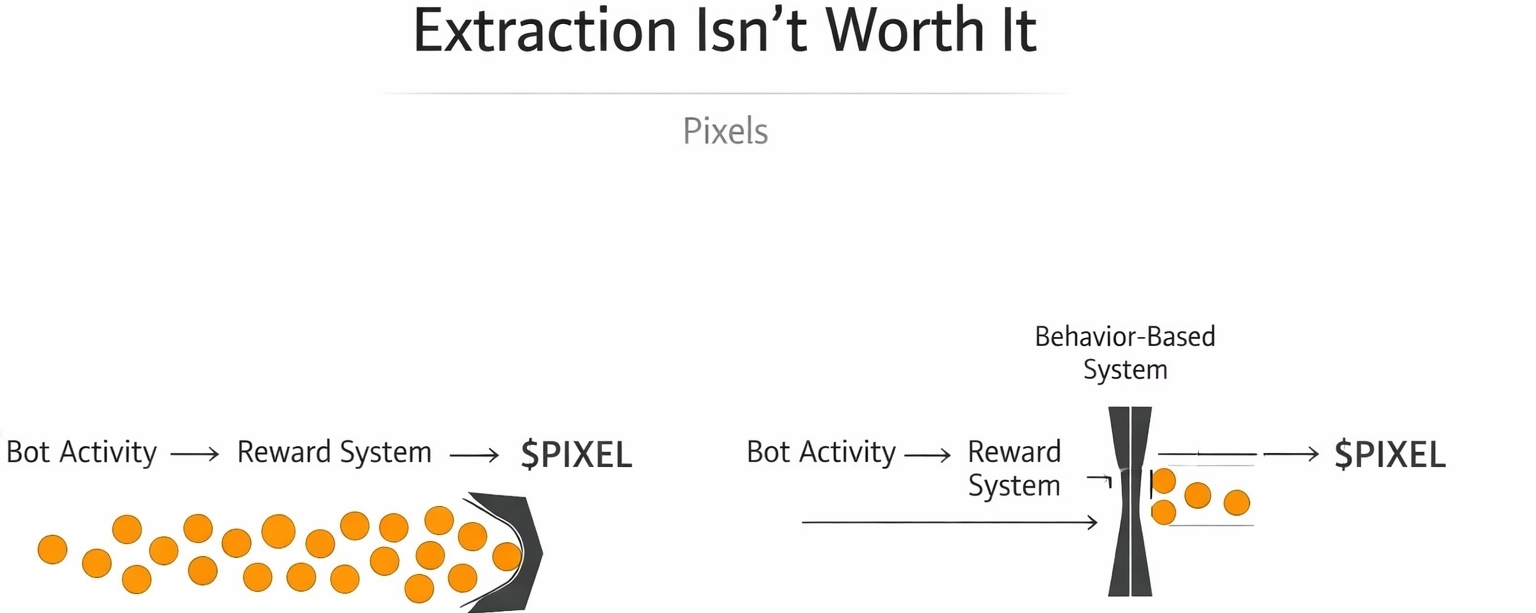

Not to catch bots after the fact. To make the reward surface less profitable for bots in the first place.

That distinction sounds subtle. It has enormous practical consequences.

When the reward system can target specific players at specific moments based on behavioral patterns that are expensive to fake, the economics of bot farming change. A simple script that repeats the same actions in the same sequence can no longer reliably access the same rewards as a genuine player whose behavior shows the kind of organic variation that comes from actually caring about the game. The bot still runs. But its yield compresses. The extraction becomes less worth the effort.
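Here is a minimal sketch of what "yield compresses" could look like in practice. It is not Stacked's actual logic, which I haven't seen. I'm assuming a single signal (how irregular the gaps between a player's actions are) and a hypothetical `full_at` threshold. A real system would presumably combine many signals, since one is easy to fake with random jitter.

```python
from statistics import mean, pstdev


def variation_score(intervals):
    """Coefficient of variation of the gaps between actions.
    0.0 means perfectly regular timing."""
    avg = mean(intervals)
    return pstdev(intervals) / avg if avg > 0 else 0.0


def reward_multiplier(intervals, full_at=0.5):
    """Scale rewards from 0 to 1 as timing variation approaches
    `full_at` (a hypothetical threshold chosen for illustration)."""
    return min(1.0, variation_score(intervals) / full_at)


# Seconds between consecutive actions (made-up sample data).
script_gaps = [2.0, 2.0, 2.0, 2.0, 2.0]
player_gaps = [1.2, 4.5, 0.8, 9.0, 2.3]

print(reward_multiplier(script_gaps))
print(reward_multiplier(player_gaps))
```

The script is never banned in this sketch. It simply earns less, and every extra signal it has to imitate adds to what it costs to run.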

I kept thinking about something outside gaming while sitting with this. Security systems that work well don't usually announce what they're looking for. The moment you publish your detection criteria, you've handed bad actors a blueprint for evasion. The most effective systems create uncertainty about exactly where the line is — not to be arbitrary, but because uncertainty itself raises the cost of exploitation.

Pixels' behavioral targeting creates that uncertainty naturally.

Because it's not looking for specific bot behaviors. It's looking for the absence of genuine player behaviors. And genuine player behaviors are hard to enumerate because they emerge from actually caring about something rather than optimizing against a known set of rules.
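A rough way to picture the difference: instead of a blocklist of known bot behaviors, the check counts how many signs of genuine play are present. The signals, field names, and thresholds below are all invented for illustration. The real criteria aren't public, which is rather the point.

```python
from dataclasses import dataclass


@dataclass
class PlayerHistory:
    days_active: int
    distinct_activities: int
    trades_with_others: int
    idle_sessions: int  # sessions spent wandering without earning anything


# Hypothetical positive signals. Note there is no "looks like a bot" rule here.
GENUINE_SIGNALS = {
    "keeps_coming_back": lambda h: h.days_active >= 7,
    "tries_different_things": lambda h: h.distinct_activities >= 3,
    "interacts_with_players": lambda h: h.trades_with_others >= 1,
    "does_unprofitable_things": lambda h: h.idle_sessions >= 1,
}


def genuine_signal_ratio(history):
    """Fraction of the assumed genuine-play signals present in a history."""
    present = sum(1 for check in GENUINE_SIGNALS.values() if check(history))
    return present / len(GENUINE_SIGNALS)


farmer = PlayerHistory(days_active=30, distinct_activities=1,
                       trades_with_others=0, idle_sessions=0)
regular = PlayerHistory(days_active=30, distinct_activities=4,
                        trades_with_others=6, idle_sessions=3)

print(genuine_signal_ratio(farmer), genuine_signal_ratio(regular))
```

A farming script can run every day for a month and still score low, because nothing in the check depends on spotting what the script does. It depends on what the script never bothers to do.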

That's a fundamentally different security posture from what most Web3 games have ever attempted.

And $PIXEL sits at the center of it in a way that most holders haven't fully thought through.

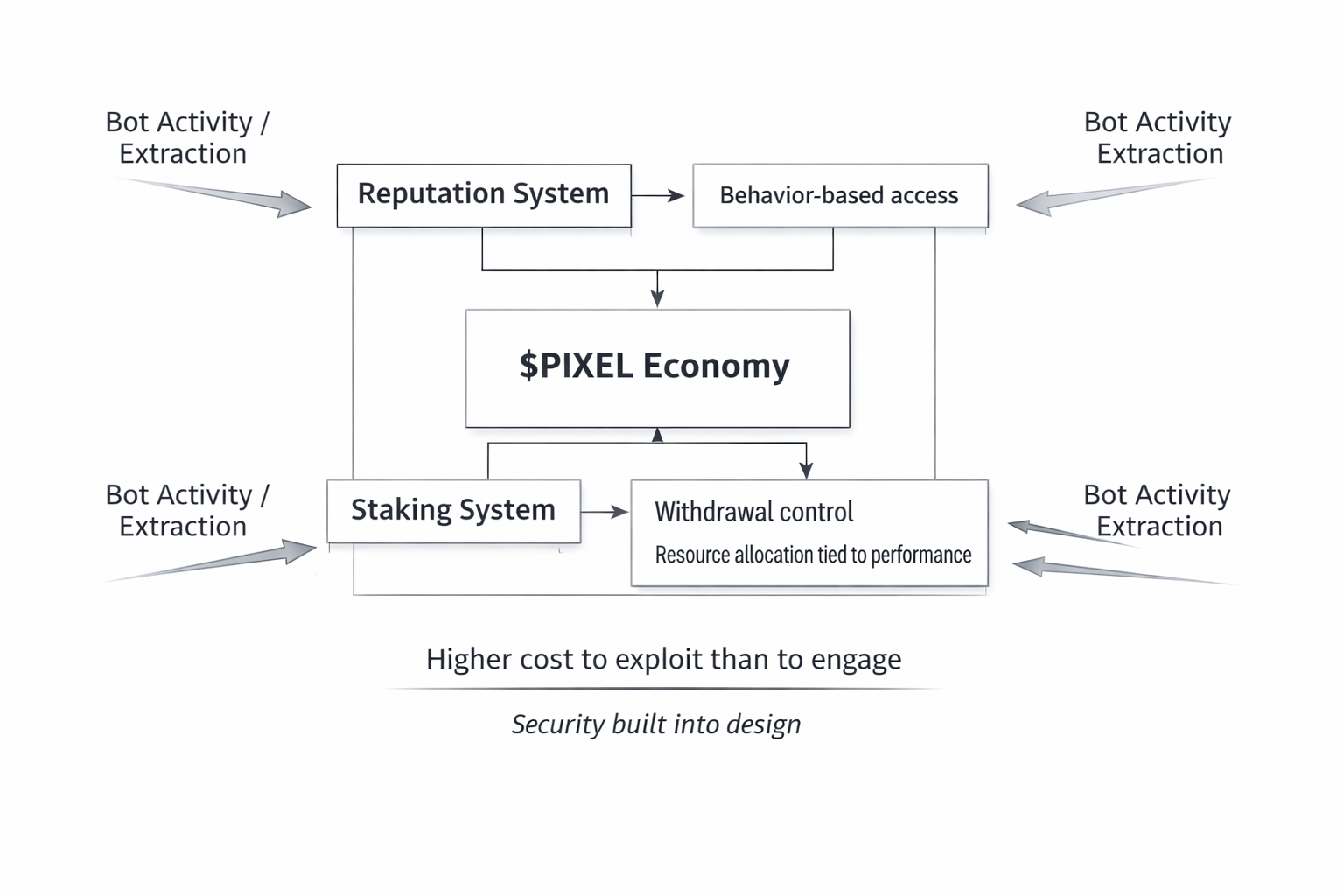

The reputation system gates $PIXEL task access behind behavioral signals that accumulate over time. The VIP system gates $PIXEL withdrawal behind an investment that raises the cost of pure extraction. The staking system ties $PIXEL allocation to game performance metrics that bot activity actively damages. Every layer of the $PIXEL economy was designed with the assumption that bad actors would try to exploit it.
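Stacking those three layers is easier to see in code than in prose. This is a sketch under my own assumptions: the reputation threshold, the boolean VIP flag, and the proportional staking split are stand-ins I made up, not the actual rules.

```python
from dataclasses import dataclass


@dataclass
class Account:
    reputation: int
    is_vip: bool


MIN_TASK_REPUTATION = 100  # hypothetical threshold


def can_access_tasks(account):
    """Layer 1: task rewards require accumulated reputation."""
    return account.reputation >= MIN_TASK_REPUTATION


def can_withdraw(account):
    """Layer 2: withdrawal requires the paid VIP status."""
    return account.is_vip


def can_extract(account):
    """Pulling value out means clearing both gates, not just one."""
    return can_access_tasks(account) and can_withdraw(account)


def staking_allocation(pool, performance):
    """Layer 3: split a pool across games in proportion to an assumed
    performance score, so degraded metrics mean a smaller allocation."""
    total = sum(performance.values())
    if total == 0:
        return {game: 0.0 for game in performance}
    return {game: pool * score / total for game, score in performance.items()}


fresh_bot = Account(reputation=0, is_vip=False)
veteran = Account(reputation=250, is_vip=True)
print(can_extract(fresh_bot), can_extract(veteran))
print(staking_allocation(1000, {"game_a": 3, "game_b": 1}))
```

Each gate alone is beatable. The cost comes from having to clear all of them on every account a farmer wants to run.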

Not as an afterthought. As a founding design constraint.

That changes what holding $PIXEL actually means underneath the surface.

You're not just holding a game token. You're holding a position inside an economy that was specifically engineered to be more expensive to exploit than to engage with genuinely. The fraud resistance isn't a feature sitting on top of the token. It's baked into the architecture the token operates inside.

I'm not sure most people think about token value in those terms. The usual frameworks are simpler. More players means more demand. More utility means more demand. Staking reduces supply. Burns reduce supply. The variables are familiar.

But there's a less discussed variable that might matter more than any of them in the long run.

Whether the economy the token lives inside is structurally resistant to the kind of extraction that destroyed most comparable projects.

Pixels built that resistance the hard way. By living through the failure first.

$PIXEL is the token sitting inside what that experience produced.